DAVE: Distribution-aware Attribution via ViT Gradient Decomposition

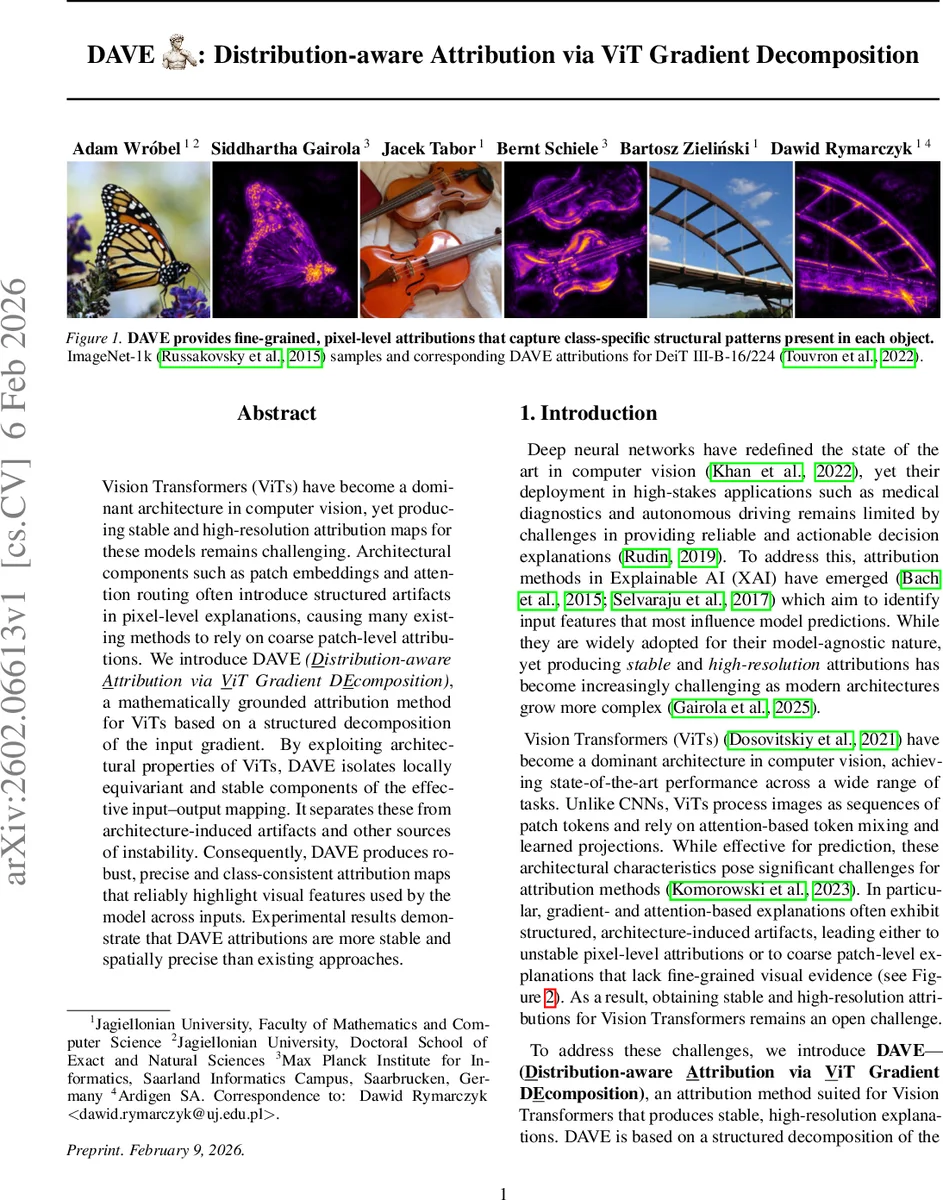

Vision Transformers (ViTs) have become a dominant architecture in computer vision, yet producing stable and high-resolution attribution maps for these models remains challenging. Architectural components such as patch embeddings and attention routing often introduce structured artifacts in pixel-level explanations, causing many existing methods to rely on coarse patch-level attributions. We introduce DAVE \textit{(\underline{D}istribution-aware \underline{A}ttribution via \underline{V}iT Gradient D\underline{E}composition)}, a mathematically grounded attribution method for ViTs based on a structured decomposition of the input gradient. By exploiting architectural properties of ViTs, DAVE isolates locally equivariant and stable components of the effective input–output mapping. It separates these from architecture-induced artifacts and other sources of instability.

💡 Research Summary

The paper tackles the long‑standing problem of generating stable, high‑resolution attribution maps for Vision Transformers (ViTs). While ViTs have become the dominant architecture in computer vision, their unique components—patch embeddings, token‑mixing attention, and layer‑wise normalizations—introduce structured artifacts that severely degrade gradient‑based explanations. Existing methods either produce coarse, patch‑level maps or suffer from grid‑like noise that varies dramatically under small input perturbations.

Core Idea

The authors propose DAVE (Distribution‑aware Attribution via ViT Gradient Decomposition), a mathematically grounded framework that decomposes the input‑gradient of a ViT into two distinct parts: (1) an effective transformation (L(X)) that represents the direct, input‑conditioned linear operation applied by each layer, and (2) an operator‑variation term ((\partial L/\partial X)·X) that captures how this linear operator itself changes with the input. The operator‑variation term is shown to amplify high‑frequency, unstable components, leading to noisy attributions. DAVE discards this term entirely, retaining only the effective transformation as the baseline attribution signal.

Equivariance Filtering

Even the effective transformation contains architecture‑induced patterns (e.g., patch‑grid artifacts) that are not informative for a specific prediction. To suppress these, DAVE introduces a Reynolds‑inspired filtering operator. By sampling a compact group of small spatial transformations (rotations, translations) around the identity and averaging the transformed effective transformations, the method extracts a locally equivariant component that remains consistent under such perturbations. Formally:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment