Think Proprioceptively: Embodied Visual Reasoning for VLA Manipulation

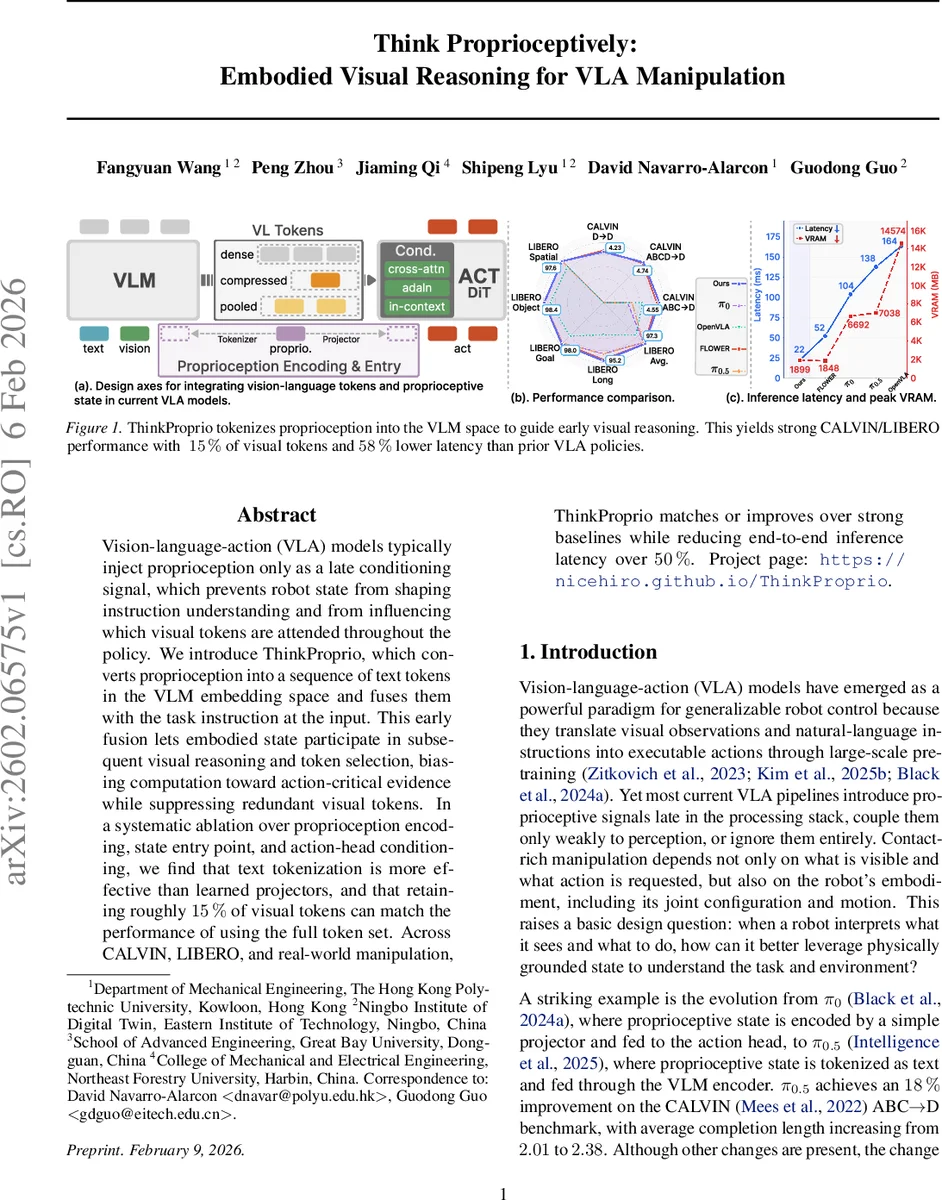

Vision-language-action (VLA) models typically inject proprioception only as a late conditioning signal, which prevents robot state from shaping instruction understanding and from influencing which visual tokens are attended throughout the policy. We introduce ThinkProprio, which converts proprioception into a sequence of text tokens in the VLM embedding space and fuses them with the task instruction at the input. This early fusion lets embodied state participate in subsequent visual reasoning and token selection, biasing computation toward action-critical evidence while suppressing redundant visual tokens. In a systematic ablation over proprioception encoding, state entry point, and action-head conditioning, we find that text tokenization is more effective than learned projectors, and that retaining roughly 15% of visual tokens can match the performance of using the full token set. Across CALVIN, LIBERO, and real-world manipulation, ThinkProprio matches or improves over strong baselines while reducing end-to-end inference latency over 50%.

💡 Research Summary

The paper addresses a fundamental limitation of current Vision‑Language‑Action (VLA) systems: proprioceptive information (joint angles, end‑effector pose, etc.) is typically injected only at the final action‑head stage, preventing the robot’s physical state from influencing how visual inputs are interpreted and which visual tokens are attended during reasoning. To overcome this, the authors propose ThinkProprio, a novel architecture that tokenizes proprioception directly into the embedding space of a pretrained Vision‑Language Model (VLM) and fuses these tokens with the natural‑language instruction at the very beginning of the processing pipeline.

Proprioceptive tokenization: Continuous proprioceptive vectors are uniformly discretized into B bins per dimension, then each bin index is mapped to one of the last B token IDs in the VLM vocabulary. The corresponding token embeddings are retrieved from the VLM’s token‑embedding table, yielding a set of proprioceptive tokens that share the same dimensionality and semantic space as language tokens. This eliminates the need for a learned projection layer and leverages the pretrained text encoder directly.

Physically‑grounded visual token selection: The concatenated instruction‑plus‑proprioception tokens (the “guidance tokens”) are used to compute a query for every visual patch token. After RMS‑normalization, each visual token attends to the guidance tokens, producing a query matrix Q. A similarity score matrix S between Q and the visual tokens is then perturbed with Gumbel noise and scaled by a temperature α that anneals from a high to a low value during training. A vote‑based discrete selection is performed: each row of the noisy score matrix casts a vote for the visual token with the highest perturbed score; any visual token receiving at least one vote is retained. To keep the operation differentiable, a Straight‑Through Estimator (STE) combines the hard binary mask with the soft selection probabilities derived from the Gumbel‑softmax relaxation. The result is a compact set of visual tokens (≈15 % of the original) plus a global context token that preserves coarse scene information.

Model pipeline: The compact visual token set, the global context token, the proprioceptive tokens, and the language tokens are concatenated and fed into the pretrained VLM. The VLM produces fused conditioning features C, which are then consumed by a diffusion‑based action head (Flow model). The action head receives the time‑step scalar T via global AdaLN‑style modulation and cross‑attends to C. Because proprioception is already embedded in C, no separate conditioning path is required.

Training objective: The authors adopt flow‑matching loss: a random diffusion time T is sampled, the target action chunk is noised, and the action head predicts the velocity field that would denoise it. The loss is the L2 distance between the predicted velocity and the true noise‑minus‑action term.

Empirical evaluation: Experiments are conducted on two simulation benchmarks—CALVIN (chain‑of‑tasks language instructions) and LIBERO (various manipulation suites)—as well as on a real‑world robot platform. Key findings include:

- On CALVIN’s ABC→D split, ThinkProprio improves average completed chain length from 4.44 to 4.55 and raises success rate from 96.9 % to 97.3 % despite using only ~15 % of visual tokens.

- Inference latency drops from 52 ms to 22 ms (≈58 % reduction) and VRAM consumption is markedly lower.

- Similar gains are observed on LIBERO, with modest improvements in success rates and comparable latency reductions.

Ablation studies reveal that:

- Text‑tokenization of proprioception outperforms learned linear projections.

- Early fusion (placing proprioceptive tokens at the input) yields the largest performance boost compared to mid‑network or late‑stage insertion.

- Cross‑attention conditioning of the action head is more effective than AdaLN or in‑context concatenation, especially when combined with the token‑selection mechanism.

Real‑world validation demonstrates that the same policy trained in simulation transfers to a physical robot with negligible performance loss, confirming that the compact token set retains sufficient scene information for robust manipulation.

Contributions and impact: ThinkProprio introduces a principled way to make robot state an active participant in visual reasoning, rather than a passive conditioning signal. By converting proprioception into VLM‑compatible text tokens and using it to guide visual token pruning, the method achieves both computational efficiency and state‑aware perception. This work opens a new direction for designing embodied VLA systems where embodiment information shapes perception from the earliest stages, potentially benefiting a wide range of tasks that require tight integration of vision, language, and motor control.

Comments & Academic Discussion

Loading comments...

Leave a Comment