The Law of Task-Achieving Body Motion: Axiomatizing Success of Robot Manipulation Actions

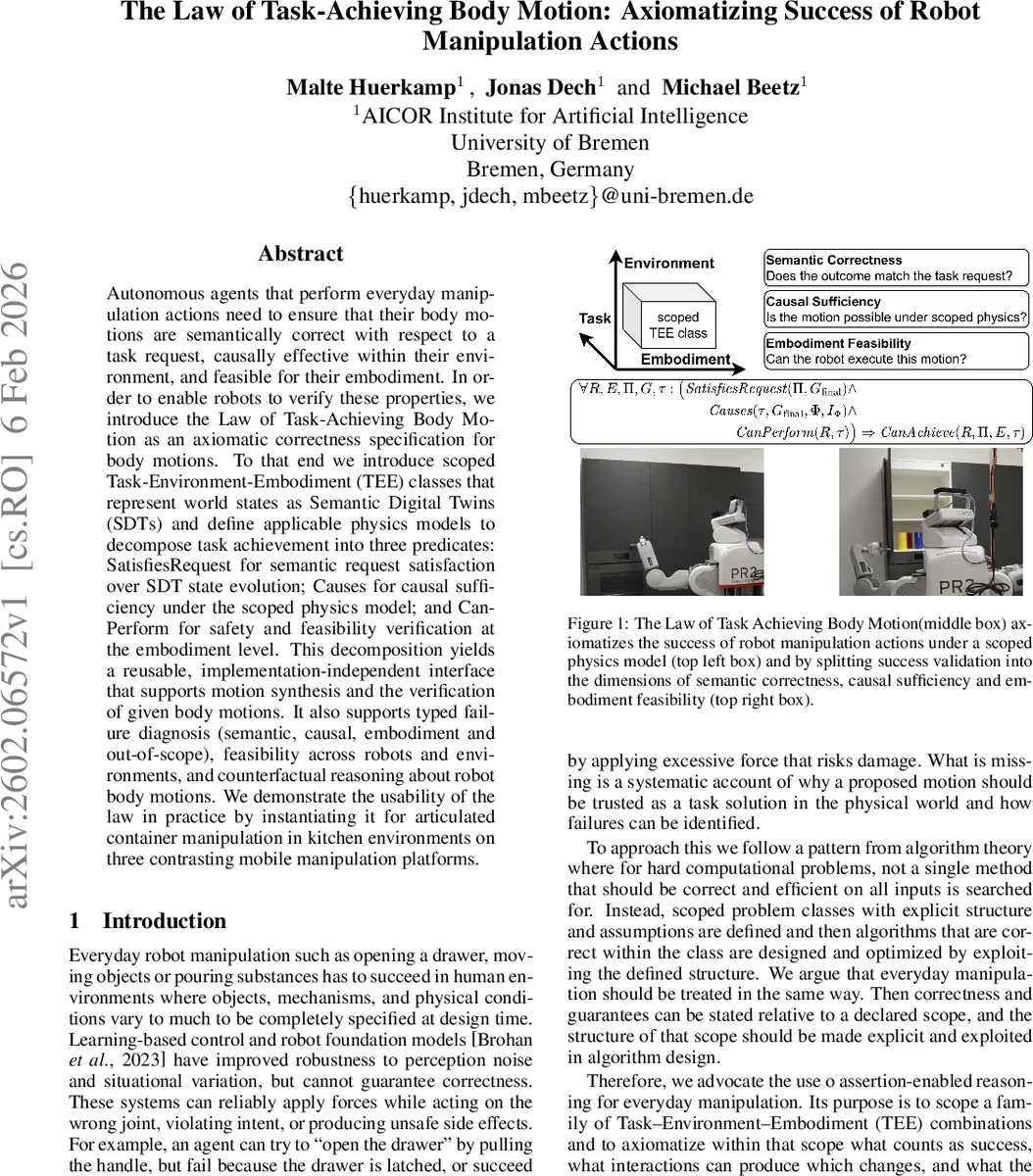

Autonomous agents that perform everyday manipulation actions need to ensure that their body motions are semantically correct with respect to a task request, causally effective within their environment, and feasible for their embodiment. To enable robots to verify these properties, we introduce the Law of Task-Achieving Body Motion as an axiomatic correctness specification for body motions. To that end, we define scoped Task-Environment-Embodiment (TEE) classes that represent world states as Semantic Digital Twins (SDTs) and specify applicable physics models. These classes decompose task achievement into three predicates: SatisfiesRequest for semantic request satisfaction over SDT state evolution; Causes for causal sufficiency under the scoped physics model; and CanPerform for safety and feasibility verification at the embodiment level. This decomposition yields a reusable, implementation-independent interface that supports motion synthesis and the verification of given body motions. It also supports typed failure diagnosis (semantic, causal, embodiment, and out-of-scope), feasibility assessment across robots and environments, and counterfactual reasoning about robot body motions. We demonstrate the usability of the law in practice by instantiating it for articulated container manipulation in kitchen environments on three contrasting mobile manipulation platforms.

💡 Research Summary

The paper introduces a formal correctness specification for robot manipulation called the “Law of Task‑Achieving Body Motion.” The authors argue that everyday manipulation tasks must be correct along three distinct dimensions:

- Semantic: the motion must satisfy the task request.
- Causal: the motion must be sufficient to bring about the desired world change under a scoped physics model.
- Embodiment: the robot must be physically capable of executing the motion.

To make these dimensions amenable to systematic verification, they define scoped Task‑Environment‑Embodiment (TEE) classes. A TEE class bundles five components:

- a family of task types;
- a family of environment models;
- a family of robot models;
- a governing physics model Φ;
- a set of validity intervals IΦ for the physical parameters on which Φ is trusted.

Guarantees are made only within the declared scope. Outside it, for example when a physical parameter leaves its interval in IΦ, the system abstains from making any claim.
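To make the notion of scope concrete, a TEE class can be sketched as a plain data structure. This is a minimal illustration, not the paper's implementation. The following are all hypothetical:

- the class and field names;
- the `covers` and `params_in_scope` methods;
- the parameter names and interval values in the example instance (loosely named after the paper's D_artic).

The sketch shows one idea: a claim is made only when the task, environment, and robot fall inside the declared families and every physical parameter lies inside IΦ.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple


@dataclass
class TEEClass:
    """Hypothetical container for a scoped Task-Environment-Embodiment class."""
    task_types: FrozenSet[str]                          # family of task types
    environment_models: FrozenSet[str]                  # family of environment models
    robot_models: FrozenSet[str]                        # family of robot models
    physics_model: str                                  # name of the governing physics model Phi
    validity_intervals: Dict[str, Tuple[float, float]]  # I_Phi: parameter -> (low, high)

    def covers(self, task: str, env: str, robot: str) -> bool:
        """True if the (task, environment, robot) triple lies inside the declared families."""
        return (task in self.task_types and env in self.environment_models
                and robot in self.robot_models)

    def params_in_scope(self, params: Dict[str, float]) -> bool:
        """True only if every parameter Phi relies on is present and inside its interval."""
        return all(name in params and low <= params[name] <= high
                   for name, (low, high) in self.validity_intervals.items())


# Illustrative instance: all names and numbers are made up for this sketch.
d_artic = TEEClass(
    task_types=frozenset({"open_container", "close_container"}),
    environment_models=frozenset({"kitchen_A"}),
    robot_models=frozenset({"robot_1", "robot_2", "robot_3"}),
    physics_model="articulated_rigid_body",
    validity_intervals={"door_mass_kg": (0.5, 15.0), "hinge_damping": (0.0, 2.0)},
)
print(d_artic.covers("open_container", "kitchen_A", "robot_1"))              # True
print(d_artic.params_in_scope({"door_mass_kg": 40.0, "hinge_damping": 0.3}))  # False: abstain
```

A missing parameter is treated the same as an out-of-interval one. If Φ's inputs are unknown, the sketch cannot certify that the model is trusted, so it declines.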

World states are represented as Semantic Digital Twins (SDTs), which are directed attributed graphs containing named entities, semantic and spatial relations, and physical properties. This structured representation enables logical queries for semantic verification and supplies all necessary data for physics simulation. The authors decompose the overall state transition into a robot‑centric body‑motion function Γ and an environment‑centric physics function Φ, thereby separating robot agency from environmental causality.
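The SDT representation and the Γ/Φ split can be illustrated with a toy scene graph. This is a sketch under stated assumptions:

- The `SDT` class, its methods, the drawer attributes, and the one-line drawer "physics" are hypothetical.
- The composition `phi(gamma(g, tau))` is also an assumption. The summary says only that the transition is decomposed into Γ and Φ, not how they are chained.

```python
import copy
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple


@dataclass
class SDT:
    """Minimal stand-in for a Semantic Digital Twin: a directed attributed graph."""
    nodes: Dict[str, Dict[str, Any]] = field(default_factory=dict)   # entity -> attributes
    edges: List[Tuple[str, str, str]] = field(default_factory=list)  # (source, relation, target)

    def add_entity(self, name: str, **attrs: Any) -> None:
        self.nodes[name] = dict(attrs)

    def relate(self, src: str, rel: str, dst: str) -> None:
        self.edges.append((src, rel, dst))

    def query(self, rel: str) -> List[Tuple[str, str]]:
        """All (source, target) pairs linked by relation `rel`."""
        return [(s, d) for s, r, d in self.edges if r == rel]


def gamma(g: SDT, tau: Dict[str, float]) -> SDT:
    """Robot-centric part (hypothetical): record the pull the body motion applies to the handle."""
    g2 = copy.deepcopy(g)
    g2.nodes["drawer"]["applied_pull_m"] = tau["pull_distance_m"]
    return g2


def phi(g: SDT) -> SDT:
    """Environment-centric part (toy physics): the drawer moves but stops at its travel limit."""
    g2 = copy.deepcopy(g)
    drawer = g2.nodes["drawer"]
    pull = drawer.pop("applied_pull_m", 0.0)
    drawer["opening_m"] = min(drawer["max_opening_m"], drawer["opening_m"] + pull)
    return g2


def transition(g: SDT, tau: Dict[str, float]) -> SDT:
    # Assumed composition: apply the body-motion effect first, then let physics evolve the scene.
    return phi(gamma(g, tau))


g0 = SDT()
g0.add_entity("drawer", opening_m=0.0, max_opening_m=0.4)
g0.add_entity("kitchen_counter")
g0.relate("drawer", "partOf", "kitchen_counter")
g1 = transition(g0, {"pull_distance_m": 0.3})
print(g1.nodes["drawer"]["opening_m"])  # 0.3
print(g1.query("partOf"))               # [('drawer', 'kitchen_counter')]
```

Copying the graph in each step keeps `g0` unchanged. This lets the same initial state be reused when comparing alternative motions.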

The core of the contribution is an axiom schema:

∀R,E,Π,G,τ : (SatisfiesRequest(Π,G_final) ∧ Causes(τ,G_final,Φ,IΦ) ∧ CanPerform(R,τ)) ⇒ CanAchieve(R,E,Π,τ)

The three predicates are defined as follows:

- SatisfiesRequest(Π,G_final) – checks that the final SDT satisfies the logical goal specification of the task. Implementations can use scene‑graph query engines or knowledge‑base reasoning.

- Causes(τ,G_final,Φ,IΦ) – asserts that the motion τ, when simulated under Φ, yields a state approximating G_final and that all physical parameters lie within the trusted interval IΦ. If parameters fall outside IΦ, the predicate fails with an OUT‑OF‑SCOPE flag.

- CanPerform(R,τ) – verifies that τ respects the robot’s kinematic and dynamic limits and that no self‑collision occurs. Standard inverse‑kinematics solvers, whole‑body controllers, and collision‑checking libraries can be employed.
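The three predicates and the axiom can be prototyped as ordinary Boolean functions. The sketch below is a toy, not the paper's implementation, and makes these assumptions:

- An SDT is reduced to a flat dictionary.
- The initial state is passed to `causes` explicitly, since the summary's signature leaves it implicit.
- Φ is a hypothetical one-line drawer model.
- The joint limits and the self-collision test are placeholders.

Returning `None` from `causes` models the OUT‑OF‑SCOPE abstention. `can_achieve` reports the first failing antecedent as a typed diagnosis.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, float]  # toy stand-in for an SDT: attribute name -> value


class Verdict(Enum):
    ACHIEVES = "achieves"
    SEMANTIC = "semantic failure"
    CAUSAL = "causal failure"
    EMBODIMENT = "embodiment failure"
    OUT_OF_SCOPE = "out of scope"


@dataclass
class Motion:
    waypoints: List[Tuple[float, ...]]  # joint configurations visited by the body motion
    pull_m: float                       # handle displacement the motion applies


def satisfies_request(goal: Callable[[State], bool], g_final: State) -> bool:
    # Semantic check: does the final state satisfy the request's goal condition?
    return goal(g_final)


def causes(tau: Motion, g_init: State, g_final: State, phi, params: Dict[str, float],
           intervals: Dict[str, Tuple[float, float]], tol: float = 1e-3) -> Optional[bool]:
    # None means OUT-OF-SCOPE: some parameter is missing or outside I_Phi, so we abstain.
    for name, (lo, hi) in intervals.items():
        if not lo <= params.get(name, float("nan")) <= hi:
            return None
    simulated = phi(g_init, tau, params)
    return all(abs(simulated[k] - v) <= tol for k, v in g_final.items())


def can_perform(joint_limits: List[Tuple[float, float]], tau: Motion,
                self_collides: Callable[[Tuple[float, ...]], bool]) -> bool:
    # Embodiment check: every waypoint within joint limits and free of self-collision.
    for q in tau.waypoints:
        if len(q) != len(joint_limits) or self_collides(q):
            return False
        if any(not lo <= qi <= hi for qi, (lo, hi) in zip(q, joint_limits)):
            return False
    return True


def can_achieve(goal, tau, g_init, g_final, phi, params, intervals,
                joint_limits, self_collides) -> Verdict:
    # Evaluate the three antecedents of the axiom; report the first that fails.
    caused = causes(tau, g_init, g_final, phi, params, intervals)
    if caused is None:
        return Verdict.OUT_OF_SCOPE
    if not satisfies_request(goal, g_final):
        return Verdict.SEMANTIC
    if not caused:
        return Verdict.CAUSAL
    if not can_perform(joint_limits, tau, self_collides):
        return Verdict.EMBODIMENT
    return Verdict.ACHIEVES


def toy_phi(g: State, tau: Motion, p: Dict[str, float]) -> State:
    # Hypothetical physics: the drawer follows the pull until its travel limit.
    return {"drawer_opening_m": min(g["drawer_opening_m"] + tau.pull_m, p["max_travel_m"])}


def goal(g: State) -> bool:
    return g["drawer_opening_m"] >= 0.25  # hypothetical meaning of "drawer is open"


args = dict(g_init={"drawer_opening_m": 0.0}, g_final={"drawer_opening_m": 0.3},
            phi=toy_phi, intervals={"max_travel_m": (0.2, 0.6)},
            joint_limits=[(-1.0, 1.0), (0.0, 0.5)], self_collides=lambda q: False)
tau_ok = Motion(waypoints=[(0.0, 0.1), (0.2, 0.4)], pull_m=0.3)
print(can_achieve(goal, tau_ok, params={"max_travel_m": 0.4}, **args).name)  # ACHIEVES
```

Checking scope first mirrors the law's abstention rule: when Φ is not trusted, no claim is made at all. The order of the remaining checks is a choice of this sketch. A diagnostic tool could instead report every failing predicate.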

Because the law is expressed purely as logical relations, it can be used in multiple modes:

- Motion Generation – given (R,E,Π,G), search for a τ that satisfies all three predicates, turning the law into a task‑level specification for planners, learning‑based generators, or LLM‑driven proposals.

- Explanation and Diagnosis – given an observed τ, evaluate each predicate to pinpoint whether failure is semantic, causal, embodiment‑related, or out‑of‑scope.

- Counterfactual Reasoning – modify any of the arguments (e.g., substitute a different robot or alter the environment) and re‑evaluate the predicates to answer “what‑if” questions.

- Learning‑from‑Demonstration – use the predicates as loss terms or constraints to steer data‑driven models toward motions for which all three predicates hold.
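Motion generation and counterfactual reasoning can reuse one set of predicate checks. Below is a minimal generate-and-test sketch in a toy setting, with these assumptions:

- A motion τ is just a handle pull distance in metres.
- Φ clamps the drawer at its travel limit.
- A robot is characterized only by a hypothetical maximum pull range.

None of these names or numbers come from the paper. Generate-and-test is used only because it is the simplest search that treats the law as a filter. A planner, learned generator, or LLM proposer could supply the candidates instead.

```python
from typing import Callable, Dict, Iterable, Optional

Checks = Dict[str, bool]


def make_checker(max_pull_m: float, travel_m: float, target_m: float,
                 goal_min_m: float = 0.25, tol: float = 0.02) -> Callable[[float], Checks]:
    """Toy instance: tau is a pull distance (m); target_m is the final opening G_final."""
    def check(tau: float) -> Checks:
        simulated = min(tau, travel_m)  # toy Phi: the drawer stops at its travel limit
        return {
            "SatisfiesRequest": target_m >= goal_min_m,   # G_final counts as "drawer open"
            "Causes": abs(simulated - target_m) <= tol,  # tau yields G_final under Phi
            "CanPerform": 0.0 <= tau <= max_pull_m,      # within the robot's hypothetical range
        }
    return check


def generate(candidates: Iterable[float], check: Callable[[float], Checks]) -> Optional[float]:
    """Generate-and-test: return the first candidate for which all three predicates hold."""
    for tau in candidates:
        if all(check(tau).values()):
            return tau
    return None


candidates = [round(0.05 * i, 2) for i in range(13)]  # 0.0 .. 0.6 m
robot_a = make_checker(max_pull_m=0.5, travel_m=0.45, target_m=0.35)
robot_b = make_checker(max_pull_m=0.3, travel_m=0.45, target_m=0.35)

tau_a = generate(candidates, robot_a)
print(tau_a)                          # 0.35
print(generate(candidates, robot_b))  # None
# Counterfactual: what if robot B executed robot A's motion?
print(robot_b(tau_a))  # {'SatisfiesRequest': True, 'Causes': True, 'CanPerform': False}
```

Re-evaluating robot A's motion with robot B's checker answers the "what-if" question. It also attributes the failure to the embodiment predicate alone.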

The authors instantiate the framework for articulated container manipulation in kitchen settings (opening/closing drawers, cupboard doors, oven doors). They define a specific TEE class D_artic, construct SDT schemas for kitchen scenes, and set physics‑parameter intervals based on calibrated rigid‑body models. Three mobile manipulators (e.g., PR2, Fetch, TurtleBot‑Arm) are used as embodiments. For each robot, the law is applied in the four usage modes. The experiments demonstrate that generated motions satisfy all three predicates, while failure cases (e.g., parameters outside IΦ, joint‑limit violations, or simulation‑goal mismatches) are correctly identified and classified.

Overall, the paper provides a rigorous, reusable logical interface that bridges high‑level task semantics with low‑level physical execution. By making the scope explicit and separating semantic, causal, and embodiment concerns, the Law of Task‑Achieving Body Motion enables systematic verification, synthesis, diagnosis, and counterfactual analysis of robot manipulation across diverse robots and environments, paving the way for more trustworthy autonomous systems in human‑centric domains.
