Adaptive Uncertainty-Aware Tree Search for Robust Reasoning

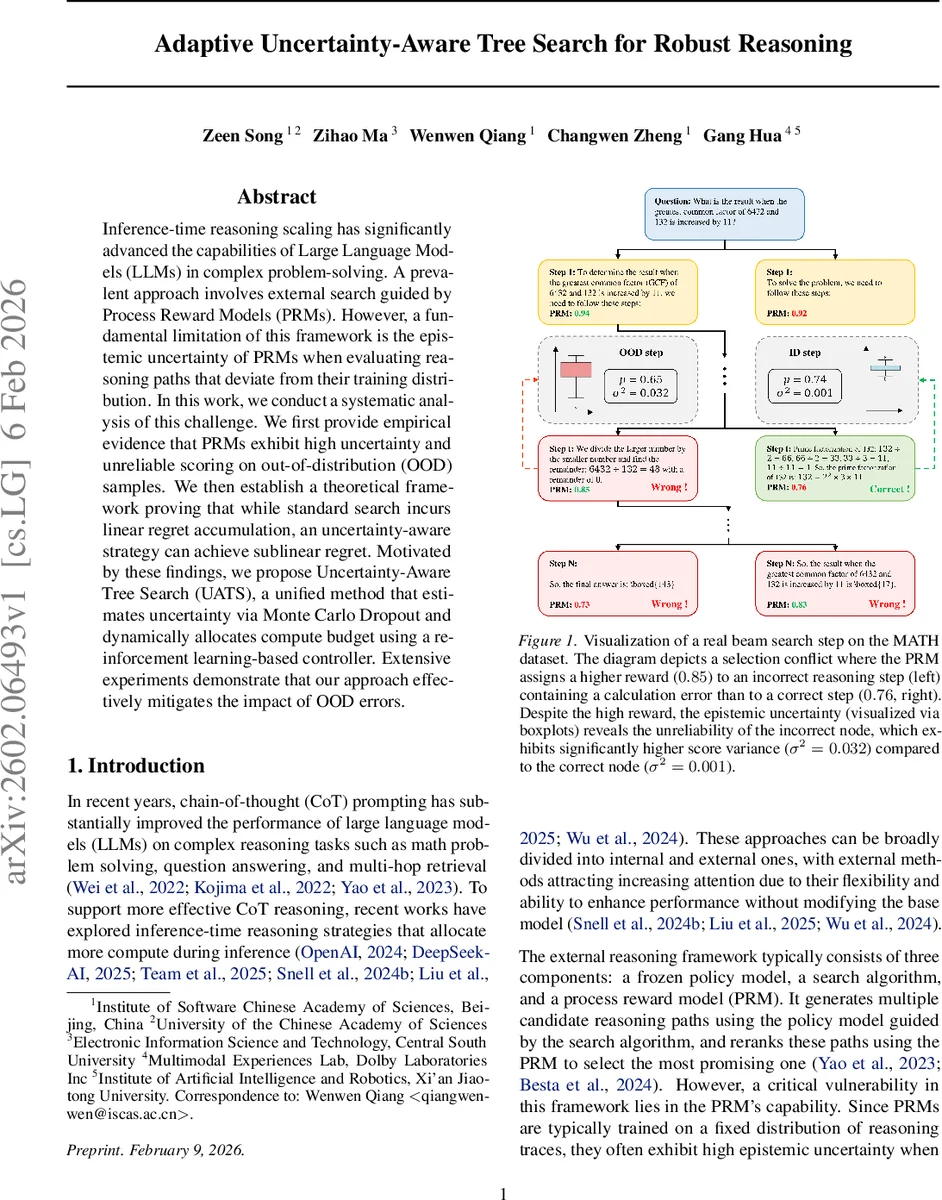

Inference-time reasoning scaling has significantly advanced the capabilities of Large Language Models (LLMs) in complex problem-solving. A prevalent approach involves external search guided by Process Reward Models (PRMs). However, a fundamental limitation of this framework is the epistemic uncertainty of PRMs when evaluating reasoning paths that deviate from their training distribution. In this work, we conduct a systematic analysis of this challenge. We first provide empirical evidence that PRMs exhibit high uncertainty and unreliable scoring on out-of-distribution (OOD) samples. We then establish a theoretical framework proving that while standard search incurs linear regret accumulation, an uncertainty-aware strategy can achieve sublinear regret. Motivated by these findings, we propose Uncertainty-Aware Tree Search (UATS), a unified method that estimates uncertainty via Monte Carlo Dropout and dynamically allocates compute budget using a reinforcement learning-based controller. Extensive experiments demonstrate that our approach effectively mitigates the impact of OOD errors.

💡 Research Summary

Paper Overview

The authors investigate a critical weakness in external reasoning systems that rely on Process Reward Models (PRMs) to evaluate intermediate reasoning steps generated by a frozen policy language model. While PRMs have proven effective as verifiers, they are trained on a fixed distribution of reasoning traces and therefore exhibit high epistemic uncertainty when scoring out‑of‑distribution (OOD) steps. This uncertainty can cause PRMs to assign over‑confident but erroneous scores, leading the search algorithm to discard correct reasoning paths.

Empirical Findings

Two open‑source PRMs (Math‑Shepherd‑PRM‑7B and Qwen2.5‑Math‑PRM‑7B) are evaluated on reasoning traces produced by two policy models (LLemma‑7B and Qwen‑2.5‑Instruct‑7B) over a subset of the MATH‑500 benchmark. The experiments measure (i) final answer accuracy and (ii) score variance obtained via Monte Monte Dropout as a proxy for epistemic uncertainty. Results show that when the policy and PRM are drawn from the same data distribution, accuracy is high (≈78 %) and variance is low (σ²≈0.001). In contrast, mismatched pairs suffer a steep accuracy drop (≈55 %) and a dramatic increase in variance (σ²≈0.032). A concrete failure case illustrates a PRM giving a higher reward (0.85) to an incorrect step while the correct step receives a lower reward (0.76); the variance signal reveals the unreliability of the high‑scoring node.

Theoretical Analysis

The paper formalizes the search process as a sequential decision problem. At each step t, the policy proposes M candidate prefixes h_{t,i}. The PRM provides a stochastic estimate \hat{R}(h) while the true reward is R*(h). Regret is defined as the cumulative difference between the reward of the selected prefix and the optimal one. If the algorithm ignores uncertainty and greedily selects the highest \hat{R}, the expected regret grows linearly with the number of steps (O(T)) because OOD candidates can be repeatedly chosen. By incorporating epistemic variance σ² into a UCB‑style selection rule (e.g., \hat{R} + β·σ), the authors prove that the expected regret becomes sublinear, bounded by O(√T). This establishes a rigorous justification for uncertainty‑aware search.

Proposed Method: Uncertainty‑Aware Tree Search (UATS)

UATS consists of three components:

-

Uncertainty Estimation – Monte Monte Dropout is applied N times (typically 10–20) to each candidate, yielding a mean score μ and variance σ².

-

Uncertainty‑Weighted Exploration – Candidates are ranked using a combination of μ and σ (e.g., μ − λ·σ for conservative pruning or μ + β·σ for optimistic exploration). High‑uncertainty nodes are selectively re‑evaluated with more dropout samples to reduce variance before final selection.

-

Dynamic Budget Allocation – The total compute budget C is modeled as a Markov Decision Process (MDP). A reinforcement‑learning controller learns to adjust hyper‑parameters such as beam width, depth, and the number of re‑evaluations based on the current state (e.g., remaining budget, observed uncertainties). The RL reward balances final answer accuracy against token consumption, encouraging efficient use of the budget.

Experimental Validation

The authors test UATS on two challenging mathematical reasoning benchmarks: MATH‑500 (500 problems) and AIME‑24 (Olympiad‑level problems). They evaluate multiple frozen policy models (Qwen‑2.5, Llama 3.1, Llama 3.2) and a diverse set of PRMs (including Skywork‑o1). Baselines include standard beam search, Best‑of‑N sampling, Tree‑of‑Thoughts, and adaptive verifier‑guided search. Across all settings, UATS consistently outperforms baselines, achieving 3–7 percentage‑point higher accuracy. The gains are most pronounced when the policy‑PRM pair is highly mismatched, confirming that uncertainty awareness mitigates OOD failures. Moreover, the additional cost of dropout‑based uncertainty estimation accounts for less than 10 % of the total compute budget, demonstrating practical efficiency.

Conclusions and Future Directions

The paper makes three major contributions:

-

It identifies and empirically quantifies the epistemic uncertainty problem in PRM‑based external reasoning.

-

It provides a unified theoretical framework showing that uncertainty‑aware selection yields provably lower regret than uncertainty‑agnostic strategies.

-

It introduces UATS, a concrete algorithm that integrates Monte Monte Dropout uncertainty estimates with a learned budget controller, delivering superior accuracy and compute efficiency on real‑world math reasoning tasks.

Future work could explore richer Bayesian uncertainty estimators (e.g., deep ensembles), online fine‑tuning of PRMs during inference, and extending the approach to non‑math domains such as code generation or multi‑modal reasoning.

Overall, the study convincingly demonstrates that accounting for PRM uncertainty is essential for robust, scalable external reasoning, and that the proposed UATS framework offers a viable path toward more reliable AI problem‑solving systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment