RelayGen: Intra-Generation Model Switching for Efficient Reasoning

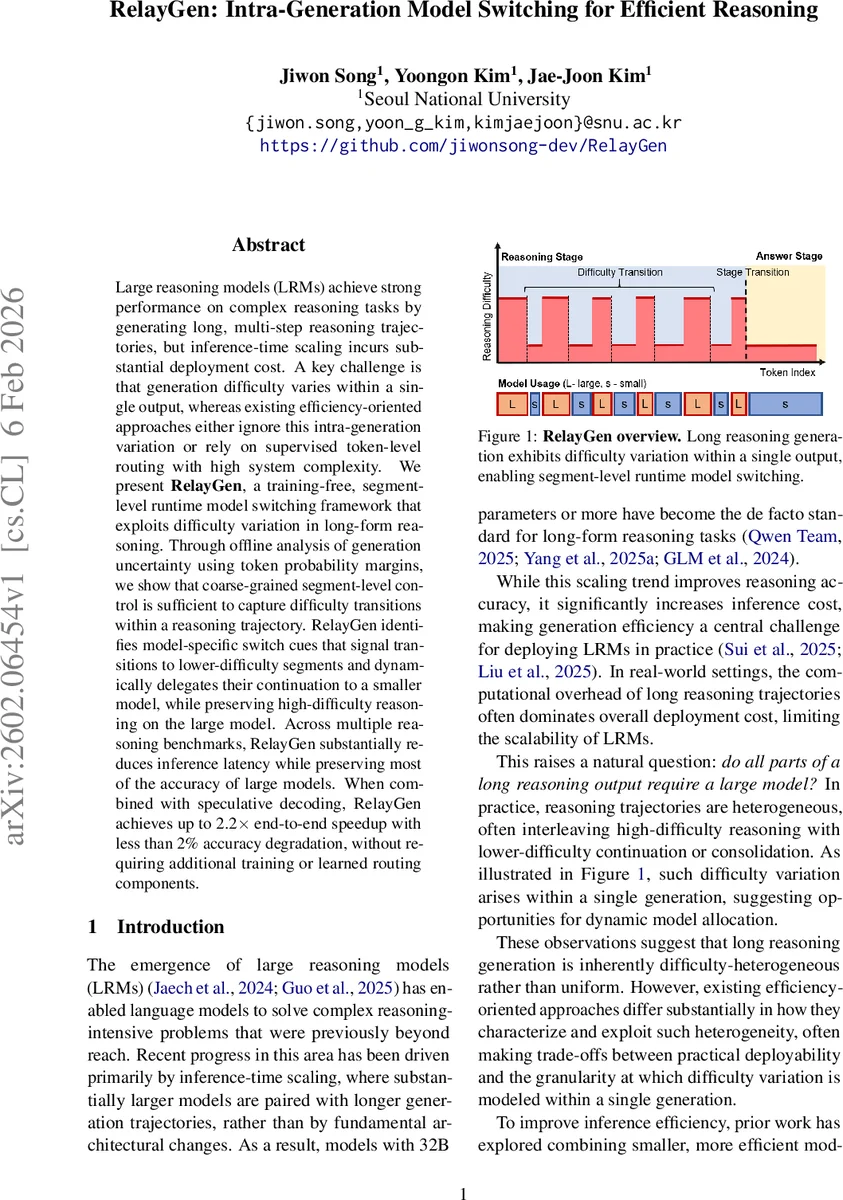

Large reasoning models (LRMs) achieve strong performance on complex reasoning tasks by generating long, multi-step reasoning trajectories, but inference-time scaling incurs substantial deployment cost. A key challenge is that generation difficulty varies within a single output, whereas existing efficiency-oriented approaches either ignore this intra-generation variation or rely on supervised token-level routing with high system complexity. We present \textbf{RelayGen}, a training-free, segment-level runtime model switching framework that exploits difficulty variation in long-form reasoning. Through offline analysis of generation uncertainty using token probability margins, we show that coarse-grained segment-level control is sufficient to capture difficulty transitions within a reasoning trajectory. RelayGen identifies model-specific switch cues that signal transitions to lower-difficulty segments and dynamically delegates their continuation to a smaller model, while preserving high-difficulty reasoning on the large model. Across multiple reasoning benchmarks, RelayGen substantially reduces inference latency while preserving most of the accuracy of large models. When combined with speculative decoding, RelayGen achieves up to 2.2$\times$ end-to-end speedup with less than 2% accuracy degradation, without requiring additional training or learned routing components.

💡 Research Summary

RelayGen addresses the growing inference cost of large reasoning models (LRMs) by exploiting the fact that the difficulty of generating a long reasoning trace is not uniform. The authors first conduct an empirical study on the probability margin (the difference between the top‑1 and top‑2 token probabilities) across thousands of reasoning examples generated by a 32‑billion‑parameter model (Qwen‑3‑32B). They observe substantial fluctuations: the “reasoning stage” contains many low‑margin tokens indicating high uncertainty, while the subsequent “answer stage” exhibits consistently high margins, reflecting low uncertainty. Moreover, certain discourse‑level cues such as “therefore”, “however”, and “wait” tend to precede segments with higher margins, suggesting they can serve as signals for transitions from difficult to easier generation phases.

Based on these observations, RelayGen introduces a training‑free, segment‑level runtime model‑switching framework. The key steps are: (1) offline profiling of a large model to identify reliable “switch cues”. For each candidate cue, the average post‑sentence margin is computed and compared to the global average; cues whose post‑sentence margin exceeds the global average by at least one standard error are selected. This selection requires no optimization or additional parameters. (2) During inference, the large model generates the initial reasoning segment. When a switch cue is encountered, control is handed over to a much smaller model (e.g., Qwen‑3‑0.6B) for the remainder of the current sentence and for the entire answer stage. The small model reuses the key‑value cache built by the large model, preserving context while reducing per‑token computation.

The framework is fully compatible with speculative decoding, a technique that uses a small model to propose candidate tokens that are later verified by a large model. Because RelayGen operates at the granularity of sentences or predefined segments, it can be combined with speculative decoding without the incompatibilities that arise with token‑level routing.

Experiments on several challenging benchmarks—AIME 2025, GPQA‑Diamond, and Math500—demonstrate that RelayGen, when paired with speculative decoding, achieves up to a 2.2× reduction in end‑to‑end latency while incurring less than 2 % absolute accuracy loss. A focused ablation where only the answer stage is delegated to the small model yields a 99.86 % answer‑matching rate (only one mismatch out of 728 samples), confirming that answer generation is highly stable when conditioned on the reasoning context. Compared to token‑level routing methods that require a learned router, RelayGen eliminates the need for extra training data, model updates, and complex deployment pipelines, offering a pragmatic solution for production environments.

The paper also discusses limitations: the set of switch cues is model‑ and dataset‑specific, so new domains may require fresh offline profiling; and the current cue‑based approach may miss subtle difficulty transitions not captured by lexical markers. Future work is suggested to explore automatic cue discovery and dynamic threshold adaptation to broaden applicability.

In summary, RelayGen provides a simple yet effective mechanism to allocate model capacity where it is most needed within a single generation, achieving substantial speedups with minimal accuracy degradation, and doing so without any additional training or specialized routing components. This contribution advances the practical deployment of large reasoning models by bridging the gap between raw performance and real‑world efficiency.

Comments & Academic Discussion

Loading comments...

Leave a Comment