Don't Break the Boundary: Continual Unlearning for OOD Detection Based on Free Energy Repulsion

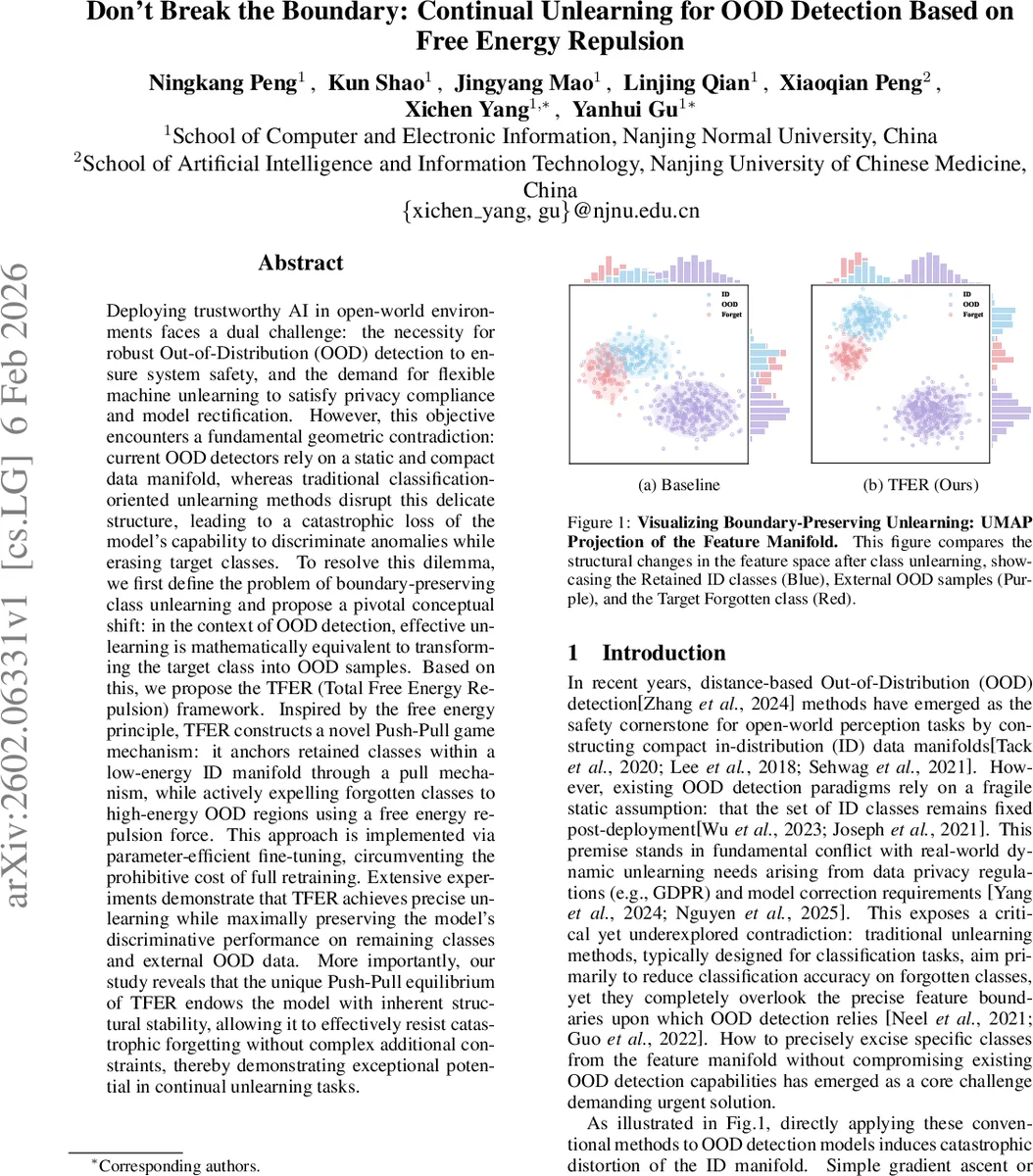

Deploying trustworthy AI in open-world environments faces a dual challenge: the necessity for robust Out-of-Distribution (OOD) detection to ensure system safety, and the demand for flexible machine unlearning to satisfy privacy compliance and model rectification. However, this objective encounters a fundamental geometric contradiction: current OOD detectors rely on a static and compact data manifold, whereas traditional classification-oriented unlearning methods disrupt this delicate structure, leading to a catastrophic loss of the model’s capability to discriminate anomalies while erasing target classes. To resolve this dilemma, we first define the problem of boundary-preserving class unlearning and propose a pivotal conceptual shift: in the context of OOD detection, effective unlearning is mathematically equivalent to transforming the target class into OOD samples. Based on this, we propose the TFER (Total Free Energy Repulsion) framework. Inspired by the free energy principle, TFER constructs a novel Push-Pull game mechanism: it anchors retained classes within a low-energy ID manifold through a pull mechanism, while actively expelling forgotten classes to high-energy OOD regions using a free energy repulsion force. This approach is implemented via parameter-efficient fine-tuning, circumventing the prohibitive cost of full retraining. Extensive experiments demonstrate that TFER achieves precise unlearning while maximally preserving the model’s discriminative performance on remaining classes and external OOD data. More importantly, our study reveals that the unique Push-Pull equilibrium of TFER endows the model with inherent structural stability, allowing it to effectively resist catastrophic forgetting without complex additional constraints, thereby demonstrating exceptional potential in continual unlearning tasks.

💡 Research Summary

The paper tackles a previously under-explored conflict between two essential requirements for deploying trustworthy AI in open-world settings: (1) maintaining a compact, static in-distribution (ID) manifold for high-quality out-of-distribution (OOD) detection, and (2) enabling flexible, privacy-driven class unlearning. Existing OOD detectors rely on a tightly bound feature space. Conventional unlearning methods are designed only to drive down classification accuracy on the target classes, and they disrupt this space, causing a catastrophic loss of OOD detection capability.

To resolve this, the authors first formalize “boundary-preserving class unlearning” and introduce a new evaluation paradigm: Forget-as-OOD. In this view, unlearning succeeds when samples of the forgotten class become indistinguishable from natural OOD data. Consequently, OOD metrics (AUROC, FPR95), with the forget-class samples playing the role of the OOD set, replace classification accuracy as the measure of unlearning effectiveness.
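Under Forget-as-OOD, the retained-class test samples act as ID data and the forget-class samples act as OOD data. The following is a minimal sketch of the two metrics. It uses NumPy only and assumes a score where higher means "more in-distribution" (for example, the negative free energy). The helper names `auroc` and `fpr_at_95_tpr` and the toy Gaussian scores are illustrative, not taken from the paper.

```python
import numpy as np

def auroc(id_scores, ood_scores):
    """P(random ID score > random OOD score), counting ties as 1/2."""
    id_s = np.asarray(id_scores, dtype=float)[:, None]
    ood_s = np.asarray(ood_scores, dtype=float)[None, :]
    # Pairwise comparison: O(n*m) memory, fine for modest evaluation sets.
    return float((id_s > ood_s).mean() + 0.5 * (id_s == ood_s).mean())

def fpr_at_95_tpr(id_scores, ood_scores):
    """Fraction of OOD samples accepted as ID at the threshold that keeps 95% of ID samples."""
    # 5th percentile of ID scores: about 95% of ID samples score at or above it.
    threshold = np.percentile(np.asarray(id_scores, dtype=float), 5)
    return float((np.asarray(ood_scores, dtype=float) >= threshold).mean())

# Toy scores (higher = more ID-like, e.g. negative free energy).
rng = np.random.default_rng(0)
retain_scores = rng.normal(loc=2.0, scale=1.0, size=1000)   # retained classes: ID
forget_scores = rng.normal(loc=-2.0, scale=1.0, size=1000)  # forget class: treated as OOD

print(f"AUROC: {auroc(retain_scores, forget_scores):.3f}")
print(f"FPR95: {fpr_at_95_tpr(retain_scores, forget_scores):.3f}")
```

Successful unlearning should push the retained-vs-forget AUROC toward 1 and FPR95 toward 0, ideally close to the values the detector reaches on external OOD data. For large evaluation sets, scikit-learn's `roc_auc_score` avoids the quadratic pairwise comparison.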

The proposed solution, Total Free Energy Repulsion (TFER), builds a Push-Pull game on top of a hyperspherical prototype-based OOD detector. Each retained class is represented by a set of von Mises-Fisher (vMF) prototypes. Each class logit L_j(z) is a log-sum-exp over the similarities between the embedding z and that class's prototypes.
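The following is a minimal sketch of how such prototype-based class logits could be computed. It assumes unit-norm embeddings and prototypes, M prototypes per class, and a shared vMF concentration `kappa` (the value 10 is an arbitrary choice). `l2_normalize` and `class_logits` are hypothetical helper names, not the authors' code.

```python
import numpy as np

def l2_normalize(x, axis=-1):
    """Project vectors onto the unit hypersphere."""
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def class_logits(z, prototypes, kappa=10.0):
    """Per-class logits L_j(z) = log sum_k exp(kappa * <mu_{j,k}, z>).

    z:          (d,) unit-norm embedding
    prototypes: (C, M, d) unit-norm prototypes, M per class
    kappa:      vMF concentration, an assumed hyperparameter
    """
    sims = kappa * (prototypes @ z)            # (C, M) scaled cosine similarities
    m = sims.max(axis=1)                       # per-class max for a stable log-sum-exp
    return m + np.log(np.exp(sims - m[:, None]).sum(axis=1))

# Toy setup: 3 classes, 4 prototypes each, 32-dimensional embeddings.
rng = np.random.default_rng(0)
protos = l2_normalize(rng.normal(size=(3, 4, 32)))
z = l2_normalize(protos[1, 0] + 0.05 * rng.normal(size=32))  # embedding near a class-1 prototype
logits = class_logits(z, protos)
print(logits.round(2), "predicted class:", int(logits.argmax()))
```

Log-sum-exp acts as a soft maximum, so a class logit is dominated by whichever of its prototypes lies closest to z. This lets one class cover several modes on the sphere.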

Push (Unlearn) Mechanism – The total free energy of a sample with respect to retained classes is defined as

$$E(z;\, \mathcal{Y}_{\text{Retain}}) = -\log \sum_{j \in \mathcal{Y}_{\text{Retain}}} \exp\big(L_j(z)\big).$$
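The free energy restricted to retained classes can be computed directly from the class logits. The following is a minimal sketch that assumes the logits L_j(z) for all original classes are already available. The name `free_energy`, the four-class setup, and the toy logits are hypothetical.

```python
import numpy as np

def free_energy(logits, retain_idx):
    """E(z; Y_Retain) = -log sum_{j in Y_Retain} exp(L_j(z)), computed stably.

    logits:     (C,) class logits L_j(z) over all C original classes
    retain_idx: indices of the retained classes Y_Retain
    """
    l = np.asarray(logits, dtype=float)[list(retain_idx)]
    m = l.max()                                   # shift for numerical stability
    return float(-(m + np.log(np.exp(l - m).sum())))

retain = [0, 1, 2]                                # hypothetical: class 3 is being forgotten
logits_retained_sample = np.array([8.0, 1.0, 0.5, 0.0])   # confident on retained class 0
logits_forget_sample = np.array([0.2, 0.1, 0.3, 9.0])     # confident only on forgotten class 3

print(round(free_energy(logits_retained_sample, retain), 2))  # -8.0 (low energy, ID-like)
print(round(free_energy(logits_forget_sample, retain), 2))    # -1.3 (higher energy)
```

The sum runs only over retained classes, so the forgotten class's own logit never enters E(z; Y_Retain). Changing logit 3 leaves the energy unchanged.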

The unlearning loss is

$$\mathcal{L}_{\text{unlearn}}(z_u) = \log \sum_{j \in \mathcal{Y}_{\text{Retain}}} \exp\big(L_j(z_u)\big).$$

Because $\mathcal{L}_{\text{unlearn}}(z_u) = -E(z_u; \mathcal{Y}_{\text{Retain}})$, minimizing this loss raises the total free energy of the forget sample $z_u$. The sample moves away from all retained-class manifolds simultaneously and is pushed into the high-energy region that OOD detectors treat as outliers.
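Nesting the two log-sum-exps (over classes, then over each class's prototypes) collapses into a single log-sum-exp over all retained prototypes. As a result, the gradient of L_unlearn with respect to z is κ times the softmax-weighted sum of the retained prototypes. The sketch below illustrates the geometric effect of the push as a toy under stated assumptions: unit-norm embeddings and prototypes, an assumed concentration `kappa`, and hypothetical names (`unlearn_loss_and_grad`, `lr`). In TFER the loss is minimized through parameter-efficient fine-tuning of the network. As a simplification, this sketch instead moves a single embedding directly, using Riemannian gradient descent on the hypersphere.

```python
import numpy as np

def unlearn_loss_and_grad(z, retain_protos, kappa=10.0):
    """L_unlearn(z) = log sum_j exp(L_j(z)), with L_j(z) = log sum_k exp(kappa * <mu_{j,k}, z>).

    The nested log-sum-exps collapse into one over all retained prototypes, so the
    Euclidean gradient is kappa times the softmax-weighted sum of those prototypes.
    retain_protos: (R, M, d) unit-norm prototypes of the retained classes.
    """
    flat = retain_protos.reshape(-1, retain_protos.shape[-1])  # (R*M, d)
    s = kappa * (flat @ z)
    m = s.max()
    w = np.exp(s - m)
    loss = m + np.log(w.sum())
    grad = kappa * (w / w.sum()) @ flat
    return float(loss), grad

rng = np.random.default_rng(0)
protos = rng.normal(size=(3, 4, 32))
protos /= np.linalg.norm(protos, axis=-1, keepdims=True)

# Hypothetical forget-class embedding that starts close to a retained prototype.
z = protos[0, 0] + 0.1 * rng.normal(size=32)
z /= np.linalg.norm(z)

lr, losses = 0.01, []
for _ in range(200):
    loss, grad = unlearn_loss_and_grad(z, protos)
    losses.append(loss)
    grad -= (grad @ z) * z          # keep only the component tangent to the sphere
    z = z - lr * grad               # gradient descent on L_unlearn: the "push"
    z /= np.linalg.norm(z)          # retract back onto the unit hypersphere

print(f"L_unlearn: {losses[0]:.2f} -> {losses[-1]:.2f}")
print(f"max cosine to retained prototypes: {(protos @ z).max():.2f}")
```

The gradient weights each retained prototype by its share of the soft maximum. The push therefore always targets whichever retained prototypes currently dominate, so no single retained class can keep absorbing the forgotten sample.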

Pull (Protect) Mechanism – To keep the retained manifold stable, a prototype‑based contrastive loss is employed:

L_protect = −log
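The following is a minimal sketch of the pull term. It assumes (this is not confirmed by the text) a standard prototype-based cross-entropy, in which a retained sample's own-class logit L_y(z) is contrasted only against the other retained-class logits. The helper names, the weight `lam`, and the choice to sum pull and push into one objective are hypothetical illustrations of the Push-Pull game, not TFER's published loss.

```python
import numpy as np

def logsumexp(x):
    """Numerically stable log(sum(exp(x)))."""
    x = np.asarray(x, dtype=float)
    m = x.max()
    return float(m + np.log(np.exp(x - m).sum()))

def protect_loss(logits, label, retain_idx):
    """Assumed pull term: -log( exp(L_y(z)) / sum_{j in Y_Retain} exp(L_j(z)) ).

    Only retained-class logits enter the normalizer; `label` must be a retained class.
    """
    logits = np.asarray(logits, dtype=float)
    return logsumexp(logits[retain_idx]) - float(logits[label])

def push_pull_objective(retain_batch, forget_logits, retain_idx, lam=1.0):
    """Hypothetical combined objective: mean pull loss + lam * mean push (unlearning) loss."""
    pull = np.mean([protect_loss(l, y, retain_idx) for l, y in retain_batch])
    push = np.mean([logsumexp(np.asarray(l, dtype=float)[retain_idx]) for l in forget_logits])
    return float(pull + lam * push)

retain = [0, 1, 2]                       # hypothetical: class 3 is being forgotten
logits = np.array([6.0, 1.0, 0.5, 7.0])
print(round(protect_loss(logits, 0, retain), 4))   # 0.0108: class 0 dominates the retained logits
print(round(protect_loss(logits, 1, retain), 4))   # 5.0108: the sample is not pulled toward class 1
```

Because the forgotten class is excluded from the normalizer, its logit (7.0 here) does not affect the pull term. The retained classes compete only among themselves.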
