SAGE: Benchmarking and Improving Retrieval for Deep Research Agents

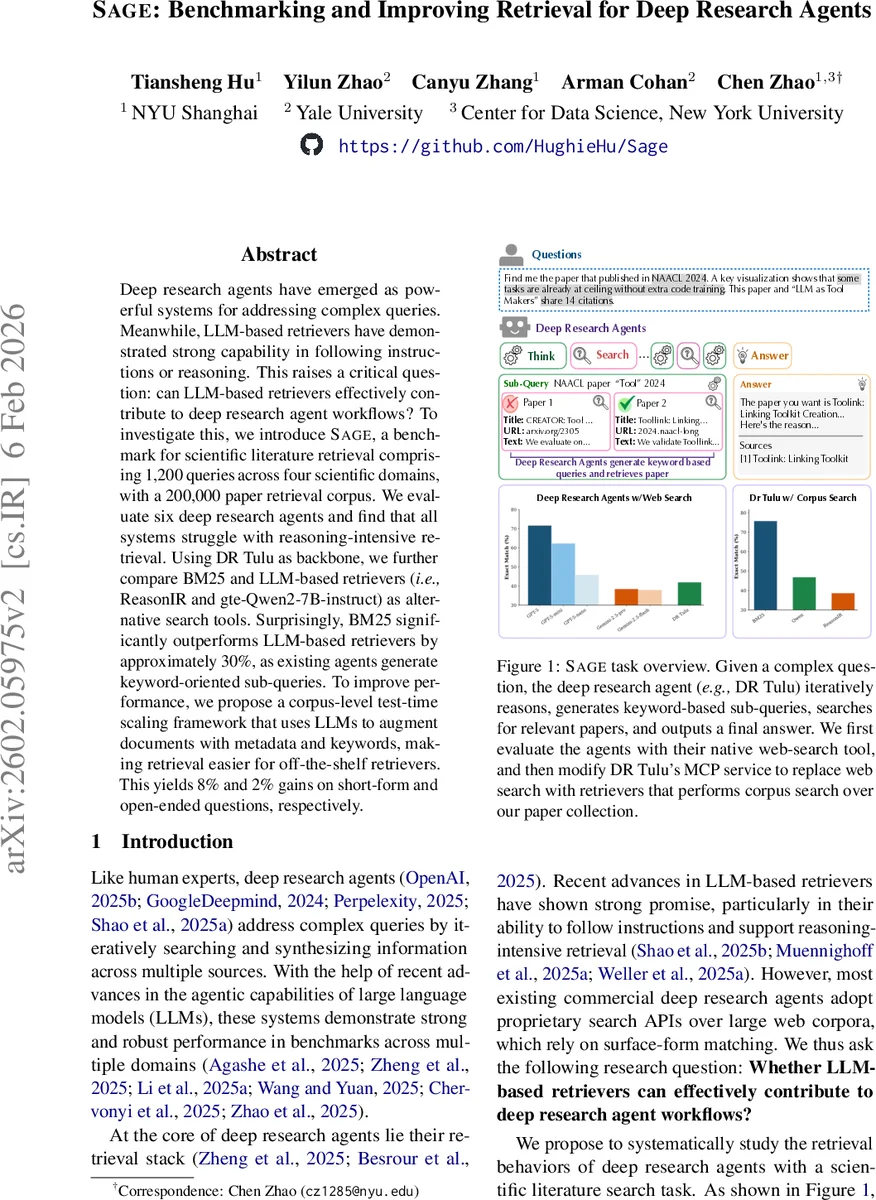

Deep research agents have emerged as powerful systems for addressing complex queries. Meanwhile, LLM-based retrievers have demonstrated strong capability in following instructions or reasoning. This raises a critical question: can LLM-based retrievers effectively contribute to deep research agent workflows? To investigate this, we introduce SAGE, a benchmark for scientific literature retrieval comprising 1,200 queries across four scientific domains, with a 200,000 paper retrieval corpus. We evaluate six deep research agents and find that all systems struggle with reasoning-intensive retrieval. Using DR Tulu as backbone, we further compare BM25 and LLM-based retrievers (i.e., ReasonIR and gte-Qwen2-7B-instruct) as alternative search tools. Surprisingly, BM25 significantly outperforms LLM-based retrievers by approximately 30%, as existing agents generate keyword-oriented sub-queries. To improve performance, we propose a corpus-level test-time scaling framework that uses LLMs to augment documents with metadata and keywords, making retrieval easier for off-the-shelf retrievers. This yields 8% and 2% gains on short-form and open-ended questions, respectively.

💡 Research Summary

The paper introduces SAGE, a new benchmark designed to evaluate the retrieval component of deep research agents on scientific literature. SAGE comprises 1,200 queries—600 short‑form, fact‑based questions that require intensive reasoning over metadata, figures, tables, and citation networks, and 600 open‑ended, real‑world scenario questions that ask agents to retrieve a ranked set of relevant papers. The benchmark covers four domains (Computer Science, Natural Science, Healthcare, and Humanities) with a fixed corpus of 200 k up‑to‑date open‑access papers (≈50 k per domain).

The authors first assess six state‑of‑the‑art deep research agents, including proprietary models (GPT‑5 variants, Gemini‑2.5‑Pro/Flash) and the open‑source DR‑Tulu, using each agent’s native web‑search API. All agents perform reasonably on simple fact‑lookup but struggle dramatically on the reasoning‑intensive queries; even the best system achieves only around 70 % exact‑match accuracy on short‑form questions.

To isolate the retrieval step, the study fixes DR‑Tulu as the reasoning backbone and swaps its search backend for three alternatives: a classic BM25 lexical retriever, the LLM‑based ReasonIR, and the instruction‑tuned gte‑Qwen2‑7B‑instruct. Surprisingly, BM25 outperforms the LLM‑based retrievers by roughly 30 % in exact‑match. The authors attribute this gap to the nature of the sub‑queries generated by current agents: they are largely keyword‑oriented, which aligns well with surface‑form matching but defeats the semantic matching strengths of LLM retrievers.

To address this mismatch, the paper proposes a corpus‑level test‑time scaling framework. An LLM is used to process every document in the corpus, automatically generating enriched metadata (year, authors, venue) and a concise set of domain‑specific keywords. These augmentations make the documents more amenable to lexical matching, effectively bridging the gap between the agents’ keyword‑driven sub‑queries and the retrieval engine. When applied, the framework yields an 8 % absolute gain in exact‑match for short‑form questions and a 2 % increase in weighted recall for open‑ended questions. Ablation experiments confirm that combining both metadata and keyword enrichment provides the largest boost, while each component alone still offers measurable improvements.

The paper’s contributions are threefold: (1) the release of SAGE, a large, multi‑domain benchmark that stresses reasoning over scientific literature; (2) a comprehensive evaluation showing that current LLM‑based retrievers collaborate poorly with deep research agents, and that traditional BM25 remains a strong baseline; (3) a practical test‑time scaling method that leverages LLMs to enrich the corpus, delivering consistent performance gains without modifying the agents themselves.

Limitations include the reliance on a single open‑source agent for the retrieval‑only experiments, and the computational cost of augmenting large corpora at inference time. Future work could explore joint optimization of agents and retrievers, dynamic corpus updates, and more sophisticated prompt engineering for LLM retrievers to better handle keyword‑style sub‑queries. Overall, the study highlights the importance of aligning retrieval mechanisms with the query generation behavior of autonomous agents and provides a concrete pathway to improve scientific literature search in autonomous research pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment