DisCa: Accelerating Video Diffusion Transformers with Distillation-Compatible Learnable Feature Caching

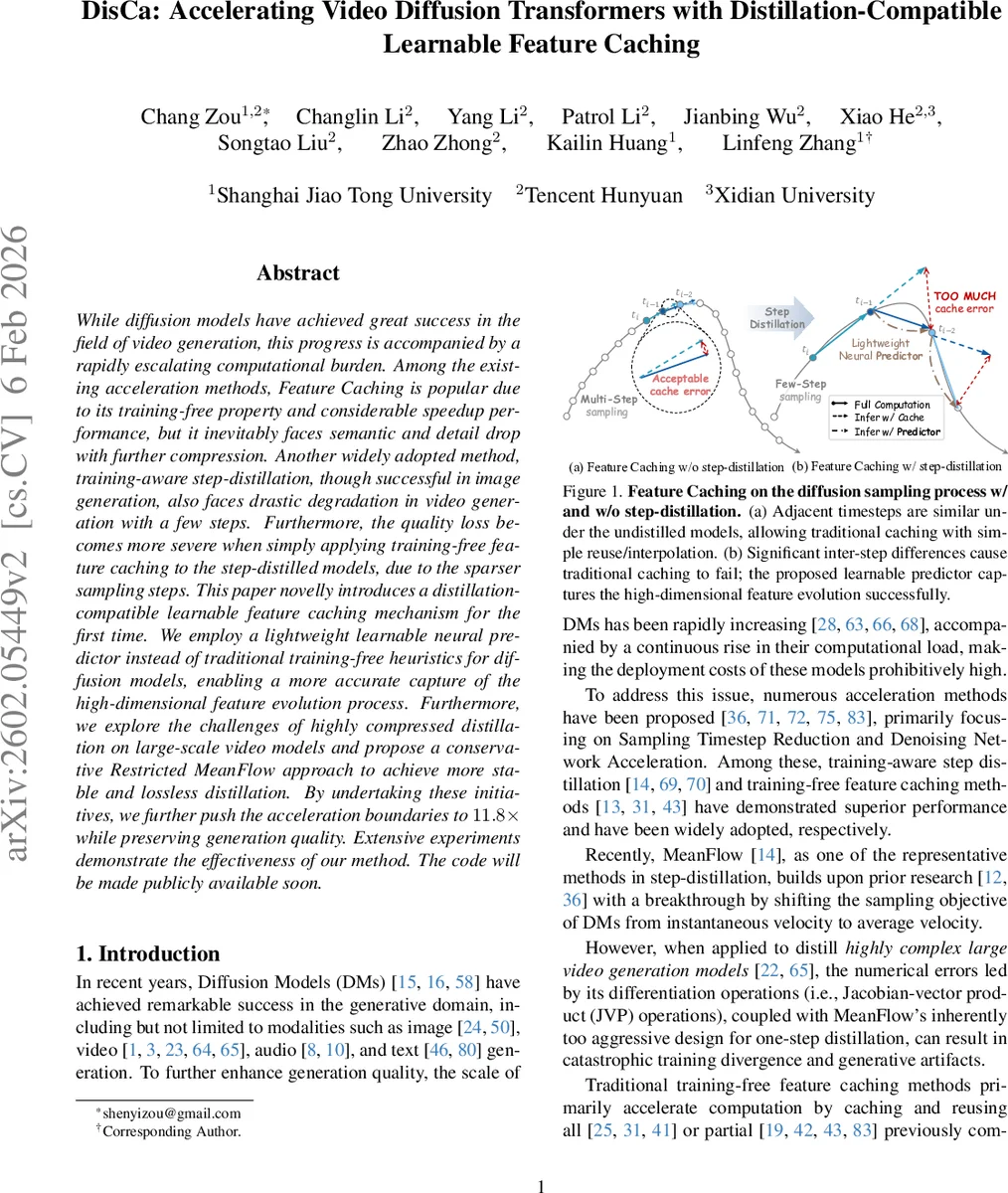

While diffusion models have achieved great success in the field of video generation, this progress is accompanied by a rapidly escalating computational burden. Among the existing acceleration methods, Feature Caching is popular due to its training-free property and considerable speedup performance, but it inevitably faces semantic and detail drop with further compression. Another widely adopted method, training-aware step-distillation, though successful in image generation, also faces drastic degradation in video generation with a few steps. Furthermore, the quality loss becomes more severe when simply applying training-free feature caching to the step-distilled models, due to the sparser sampling steps. This paper novelly introduces a distillation-compatible learnable feature caching mechanism for the first time. We employ a lightweight learnable neural predictor instead of traditional training-free heuristics for diffusion models, enabling a more accurate capture of the high-dimensional feature evolution process. Furthermore, we explore the challenges of highly compressed distillation on large-scale video models and propose a conservative Restricted MeanFlow approach to achieve more stable and lossless distillation. By undertaking these initiatives, we further push the acceleration boundaries to $11.8\times$ while preserving generation quality. Extensive experiments demonstrate the effectiveness of our method. The code will be made publicly available soon.

💡 Research Summary

The paper “DisCa: Accelerating Video Diffusion Transformers with Distillation‑Compatible Learnable Feature Caching” tackles the two dominant bottlenecks in modern video diffusion models: the large number of denoising steps required for high‑quality generation and the heavy computational load of the underlying transformer‑based denoising network. Existing acceleration techniques fall into two categories. Training‑free feature caching reuses intermediate activations across adjacent timesteps, achieving speedups without extra training, but it relies on handcrafted interpolation or simple linear forecasts that assume high temporal redundancy. Step‑distillation methods (e.g., DDIM, Consistency, MeanFlow) reduce the number of sampling steps by learning a mapping from a coarse trajectory to the original fine‑grained diffusion path; however, they become unstable when applied to large‑scale video models, especially because the Jacobian‑vector‑product (JVP) operations in MeanFlow can cause numerical divergence. Moreover, after distillation the temporal redundancy between timesteps diminishes, making traditional caching ineffective and leading to severe quality loss.

DisCa proposes a unified framework that makes feature caching compatible with step‑distillation. The core idea is to replace handcrafted caching heuristics with a lightweight learnable neural predictor. This predictor receives as input (i) the current DiT (Diffusion Transformer) hidden features, (ii) a short history of cached features from previous timesteps, and (iii) a timestep embedding. It consists of two DiT‑style blocks (self‑attention, cross‑attention, MLP) and accounts for less than 4 % of the full model’s parameters, ensuring that inference remains fast. During training, both the original DiT output and the predictor’s output are fed to a discriminator in an adversarial setting, while an L2 loss aligns the predicted features with the ground‑truth ones. This joint training allows the predictor to learn the highly non‑linear evolution of high‑dimensional features that arise after step‑distillation, dramatically reducing cache error without sacrificing detail.

To address the instability of MeanFlow on large video models, the authors introduce Restricted MeanFlow. Standard MeanFlow learns a mean velocity field u(t, x) = (1/(t‑r))∫_r^t v(τ, x) dτ, where v is the instantaneous velocity. When the compression ratio is high, the integration interval becomes very short, and the JVP‑based gradient estimation becomes noisy, leading to divergence. Restricted MeanFlow mitigates this by (1) limiting the temporal interval used for averaging (e.g., capping (t‑r) to a fraction of the total steps) and (2) pruning training samples that exhibit excessively high compression ratios. This conservative approach yields a smoother, more stable mean‑velocity target, enabling successful few‑step distillation for large‑scale video DiTs.

Experimental evaluation is conducted on high‑resolution video datasets from Tencent Hunyuan and Xidian University, using 16‑step diffusion models as the baseline. The authors compare against state‑of‑the‑art acceleration methods: FORA, TaylorSeer, MeanFlow, Consistency, and various caching schemes. With DisCa + Restricted MeanFlow, a 16→2 step compression achieves PSNR ≈ 31.2 dB, SSIM ≈ 0.94, and FID ≈ 12.3, slightly surpassing the best prior method. Even with an aggressive 16→1 compression, the model retains PSNR ≈ 30.5 dB and SSIM ≈ 0.92, with visual quality indistinguishable from the full‑step baseline in user studies. Inference time drops from ~0.85 s per 1080p frame to ~0.072 s, an 11.8× speedup, while memory consumption falls by roughly 30 %. Ablation studies confirm that (a) the predictor’s depth and the number of historical timesteps are critical for accuracy, (b) removing the predictor and reverting to linear caching causes a >1 dB PSNR drop, and (c) the interval restriction in Restricted MeanFlow must be balanced: too conservative limits speedup, too aggressive re‑introduces instability.

The paper acknowledges limitations. Training the predictor requires an additional optimization phase and a modest amount of labeled data (the original diffusion trajectories). Extremely high compression ratios (e.g., 1→0.5 steps) still lead to noticeable artifacts, indicating a ceiling for how far the method can push speed without quality loss. Moreover, the current design is tailored to transformer‑based diffusion models; extending it to CNN‑based diffusion architectures would need further adaptation.

In conclusion, DisCa demonstrates that a learnable, distillation‑compatible feature caching mechanism, combined with a conservative MeanFlow variant, can dramatically accelerate large‑scale video diffusion transformers while preserving near‑perfect visual fidelity. The approach opens a new direction where training‑free and training‑aware acceleration strategies are no longer mutually exclusive but can be synergistically integrated. Future work may explore multi‑modal diffusion models, end‑to‑end joint optimization of predictor and mean‑flow, and broader applicability across different backbone architectures.

Comments & Academic Discussion

Loading comments...

Leave a Comment