AgentXRay: White-Boxing Agentic Systems via Workflow Reconstruction

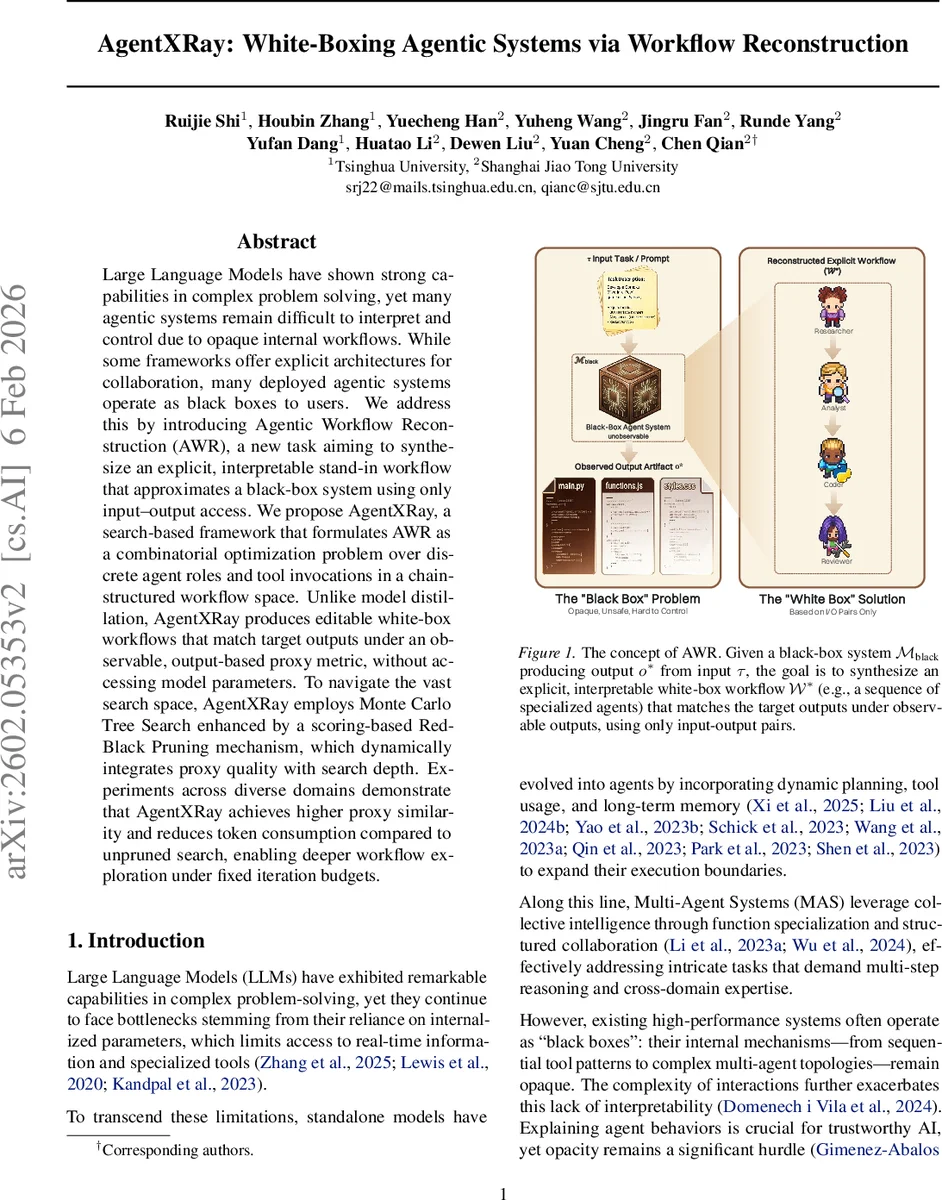

Large Language Models have shown strong capabilities in complex problem solving, yet many agentic systems remain difficult to interpret and control due to opaque internal workflows. While some frameworks offer explicit architectures for collaboration, many deployed agentic systems operate as black boxes to users. We address this by introducing Agentic Workflow Reconstruction (AWR), a new task aiming to synthesize an explicit, interpretable stand-in workflow that approximates a black-box system using only input–output access. We propose AgentXRay, a search-based framework that formulates AWR as a combinatorial optimization problem over discrete agent roles and tool invocations in a chain-structured workflow space. Unlike model distillation, AgentXRay produces editable white-box workflows that match target outputs under an observable, output-based proxy metric, without accessing model parameters. To navigate the vast search space, AgentXRay employs Monte Carlo Tree Search enhanced by a scoring-based Red-Black Pruning mechanism, which dynamically integrates proxy quality with search depth. Experiments across diverse domains demonstrate that AgentXRay achieves higher proxy similarity and reduces token consumption compared to unpruned search, enabling deeper workflow exploration under fixed iteration budgets.

💡 Research Summary

The paper tackles the problem of interpreting and controlling large-language-model (LLM) based agentic systems that are typically deployed as opaque black boxes. While such systems excel at complex problem solving, their internal structures (agent roles, tool-calling sequences, and coordination topologies) are hidden from users, limiting transparency, safety, and adaptability. To address this, the authors introduce a new task called Agentic Workflow Reconstruction (AWR): given only input-output pairs from a black-box system, synthesize an explicit, editable workflow that approximates the system's observable behavior.

The authors formalize AWR as a combinatorial optimization problem over a unified primitive space (Ω). Each primitive is a tuple ⟨role, base model, thought pattern, local toolset⟩, treating both pure reasoning agents and tool‑augmented agents as atomic actions. To keep the search tractable, they adopt a linearity hypothesis, restricting workflows to linear chains of length ≤ Lmax rather than arbitrary graphs. This reflects the fact that many agentic systems execute as sequential action‑observation loops, making a chain representation a reasonable surrogate for the observable execution trace.
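To make the primitive space and the chain restriction concrete, here is a minimal Python sketch. The field names follow the tuple ⟨role, base model, thought pattern, local toolset⟩ from the summary; the class name `Primitive`, the helper functions, and all example values are assumptions made for illustration and are not taken from the paper.

```python
from dataclasses import dataclass
from itertools import product
from typing import FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class Primitive:
    """One atomic action in Omega (hypothetical representation)."""
    role: str
    base_model: str
    thought_pattern: str
    toolset: FrozenSet[str] = frozenset()  # empty set = pure reasoning agent


# A workflow is a linear chain of primitives (the linearity hypothesis).
Workflow = Tuple[Primitive, ...]


def build_primitive_space(
    roles: Iterable[str],
    models: Iterable[str],
    patterns: Iterable[str],
    toolsets: Iterable[Iterable[str]],
) -> List[Primitive]:
    """Enumerate Omega as the Cartesian product of the four components."""
    return [
        Primitive(r, m, t, frozenset(ts))
        for r, m, t, ts in product(roles, models, patterns, toolsets)
    ]


def count_chains(num_primitives: int, l_max: int) -> int:
    """Count non-empty chains of length <= l_max, allowing repeated primitives."""
    return sum(num_primitives ** length for length in range(1, l_max + 1))


def extend(workflow: Workflow, primitive: Primitive, l_max: int) -> Workflow:
    """Append one primitive to a workflow prefix, enforcing the length cap."""
    if len(workflow) >= l_max:
        raise ValueError("workflow already has the maximum length")
    return workflow + (primitive,)


# Illustrative values only; none of these names come from the paper.
omega = build_primitive_space(
    roles=["planner", "coder"],
    models=["model-a"],
    patterns=["chain-of-thought", "direct"],
    toolsets=[(), ("python",)],
)
chain = extend(extend((), omega[0], l_max=3), omega[1], l_max=3)
```

Even this toy space of 8 primitives admits 584 chains of length at most 3, which illustrates why exhaustive enumeration does not scale and a guided search is used instead.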

To search this massive space, the authors propose AgentXRay, an MCTS‑based framework. In the tree, each node represents a partial workflow prefix; expanding a node adds a new primitive. Because the reward (similarity between the reconstructed output and the black‑box output) is only observable after a near‑complete workflow is executed, MCTS is well‑suited to handle delayed feedback. The core innovation is Red‑Black Pruning, a dynamic coloring scheme that guides exploration versus exploitation. Each node receives a composite score combining quality (average reward), depth, and width. At each iteration, the β‑quantile of scores is computed; nodes above the threshold are colored RED (favor depth refinement via UCB), while the rest are colored BLACK (favor breadth by creating new children). This mechanism prunes low‑potential branches early, concentrating computational budget on promising sub‑trees. Theoretical analysis shows that, under a pruning rate p or quantile β, the effective search volume contracts from Θ(b^{Lmax}) to Θ((b·(1‑p))^{Lmax}) or Θ((b·(1‑β))^{Lmax}), yielding exponential speed‑ups.
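The coloring step can be sketched as follows. The summary does not give the exact composite score, so the weighted sum in `composite_score` (including its weights and the choice to penalize width) is an assumption, as are the `Node` class and the interpolated quantile. Only the rule "score above the β-quantile means RED, otherwise BLACK" and the contraction formula come from the text.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Node:
    """A search-tree node holding a partial workflow prefix (hypothetical)."""
    name: str
    depth: int
    visits: int = 0
    total_reward: float = 0.0
    children: List["Node"] = field(default_factory=list)

    @property
    def quality(self) -> float:
        # Average reward observed through this node; 0 if never visited.
        return self.total_reward / self.visits if self.visits else 0.0


def composite_score(node: Node, l_max: int, branching: int,
                    w_q: float = 1.0, w_d: float = 0.5, w_w: float = 0.25) -> float:
    """ASSUMED form: reward quality and depth, penalize already-wide nodes."""
    depth_term = node.depth / l_max
    width_term = len(node.children) / branching
    return w_q * node.quality + w_d * depth_term - w_w * width_term


def quantile(values: List[float], beta: float) -> float:
    """Linearly interpolated beta-quantile of a non-empty list."""
    if not values:
        raise ValueError("need at least one value")
    ordered = sorted(values)
    pos = beta * (len(ordered) - 1)
    lo, hi = math.floor(pos), math.ceil(pos)
    frac = pos - lo
    return ordered[lo] * (1 - frac) + ordered[hi] * frac


def color_nodes(nodes: List[Node], beta: float,
                l_max: int, branching: int) -> Dict[str, str]:
    """RED if the score exceeds the beta-quantile threshold, else BLACK."""
    scores = {n.name: composite_score(n, l_max, branching) for n in nodes}
    threshold = quantile(list(scores.values()), beta)
    return {name: "RED" if s > threshold else "BLACK" for name, s in scores.items()}


def effective_volume(branching: int, l_max: int, keep_fraction: float) -> float:
    """(b * keep_fraction)^Lmax, with keep_fraction = 1 - p or 1 - beta."""
    return (branching * keep_fraction) ** l_max


nodes = [
    Node("a", depth=1, visits=4, total_reward=3.2),
    Node("b", depth=1, visits=4, total_reward=0.8),
    Node("c", depth=2, visits=2, total_reward=1.0),
    Node("d", depth=1),
]
colors = color_nodes(nodes, beta=0.5, l_max=4, branching=10)
```

With b = 10, Lmax = 4, and β = 0.5, `effective_volume` drops from 10,000 to 625, a small-scale instance of the contraction from Θ(b^{Lmax}) to Θ((b·(1‑β))^{Lmax}) described above.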

Experiments span five diverse domains: software development (ChatDev), data analysis (MetaGPT), education (TeachMaster), 3D modeling (ChatGPT), and scientific computing (Gemini). For each, the target system is treated as a strict black box, and only input-output pairs are collected. The reconstructed workflows are evaluated using Static Functional Equivalence (SFE), a task-specific similarity metric (e.g., AST matching for code, cosine similarity for text). AgentXRay outperforms several baselines, including supervised fine-tuning on I/O pairs, a strong single-model baseline (Claude Opus 4.5), the workflow search method AFlow, and a ReAct-style prompt chain. With all tools enabled, AgentXRay achieves an average SFE of 0.425 versus 0.403 for AFlow, and shows token-consumption reductions of 8–22%, indicating more efficient use of the LLM budget. Ablation studies support the importance of both tool primitives and Red-Black Pruning: removing tools or disabling pruning leads to noticeable drops in similarity and increased token usage.
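The summary names AST matching and cosine similarity as examples of SFE components but does not specify how they are computed. The sketch below shows one simple way such output-level scores could be implemented; the bag-of-words vectors, the node-type overlap ratio, and both function names are assumptions, not the paper's definitions.

```python
import ast
import math
import re
from collections import Counter


def cosine_text_similarity(a: str, b: str) -> float:
    """Cosine similarity between bag-of-words count vectors (assumed variant)."""
    va = Counter(re.findall(r"\w+", a.lower()))
    vb = Counter(re.findall(r"\w+", b.lower()))
    dot = sum(va[token] * vb[token] for token in va)
    norm = (math.sqrt(sum(v * v for v in va.values()))
            * math.sqrt(sum(v * v for v in vb.values())))
    return dot / norm if norm else 0.0


def ast_node_similarity(code_a: str, code_b: str) -> float:
    """Overlap of AST node-type multisets for two Python snippets.

    Formatting and comments do not affect the score because both inputs
    are parsed before comparison. Raises SyntaxError on unparsable code.
    """
    def node_types(code: str) -> Counter:
        return Counter(type(n).__name__ for n in ast.walk(ast.parse(code)))

    ca, cb = node_types(code_a), node_types(code_b)
    overlap = sum((ca & cb).values())  # multiset intersection
    total = sum((ca | cb).values())    # multiset union
    return overlap / total if total else 1.0


same_code = ast_node_similarity("x = 1 + 2", "x=1+2  # same statement")
different_code = ast_node_similarity("x = 1", "def f():\n    return 1")
same_text = cosine_text_similarity("the report is ready", "The report is ready.")
```

Both functions compare outputs only, which matches the black-box setting: a high score says the reconstructed workflow produced a similar artifact, not that it followed the same internal process.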

The paper’s contributions are threefold: (1) defining AWR as a novel task for black‑box to white‑box conversion, (2) introducing AgentXRay—a search framework that couples MCTS with a dynamic pruning strategy to navigate the combinatorial space of agentic primitives, and (3) providing extensive empirical evidence of its generality and efficiency across heterogeneous real‑world systems.

Limitations include reliance on the linearity assumption, which may miss richer graph‑structured collaborations; the need for manual definition of the primitive space, which could hinder scalability; and dependence on output‑level similarity metrics that do not guarantee functional equivalence of internal processes. Future work could extend the search to graph‑structured workflows, automate primitive discovery via meta‑learning, and integrate formal verification to certify functional equivalence.

In summary, AgentXRay demonstrates that it is possible to reconstruct interpretable, editable workflows that approximate the observable behavior of black-box LLM agents using only I/O access. By combining Monte Carlo Tree Search with a scoring-based pruning scheme, the method explores a massive combinatorial space efficiently, achieving higher proxy similarity than the baselines it is compared against and lower token cost than unpruned search. This advances the transparency, controllability, and adaptability of LLM-driven autonomous systems, opening new avenues for debugging, customization, and safe deployment.
