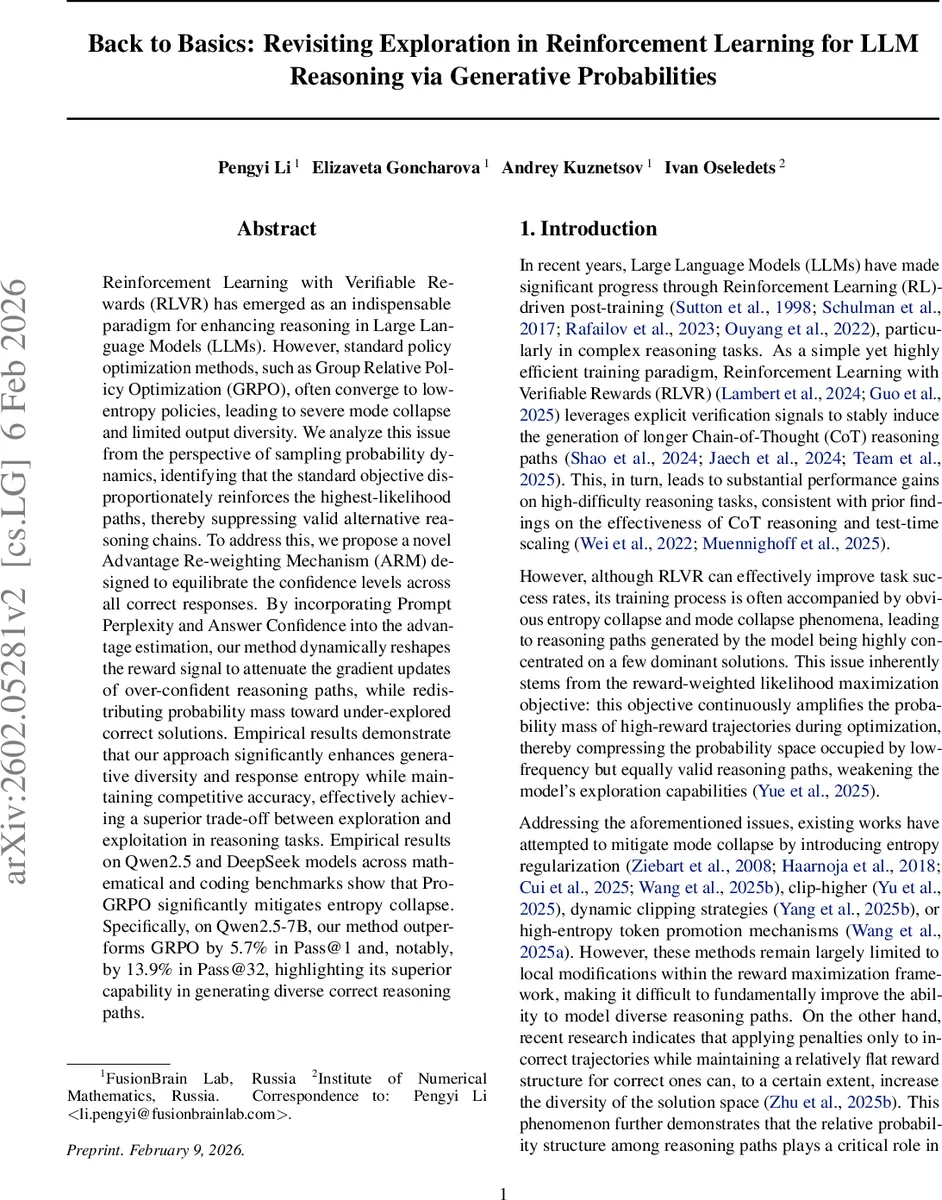

Back to Basics: Revisiting Exploration in Reinforcement Learning for LLM Reasoning via Generative Probabilities

Reinforcement Learning with Verifiable Rewards (RLVR) has emerged as an indispensable paradigm for enhancing reasoning in Large Language Models (LLMs). However, standard policy optimization methods, such as Group Relative Policy Optimization (GRPO), often converge to low-entropy policies, leading to severe mode collapse and limited output diversity. We analyze this issue from the perspective of sampling probability dynamics, identifying that the standard objective disproportionately reinforces the highest-likelihood paths, thereby suppressing valid alternative reasoning chains. To address this, we propose a novel Advantage Re-weighting Mechanism (ARM) designed to equilibrate the confidence levels across all correct responses. By incorporating Prompt Perplexity and Answer Confidence into the advantage estimation, our method dynamically reshapes the reward signal to attenuate the gradient updates of over-confident reasoning paths, while redistributing probability mass toward under-explored correct solutions. The approach enhances generative diversity and response entropy while maintaining competitive accuracy, achieving a better trade-off between exploration and exploitation in reasoning tasks. Empirical results on Qwen2.5 and DeepSeek models across mathematical and coding benchmarks show that the resulting method, ProGRPO, significantly mitigates entropy collapse. Specifically, on Qwen2.5-7B, ProGRPO outperforms GRPO by 5.7% in Pass@1 and, notably, by 13.9% in Pass@32, highlighting its superior capability in generating diverse correct reasoning paths.

💡 Research Summary

This paper addresses a critical shortcoming of reinforcement‑learning‑with‑verifiable‑rewards (RLVR) for large language model (LLM) reasoning: standard policy‑optimization methods such as Group Relative Policy Optimization (GRPO) tend to collapse the policy’s entropy, leading to mode collapse and a lack of diverse reasoning chains. The authors analyze the problem from a sampling‑probability dynamics perspective and show that the conventional objective disproportionately amplifies the highest‑likelihood trajectories, thereby starving lower‑probability but correct paths of gradient signal.

To remedy this, they propose Probabilistic Group Relative Policy Optimization (ProGRPO), which augments GRPO with an Advantage Re‑weighting Mechanism (ARM). ARM incorporates two model‑internal confidence signals: (1) Prompt Confidence cθ(q), computed as the exponential of the average log‑probability over a selected low‑probability token subset T_low of the prompt, and (2) Answer Confidence cθ(o|q), defined analogously for the generated answer. These scores capture the model’s uncertainty on the most informative tokens (approximately the 20 % of positions with highest predictive entropy). The re‑weighted advantage is then

$$\tilde{A}_i = A_i + \alpha \cdot \bigl(c_\theta(q) - c_\theta(o_i \mid q)\bigr)$$

where α is a tunable scaling factor. The correction term is positive when the model is less confident in the answer than in the prompt and negative when it is more confident. The update therefore attenuates the gradient contribution of over‑confident paths and redistributes probability mass toward under‑explored correct solutions.
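The re‑weighting rule can be illustrated with a minimal Python sketch. It is not the authors' implementation. The summary does not define the base advantage A_i, so the sketch assumes the usual GRPO form (rewards standardized within the group of responses to one prompt). The function names, the default `alpha=0.5`, and the example numbers are all hypothetical.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Assumed GRPO-style base advantage: standardize rewards within one group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def reweighted_advantages(rewards, prompt_conf, answer_confs, alpha=0.5):
    """Apply A_i + alpha * (c(q) - c(o_i|q)) to every response in the group.

    prompt_conf  -- c(q), one confidence score for the shared prompt
    answer_confs -- c(o_i|q), one confidence score per sampled response
    alpha        -- scaling factor (hypothetical default, not from the paper)
    """
    if len(rewards) != len(answer_confs):
        raise ValueError("need one answer confidence per reward")
    base = group_relative_advantages(rewards)
    return [a + alpha * (prompt_conf - c) for a, c in zip(base, answer_confs)]


# Two correct responses (reward 1) and two incorrect ones (reward 0).
# The first correct response is one the model is already very sure about.
adv = reweighted_advantages(
    rewards=[1.0, 1.0, 0.0, 0.0],
    prompt_conf=0.6,
    answer_confs=[0.9, 0.3, 0.5, 0.5],
)
for i, a in enumerate(adv):
    print(f"response {i}: re-weighted advantage = {a:.3f}")
```

In this toy group both correct responses start from the same base advantage, but the low‑confidence one ends up with the larger re‑weighted advantage, which is the redistribution effect described above.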

A second technical contribution is Low‑Probability Token Length Normalization. Full‑sequence length normalization dilutes the reward signal because most tokens are trivially high‑probability (often > 0.9). The authors therefore restrict length normalization to the low‑probability token set T_low, preserving meaningful variations in confidence that are more indicative of reasoning quality.
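The dilution effect can be seen in a small Python sketch, offered as an illustration rather than the paper's code. It computes a confidence score as the exponential of the mean token log‑probability, once over the full sequence and once over a low‑probability subset. Choosing the subset as the lowest‑probability 20% of tokens is an assumption made here for simplicity; the summary describes the selection only approximately. The function names and toy probabilities are hypothetical.

```python
import math


def full_sequence_confidence(token_logprobs):
    """exp(mean log-prob) over every token in the sequence."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def low_prob_confidence(token_logprobs, fraction=0.2):
    """exp(mean log-prob) over the lowest-probability `fraction` of tokens.

    Assumption: the low-probability set is chosen by sorting per-token
    log-probabilities; the paper's exact selection rule may differ.
    """
    if not token_logprobs:
        raise ValueError("need at least one token")
    k = max(1, math.ceil(fraction * len(token_logprobs)))
    lowest = sorted(token_logprobs)[:k]
    return math.exp(sum(lowest) / k)


# Two toy responses: eight near-certain tokens each, differing only in
# how confident the model is on the two "hard" tokens.
seq_a = [math.log(0.95)] * 8 + [math.log(0.5)] * 2
seq_b = [math.log(0.95)] * 8 + [math.log(0.2)] * 2

for name, seq in (("a", seq_a), ("b", seq_b)):
    print(
        f"response {name}: full = {full_sequence_confidence(seq):.3f}, "
        f"low-prob = {low_prob_confidence(seq):.3f}"
    )
```

Averaging over all tokens pulls both scores toward the high‑probability filler tokens and narrows the gap between the two responses, whereas averaging over the low‑probability subset keeps the difference on the hard tokens visible.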

The final objective combines the GRPO surrogate loss with the re‑weighted advantage and a widened clipping interval
