DRMOT: A Dataset and Framework for RGBD Referring Multi-Object Tracking

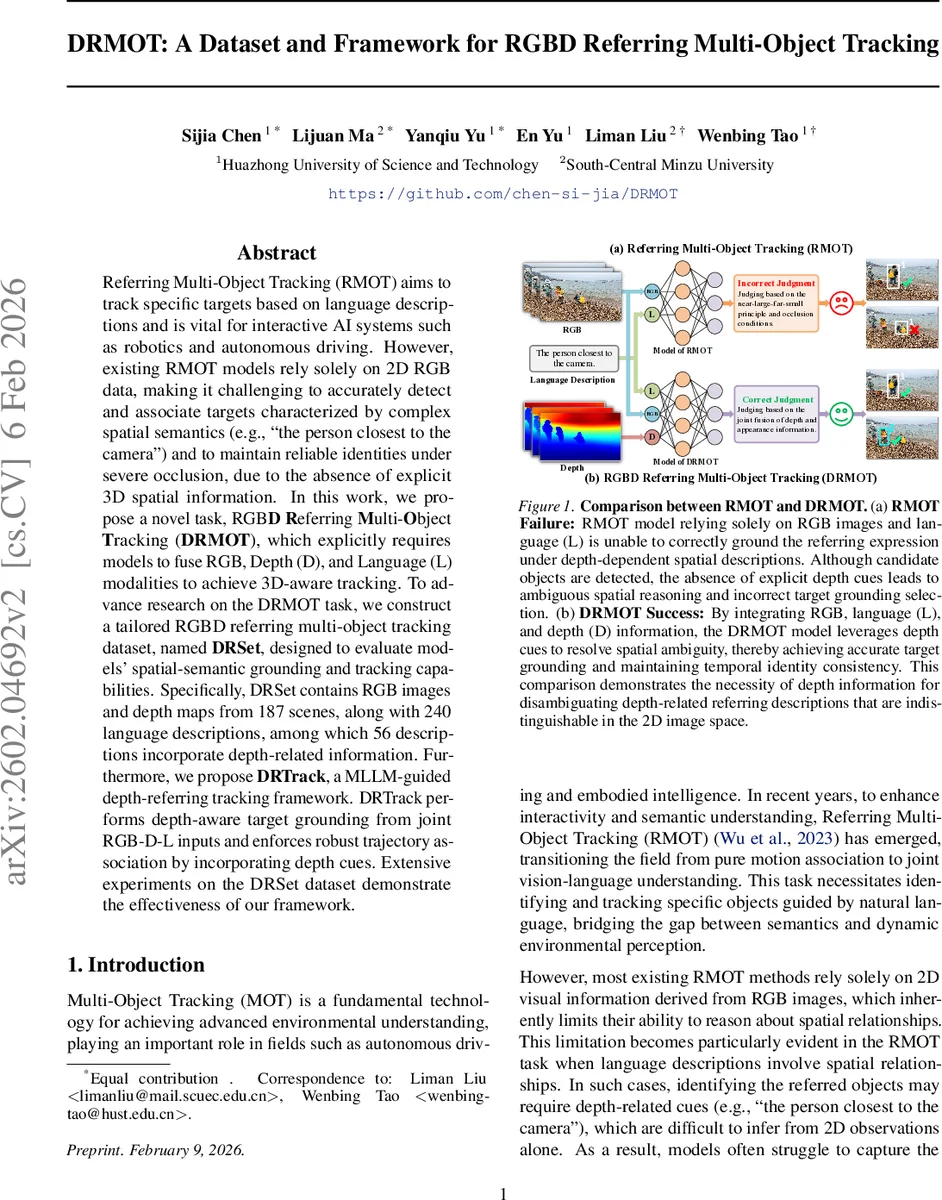

Referring Multi-Object Tracking (RMOT) aims to track specific targets based on language descriptions and is vital for interactive AI systems such as robotics and autonomous driving. However, existing RMOT models rely solely on 2D RGB data, making it challenging to accurately detect and associate targets characterized by complex spatial semantics (e.g., ``the person closest to the camera’’) and to maintain reliable identities under severe occlusion, due to the absence of explicit 3D spatial information. In this work, we propose a novel task, RGBD Referring Multi-Object Tracking (DRMOT), which explicitly requires models to fuse RGB, Depth (D), and Language (L) modalities to achieve 3D-aware tracking. To advance research on the DRMOT task, we construct a tailored RGBD referring multi-object tracking dataset, named DRSet, designed to evaluate models’ spatial-semantic grounding and tracking capabilities. Specifically, DRSet contains RGB images and depth maps from 187 scenes, along with 240 language descriptions, among which 56 descriptions incorporate depth-related information. Furthermore, we propose DRTrack, a MLLM-guided depth-referring tracking framework. DRTrack performs depth-aware target grounding from joint RGB-D-L inputs and enforces robust trajectory association by incorporating depth cues. Extensive experiments on the DRSet dataset demonstrate the effectiveness of our framework.

💡 Research Summary

The paper introduces a new task, RGB‑D Referring Multi‑Object Tracking (DRMOT), which extends the existing Referring Multi‑Object Tracking (RMOT) paradigm by requiring the fusion of three modalities: RGB images, depth maps, and natural‑language descriptions. The authors argue that RMOT models that rely solely on 2‑D visual cues struggle with spatial expressions that depend on depth (e.g., “the person closest to the camera”) and suffer from identity switches under severe occlusion because they lack explicit 3‑D information.

To enable systematic research on DRMOT, the authors construct a dedicated dataset named DRSet. Starting from the high‑quality ARKitTrack RGB‑D video collection, they manually annotate 187 diverse scenes with frame‑level bounding boxes and 240 language queries, of which 56 explicitly involve depth‑related spatial relations. The annotation pipeline consists of (1) building an attribute table that separates static attributes (color, type, etc.) from dynamic behaviors (walking, approaching), (2) selecting objects that satisfy these attributes across whole videos, (3) writing natural‑language descriptions and drawing precise bounding boxes frame‑by‑frame, and (4) a two‑person cross‑review to guarantee consistency of object IDs and semantic correctness. The dataset is split 60/40 into training (141 videos) and testing (99 videos) sets.

The core contribution is the DRTrack framework, a two‑stage, MLLM‑guided pipeline designed for depth‑aware grounding and robust trajectory association. In the first stage, a multimodal large language model (e.g., Qwen2.5‑VL or InternVL3) receives the synchronized RGB image, depth map, and language query as joint input and directly predicts the target’s bounding box for the current frame. Because the depth map is part of the input, the model can resolve ambiguities that arise in pure 2‑D grounding, correctly handling expressions such as “the nearest object” or “the object behind the table.”

The second stage employs a depth‑enhanced version of OC‑SORT (Online Clustering SORT). The bounding box output from the MLLM is combined with the average depth of the detected region to compute a depth‑weighted Intersection‑over‑Union (IoU) score. This depth‑aware IoU is fused with traditional appearance‑based matching scores to associate detections across frames. By penalizing matches with inconsistent depth, the tracker reduces identity switches, especially in scenarios with partial occlusion or drastic viewpoint changes.

Extensive experiments on DRSet demonstrate that DRTrack outperforms all RGB‑only RMOT baselines (TransRMO‑T, CR‑Tracker, ReaT‑rack, etc.) across standard MOT metrics. Overall, DRTrack achieves a MOTA of 71.2% and IDF1 of 68.5%, improving over baselines by 7.4 and 6.9 percentage points respectively. The gains are even more pronounced on the 56 depth‑related queries, where MOTA rises by 12.3 points and the number of ID switches drops by 35 %. When depth maps are omitted, DRTrack’s performance falls back to that of typical MLLM‑based RMOT, confirming that depth acts as a complementary, not mandatory, cue.

The authors acknowledge limitations: DRSet, while diverse, does not cover high‑speed vehicular or large‑scale urban scenes, and the reliance on large MLLMs incurs significant computational cost, hindering real‑time deployment. Future work is suggested in three directions: (1) designing lightweight multimodal attention mechanisms for efficient inference, (2) expanding the dataset to include more challenging environments, and (3) exploring pre‑training strategies that better align 3‑D geometry with language semantics.

In summary, the paper provides solid evidence that integrating depth information with language and RGB visual data substantially improves spatial‑semantic grounding and tracking stability in referring multi‑object tracking. DRSet and DRTrack constitute valuable resources and baselines for the emerging field of 3‑D‑aware language‑vision interaction, with potential impact on robotics, autonomous driving, and interactive AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment