Refer-Agent: A Collaborative Multi-Agent System with Reasoning and Reflection for Referring Video Object Segmentation

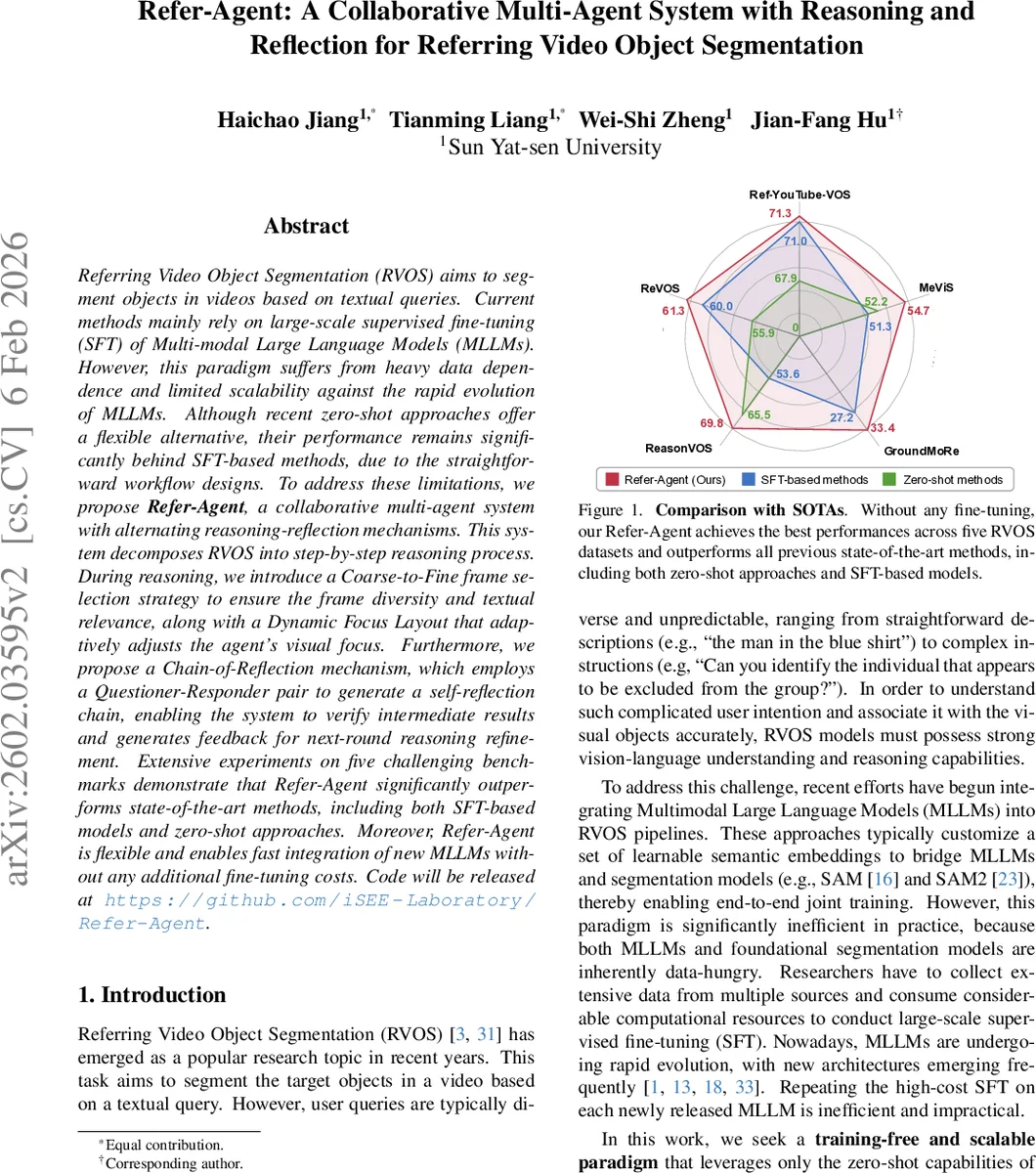

Referring Video Object Segmentation (RVOS) aims to segment objects in videos based on textual queries. Current methods mainly rely on large-scale supervised fine-tuning (SFT) of Multi-modal Large Language Models (MLLMs). However, this paradigm suffers from heavy data dependence and limited scalability against the rapid evolution of MLLMs. Although recent zero-shot approaches offer a flexible alternative, their performance remains significantly behind SFT-based methods, due to the straightforward workflow designs. To address these limitations, we propose \textbf{Refer-Agent}, a collaborative multi-agent system with alternating reasoning-reflection mechanisms. This system decomposes RVOS into step-by-step reasoning process. During reasoning, we introduce a Coarse-to-Fine frame selection strategy to ensure the frame diversity and textual relevance, along with a Dynamic Focus Layout that adaptively adjusts the agent’s visual focus. Furthermore, we propose a Chain-of-Reflection mechanism, which employs a Questioner-Responder pair to generate a self-reflection chain, enabling the system to verify intermediate results and generates feedback for next-round reasoning refinement. Extensive experiments on five challenging benchmarks demonstrate that Refer-Agent significantly outperforms state-of-the-art methods, including both SFT-based models and zero-shot approaches. Moreover, Refer-Agent is flexible and enables fast integration of new MLLMs without any additional fine-tuning costs. Code will be released at https://github.com/iSEE-Laboratory/Refer-Agent.

💡 Research Summary

The paper tackles Referring Video Object Segmentation (RVOS), the task of segmenting objects in a video based on a natural‑language query, by proposing a training‑free, multi‑agent framework called Refer‑Agent. Existing state‑of‑the‑art approaches either fine‑tune large multimodal language models (MLLMs) together with segmentation backbones (e.g., SAM, SAM2) or use zero‑shot pipelines that are too simplistic. Fine‑tuning is costly, data‑hungry, and cannot keep up with the rapid evolution of new MLLM architectures, while current zero‑shot methods suffer from error accumulation and hallucinations.

Refer‑Agent decomposes RVOS into four sequential reasoning stages: (i) Frame Selection, (ii) Intent Analysis, (iii) Object Grounding, and (iv) Mask Generation. The core innovations are three mechanisms that make each stage more robust and that enable a feedback loop to correct mistakes.

-

Coarse‑to‑Fine Frame Selection – First, a large set of candidate frames (N ≫ K) is chosen by measuring CLIP‑based similarity between the query and each frame. The video is divided into N segments, and the highest‑scoring frame per segment is kept, forming a coarse set. Then, the coarse set together with the query is fed to an MLLM, which assigns an “importance” score reflecting textual relevance. The final score for each frame is a weighted sum of the CLIP similarity and the MLLM score (α·S_CLIP + β·S_MLLM). The top‑K frames are selected. This two‑step strategy yields both diverse temporal coverage and strong query‑frame alignment, unlike prior single‑pass sampling.

-

Dynamic Focus Layout for Intent Analysis – The selected frames are assembled into a single image grid where the key frame (the one with highest importance) occupies a large, high‑resolution block, while the remaining context frames are compressed into smaller slots. The layout template adapts to the key‑frame index to keep a balanced grid. This design directs the MLLM’s attention to the most informative frame while preserving temporal context, mitigating the dispersion of attention that occurs when all frames are fed equally. The MLLM then reasons over the grid and the query, producing concise discriminative expressions for each target (e.g., “the man in a blue shirt”).

-

Object Grounding & Mask Generation – For each expression, the MLLM performs image grounding to output a bounding box on the key frame. These boxes, together with the key frame, are used as prompts for a video segmentation model such as SAM2, which generates precise masks and propagates them across the whole video.

-

Chain‑of‑Reflection (CoR) Mechanism – To counteract MLLM hallucinations and error propagation, Refer‑Agent introduces a two‑stage self‑reflection loop involving a Questioner‑Responder pair.

- Existence Reflection asks whether each predicted object truly exists in the video, allowing detection of missed or spurious detections.

- Consistency Reflection checks whether the generated textual descriptions are semantically consistent with the original query.

The answers are turned into feedback that guides the next round of frame selection and intent analysis. The loop repeats until both reflection stages pass or a maximum number of iterations is reached. This alternating reasoning‑reflection process dramatically improves robustness without any additional training.

Extensive experiments on five challenging RVOS benchmarks (Ref‑YouTube‑VOS, A2D‑Sentences, JHMDB‑Sentences, DAVIS‑Sentences, OVIS) show that Refer‑Agent outperforms both fine‑tuned SFT models (e.g., GLUS, RGA3) and recent zero‑shot approaches (AL‑Ref‑SAM2, CoT‑RVS) by a large margin in mean IoU and overall IoU. Moreover, the system can swap in newer MLLMs (GPT‑4, Claude, LLaVA) without any fine‑tuning, demonstrating true scalability. Ablation studies confirm that each component—Coarse‑to‑Fine selection, Dynamic Focus Layout, and Chain‑of‑Reflection—contributes significantly to the final performance.

In summary, Refer‑Agent presents a novel, training‑free paradigm for RVOS that combines (1) multi‑scale, similarity‑guided frame sampling, (2) attention‑focused intent reasoning, and (3) a question‑answer based self‑reflection loop to mitigate hallucinations. This design achieves state‑of‑the‑art zero‑shot performance, rivaling and surpassing models that require costly supervised fine‑tuning, and opens a path toward flexible, plug‑and‑play multimodal agents for a broad range of vision‑language tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment