MapFormer: Self-Supervised Learning of Cognitive Maps with Input-Dependent Positional Embeddings

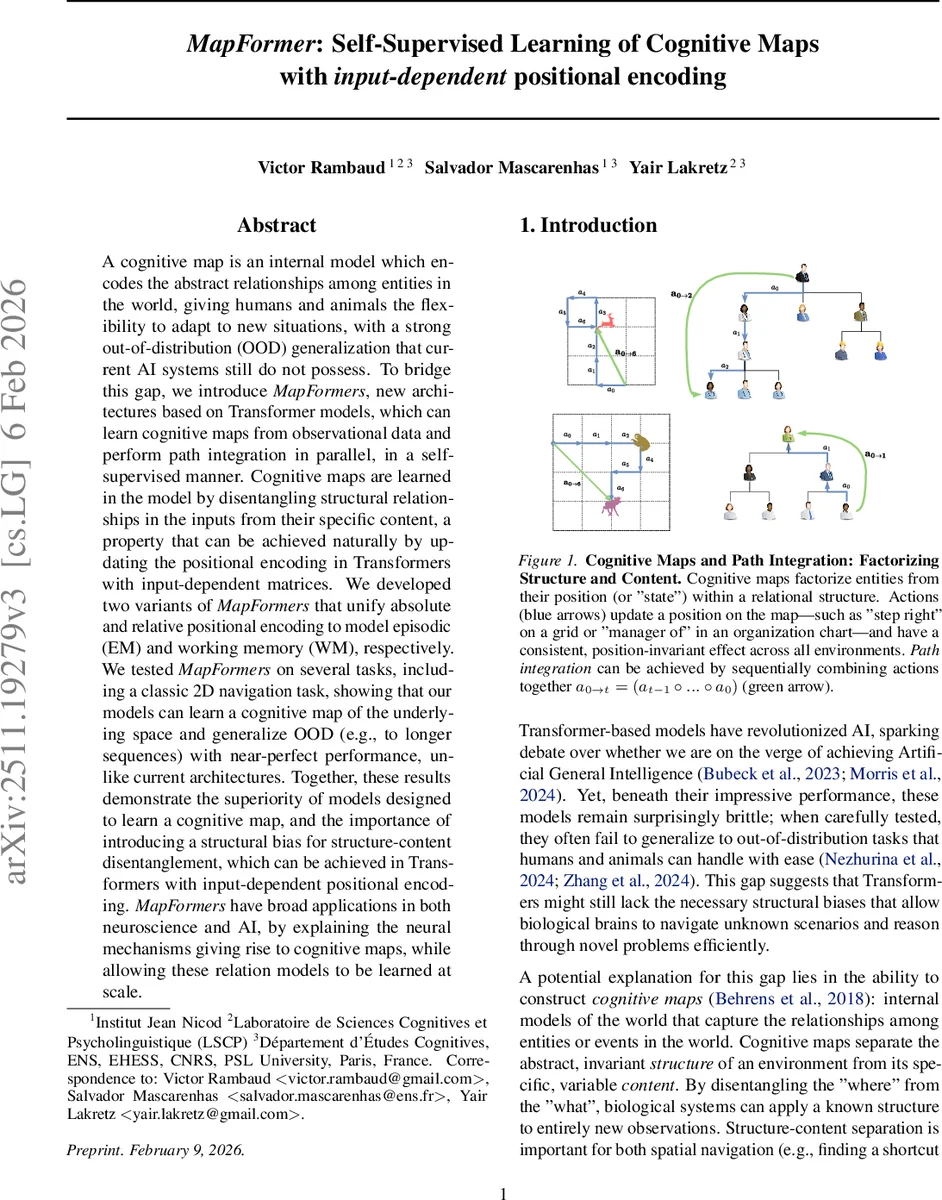

A cognitive map is an internal model which encodes the abstract relationships among entities in the world, giving humans and animals the flexibility to adapt to new situations, with a strong out-of-distribution (OOD) generalization that current AI systems still do not possess. To bridge this gap, we introduce MapFormers, new architectures based on Transformer models, which can learn cognitive maps from observational data and perform path integration in parallel, in a self-supervised manner. Cognitive maps are learned in the model by disentangling structural relationships in the inputs from their specific content, a property that can be achieved naturally by updating the positional encoding in Transformers with input-dependent matrices. We developed two variants of MapFormers that unify absolute and relative positional encoding to model episodic (EM) and working memory (WM), respectively. We tested MapFormers on several tasks, including a classic 2D navigation task, showing that our models can learn a cognitive map of the underlying space and generalize OOD (e.g., to longer sequences) with near-perfect performance, unlike current architectures. Together, these results demonstrate the superiority of models designed to learn a cognitive map, and the importance of introducing a structural bias for structure-content disentanglement, which can be achieved in Transformers with input-dependent positional encoding. MapFormers have broad applications in both neuroscience and AI, by explaining the neural mechanisms giving rise to cognitive maps, while allowing these relation models to be learned at scale.

💡 Research Summary

MapFormer introduces a novel class of Transformer architectures that learn cognitive maps through input‑dependent positional embeddings. The authors argue that the ability of biological agents to separate “where” (structure) from “what” (content) underlies their remarkable out‑of‑distribution (OOD) generalization, a capability lacking in current AI systems. To emulate this, MapFormer replaces the static positional encodings of standard Transformers with matrices that are generated dynamically from the input tokens themselves. These matrices rotate the query and key vectors, effectively encoding the agent’s internal position on a learned relational graph rather than a simple ordinal index.

Two variants are presented: Map EM (Episodic Memory) uses absolute positional embeddings derived from a cumulative sum of rotation angles, allowing the model to maintain a fixed coordinate for each experience; Map WM (Working Memory) adopts a relative rotary encoding (RoPE) where the rotation angle is a learnable parameter, mirroring the implicit structure representation found in working‑memory models. Both variants compute path integration in parallel by summing low‑dimensional angular velocities (ω·Δ) across time, then applying a single exponential map to obtain the final rotation matrix Rθ. This Lie‑group formulation (focused on the compact group SO(2)) eliminates the sequential matrix multiplications required by earlier RNN‑based approaches such as the Tolman‑Eichenbaum Machine, enabling efficient GPU‑parallel processing.

The paper grounds the method in Lie‑algebra theory, showing that actions correspond to generators in the algebra and that the exponential map yields the group element representing the integrated action sequence. By learning block‑diagonal rotation matrices at multiple scales, the model adapts to different grid sizes while preserving commutativity, which guarantees linear path integration.

Empirical evaluation focuses on two benchmark tasks. The Selective Copy task tests whether a model can copy a sequence while ignoring distractor blank tokens; MapFormer achieves >99 % accuracy, whereas standard RoPE Transformers fail to filter the blanks. The Ced‑Navigation task presents a 2‑D grid world where actions (↑,↓,←,→) and observations (objects) alternate. The model must predict the observation when revisiting a location. MapFormer learns the underlying grid topology independent of the random object assignments and generalizes to sequences twice as long and grids twice as large as seen during training, with near‑perfect prediction accuracy. Comparisons against the Tolman‑Eichenbaum Machine (TEM‑t), conventional Transformers, and recent selective State‑Space Models demonstrate that MapFormer consistently outperforms them in length‑generalization and structural learning.

Key contributions include: (1) a structural bias for Transformers that disentangles relational structure from token content via input‑dependent positional embeddings; (2) unified treatment of episodic and working memory through absolute and relative positional schemes; (3) a Lie‑group‑based, parallelizable path‑integration mechanism that scales beyond sequential RNN models; and (4) empirical evidence of strong OOD generalization on both synthetic and navigation tasks.

Limitations are acknowledged: experiments are confined to 2‑D grids with a simple set of cardinal actions, leaving open the question of scalability to non‑abelian groups (e.g., SO(3) for 3‑D rotations) or more complex relational graphs. The input‑dependent matrices introduce additional parameters, though the overall training time remains comparable to standard Transformers due to parallelization. Future work is suggested to extend the framework to richer relational structures, multimodal cognitive maps, and direct comparisons with neurophysiological data from hippocampal and entorhinal circuits.

Comments & Academic Discussion

Loading comments...

Leave a Comment