CompEvent: Complex-valued Event-RGB Fusion for Low-light Video Enhancement and Deblurring

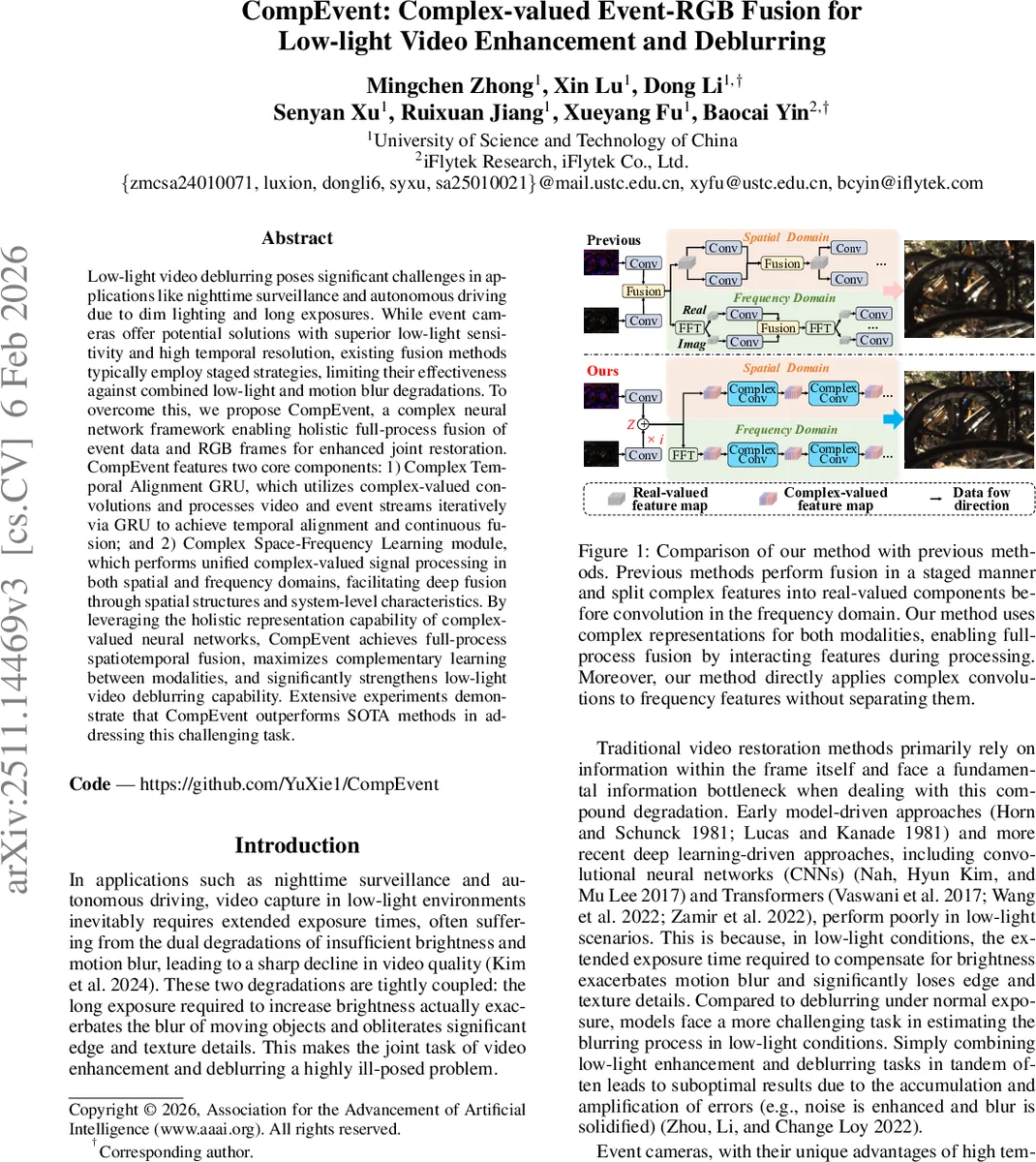

Low-light video deblurring poses significant challenges in applications like nighttime surveillance and autonomous driving due to dim lighting and long exposures. While event cameras offer potential solutions with superior low-light sensitivity and high temporal resolution, existing fusion methods typically employ staged strategies, limiting their effectiveness against combined low-light and motion blur degradations. To overcome this, we propose CompEvent, a complex neural network framework enabling holistic full-process fusion of event data and RGB frames for enhanced joint restoration. CompEvent features two core components: 1) Complex Temporal Alignment GRU, which utilizes complex-valued convolutions and processes video and event streams iteratively via GRU to achieve temporal alignment and continuous fusion; and 2) Complex Space-Frequency Learning module, which performs unified complex-valued signal processing in both spatial and frequency domains, facilitating deep fusion through spatial structures and system-level characteristics. By leveraging the holistic representation capability of complex-valued neural networks, CompEvent achieves full-process spatiotemporal fusion, maximizes complementary learning between modalities, and significantly strengthens low-light video deblurring capability. Extensive experiments demonstrate that CompEvent outperforms SOTA methods in addressing this challenging task.

💡 Research Summary

CompEvent addresses the challenging problem of simultaneously enhancing and deblurring low‑light video, where long exposure times required for sufficient illumination inevitably introduce severe motion blur. Existing approaches either rely solely on RGB frames or fuse event data and RGB in a staged manner, limiting cross‑modal interaction to a few discrete points and thus failing to fully exploit the complementary nature of the two modalities. To overcome these limitations, the authors propose a novel complex‑valued neural network framework that treats the RGB frame as the real part and the event stream as the imaginary part of a complex tensor, enabling continuous, “full‑process” fusion throughout the entire network.

The architecture consists of two core components. The first, Complex Temporal Alignment GRU (CT‑A‑GRU), extends the conventional gated recurrent unit to the complex domain. Input features Zₜ = F_R(Iₜ) + i·F_I(Eₜ) are processed by complex convolutions to compute reset and update gates (Rₜ, Uₜ) and a candidate hidden state. Because the gates are driven by complex convolutions, they inherently combine RGB and event information, allowing the network to suppress outdated blurry RGB cues when fresh event motion cues are available. A bidirectional design (forward and backward passes) further enriches each frame’s representation with both past and future context, which is crucial under severe noise and blur.

The second component, Complex Space‑Frequency Learning (CSFL), acts as the backbone for final restoration. After temporal alignment, three consecutive aligned features (H′ₜ₋₁, H′ₜ, H′ₜ₊₁) are concatenated and fed into a U‑Net‑style hierarchy that contains two parallel branches: a spatial branch employing complex convolutions to refine texture and color, and a frequency branch that applies FFT followed by complex convolutions directly on the complex spectrum. By processing the Fourier domain in the complex space, the method avoids splitting real and imaginary components, preserving phase information and allowing simultaneous correction of low‑frequency illumination deficits (caused by low light) and high‑frequency attenuation (caused by motion blur). The CSFL outputs a residual map which is added to the original RGB frame, yielding the final bright and sharp video.

Extensive experiments on benchmark datasets such as RELED and ED‑TF demonstrate that CompEvent outperforms state‑of‑the‑art methods by 1.5–2.0 dB in PSNR, 0.02–0.04 in LPIPS, and shows consistent SSIM gains. Qualitative results reveal markedly reduced noise, restored edge sharpness, and faithful color reproduction. Moreover, the complex‑valued convolutions reduce the total parameter count by roughly 50 % compared with equivalent real‑valued networks, highlighting the efficiency of the approach.

In summary, CompEvent introduces a principled complex‑valued fusion paradigm that integrates event‑based high‑temporal‑resolution cues with RGB texture and color information at every processing stage. The combination of a complex GRU for robust temporal alignment and a dual spatial‑frequency complex backbone for deep restoration enables superior performance on the notoriously ill‑posed joint low‑light enhancement and deblurring task, and opens new avenues for complex‑domain multimodal learning in vision.

Comments & Academic Discussion

Loading comments...

Leave a Comment