AR as an Evaluation Playground: Bridging Metrics and Visual Perception of Computer Vision Models

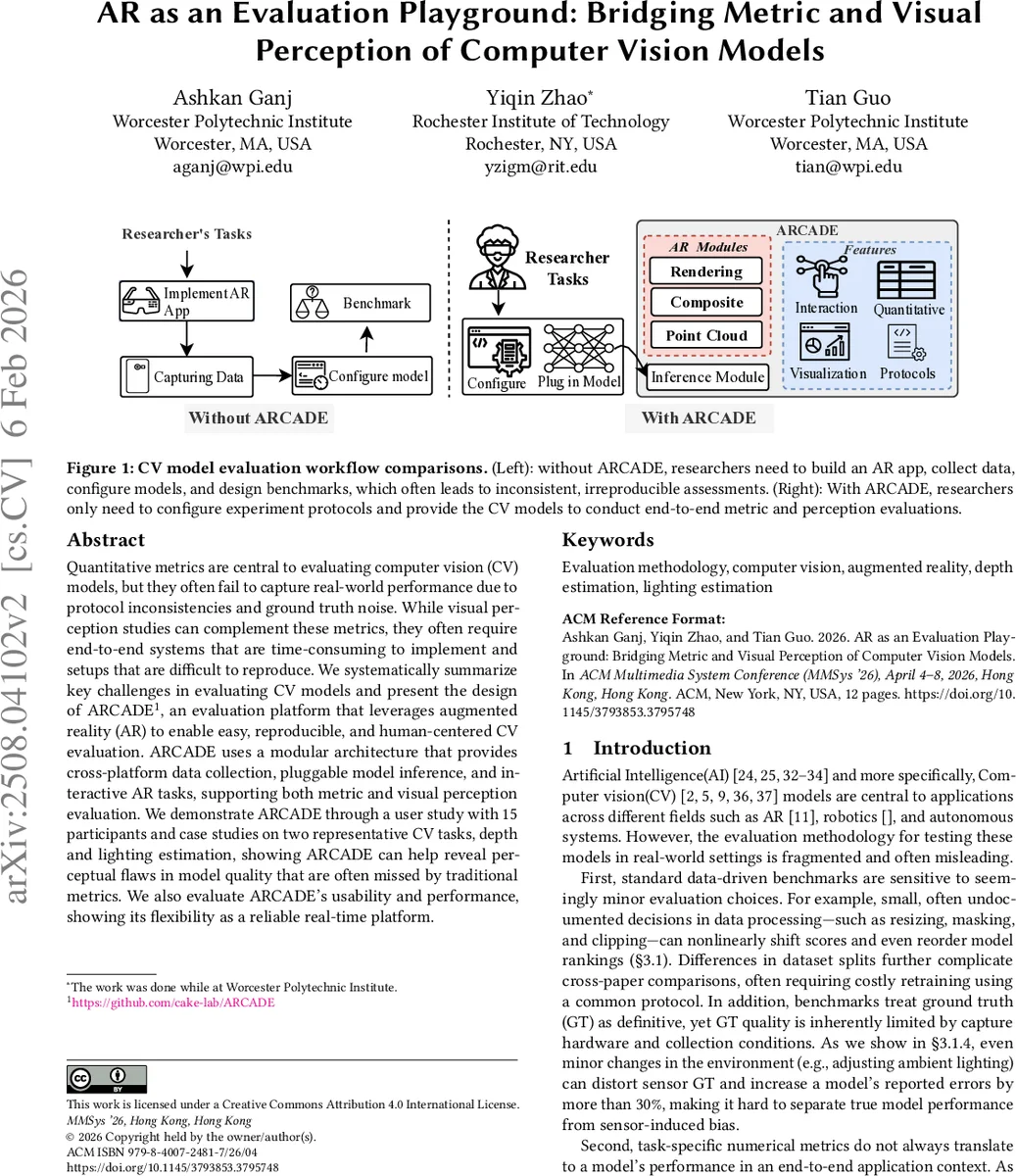

Quantitative metrics are central to evaluating computer vision (CV) models, but they often fail to capture real-world performance due to protocol inconsistencies and ground-truth noise. While visual perception studies can complement these metrics, they often require end-to-end systems that are time-consuming to implement and setups that are difficult to reproduce. We systematically summarize key challenges in evaluating CV models and present the design of ARCADE, an evaluation platform that leverages augmented reality (AR) to enable easy, reproducible, and human-centered CV evaluation. ARCADE uses a modular architecture that provides cross-platform data collection, pluggable model inference, and interactive AR tasks, supporting both metric and visual perception evaluation. We demonstrate ARCADE through a user study with 15 participants and case studies on two representative CV tasks, depth and lighting estimation, showing that ARCADE can reveal perceptual flaws in model quality that are often missed by traditional metrics. We also evaluate ARCADE’s usability and performance, showing its flexibility as a reliable real-time platform.

💡 Research Summary

The paper begins by diagnosing fundamental shortcomings of current computer‑vision (CV) model evaluation practices. It shows that seemingly minor preprocessing choices (e.g., image resizing, depth‑range clipping, masking), inconsistent dataset splits, and sensor‑dependent ground‑truth noise can shift standard metrics such as RMSE, AbsRel, or δ₁ by several percent and even reorder model rankings. Moreover, depth models output in different spaces (metric depth, relative depth, disparity) require global scale‑and‑shift alignment, which is highly sensitive to outliers and can further distort metric‑based comparisons. These observations highlight a “metric‑perception gap”: high scores on benchmark suites do not guarantee satisfactory performance when models are deployed in real‑world AR applications.

To bridge this gap, the authors introduce ARCADE, an Augmented‑Reality‑based Evaluation Playground. ARCADE’s modular architecture comprises four components: (1) cross‑platform data capture (live streaming and replay), (2) a plug‑and‑play inference interface exposed via REST/Docker, (3) interactive AR tasks (virtual object placement, occlusion checks, point‑cloud visualization), and (4) visualization tools that overlay predictions with ground truth and generate error heatmaps. The system runs on a mobile device (iPhone 14 Pro), an edge server, and a web client, achieving average render‑composite latencies of 5.2 ms at 640×480 and 20 ms at 1920×1080, with interaction latencies between 7.5 ms and 18 ms, confirming real‑time feasibility.

The authors evaluate ARCADE in three ways. First, an end‑to‑end performance test demonstrates that the platform can sustain real‑time AR workflows. Second, a user study with 15 CV/ML researchers reveals high overall satisfaction (4.20/5), strong perceived effectiveness for judging depth‑model suitability (4.67/5) and for discovering failure cases quickly (4.53/5), and a notable reduction in engineering effort (4.33/5). Third, case studies on depth estimation and lighting estimation expose perceptual flaws that standard metrics miss. For depth, models such as ZoeDepth and DepthAnything achieve similar RMSE/AbsRel scores on NYU Depth V2, yet in AR scenes they produce visible depth discontinuities, misaligned occlusions, and unstable geometry when virtual objects are placed. For lighting, a model with low LPIPS still renders unrealistic reflections and shadows in AR, which participants immediately notice.

Finally, the authors open‑source the ARCADE framework and release a dataset of over 2,000 AR frames captured with synchronized RGB, LiDAR depth, camera intrinsics/extrinsics, and virtual‑object metadata. This contribution enables reproducible, perception‑centered benchmarking across models, devices, and application contexts. The paper concludes that AR‑driven interactive evaluation is a necessary complement to traditional numerical metrics, providing a more faithful assessment of CV models’ real‑world utility, especially for AR/VR and robotics deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment