AnCoder: Anchored Code Generation via Discrete Diffusion Models

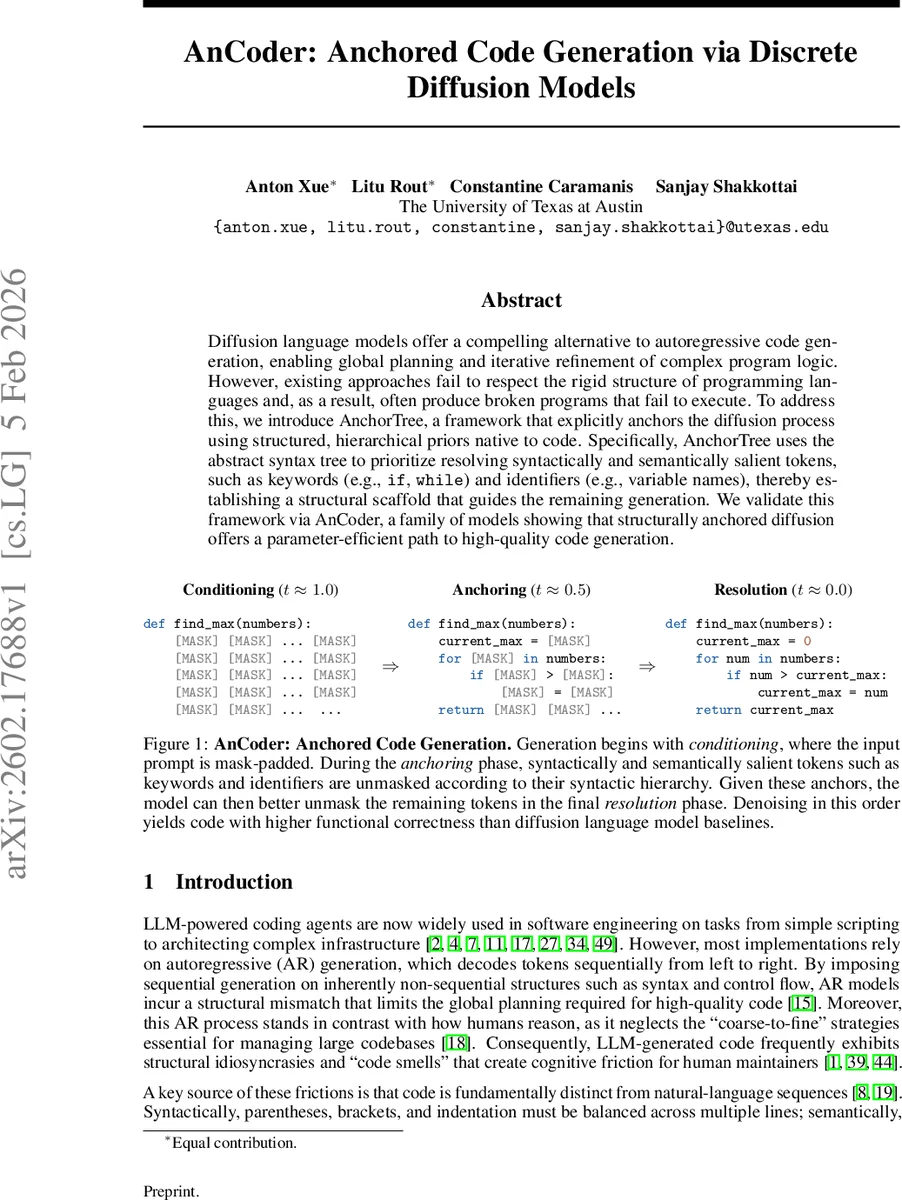

Diffusion language models offer a compelling alternative to autoregressive code generation, enabling global planning and iterative refinement of complex program logic. However, existing approaches fail to respect the rigid structure of programming languages and, as a result, often produce broken programs that fail to execute. To address this, we introduce AnchorTree, a framework that explicitly anchors the diffusion process using structured, hierarchical priors native to code. Specifically, AnchorTree uses the abstract syntax tree to prioritize resolving syntactically and semantically salient tokens, such as keywords (e.g., if, while) and identifiers (e.g., variable names), thereby establishing a structural scaffold that guides the remaining generation. We validate this framework via AnCoder, a family of models showing that structurally anchored diffusion offers a parameter-efficient path to high-quality code generation.

💡 Research Summary

The paper introduces AnCoder, a family of diffusion‑based code generation models that incorporate hierarchical anchoring derived from the abstract syntax tree (AST) of programs. Traditional diffusion language models (DLMs) generate code by iteratively denoising a fully masked token sequence, but they treat every token independently during the forward noising process. As a result, important syntactic tokens (e.g., keywords, delimiters) and semantic tokens (e.g., variable names) often remain masked until late in the denoising trajectory, depriving the model of crucial conditioning information. This leads to a high rate of syntactic errors and runtime failures, especially for longer or more complex programs.

To address this, the authors propose AnchorTree, a soft‑anchoring framework that leverages the AST’s hierarchical structure. During preprocessing, each token is mapped to its corresponding AST node, and a weight is assigned based on the node’s depth: tokens closer to the root (such as control‑flow keywords and top‑level identifiers) receive higher weights, while deeper expression tokens receive lower weights. This creates a graded importance spectrum rather than a binary “anchor vs. non‑anchor” labeling used in prior anchored diffusion work.

The model architecture consists of two cooperating networks: an anchor network (y_{\theta}^{A}) and a denoiser network (x_{\theta}^{D}). At any diffusion step (t), the anchor network first predicts the distribution over high‑weight tokens, effectively “unmasking” the structural scaffold of the program. The denoiser then conditions on these scaffold predictions to reconstruct the remaining tokens. Training optimizes an Anchored Negative ELBO (ANELBO), which combines the standard diffusion loss with an auxiliary anchor loss weighted by a hyper‑parameter (\mu). This auxiliary term explicitly encourages early correct prediction of anchor tokens.

Experiments are conducted on two widely used code generation benchmarks: HumanEval and MBPP. The authors train two model sizes (AnCoder‑Base and AnCoder‑Large) and compare against several baselines, including recent diffusion models (DiffuCoder, Gemini Diffusion) and strong autoregressive coders. Results show a consistent improvement in functional correctness, with gains of roughly 10–15 percentage points over the best diffusion baselines. Ablation studies demonstrate that (1) soft anchoring outperforms hard anchoring, (2) depth‑based weighting yields better generalization than simple frequency‑based heuristics, and (3) the two‑stage architecture provides a clear advantage over a single‑decoder design.

The paper also discusses limitations. AST parsing introduces additional preprocessing overhead and can propagate parsing errors into the training pipeline. The current work focuses exclusively on Python, leaving cross‑language applicability an open question. Moreover, diffusion models remain computationally heavier than autoregressive counterparts, which may hinder real‑time coding assistance without further optimization.

In conclusion, AnCoder shows that embedding language‑agnostic structural priors—specifically, the hierarchical information inherent in ASTs—into diffusion models can dramatically improve code generation quality. By soft‑anchoring tokens according to their syntactic importance, the model achieves a coarse‑to‑fine generation process that mirrors human programming practices. Future directions include extending the approach to multiple programming languages, exploring parser‑free hierarchical representations, and integrating test‑time constraint solvers for even higher reliability.

Comments & Academic Discussion

Loading comments...

Leave a Comment