DataCrumb: A Physical Probe for Reflections on Background Web Tracking

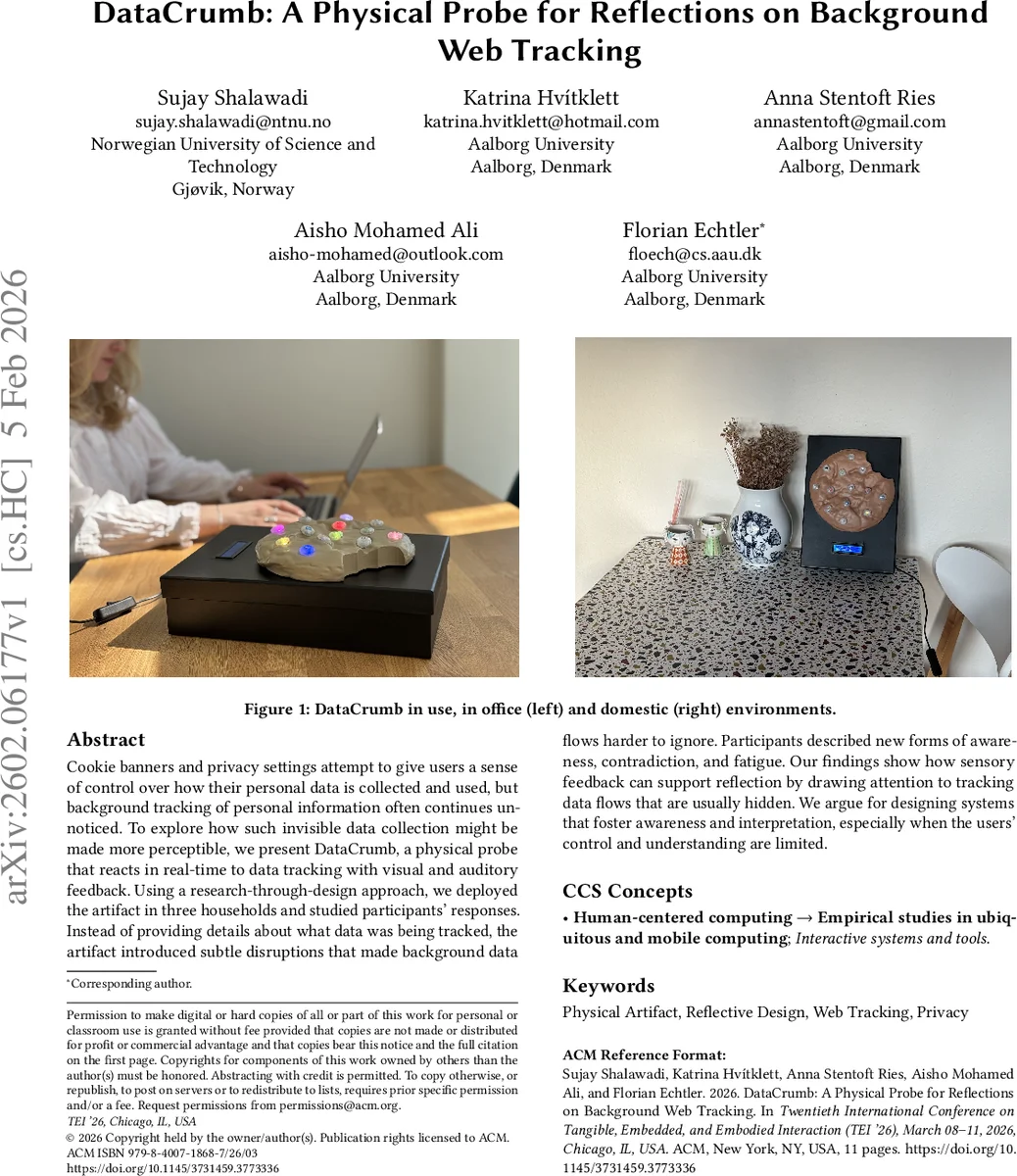

Cookie banners and privacy settings attempt to give users a sense of control over how their personal data is collected and used, but background tracking of personal information often continues unnoticed. To explore how such invisible data collection might be made more perceptible, we present DataCrumb, a physical probe that reacts in real time to data tracking with visual and auditory feedback. Using a research-through-design approach, we deployed the artifact in three households and studied participants’ responses. Instead of providing details about what data was being tracked, the artifact introduced subtle disruptions that made background data flows harder to ignore. Participants described new forms of awareness, contradiction, and fatigue. Our findings show how sensory feedback can support reflection by drawing attention to tracking data flows that are usually hidden. We argue for designing systems that foster awareness and interpretation, especially when users’ control and understanding are limited.

💡 Research Summary

DataCrumb is a tangible, network-level probe that makes invisible web tracking perceptible through real-time visual and auditory cues. The authors adopt a Research-through-Design (RtD) methodology, beginning with five semi-structured interviews (participants aged 25-33) that surfaced two key insights: users feel they are taking privacy actions (rejecting cookies, using ad-blockers) but lack visibility into whether tracking actually stops, and they experience fatigue and resignation from repeated consent dialogs. These insights informed the design of a physical artifact shaped like a cookie, equipped with three output modalities: 13 LEDs representing popular tracking domains, a buzzer that emits short tones for single blocks and longer tones for successive blocks, and a small screen displaying cumulative request and block counts. The feedback is deliberately ambiguous, encouraging users to interpret the signals rather than providing explicit explanations.
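The mapping from tracking events to the three outputs is described only qualitatively. As a minimal sketch of how it could work, the Python below assumes concrete values the summary does not give: the tone durations, a two-second window within which consecutive blocks count as "successive", and subdomain matching for choosing an LED. `FeedbackState`, `on_request`, and the `.example` domains are hypothetical names for illustration, not the authors' code.

```python
from __future__ import annotations

from dataclasses import dataclass

# Assumed values: the summary only distinguishes "short" from "longer" tones.
SHORT_TONE_S = 0.05         # tone for an isolated block
LONG_TONE_S = 0.3           # tone when blocks arrive in quick succession
SUCCESSIVE_WINDOW_S = 2.0   # hypothetical gap that still counts as "successive"


@dataclass
class FeedbackState:
    """Hypothetical model of the three output channels: LEDs, buzzer, display."""
    led_domains: list[str]           # tracking domains, one LED each
    requests: int = 0                # cumulative DNS requests shown on the screen
    blocks: int = 0                  # cumulative blocked requests shown on the screen
    last_block_time: float | None = None

    def __post_init__(self) -> None:
        if len(self.led_domains) > 13:
            raise ValueError("the probe has only 13 LEDs")

    def led_for(self, domain: str) -> int | None:
        """Return the LED index for a domain or any of its subdomains, else None."""
        for index, tracked in enumerate(self.led_domains):
            if domain == tracked or domain.endswith("." + tracked):
                return index
        return None

    def on_request(self, domain: str, blocked: bool, timestamp: float) -> dict:
        """Update the counters and return the cues to emit for one DNS request."""
        self.requests += 1
        tone_s = None
        if blocked:
            self.blocks += 1
            successive = (
                self.last_block_time is not None
                and timestamp - self.last_block_time <= SUCCESSIVE_WINDOW_S
            )
            tone_s = LONG_TONE_S if successive else SHORT_TONE_S
            self.last_block_time = timestamp
        return {
            "led": self.led_for(domain),   # which LED to flash, if any
            "tone_s": tone_s,              # buzzer duration in seconds, if any
            "display": f"req {self.requests} / blk {self.blocks}",
        }
```

Keeping the mapping free of hardware calls makes it testable on any machine; on the Pi, the returned cues could be handed to GPIO drivers such as gpiozero's `LED.blink()` and `Buzzer.beep()`.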

Technically, DataCrumb runs on a Raspberry Pi 4 with Pi-hole acting as a local DNS sinkhole. A custom Python script parses DNS logs in real time, matches requests against a known-tracking list, and triggers the GPIO-controlled LEDs, buzzer, and display. The system runs autonomously throughout each three-day deployment and requires no user interaction.
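The log-parsing step can be sketched as follows. This is an illustration under stated assumptions, not the authors' script: it assumes Pi-hole writes dnsmasq-style lines such as `query[A] <domain> from <client>` and `gravity blocked <domain> is 0.0.0.0` (the exact format may differ across Pi-hole versions), and it treats a domain as tracked if the domain itself or any parent domain appears in the tracking list. `DnsEvent`, `parse_events`, `is_tracked`, and `follow` are hypothetical names.

```python
from __future__ import annotations

import os
import re
import time
from typing import Iterable, Iterator, NamedTuple

# Assumed dnsmasq-style Pi-hole log lines, e.g.
#   "... dnsmasq[1234]: query[A] cdn.tracker-a.example from 192.168.1.10"
#   "... dnsmasq[1234]: gravity blocked cdn.tracker-a.example is 0.0.0.0"
# The format differs between Pi-hole versions; adjust the patterns as needed.
QUERY_RE = re.compile(r"query\[[^\]]+\] (?P<domain>\S+) from \S+")
BLOCKED_RE = re.compile(r"\bblocked (?P<domain>\S+) is ")


class DnsEvent(NamedTuple):
    kind: str      # "query" or "blocked"
    domain: str    # lower-cased domain name from the log line
    tracked: bool  # True if the domain or a parent domain is on the tracking list


def is_tracked(domain: str, tracking_list: set[str]) -> bool:
    """Check the domain and each parent domain (a.b.c, then b.c, then c)."""
    labels = domain.lower().rstrip(".").split(".")
    return any(".".join(labels[i:]) in tracking_list for i in range(len(labels)))


def parse_events(lines: Iterable[str], tracking_list: set[str]) -> Iterator[DnsEvent]:
    """Turn raw log lines into query/blocked events; all other lines are skipped."""
    for line in lines:
        if (m := QUERY_RE.search(line)):
            kind = "query"
        elif (m := BLOCKED_RE.search(line)):
            kind = "blocked"
        else:
            continue
        domain = m["domain"].lower()
        yield DnsEvent(kind, domain, is_tracked(domain, tracking_list))


def follow(path: str, poll_s: float = 0.5) -> Iterator[str]:
    """Yield lines appended to a log file, like `tail -f` (no log-rotation handling)."""
    with open(path, encoding="utf-8", errors="replace") as log:
        log.seek(0, os.SEEK_END)  # start at the end: only new requests matter
        while True:
            line = log.readline()
            if not line:
                time.sleep(poll_s)
                continue
            yield line
```

A long-running loop could then iterate over `parse_events(follow(path), trackers)` and pass each event to the output logic. The log path (possibly `/var/log/pihole/pihole.log` on recent releases) should be checked against the local installation.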

The artifact was deployed in three households for three days each, accompanied by pre‑ and post‑deployment interviews. Qualitative analysis revealed three overarching themes. First, new awareness: participants realized that tracking continued despite their attempts to block it, as the device’s lights and sounds made the hidden activity visible. Second, contradiction: the ambiguous signals highlighted a mismatch between users’ perceived control (privacy settings) and the actual data flows, surfacing the “privacy paradox” in lived experience. Third, fatigue: while the intermittent disruptions initially captured attention, over time users reported habituation and a desire to ignore or integrate the cues, mirroring known fatigue effects of privacy prompts.

The paper’s contributions are threefold: (1) the design and implementation of DataCrumb, a physical probe that translates background web tracking into multimodal sensory feedback; (2) a design approach that leverages sensory ambiguity and persistent signaling to provoke long‑term reflection rather than immediate control; (3) empirical evidence that such embodied disruptions can surface gaps between perceived and actual privacy, generating awareness, perceived contradictions, and fatigue. Limitations include the short deployment period, small sample size, and a static list of tracked domains. The authors suggest future work on longer‑term studies, personalized feedback mechanisms, and extending the concept to other invisible data flows such as AI model calls or sensor data collection. Overall, the study demonstrates that making invisible digital processes tangible can shift user experience from rational decision‑making toward embodied reflection, offering a complementary pathway for privacy‑by‑design research.
