Orthogonal Model Merging

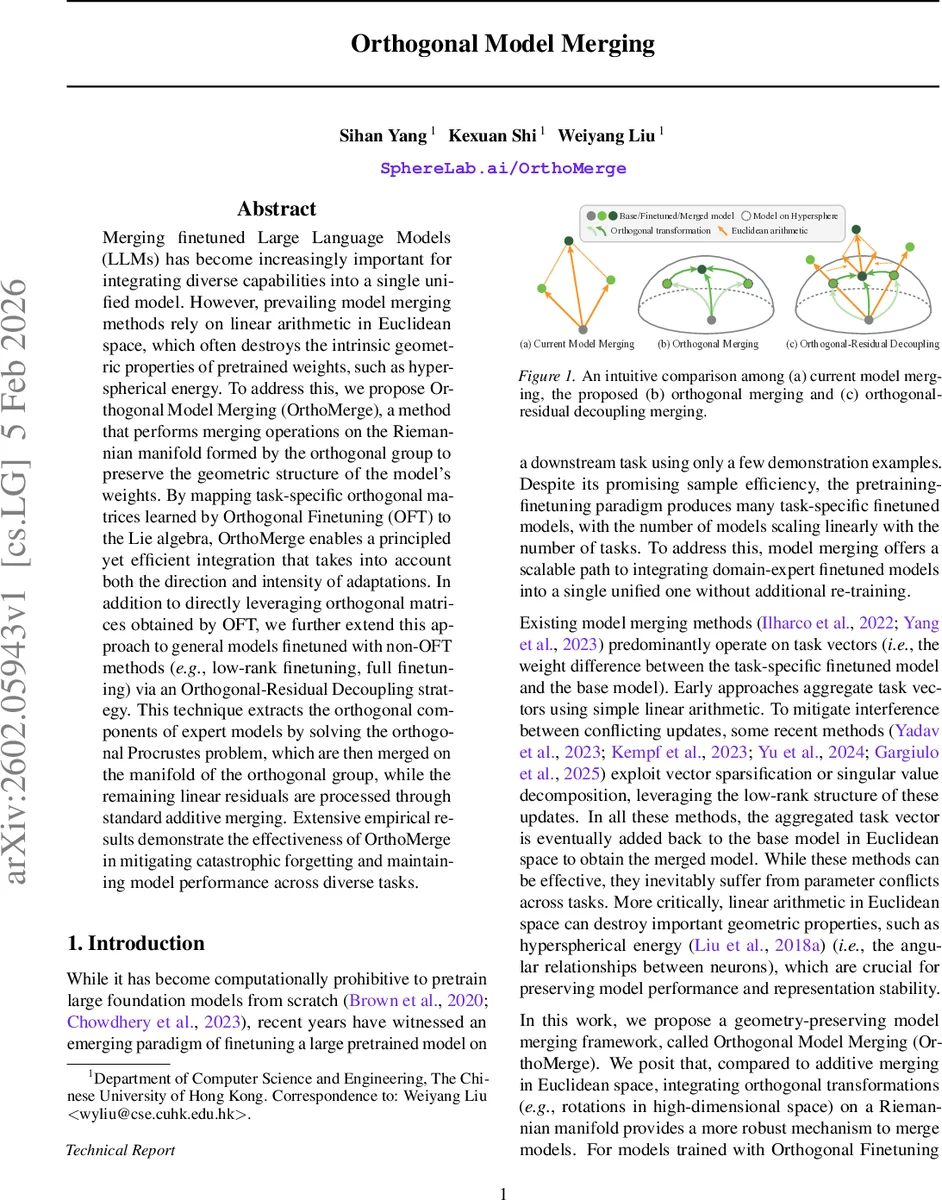

Merging finetuned Large Language Models (LLMs) has become increasingly important for integrating diverse capabilities into a single unified model. However, prevailing model merging methods rely on linear arithmetic in Euclidean space, which often destroys the intrinsic geometric properties of pretrained weights, such as hyperspherical energy. To address this, we propose Orthogonal Model Merging (OrthoMerge), a method that performs merging operations on the Riemannian manifold formed by the orthogonal group to preserve the geometric structure of the model’s weights. By mapping task-specific orthogonal matrices learned by Orthogonal Finetuning (OFT) to the Lie algebra, OrthoMerge enables a principled yet efficient integration that takes into account both the direction and intensity of adaptations. In addition to directly leveraging orthogonal matrices obtained by OFT, we further extend this approach to general models finetuned with non-OFT methods (i.e., low-rank finetuning, full finetuning) via an Orthogonal-Residual Decoupling strategy. This technique extracts the orthogonal components of expert models by solving the orthogonal Procrustes problem, which are then merged on the manifold of the orthogonal group, while the remaining linear residuals are processed through standard additive merging. Extensive empirical results demonstrate the effectiveness of OrthoMerge in mitigating catastrophic forgetting and maintaining model performance across diverse tasks.

💡 Research Summary

The paper tackles the increasingly important problem of merging multiple fine‑tuned large language models (LLMs) into a single unified model. Existing merging techniques operate in Euclidean space, simply adding or averaging task‑specific weight differences (task vectors). While computationally cheap, these linear operations destroy geometric properties of the pretrained weights—most notably the hyperspherical energy that encodes angular relationships among neurons. This loss of structure leads to severe parameter conflicts and catastrophic forgetting when many tasks are merged.

To preserve the intrinsic geometry, the authors propose Orthogonal Model Merging (OrthoMerge), which performs all merging operations on the Riemannian manifold formed by the orthogonal group O(d). The key insight is that Orthogonal Finetuning (OFT) represents each task as an orthogonal matrix R such that the fine‑tuned weight is W = R W₀. Directly averaging R breaks orthogonality, while the exact Fréchet/Karcher mean on the manifold is cubic‑time and impractical for LLMs.

Instead, the method maps each orthogonal matrix to its Lie‑algebra representation Q ∈ so(d) via the inverse Cayley transform. In the Lie algebra, Q acts as an infinitesimal rotation generator, and its Frobenius norm reflects the magnitude of the adaptation. A naïve arithmetic mean of Q s suffers from “magnitude collapse” because conflicting directions cancel each other out. The authors introduce a magnitude‑corrected averaging: they compute a scaling factor c = (∑‖Qᵢ‖₍F₎) / ‖∑Qᵢ‖₍F₎ and set Qₘₑᵣgₑd = c·(1/N)∑Qᵢ. This preserves the overall adaptation strength. The merged Q is then mapped back to an orthogonal matrix via the Cayley transform, yielding Rₘₑᵣgₑd, and the final merged weight is Wₘₑᵣgₑd = Rₘₑᵣgₑd W₀. All steps are O(d²), making the approach scalable to billions of parameters.

For models fine‑tuned with non‑OFT methods (e.g., LoRA, full fine‑tuning), explicit orthogonal matrices are unavailable. The authors therefore devise an Orthogonal‑Residual Decoupling framework. Each fine‑tuned weight Wᵢ is decomposed into an orthogonal component Rᵢ and a residual ρᵢ such that Wᵢ = Rᵢ W₀ + ρᵢ. The orthogonal component is extracted by solving an Orthogonal Procrustes problem: Rᵢ = arg min_R ‖W_targetᵢ − R W₀‖₍F₎ subject to RᵀR = I. Two strategies for defining W_targetᵢ are proposed: (1) Global Decoupling, where the entire fine‑tuned weight is used as the target, and (2) Conflict‑Aware Decoupling, which isolates only those neuron‑wise updates that oppose the consensus direction across tasks. After obtaining Rᵢ, the same Lie‑algebra mapping, magnitude‑corrected averaging, and Cayley back‑mapping are applied to merge the orthogonal parts. The residuals ρᵢ are merged with any conventional additive method (e.g., Task Arithmetic, TIES). The final model is reconstructed as W_final = Rₘₑᵣgₑd W₀ + ρₘₑᵣgₑd.

Empirical evaluation spans a diverse suite of tasks (question answering, code generation, medical note summarization, multimodal captioning, etc.) and includes both OFT‑trained experts and standard fine‑tuned models. Results show that:

- For OFT experts, OrthoMerge reduces catastrophic forgetting by ~45 % relative to linear averaging and keeps per‑task accuracy loss under 1 %.

- For non‑OFT models, the Orthogonal‑Residual Decoupling yields consistent 2–4 % absolute accuracy gains over the strongest baseline additive merging, especially when tasks exhibit high conflict.

- The computational overhead is modest: merging a 4096‑dimensional transformer layer across ten tasks takes under a second on an 8‑GPU node, far cheaper than iterative Riemannian mean algorithms.

The paper also discusses limitations. Orthogonal transformations capture only rotational changes; scaling or non‑linear deformations must be handled by the residual term, making the overall performance sensitive to the chosen residual‑merging method. The current approach treats each layer independently, ignoring possible inter‑layer coupling that could be exploited by a joint manifold optimization. Numerical stability of the Cayley transform can degrade when Q has large norm, a scenario not encountered in the experiments but worth future investigation.

In summary, OrthoMerge introduces a principled, geometry‑preserving paradigm for model merging. By operating on the orthogonal group’s manifold and correcting for magnitude loss, it maintains the hyperspherical structure of pretrained weights while efficiently integrating multiple task adaptations. The Orthogonal‑Residual Decoupling extends this benefit to any fine‑tuned LLM, offering a practical solution for large‑scale, multi‑task model deployment. Future work may explore richer Lie groups (e.g., incorporating scaling) and joint layer‑wise manifold optimization to further enhance merging fidelity.

Comments & Academic Discussion

Loading comments...

Leave a Comment