EoCD: Encoder only Remote Sensing Change Detection

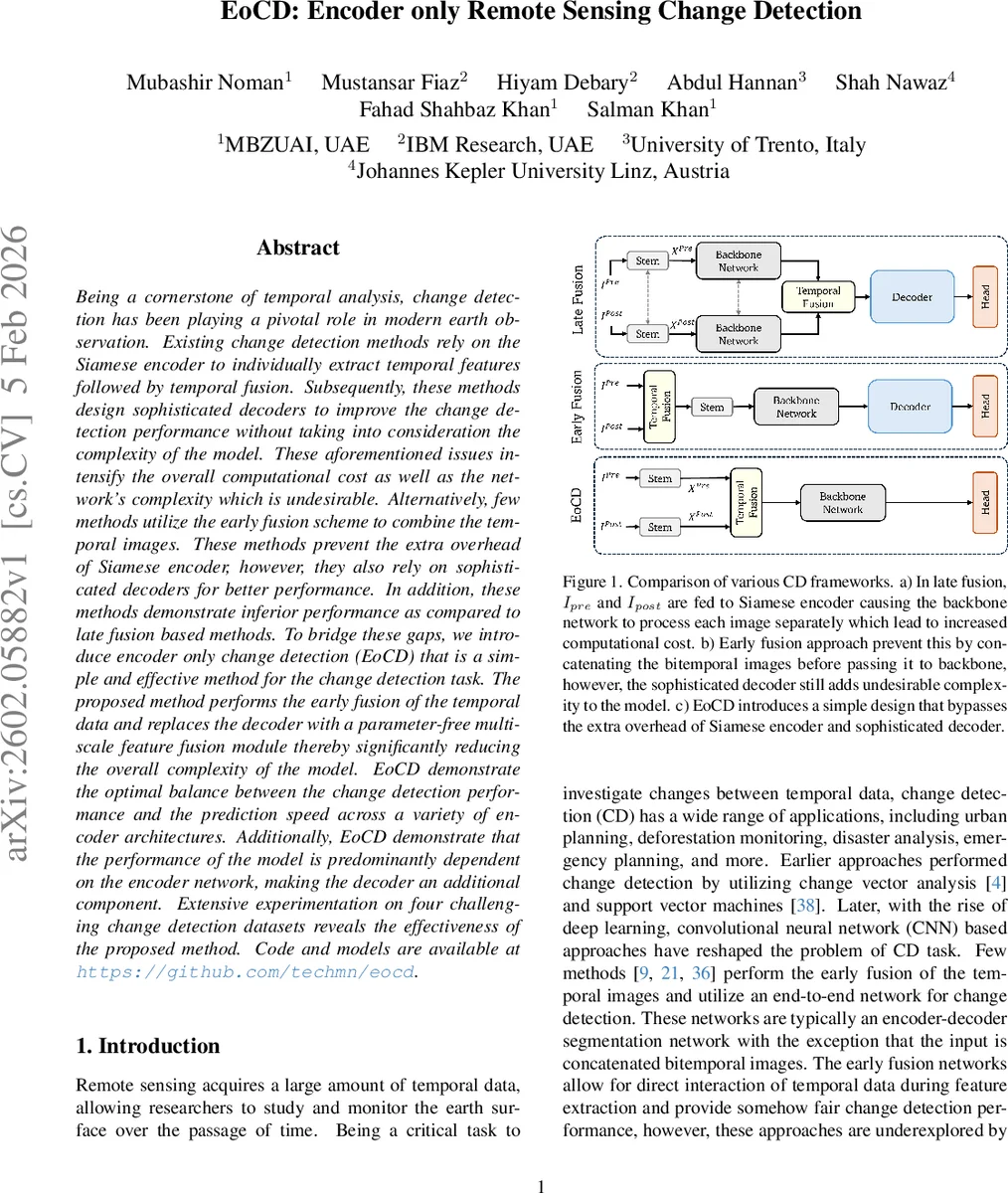

As a cornerstone of temporal analysis, change detection plays a pivotal role in modern Earth observation. Existing change detection methods rely on a Siamese encoder to extract features from each temporal image individually, followed by temporal fusion. These methods then design sophisticated decoders to improve change detection performance without considering the complexity of the model. These design choices increase both the overall computational cost and the network's complexity, which is undesirable. Alternatively, a few methods use an early fusion scheme to combine the temporal images. These methods avoid the extra overhead of the Siamese encoder; however, they also rely on sophisticated decoders for better performance, and they still perform worse than late-fusion methods. To bridge these gaps, we introduce encoder-only change detection (EoCD), a simple and effective method for the change detection task. The proposed method performs early fusion of the temporal data and replaces the decoder with a parameter-free multiscale feature fusion module, thereby significantly reducing the overall complexity of the model. EoCD demonstrates an optimal balance between change detection performance and prediction speed across a variety of encoder architectures. Additionally, EoCD demonstrates that the performance of the model depends predominantly on the encoder network, making the decoder an optional component. Extensive experimentation on four challenging change detection datasets demonstrates the effectiveness of the proposed method.

💡 Research Summary

The paper introduces EoCD (Encoder‑only Change Detection), a streamlined framework for remote‑sensing change detection that dramatically reduces model complexity while preserving state‑of‑the‑art accuracy. Traditional change detection pipelines fall into two categories. Late‑fusion methods employ a Siamese encoder that processes pre‑ and post‑event images separately, followed by a temporal‑fusion block and a sophisticated decoder. Although accurate, this design incurs high computational cost because the backbone is executed twice and the decoder adds many parameters. Early‑fusion approaches concatenate the two images along the channel dimension and feed them to a single encoder‑decoder network, saving some computation but still relying on a heavy decoder to achieve competitive performance.
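The difference between the two input schemes can be illustrated with a small NumPy sketch. It only shows how early fusion stacks a co-registered image pair along the channel axis so that a single encoder pass sees both dates; the function name `early_fusion_input` and the `(C, H, W)` layout are assumptions made for this illustration, not names from the paper.

```python
import numpy as np

def early_fusion_input(pre, post):
    """Stack two co-registered (C, H, W) images along the channel axis.

    Hypothetical helper: a late-fusion (Siamese) pipeline would instead
    run the encoder once on `pre` and once on `post`.
    """
    if pre.shape != post.shape:
        raise ValueError("pre and post images must have identical shapes")
    return np.concatenate([pre, post], axis=0)

# Two toy RGB tiles of 4x4 pixels.
pre = np.zeros((3, 4, 4))
post = np.ones((3, 4, 4))
fused = early_fusion_input(pre, post)
print(fused.shape)  # 6 channels: 3 from each date
```

With this layout the encoder's first layer must accept twice the number of input channels, but the backbone runs only once per image pair.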

EoCD adopts an early‑fusion strategy but eliminates the decoder entirely. After passing each temporal image through a lightweight stem, the resulting feature maps are concatenated and processed by a few convolutional layers to produce a fused representation F. This is fed into a conventional backbone encoder (e.g., ResNet, Swin‑Transformer, ConvNeXt) that yields four hierarchical feature maps S₁‑S₄. The core of the method is the Efficient Multiscale Feature Fusion (EMFF) module, which contains no learnable parameters. EMFF first interpolates all scales to a common spatial resolution. It then performs a series of channel‑wise average‑pooling, tanh‑based gating, and element‑wise multiplication to enhance high‑level features (S₄) with contextual information from the lower level (S₃). Subsequent average‑ and max‑pooling steps progressively integrate S₂ and S₁, finally concatenating the enriched high‑level map to produce a semantically rich fused tensor $\bar{S}$. This tensor is fed directly to a lightweight change‑detection head (1×1 convolution + sigmoid) that outputs a binary change mask.
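To make the idea of a parameter-free fusion concrete, here is a loose NumPy sketch of the kind of operations described above. The summary does not specify the exact order or wiring of the pooling and gating steps, so the arrangement below (including which maps are concatenated at the end) is an assumption; `resize_nearest`, `channel_gate`, and `emff_sketch` are hypothetical names, and nearest-neighbour resizing stands in for whatever interpolation the paper uses.

```python
import numpy as np

def resize_nearest(x, out_h, out_w):
    """Nearest-neighbour resize of a (C, H, W) array; no learnable weights."""
    _, h, w = x.shape
    rows = np.arange(out_h) * h // out_h
    cols = np.arange(out_w) * w // out_w
    return x[:, rows][:, :, cols]

def channel_gate(x, reduce="mean"):
    """Pool over the channel axis to a (1, H, W) map, then squash with tanh."""
    if reduce == "mean":
        pooled = x.mean(axis=0, keepdims=True)
    else:
        pooled = x.max(axis=0, keepdims=True)
    return np.tanh(pooled)

def emff_sketch(s1, s2, s3, s4):
    """Assumed wiring: gate S4 by S3, then by S2 and S1, then concatenate."""
    out_h, out_w = s1.shape[1:]
    s1, s2, s3, s4 = (resize_nearest(s, out_h, out_w) for s in (s1, s2, s3, s4))
    high = s4 * channel_gate(s3, "mean")    # enhance S4 with S3 context
    high = high * channel_gate(s2, "mean")  # integrate S2 (average pooling)
    high = high * channel_gate(s1, "max")   # integrate S1 (max pooling)
    return np.concatenate([s1, s2, s3, high], axis=0)

# Toy pyramid: resolution halves and channels double at each stage.
fused = emff_sketch(np.ones((2, 8, 8)), np.ones((4, 4, 4)),
                    np.ones((8, 2, 2)), np.ones((16, 1, 1)))
print(fused.shape)
```

Because every step is a resize, a pooling, a `tanh`, or a multiplication, the sketch has nothing to train; any learnable weights would sit only in the encoder and the 1×1 prediction head.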

Training uses a knowledge‑distillation scheme. A “teacher” network retains the classic Siamese encoder, a multi‑scale fusion block, and a decoder; its parameters are frozen. The “student” network is the decoder‑less EoCD described above. During each iteration, the teacher generates soft predictions that serve as targets for the student, encouraging the student to mimic the teacher’s semantic representations despite its minimalist architecture.
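A common way to express this kind of teacher-student training is a weighted sum of a supervised loss and a loss against the teacher's soft predictions. The summary does not give the paper's actual loss, so the binary cross-entropy form, the weight `alpha`, and the function names below are assumptions chosen for illustration.

```python
import numpy as np

def binary_cross_entropy(pred, target, eps=1e-7):
    """Mean BCE between predicted probabilities and (possibly soft) targets."""
    pred = np.clip(pred, eps, 1.0 - eps)
    loss = -(target * np.log(pred) + (1.0 - target) * np.log(1.0 - pred))
    return float(np.mean(loss))

def distillation_loss(student_prob, teacher_prob, label, alpha=0.5):
    """Blend the ground-truth loss with a loss against frozen teacher outputs.

    `alpha` is a hypothetical mixing weight: 0 ignores the teacher,
    1 ignores the ground-truth labels.
    """
    hard = binary_cross_entropy(student_prob, label)
    soft = binary_cross_entropy(student_prob, teacher_prob)
    return (1.0 - alpha) * hard + alpha * soft

label = np.array([1.0, 0.0])     # ground-truth change mask (flattened)
teacher = np.array([0.9, 0.1])   # soft predictions from the frozen teacher
student = np.array([0.8, 0.3])   # current student predictions
print(distillation_loss(student, teacher, label))
```

In a real training loop only the student's parameters would receive gradients, since the teacher is frozen.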

Extensive experiments were conducted on four public RS‑CD datasets: LEVIR‑CD (building changes), CDD‑CD (building & road), SYSU‑CD (building, vegetation, road), and WHU‑CD (building). For each dataset, multiple backbones were evaluated. Results show that, with the same backbone, EoCD reduces FLOPs and parameter count by roughly 30‑50 % compared with Siamese‑encoder + decoder baselines, while achieving comparable or slightly higher F1‑score and IoU. Inference speed gains range from 1.8× to 2.5×, making the model suitable for real‑time or resource‑constrained scenarios such as on‑board satellite processing or UAV deployment.
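For reference, the F1-score and IoU reported for binary change masks follow the standard definitions F1 = 2TP / (2TP + FP + FN) and IoU = TP / (TP + FP + FN), computed on the "change" class. The sketch below applies those definitions; the function name `f1_and_iou` and the choice to return 0.0 when neither mask contains a changed pixel are conventions assumed here, not taken from the paper.

```python
import numpy as np

def f1_and_iou(pred_mask, true_mask):
    """Return (F1, IoU) for the positive ("change") class of two binary masks."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    tp = int(np.sum(pred & true))    # changed pixels found
    fp = int(np.sum(pred & ~true))   # false alarms
    fn = int(np.sum(~pred & true))   # missed changes
    if tp + fp + fn == 0:
        return 0.0, 0.0              # assumed convention for empty masks
    f1 = 2 * tp / (2 * tp + fp + fn)
    iou = tp / (tp + fp + fn)
    return f1, iou

pred = [[1, 1], [0, 0]]
true = [[1, 0], [1, 0]]
print(f1_and_iou(pred, true))  # one hit, one false alarm, one miss
```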

The paper’s contributions are threefold: (1) a parameter‑free EMFF module that efficiently merges multi‑scale features without a decoder, (2) empirical evidence that change‑detection performance is primarily dictated by the encoder choice, relegating the decoder to a non‑essential role, and (3) a thorough benchmark across diverse backbones and datasets demonstrating the optimal trade‑off between accuracy and efficiency. The authors suggest future work on extending EMFF to other temporal vision tasks and further slimming the stem to enable ultra‑lightweight change detection on edge devices.
