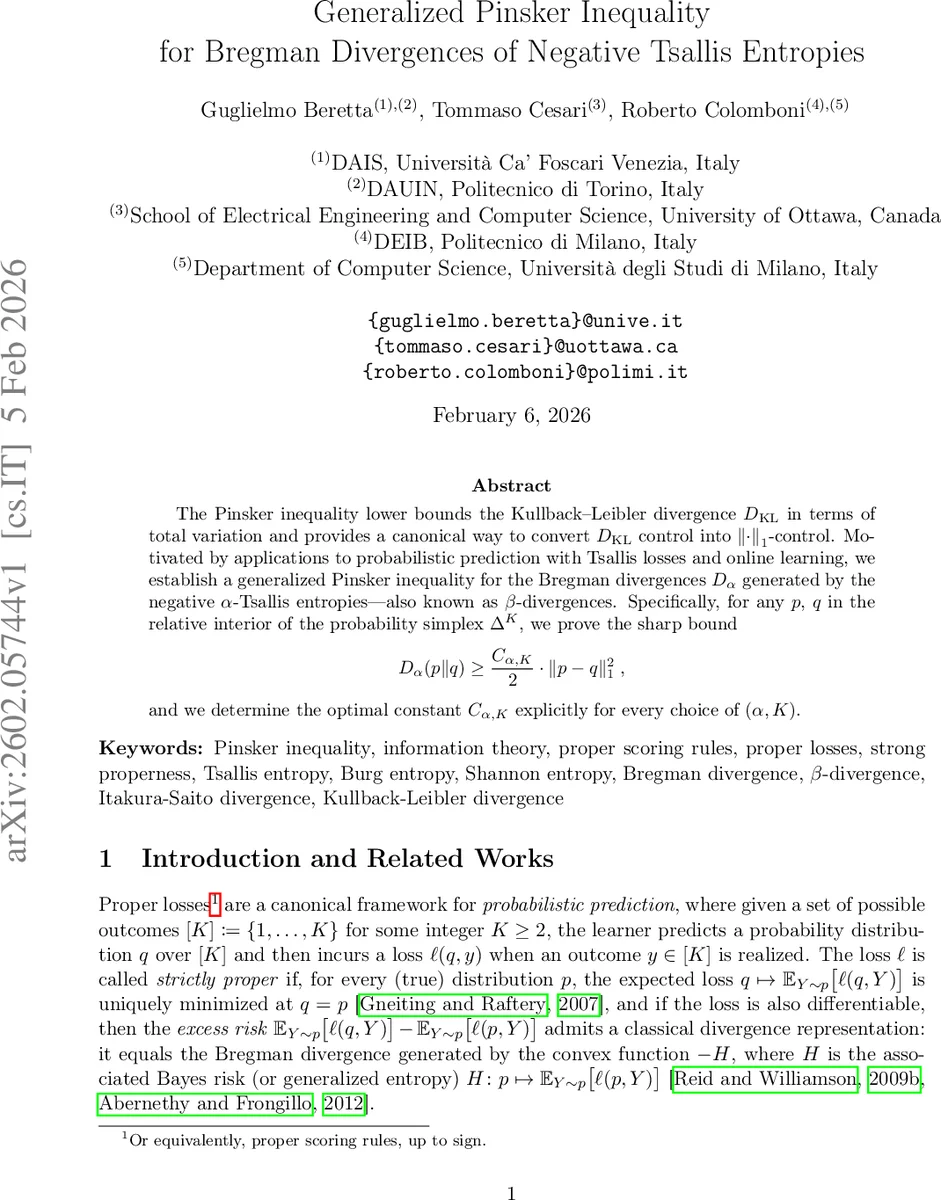

Generalized Pinsker Inequality for Bregman Divergences of Negative Tsallis Entropies

The Pinsker inequality lower bounds the Kullback–Leibler divergence $D_{\textrm{KL}}$ in terms of total variation and provides a canonical way to convert $D_{\textrm{KL}}$ control into $\lVert \cdot \rVert_1$-control. Motivated by applications to probabilistic prediction with Tsallis losses and online learning, we establish a generalized Pinsker inequality for the Bregman divergences $D_α$ generated by the negative $α$-Tsallis entropies – also known as $β$-divergences. Specifically, for any $p$, $q$ in the relative interior of the probability simplex $Δ^K$, we prove the sharp bound $$D_α(p\Vert q) \ge \frac{C_{α,K}}{2}\cdot \lVert p-q\rVert_1^2,$$ and we determine the optimal constant $C_{α,K}$ explicitly for every choice of $(α,K)$.

💡 Research Summary

The paper investigates a fundamental question in information theory and statistical learning: how to bound the Bregman divergence generated by the negative α‑Tsallis entropy (also known as the β‑divergence) in terms of the total variation (ℓ₁) distance between two probability vectors. The classic Pinsker inequality provides such a bound for the Kullback–Leibler (KL) divergence, stating that D_KL(p‖q) ≥ ½‖p−q‖₁². The authors extend this result to the whole family of divergences D_α(p‖q) that arise from Tsallis losses, which are widely used in robust statistics, signal processing, and online learning.
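The quantities in this comparison can be explored numerically. The following is a minimal sketch, not the paper's method: it assumes the generator φ_α(p) = (Σ p_i^α − 1)/(α − 1), a common normalization of the negative Tsallis entropy that the paper may scale differently, and all function names are hypothetical. Under this assumption D_α reduces to the KL divergence at α = 1 and to the squared Euclidean distance at α = 2. Random sampling only yields an upper estimate of the best constant for this normalization, not the sharp C_{α,K}.

```python
import math
import random


def tsallis_bregman(p, q, alpha):
    """Bregman divergence of phi(p) = (sum_i p_i^alpha - 1) / (alpha - 1).

    The normalization is an assumption; at alpha = 1 the KL divergence is used.
    Both p and q must be strictly positive and sum to 1.
    """
    if abs(alpha - 1.0) < 1e-12:
        return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
    total = sum(
        pi**alpha - qi**alpha - alpha * qi ** (alpha - 1.0) * (pi - qi)
        for pi, qi in zip(p, q)
    )
    return total / (alpha - 1.0)


def l1_distance(p, q):
    """The l1 distance ||p - q||_1 between two probability vectors."""
    return sum(abs(pi - qi) for pi, qi in zip(p, q))


def random_interior_point(k, rng):
    """Sample a strictly positive probability vector with k entries."""
    weights = [rng.random() + 1e-3 for _ in range(k)]
    total = sum(weights)
    return [w / total for w in weights]


def smallest_ratio(alpha, k, trials=2000, seed=0):
    """Empirical minimum of D_alpha(p||q) / (0.5 * ||p - q||_1^2).

    This is only an upper estimate of the best constant under the assumed
    normalization; it does not compute the sharp constant from the paper.
    """
    rng = random.Random(seed)
    best = math.inf
    for _ in range(trials):
        p = random_interior_point(k, rng)
        q = random_interior_point(k, rng)
        d1 = l1_distance(p, q)
        if d1 < 1e-6:  # skip near-identical pairs to avoid unstable ratios
            continue
        best = min(best, tsallis_bregman(p, q, alpha) / (0.5 * d1 * d1))
    return best


if __name__ == "__main__":
    for alpha in (0.5, 1.0, 2.0):
        print(alpha, smallest_ratio(alpha, k=3))
```

For α = 1 the sampled ratio should never fall below 1, which is the classic Pinsker bound; for other values of α the sampled minimum merely indicates how the achievable constant varies with α and K.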

The main contribution is Theorem 8, which identifies the sharp constant C_{α,K} such that, for any p, q in the relative interior of the K‑dimensional probability simplex Δ^K, D_α(p‖q) ≥ (C_{α,K}/2)·‖p−q‖₁².
