PIRATR: Parametric Object Inference for Robotic Applications with Transformers in 3D Point Clouds

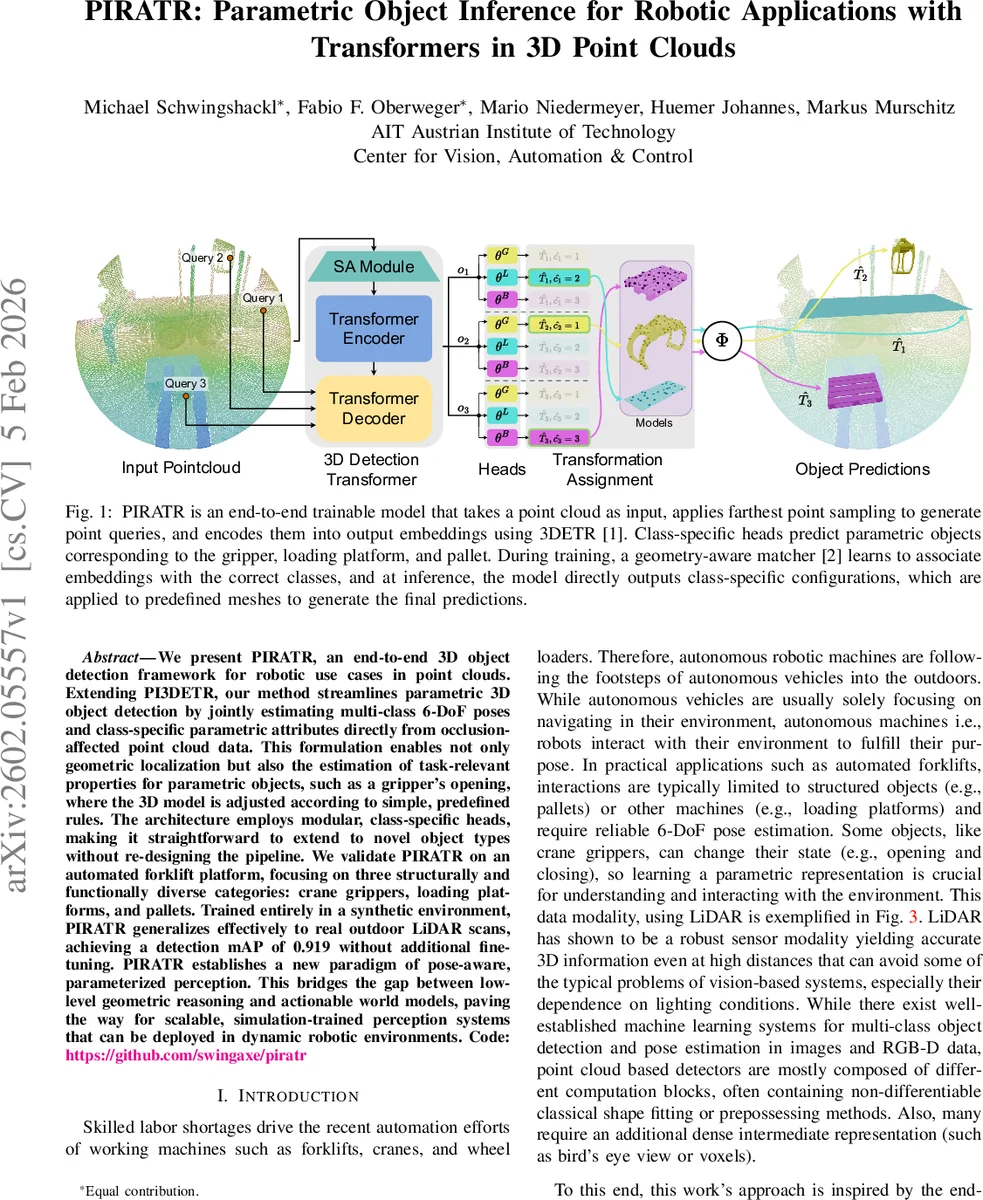

We present PIRATR, an end-to-end 3D object detection framework for robotic use cases in point clouds. Extending PI3DETR, our method streamlines parametric 3D object detection by jointly estimating multi-class 6-DoF poses and class-specific parametric attributes directly from occlusion-affected point cloud data. This formulation enables not only geometric localization but also the estimation of task-relevant properties for parametric objects, such as a gripper’s opening, where the 3D model is adjusted according to simple, predefined rules. The architecture employs modular, class-specific heads, making it straightforward to extend to novel object types without re-designing the pipeline. We validate PIRATR on an automated forklift platform, focusing on three structurally and functionally diverse categories: crane grippers, loading platforms, and pallets. Trained entirely in a synthetic environment, PIRATR generalizes effectively to real outdoor LiDAR scans, achieving a detection mAP of 0.919 without additional fine-tuning. PIRATR establishes a new paradigm of pose-aware, parameterized perception. This bridges the gap between low-level geometric reasoning and actionable world models, paving the way for scalable, simulation-trained perception systems that can be deployed in dynamic robotic environments. Code available at https://github.com/swingaxe/piratr.

💡 Research Summary

PIRATR (Parametric Object Inference for Robotic Applications with Transformers) is an end‑to‑end 3‑D object detection framework that directly consumes raw LiDAR point clouds and outputs both 6‑DoF poses and class‑specific parametric attributes such as a crane gripper’s opening angle. Building on the PI3DETR architecture, the authors replace the original curve‑prediction heads with modular, class‑specific feed‑forward networks (FFNs). Each FFN receives a transformer‑generated query embedding and jointly predicts position, orientation (as a unit quaternion), and, for the gripper class, an additional scalar state (opening angle). A separate classification head determines whether a query corresponds to background, a gripper, a loading platform, or a pallet.

A key contribution is a geometry‑aware matching strategy that respects object symmetries during the Hungarian assignment of predictions to ground‑truth. For symmetric objects (e.g., 180° rotation around the vertical axis), the loss is computed over the set of equivalent quaternions, preventing the network from being penalized for predicting a symmetric pose.

Training data are generated entirely in simulation using Blender and the official Livox‑Mid70 SDK. The pipeline creates 5 000 diverse scenes that include a forklift, a truck‑mounted crane, pallets, trees, and walls. Object placements follow realistic distributions (circular sampling for the forklift, Poisson‑disk sampling with Perlin noise for pallets, etc.). The LiDAR sensor model reproduces Livox’s Lissajous‑type scanning pattern: each ray’s azimuth and elevation are taken from the SDK, ray‑cast into the scene, and the resulting hit points are collected. After ray‑casting, the point cloud is down‑sampled to 32 k points using farthest‑point‑sampling, mirroring the density of real scans. Objects with insufficient hit counts are omitted from the ground‑truth to emulate occlusion.

Ground‑truth annotations encode each instance as a tuple (position p, orientation q, optional state α) together with a class label. Position semantics differ per class: the gripper’s center of mass, the loading platform’s front‑right corner, and the pallet’s bottom‑center. The authors also provide a deterministic mapping Φ that applies the pose (and opening angle for the gripper) to a CAD mesh and extracts a fixed set of 64 surface points, enabling a compact point‑based representation for loss computation. All inputs are normalized to the unit cube, and the opening angle is scaled to

Comments & Academic Discussion

Loading comments...

Leave a Comment