IESR: Efficient MCTS-Based Modular Reasoning for Text-to-SQL with Large Language Models

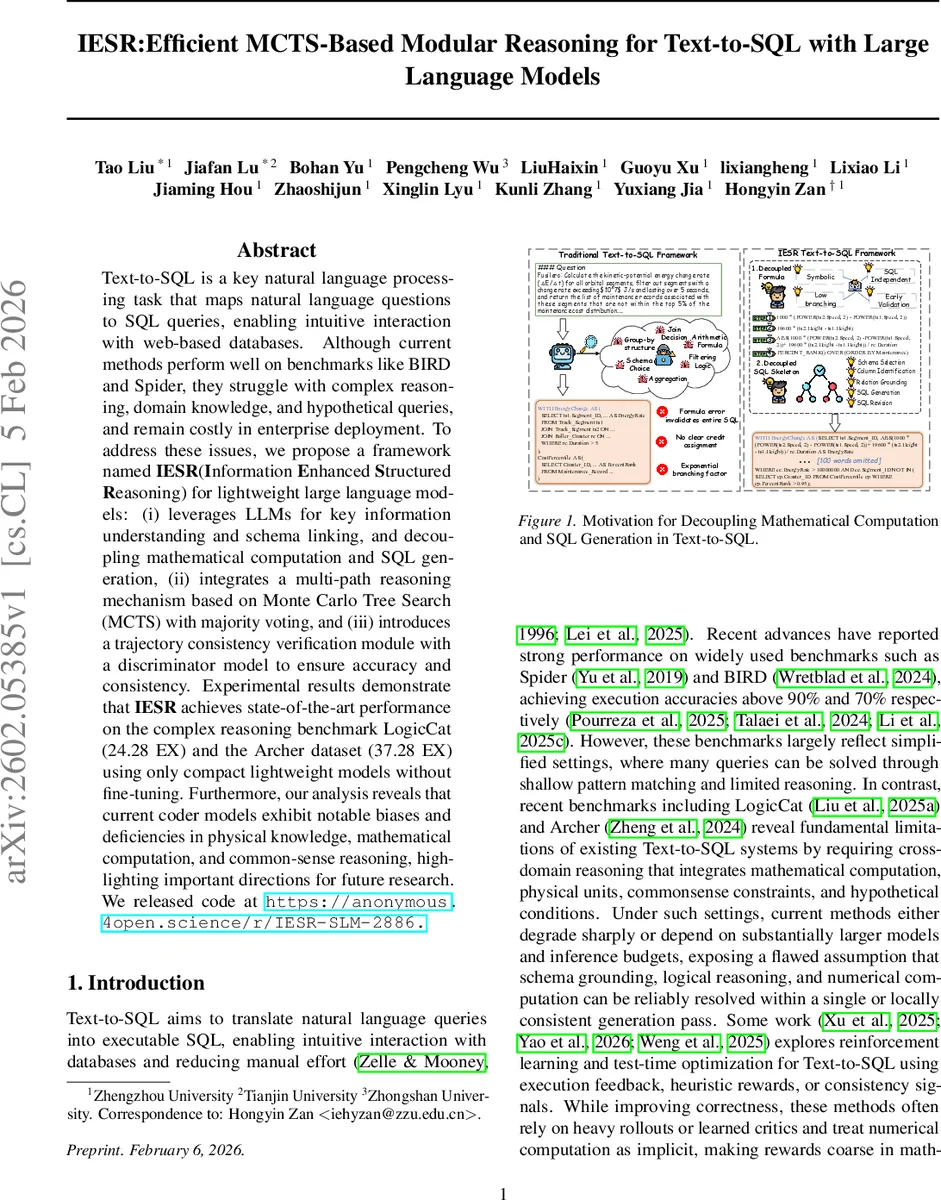

Text-to-SQL is a key natural language processing task that maps natural language questions to SQL queries, enabling intuitive interaction with web-based databases. Although current methods perform well on benchmarks such as BIRD and Spider, they struggle with complex reasoning, domain knowledge, and hypothetical queries, and remain costly to deploy in enterprise settings. To address these issues, we propose IESR (Information Enhanced Structured Reasoning), a framework for lightweight large language models that (i) leverages LLMs for key information understanding and schema linking, and decouples mathematical computation from SQL generation; (ii) integrates a multi-path reasoning mechanism based on Monte Carlo Tree Search (MCTS) with majority voting; and (iii) introduces a trajectory consistency verification module with a discriminator model to ensure accuracy and consistency. Experimental results demonstrate that IESR achieves state-of-the-art execution accuracy (EX) on the complex reasoning benchmark LogicCat (24.28) and on the Archer dataset (37.28) using only compact lightweight models without fine-tuning. Furthermore, our analysis reveals that current coder models exhibit notable biases and deficiencies in physical knowledge, mathematical computation, and common-sense reasoning, highlighting important directions for future research. Our code is released at https://github.com/Ffunkytao/IESR-SLM.
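The abstract pairs multi-path reasoning with majority voting but does not say what is voted on. The following is a minimal sketch that assumes candidate SQL queries (for example, one per reasoning path) are executed and the vote is taken over their execution results, a common self-consistency heuristic. It does not implement MCTS itself. The function name `execution_majority_vote`, the toy `orders` table, and the candidate queries are hypothetical and are not taken from the IESR repository.

```python
import sqlite3


def execution_majority_vote(candidates, conn):
    """Return the candidate SQL whose execution result is most common, or None.

    Hypothetical helper (not from the IESR code). Queries that raise a
    database error get no vote. Results are compared as order-insensitive
    collections of rows, which is an assumption.
    """
    groups = {}  # result signature -> candidate SQL strings that produced it
    for sql in candidates:
        try:
            rows = conn.execute(sql).fetchall()
        except sqlite3.Error:
            continue  # invalid or failing SQL is discarded
        # Sort rows by repr so rows containing None or mixed types still sort.
        signature = tuple(sorted(rows, key=repr))
        groups.setdefault(signature, []).append(sql)
    if not groups:
        return None
    # max() keeps the first-seen group on ties, so the earliest candidate wins.
    winners = max(groups.values(), key=len)
    return winners[0]


# Toy database and candidates (hypothetical, for illustration only).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 10.0), (2, 25.5), (3, 4.5)])

candidates = [
    "SELECT SUM(amount) FROM orders",
    "SELECT SUM(amount) FROM orders WHERE 1 = 1",
    "SELECT AVG(amount) FROM orders",
    "SELECT SUM(amout) FROM orders",  # misspelled column: raises and is discarded
]
print(execution_majority_vote(candidates, conn))  # SELECT SUM(amount) FROM orders
```

Voting on results rather than on SQL strings lets syntactically different but equivalent queries pool their votes, as the first two candidates do here. The order-insensitive comparison is a simplifying assumption and would misjudge questions whose answer depends on `ORDER BY`.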

💡 Research Summary

The paper addresses a critical gap in current Text‑to‑SQL research: while large language models (LLMs) achieve high execution accuracy on standard benchmarks such as Spider and BIRD, they falter on queries that require multi‑domain reasoning—mathematical computation, physical unit conversion, commonsense constraints, and hypothetical conditions. Moreover, deploying these large models in enterprise settings is costly. To overcome these challenges, the authors propose IESR (Information‑Enhanced Structured Reasoning), a lightweight, modular framework that works with moderate‑scale open‑source LLMs without any instruction fine‑tuning.

IESR consists of three tightly coupled stages.

- Information Understanding – A lightweight LLM first extracts a rich set of semantic hypotheses from the natural‑language question: entities, numeric expressions, units, and relational patterns. These are encoded as a latent semantic state Sₙ = (i, r, E, R, N, U, P). The hypotheses are deliberately high‑recall and treated as noisy. A constraint‑aware filtering step then applies hand‑crafted domain constraints (entity‑unit‑equation triples) to prune inconsistent relations. A non‑learned soft consistency scorer assigns each remaining relation a score in
