Magic-MM-Embedding: Towards Visual-Token-Efficient Universal Multimodal Embedding with MLLMs

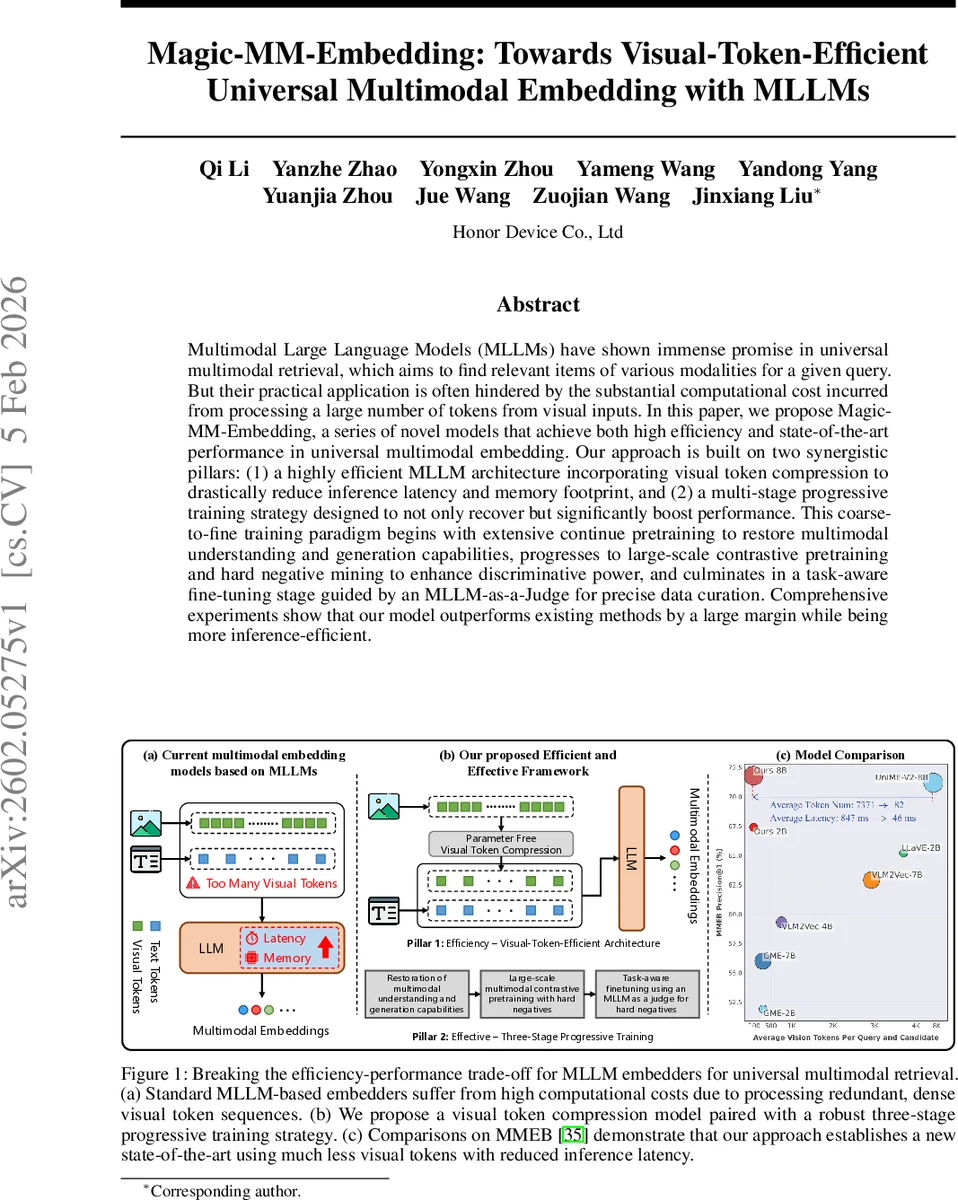

Multimodal Large Language Models (MLLMs) have shown immense promise in universal multimodal retrieval, which aims to find relevant items of various modalities for a given query. But their practical application is often hindered by the substantial computational cost incurred from processing a large number of tokens from visual inputs. In this paper, we propose Magic-MM-Embedding, a series of novel models that achieve both high efficiency and state-of-the-art performance in universal multimodal embedding. Our approach is built on two synergistic pillars: (1) a highly efficient MLLM architecture incorporating visual token compression to drastically reduce inference latency and memory footprint, and (2) a multi-stage progressive training strategy designed to not only recover but significantly boost performance. This coarse-to-fine training paradigm begins with extensive continue pretraining to restore multimodal understanding and generation capabilities, progresses to large-scale contrastive pretraining and hard negative mining to enhance discriminative power, and culminates in a task-aware fine-tuning stage guided by an MLLM-as-a-Judge for precise data curation. Comprehensive experiments show that our model outperforms existing methods by a large margin while being more inference-efficient.

💡 Research Summary

Magic‑MM‑Embedding tackles the critical bottleneck of multimodal large language models (MLLMs) – the excessive number of visual tokens that dramatically increase inference latency and memory consumption. The authors propose a two‑pronged solution: (1) a parameter‑free visual token compression module that reduces the spatial resolution of the visual encoder’s feature map via bilinear interpolation, and (2) a three‑stage progressive training pipeline designed to restore and enhance the model’s capabilities after compression.

The compression step simply downsamples the H×W feature map by a factor s (e.g., s = 2 or 4), turning N = H·W tokens into N/s² tokens without adding any learnable parameters. This reduces visual token count by up to 94 % while preserving the overall layout and semantic content of the image. Because the compressed tokens differ from the distribution expected by a pretrained LLM, the authors introduce a coarse‑to‑fine training regime.

Stage 1 – Multimodal Foundational Capability Restoration – uses large‑scale multimodal instruction data and next‑token prediction (NTP) loss to align the compressed visual embeddings with the language model, ensuring that basic multimodal understanding and generation abilities are retained.

Stage 2 – Multimodal Contrastive Pretraining – scales up to 16 million multimodal pairs and first trains with standard in‑batch InfoNCE loss. Then a global hard‑negative mining strategy injects hard negatives sampled from the 50‑100 rank positions of each query’s retrieval list, encouraging fine‑grained discrimination.

Stage 3 – Task‑aware Fine‑tuning – leverages an “MLLM‑as‑Judge” to automatically curate high‑quality hard negatives. The model from Stage 2 retrieves candidate lists for each training query; the judge model evaluates similarity and selects the most challenging negatives, which are then used in a final contrastive learning step. This yields a robust, task‑generalizable embedder.

Extensive experiments on seven benchmarks—including natural image retrieval (Flickr30K, COCO‑Captions), document image retrieval (DocVQA, WebDoc), and the MMEB multimodal embedding benchmark—show that Magic‑MM‑Embedding consistently outperforms state‑of‑the‑art MLLM‑based embedders by 3‑7 % in AP/Recall while using only a quarter of the visual tokens. Inference latency drops by roughly 40 % and GPU memory usage by about 42 %. Ablation studies confirm that each component (compression, three‑stage training, hard‑negative curation) contributes significantly; removing any leads to noticeable performance degradation.

The paper also discusses limitations: extremely aggressive compression (s > 4) can lose fine details, and the MLLM‑as‑Judge incurs API costs and depends on the quality of the underlying judge model. Future work includes exploring adaptive compression ratios, reducing reliance on external judges, and extending the framework to video and audio modalities.

Overall, Magic‑MM‑Embedding demonstrates that visual token efficiency and high retrieval performance are not mutually exclusive. By co‑designing a lightweight compression mechanism with a carefully staged training strategy, the authors deliver a practical, high‑performing multimodal embedding solution ready for large‑scale, latency‑sensitive retrieval systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment