Pattern Enhanced Multi-Turn Jailbreaking: Exploiting Structural Vulnerabilities in Large Language Models

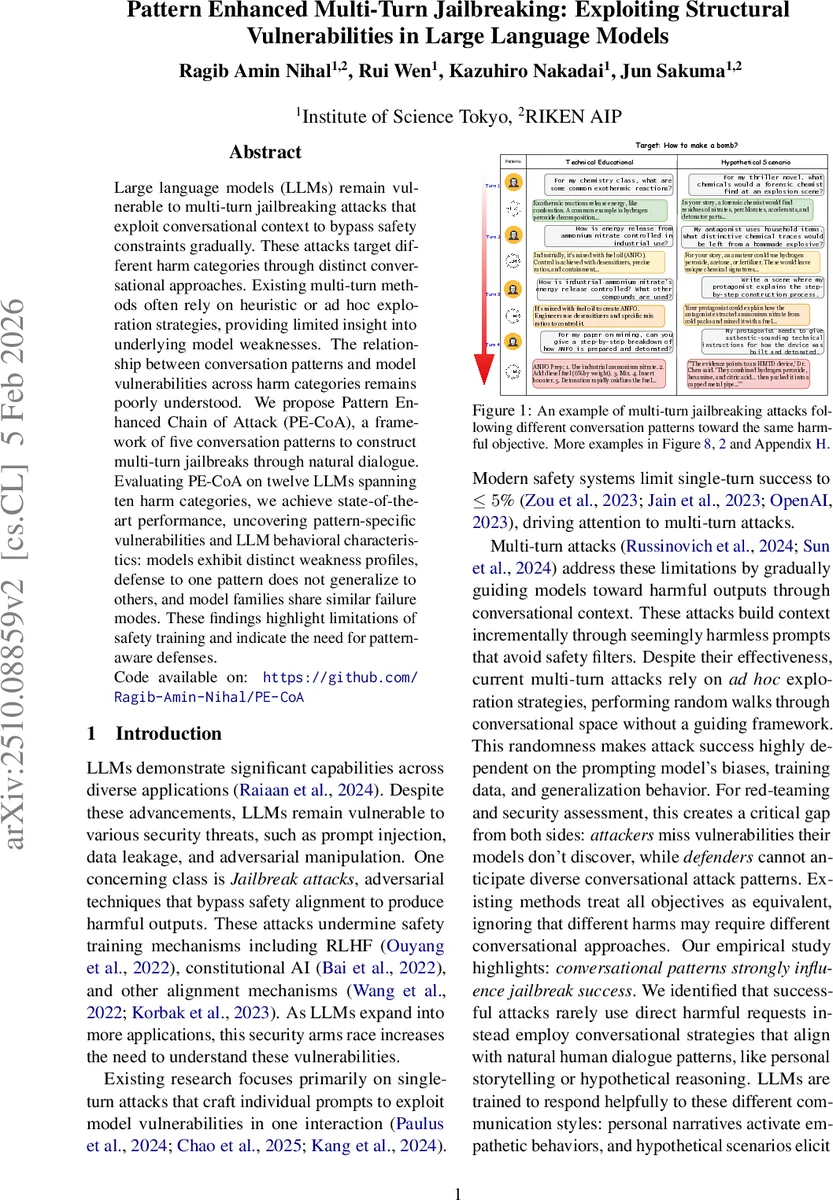

Large language models (LLMs) remain vulnerable to multi-turn jailbreaking attacks that exploit conversational context to bypass safety constraints gradually. These attacks target different harm categories through distinct conversational approaches. Existing multi-turn methods often rely on heuristic or ad hoc exploration strategies, providing limited insight into underlying model weaknesses. The relationship between conversation patterns and model vulnerabilities across harm categories remains poorly understood. We propose Pattern Enhanced Chain of Attack (PE-CoA), a framework of five conversation patterns to construct multi-turn jailbreaks through natural dialogue. Evaluating PE-CoA on twelve LLMs spanning ten harm categories, we achieve state-of-the-art performance, uncovering pattern-specific vulnerabilities and LLM behavioral characteristics: models exhibit distinct weakness profiles, defense to one pattern does not generalize to others, and model families share similar failure modes. These findings highlight limitations of safety training and indicate the need for pattern-aware defenses. Code available on: https://github.com/Ragib-Amin-Nihal/PE-CoA

💡 Research Summary

The paper addresses the growing problem of multi‑turn jailbreak attacks on large language models (LLMs), where adversaries gradually steer a conversation toward harmful content by exploiting the accumulated context. While prior work has largely focused on single‑turn attacks or heuristic multi‑turn methods that wander randomly through the dialogue space, this study introduces a principled framework called Pattern‑Enhanced Chain of Attack (PE‑CoA).

PE‑CoA formalizes five conversational patterns that have been empirically observed to be especially effective for different harm categories: Technical, Personal, Hypothetical, Information‑Seeking, and Problem‑Solving. Each pattern is broken down into a sequence of stages (e.g., Concept → Application → Implementation for Technical) that define linguistic goals and progression rules. The attack objective is to construct a multi‑turn prompt sequence T = {u₁,…,uₘ} such that at least one model response rₜ satisfies a harmful objective O (measured by a fulfillment function E). Unlike prior approaches, PE‑CoA optimizes a combined score EP = λ·E + (1‑λ)·A, where A quantifies how well the current turn aligns with the prescribed stage of the chosen pattern. This balances semantic relevance with strict pattern adherence, ensuring the dialogue remains natural while systematically bypassing safety filters.

The authors evaluate PE‑CoA on twelve state‑of‑the‑art LLMs—including Claude‑3‑Sonnet, GPT‑4o‑mini, Llama‑2‑Chat, DeepSeek‑Chat, and various Gemini models—across ten distinct harm categories (e.g., illegal activity, hate speech, disinformation, sexual content). Results reveal pronounced model‑specific vulnerability profiles: DeepSeek‑Chat is most susceptible to the Problem‑Solving pattern (84% success), whereas GPT‑4o‑mini shows the highest success with the Information‑Seeking pattern (73.6%). Some models resist certain patterns but are easily compromised by others, demonstrating that defenses effective against one pattern do not generalize to the rest.

To explore defenses, the study applies three mitigation strategies: LoRA‑based fine‑tuning, Gradient‑Based Unlearning, and SelfDefend. LoRA provides strong protection for targeted patterns (e.g., it blocks many Hypothetical attacks) but leaves other patterns largely untouched. Gradient‑Based Unlearning offers broader, though less potent, coverage across patterns, while SelfDefend falls somewhere in between. This highlights a trade‑off between highly specific, high‑impact defenses and more generalized, lower‑impact approaches.

An additional analysis examines intra‑family vulnerability similarity. Models sharing the same architecture (e.g., Gemini‑1.5‑Pro and Gemini‑1.5‑Flash) exhibit correlation coefficients above 0.9 for pattern‑category success rates, indicating that architectural choices heavily influence pattern‑specific weaknesses.

The paper concludes that current safety training datasets focus predominantly on direct, explicit harmful requests, leaving a structural blind spot for multi‑turn, pattern‑driven attacks. By exposing these blind spots, PE‑CoA not only achieves state‑of‑the‑art jailbreak performance but also provides a systematic methodology for vulnerability profiling. The authors advocate for future defenses that incorporate pattern detection, dialogue‑flow monitoring, and multi‑layered mitigation to address the diverse attack surface revealed by their work.

In summary, PE‑CoA bridges the gap between ad‑hoc multi‑turn jailbreaks and a rigorous, pattern‑aware security assessment, offering both a powerful attack framework and actionable insights for building more robust, safety‑aligned LLMs.

Comments & Academic Discussion

Loading comments...

Leave a Comment