DPMambaIR: All-in-One Image Restoration via Degradation-Aware Prompt State Space Model

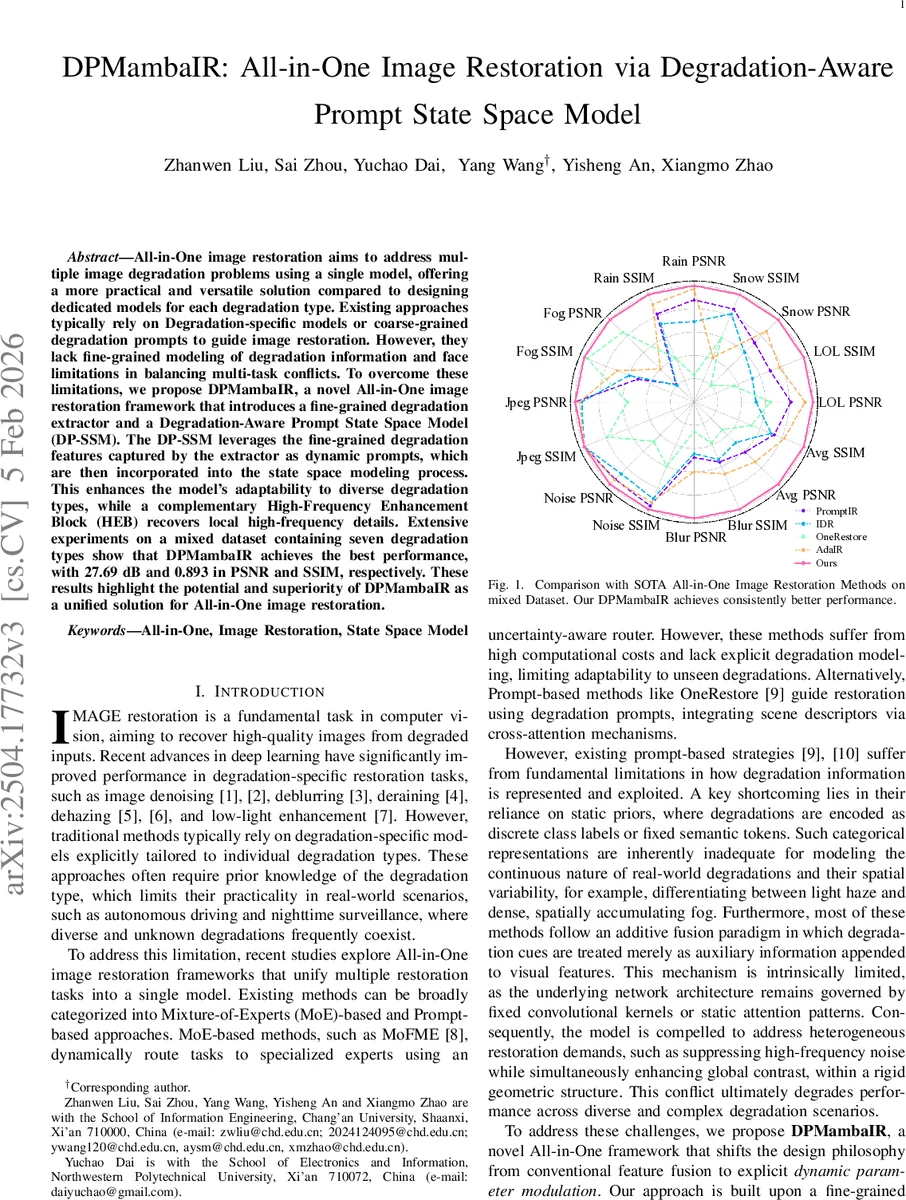

All-in-One image restoration aims to address multiple image degradation problems using a single model, offering a more practical and versatile solution compared to designing dedicated models for each degradation type. Existing approaches typically rely on Degradation-specific models or coarse-grained degradation prompts to guide image restoration. However, they lack fine-grained modeling of degradation information and face limitations in balancing multi-task conflicts. To overcome these limitations, we propose DPMambaIR, a novel All-in-One image restoration framework that introduces a fine-grained degradation extractor and a Degradation-Aware Prompt State Space Model (DP-SSM). The DP-SSM leverages the fine-grained degradation features captured by the extractor as dynamic prompts, which are then incorporated into the state space modeling process. This enhances the model’s adaptability to diverse degradation types, while a complementary High-Frequency Enhancement Block (HEB) recovers local high-frequency details. Extensive experiments on a mixed dataset containing seven degradation types show that DPMambaIR achieves the best performance, with 27.69dB and 0.893 in PSNR and SSIM, respectively. These results highlight the potential and superiority of DPMambaIR as a unified solution for All-in-One image restoration.

💡 Research Summary

The paper introduces DPMambaIR, a unified image restoration framework that can handle a wide variety of degradation types with a single model. The authors identify two fundamental shortcomings of existing All‑in‑One approaches: (1) coarse or discrete degradation representations that fail to capture continuous severity and spatial variability, and (2) static network parameters that merely concatenate degradation cues to visual features, limiting the ability to resolve conflicting restoration objectives such as noise suppression versus low‑light enhancement.

To address these issues, DPMambaIR consists of three main components. First, a fine‑grained degradation extractor is pre‑trained with a self‑supervised reconstruction loss. It regresses a 512‑dimensional continuous embedding from a degraded image, encoding both the type of degradation (noise, blur, compression, haze, snow, rain, low‑light) and its intensity. Second, the core of the model is the Degradation‑Aware Prompt State Space Model (DP‑SSM), built on the Vision‑Mamba architecture. Traditional state‑space models (SSM) use fixed matrices A, B, C and a discretization step Δ to evolve latent states. DP‑SSM dynamically modulates Δ, B, and C based on the degradation embedding. Adjusting Δ changes the integration step of the underlying ODE: small Δ yields high‑inertia dynamics suitable for smoothing high‑frequency noise, while large Δ provides high‑gain dynamics that amplify weak signals in low‑light conditions. Modulating B controls how strongly the external observation (the degraded image) drives state transitions, and C injects global degradation priors into the output mapping. This dynamic parameter modulation enables the network to adopt distinct continuous dynamics for each degradation scenario without needing separate expert branches.

Third, a lightweight High‑Frequency Enhancement Block (HEB) is added after the DP‑SSM stack to compensate for the tendency of multi‑task training to bias toward low‑frequency structures. HEB employs a compact convolutional branch with channel‑wise attention to restore fine textures and edges that may be lost during the global state‑space processing.

The overall architecture follows a U‑shaped encoder‑decoder design. A shallow 3×3 convolution extracts initial features, the encoder progressively downsamples while doubling channel width, and the decoder upsamples with skip connections. Multiple DP‑SSM blocks are interleaved, each receiving the same degradation embedding as a prompt. A final refinement stage adds the learned residual to the original degraded image, producing the restored output.

Experiments are conducted on a mixed dataset comprising seven degradation types (Gaussian noise, motion blur, JPEG compression, haze, snow, rain, and low‑light). DPMambaIR achieves an average PSNR of 27.69 dB and SSIM of 0.893, surpassing state‑of‑the‑art All‑in‑One methods such as MoFME, OneRestore, and AdaIR. Ablation studies show that removing the dynamic modulation of Δ, B, or C each degrades performance by 0.4–1.2 dB, confirming the importance of each component. The addition of HEB improves high‑frequency fidelity, especially noticeable in texture‑rich regions.

From a computational standpoint, the model retains the linear‑time complexity of Vision‑Mamba (O(N) with respect to image size) because the dynamic modulation is implemented via small MLPs that process the 512‑dimensional embedding. The full model contains roughly 45 M parameters (large version) and runs at about 30 FPS on a single 1080Ti GPU, making it suitable for real‑time applications.

The authors acknowledge limitations: the degradation extractor is trained on a predefined set of synthetic degradations, so performance on completely unseen real‑world corruptions remains to be tested; the current design focuses on 2‑D images, leaving video temporal consistency as future work; and extending DP‑SSM to other vision tasks (segmentation, detection) could further demonstrate its adaptability.

In summary, DPMambaIR advances All‑in‑One image restoration by (1) providing a continuous, fine‑grained degradation representation, (2) introducing a degradation‑aware dynamic state‑space module that tailors its evolution dynamics to the specific corruption, and (3) supplementing global modeling with a dedicated high‑frequency enhancement block. This combination yields superior quantitative results, efficient inference, and a flexible architecture that can be extended to broader multi‑task and multi‑modal vision scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment