Hidden in Plain Sight -- Class Competition Focuses Attribution Maps

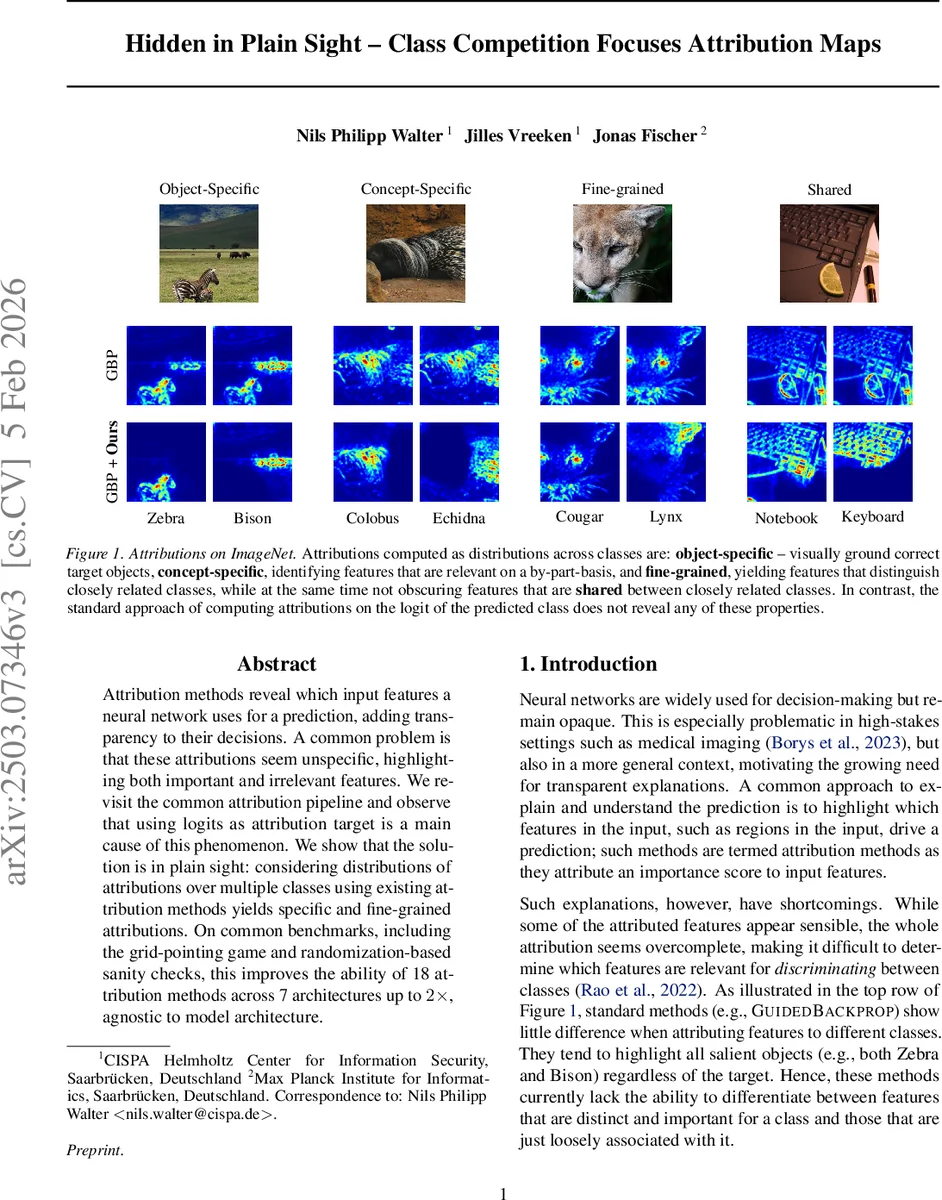

Attribution methods reveal which input features a neural network uses for a prediction, adding transparency to their decisions. A common problem is that these attributions seem unspecific, highlighting both important and irrelevant features. We revisit the common attribution pipeline and observe that using logits as attribution target is a main cause of this phenomenon. We show that the solution is in plain sight: considering distributions of attributions over multiple classes using existing attribution methods yields specific and fine-grained attributions. On common benchmarks, including the grid-pointing game and randomization-based sanity checks, this improves the ability of 18 attribution methods across 7 architectures up to 2x, agnostic to model architecture.

💡 Research Summary

Deep neural networks achieve remarkable performance across many domains, yet their decision processes remain opaque, especially in high‑stakes applications such as medical imaging. Post‑hoc attribution methods—techniques that assign importance scores to input features—are widely used to visualize what drives a model’s prediction. However, practitioners frequently observe that these visual explanations are overly broad, highlighting both relevant and irrelevant regions, which hampers their usefulness for fine‑grained analysis.

The authors identify the root cause of this problem: the conventional attribution pipeline computes explanations with respect to a single logit (typically the predicted class) and completely ignores the competitive information encoded in the soft‑max layer. The soft‑max transforms raw logits into a probability distribution by contrasting each class against all others. When a model is highly confident, the gradient of the soft‑max probability vanishes, causing gradient‑based attribution methods (e.g., Guided Backpropagation, Integrated Gradients) to produce diffuse, non‑specific maps.

To recover the discriminative signal, the paper introduces Attribution Lens (AL), a plug‑and‑play refinement that re‑introduces class competition directly into the attribution maps. The procedure consists of three steps:

-

Multi‑class attribution extraction – For a chosen subset of classes (C’) (e.g., the top‑k predicted classes, a predefined domain‑specific set, or the best‑vs‑worst pair), compute a per‑class attribution map (A_c) using any existing method (gradient‑based, CAM‑based, LRP, etc.). This step incurs a cost proportional to (|C’|) but otherwise uses the same back‑propagation machinery as the original method.

-

Spatial soft‑max normalization – At each pixel ((i,j)) apply a soft‑max across the class‑wise attributions: \

Comments & Academic Discussion

Loading comments...

Leave a Comment