Efficient Long-Document Reranking via Block-Level Embeddings and Top-k Interaction Refinement

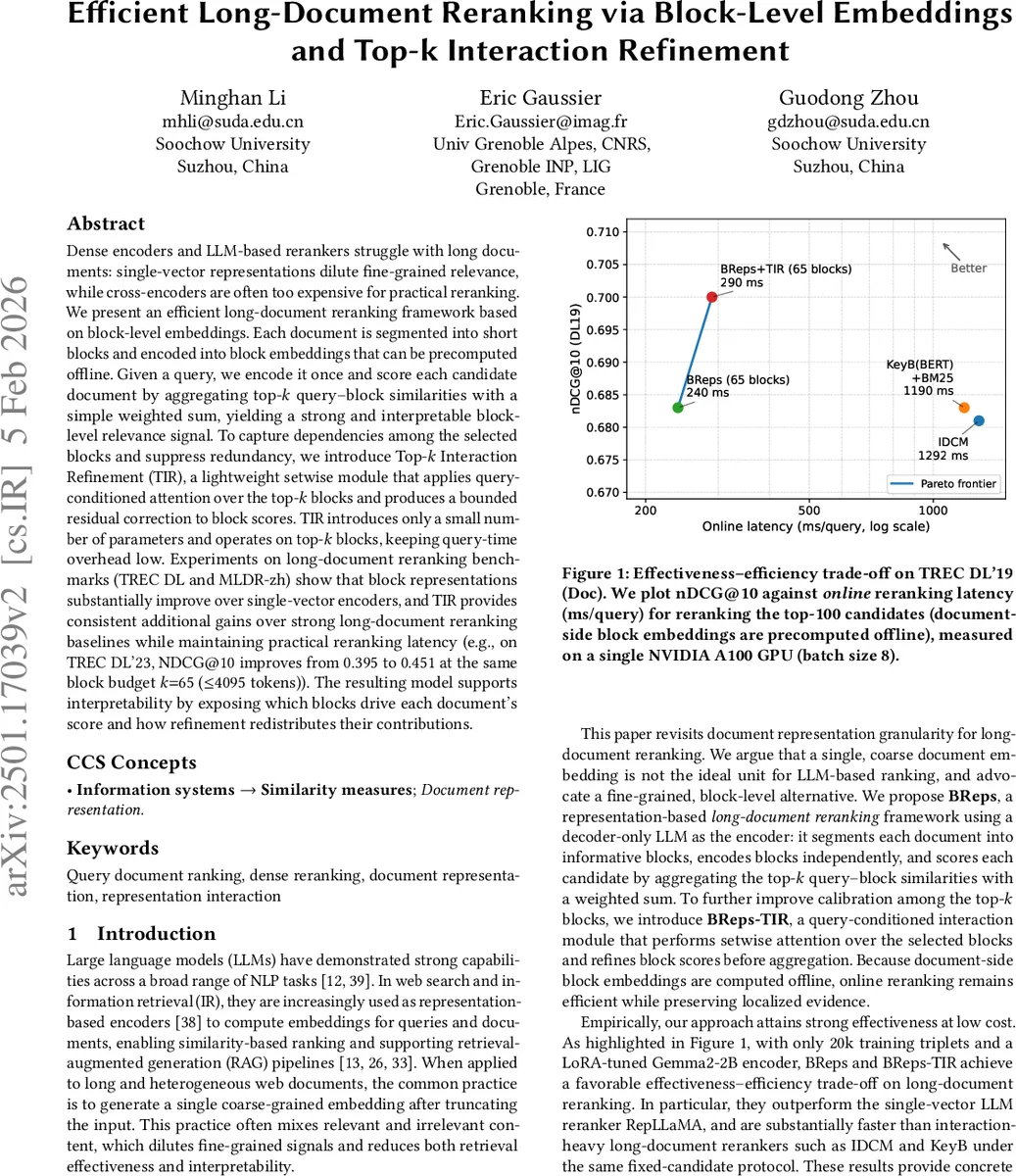

Dense encoders and LLM-based rerankers struggle with long documents: single-vector representations dilute fine-grained relevance, while cross-encoders are often too expensive for practical reranking. We present an efficient long-document reranking framework based on block-level embeddings. Each document is segmented into short blocks and encoded into block embeddings that can be precomputed offline. Given a query, we encode it once and score each candidate document by aggregating top-k query-block similarities with a simple weighted sum, yielding a strong and interpretable block-level relevance signal. To capture dependencies among the selected blocks and suppress redundancy, we introduce Top-k Interaction Refinement (TIR), a lightweight setwise module that applies query-conditioned attention over the top-k blocks and produces a bounded residual correction to block scores. TIR introduces only a small number of parameters and operates on top-k blocks, keeping query-time overhead low. Experiments on long-document reranking benchmarks (TREC DL and MLDR-zh) show that block representations substantially improve over single-vector encoders, and TIR provides consistent additional gains over strong long-document reranking baselines while maintaining practical reranking latency. For example, on TREC DL 2023, NDCG at 10 improves from 0.395 to 0.451 with the same block budget k = 65, using at most 4095 tokens. The resulting model supports interpretability by exposing which blocks drive each document’s score and how refinement redistributes their contributions.

💡 Research Summary

The paper tackles two fundamental challenges in long‑document reranking: (1) the loss of fine‑grained relevance when a whole document is compressed into a single dense vector, and (2) the prohibitive inference cost of cross‑encoders that jointly process query‑document pairs. The authors propose a two‑stage framework called BReps‑TIR (Block‑Level Representations with Top‑k Interaction Refinement) that combines efficient offline block embeddings with a lightweight online refinement module.

In the first stage, each document is segmented into short blocks (maximum 63 tokens) using the CogLTX dynamic‑programming algorithm, which prefers punctuation boundaries. A decoder‑only large language model (Gemma‑2‑B, LoRA‑tuned) encodes each block independently, producing a set of block embeddings that can be pre‑computed and stored in a standard vector index. At query time, the same LLM encodes the query once, yielding a query embedding V_q. Cosine similarity between V_q and each block embedding V_bj is computed, producing a relevance score for every block. The top‑k blocks (e.g., k = 65) are selected, and their scores are aggregated with a descending‑weight vector W (either fixed or learned) to obtain an initial document score S_{q,d}=∑{i=1}^k w_i·S{(i)}. This “BReps” component preserves localized evidence and offers interpretability because each block’s contribution is explicit.

The second stage addresses two shortcomings of simple top‑k aggregation: (i) redundancy when multiple blocks contain overlapping evidence, and (ii) missed complementarity when several moderate‑quality blocks together cover different query facets. The Top‑k Interaction Refinement (TIR) module operates only on the selected k blocks, keeping online overhead minimal. It first applies a single‑head query‑to‑block attention to compute attention weights α_i and a context vector c that summarizes query‑conditioned information across the set. Query, block, and context vectors are projected into a low‑dimensional space (dimension d ≪ H). A gated residual is then computed: each block’s original score s_i is passed through a small MLP to produce a gate G_i, added to a fused representation Z_i, and finally transformed by a linear head followed by tanh scaling (γ) to yield a bounded residual δ_i. The calibrated scores s′_i = s_i + δ_i replace the original scores in the weighted sum, producing the final document relevance score.

The authors evaluate BReps‑TIR on three TREC Deep Learning (DL) tracks (2021‑2023) and the Chinese MLDR‑zh benchmark. With the same block budget (k = 65, ≤ 4095 tokens), BReps‑TIR improves NDCG@10 from 0.395 to 0.451 on TREC DL 2023, outperforming the single‑vector RepLLaMA baseline and approaching the effectiveness of heavyweight cross‑encoders while keeping query‑time latency under 300 ms on an NVIDIA A100 (versus > 1 s for IDCM/KeyB). Ablation studies explore the impact of block count n, top‑k size k, weight learning, and TIR hyper‑parameters, confirming that modest values (k ≈ 40‑80, d ≈ 64) yield the best trade‑off between accuracy and speed.

Key contributions are: (1) a practical block‑level representation scheme that can be pre‑computed and indexed without altering existing vector‑store APIs; (2) a lightweight setwise interaction module (TIR) that refines block scores with query‑conditioned attention, effectively handling redundancy and complementarity; (3) extensive empirical evidence that BReps‑TIR consistently surpasses both dense single‑vector rerankers and more expensive interaction‑heavy baselines while maintaining low latency; and (4) thorough analysis of segmentation, aggregation, and efficiency, demonstrating the method’s suitability for real‑world IR systems where interpretability and serving cost matter.

In summary, BReps‑TIR offers a compelling solution for long‑document reranking: it preserves fine‑grained relevance through block embeddings, refines scores via a cheap yet expressive interaction layer, and delivers a favorable accuracy‑efficiency balance that makes it ready for deployment in large‑scale search engines and specialized domains such as legal or scholarly retrieval.

Comments & Academic Discussion

Loading comments...

Leave a Comment