Image inpainting for corrupted images by using the semi-super resolution GAN

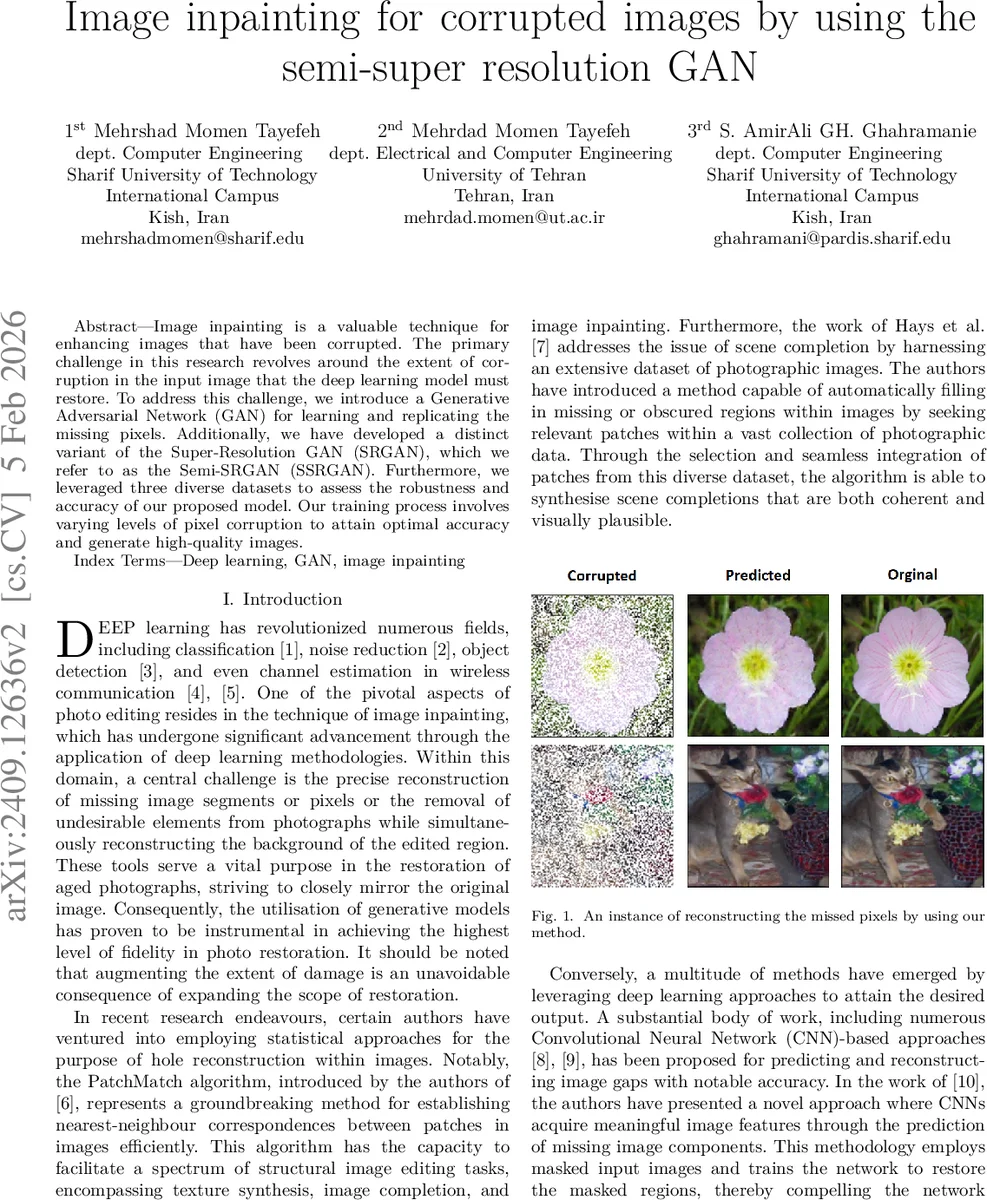

Image inpainting is a valuable technique for enhancing images that have been corrupted. The primary challenge in this research revolves around the extent of corruption in the input image that the deep learning model must restore. To address this challenge, we introduce a Generative Adversarial Network (GAN) for learning and replicating the missing pixels. Additionally, we have developed a distinct variant of the Super-Resolution GAN (SRGAN), which we refer to as the Semi-SRGAN (SSRGAN). Furthermore, we leveraged three diverse datasets to assess the robustness and accuracy of our proposed model. Our training process involves varying levels of pixel corruption to attain optimal accuracy and generate high-quality images.

💡 Research Summary

The paper addresses the problem of restoring corrupted images by proposing a lightweight variant of the Super‑Resolution Generative Adversarial Network (SRGAN), termed Semi‑Super‑Resolution GAN (SSR‑GAN). The authors observe that while deep‑learning‑based inpainting methods have surpassed classical statistical approaches, their performance degrades sharply when the proportion of missing pixels becomes large. To mitigate this, they introduce two main innovations. First, they simulate image damage by randomly selecting a uniform set of pixels and corrupting them at various rates ranging from 30 % to 80 %. This creates a training regime that forces the network to learn robust reconstruction across a spectrum of degradation levels. Second, they simplify the original SRGAN architecture and loss formulation. The generator consists of an initial 9 × 9 convolution, followed by six residual blocks (each with 3 × 3 kernels, stride 1, and skip connections), and ends with a final convolution (9 × 9, stride 4). A PixelShuffle layer with an upscale factor of 2 is employed to achieve higher resolution. The discriminator is reduced to four blocks, each doubling the channel count from 64 up to 512, and finishes with a transposed convolution (kernel 4, stride 4).

Instead of the computationally heavy VGG‑19 perceptual loss used in SRGAN, the authors adopt a plain Mean‑Squared‑Error (MSE) loss for both generator and discriminator. The discriminator loss is the sum of MSE on real and fake samples, while the generator loss combines the MSE between the reconstructed image and the ground‑truth with a small weighted MSE term (10⁻³) encouraging the discriminator to label the generated output as real.

Experiments are conducted on three publicly available datasets: Oxford‑IIIT‑Pet, Caltech‑101, and Flowers‑102. All images are resized to 128 × 128 to keep memory requirements modest. Training runs on an NVIDIA V100 GPU for 100 epochs, batch size 64, using the Adam optimizer (β₁ = 0.9, β₂ = 0.999) with an initial learning rate of 2 × 10⁻⁴, halved every 25 epochs. Performance is evaluated with Normalized Mean Squared Error (NMSE) and Peak Signal‑to‑Noise Ratio (PSNR).

Results show the expected trend: higher corruption rates lead to larger NMSE and lower PSNR. Nevertheless, SSR‑GAN achieves respectable numbers even at 50 % pixel loss: Caltech‑101 yields NMSE = 0.0077 and PSNR = 21.14 dB; Oxford‑IIIT‑Pet gives NMSE = 0.0092 and PSNR = 20.36 dB; Flowers‑102 records NMSE = 0.0159 and PSNR = 17.99 dB. Training curves reveal rapid NMSE reduction in early epochs, followed by slower convergence, with higher corruption levels requiring more epochs to stabilize.

The authors argue that SSR‑GAN offers a favorable trade‑off: reduced parameter count and simplified loss while maintaining competitive reconstruction quality for moderate levels of damage. They acknowledge several limitations: (1) the low resolution (128 × 128) may not reflect performance on real‑world high‑resolution photographs; (2) evaluation relies solely on NMSE and PSNR, omitting perceptual metrics such as SSIM or LPIPS; (3) detailed architectural hyper‑parameters are not tabulated, which hampers reproducibility.

In conclusion, the study demonstrates that a streamlined GAN architecture can effectively inpaint images with substantial missing data, providing a practical solution when computational resources are constrained. Future work should explore higher‑resolution scenarios, irregular masks, perceptual quality assessments, and comparisons with other lightweight GAN variants to further validate and extend the proposed approach.

Comments & Academic Discussion

Loading comments...

Leave a Comment