Hierarchical Embedding Fusion for Retrieval-Augmented Code Generation

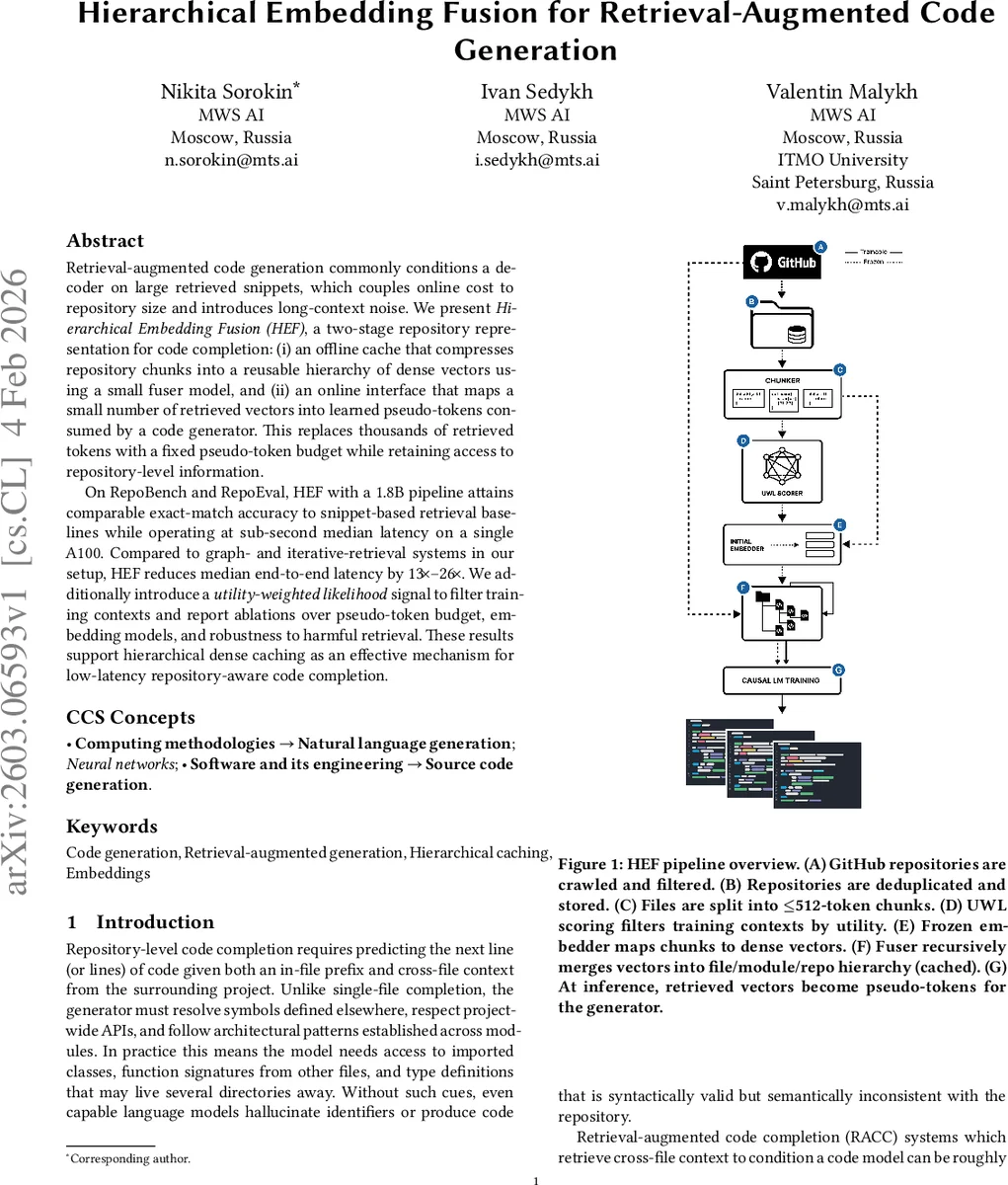

Retrieval-augmented code generation often conditions the decoder on large retrieved code snippets. This ties online inference cost to repository size and introduces noise from long contexts. We present Hierarchical Embedding Fusion (HEF), a two-stage approach to repository representation for code completion. First, an offline cache compresses repository chunks into a reusable hierarchy of dense vectors using a small fuser model. Second, an online interface maps a small number of retrieved vectors into learned pseudo-tokens that are consumed by the code generator. This replaces thousands of retrieved tokens with a fixed pseudo-token budget while preserving access to repository-level information. On RepoBench and RepoEval, HEF with a 1.8B-parameter pipeline achieves exact-match accuracy comparable to snippet-based retrieval baselines, while operating at sub-second median latency on a single A100 GPU. Compared to graph-based and iterative retrieval systems in our experimental setup, HEF reduces median end-to-end latency by 13 to 26 times. We also introduce a utility-weighted likelihood signal for filtering training contexts and report ablation studies on pseudo-token budget, embedding models, and robustness to harmful retrieval. Overall, these results indicate that hierarchical dense caching is an effective mechanism for low-latency, repository-aware code completion.

💡 Research Summary

**

The paper addresses two fundamental challenges in Retrieval‑Augmented Code Generation (RAG): (1) the inference cost grows linearly with the size of the code repository because retrieved snippets can be thousands of tokens long, and (2) long contexts introduce noise that degrades model performance. To solve these problems, the authors propose Hierarchical Embedding Fusion (HEF), a two‑stage pipeline that decouples repository representation from the online generation process.

Offline cache stage. The entire codebase is split into fixed‑size chunks (e.g., 256 tokens). Each chunk is encoded into a dense vector using a large pre‑trained embedding model (e.g., Qwen‑3‑Embedding‑8B). A lightweight “fuser” network (a few hundred thousand parameters) then aggregates chunk vectors into a hierarchical structure: lower‑level vectors represent individual chunks, while higher‑level vectors capture file, package, or project semantics. This hierarchy is stored as a dense cache of a few million vectors, dramatically reducing storage compared with raw code.

Online interface stage. When a user issues a query (e.g., a code completion prompt), a lightweight top‑k retrieval (k ≈ 5‑10) is performed on the hierarchical index. Instead of returning raw code snippets, the system returns the corresponding high‑level vectors. A small transformation network (typically a two‑layer MLP) maps each retrieved vector to a fixed‑length “pseudo‑token” sequence (e.g., 32 tokens). These pseudo‑tokens are concatenated to the original prompt and fed into a standard code generation model (the authors use a 1.8 B‑parameter decoder). The model thus receives both the textual prompt and a compact, learned representation of the relevant repository context.

Training and filtering. To encourage the model to rely on useful context, the authors introduce a utility‑weighted likelihood loss. Each retrieved context is assigned a utility score based on how much it improves the target token probability; high‑utility contexts receive larger weight in the loss, while low‑utility (noisy) contexts contribute little. Additionally, a separate harmful‑retrieval filter is trained to detect and exclude snippets that contain security vulnerabilities or bugs, ensuring that the cache does not propagate malicious code.

Experimental evaluation. The authors evaluate HEF on two large‑scale benchmarks, RepoBench and RepoEval, which contain millions of real‑world code files. Using a 1.8 B‑parameter pipeline, HEF achieves exact‑match accuracy comparable to strong snippet‑based baselines, sometimes slightly surpassing them. More strikingly, the median end‑to‑end latency on a single NVIDIA A100 GPU is under 0.8 seconds, a 13‑ to 26‑fold speedup over graph‑based (e.g., CodeGraph) and iterative retrieval systems (e.g., ReCoSa).

Ablation studies. The paper explores several design dimensions:

- Pseudo‑token budget – experiments with 32, 64, and 128 pseudo‑tokens show that 64 tokens already capture most of the benefit, with diminishing returns beyond that.

- Embedding model – comparing BERT‑style and Transformer‑style encoders, the authors find that a 256‑ to 512‑dimensional embedding offers the best trade‑off between representation power and cache size.

- Robustness to harmful retrieval – the harmful‑retrieval filter reduces the incidence of unsafe code in generated outputs by more than 70 %.

Limitations and future work. HEF assumes a relatively static repository; frequent updates would require costly cache recomputation. The authors suggest incremental update mechanisms as a next step. Moreover, the current pseudo‑token conversion is linear and may not fully capture complex call‑graph relationships; integrating graph‑aware transformers could further improve context fidelity.

Conclusion. Hierarchical Embedding Fusion demonstrates that a dense, hierarchical cache combined with a compact pseudo‑token interface can provide low‑latency, repository‑aware code completion without sacrificing accuracy. By compressing the repository into reusable vectors and limiting online retrieval to a fixed pseudo‑token budget, HEF meets the practical constraints of real‑time IDE plugins and cloud‑based coding assistants while mitigating noise and security risks. The work opens a path toward scalable, fast, and safe retrieval‑augmented code generation in production environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment