ARGaze: Autoregressive Transformers for Online Egocentric Gaze Estimation

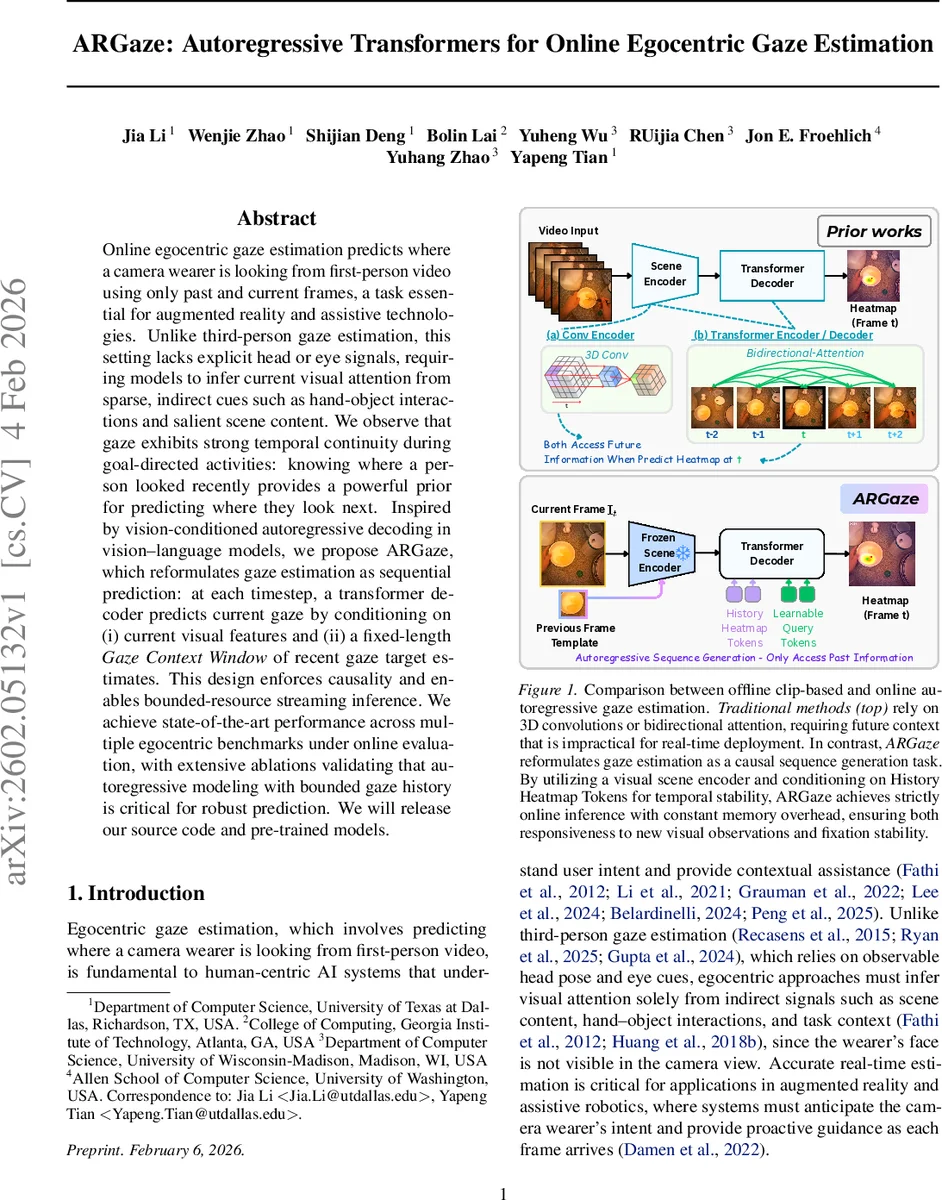

Online egocentric gaze estimation predicts where a camera wearer is looking from first-person video using only past and current frames, a task essential for augmented reality and assistive technologies. Unlike third-person gaze estimation, this setting lacks explicit head or eye signals, requiring models to infer current visual attention from sparse, indirect cues such as hand-object interactions and salient scene content. We observe that gaze exhibits strong temporal continuity during goal-directed activities: knowing where a person looked recently provides a powerful prior for predicting where they look next. Inspired by vision-conditioned autoregressive decoding in vision-language models, we propose ARGaze, which reformulates gaze estimation as sequential prediction: at each timestep, a transformer decoder predicts current gaze by conditioning on (i) current visual features and (ii) a fixed-length Gaze Context Window of recent gaze target estimates. This design enforces causality and enables bounded-resource streaming inference. We achieve state-of-the-art performance across multiple egocentric benchmarks under online evaluation, with extensive ablations validating that autoregressive modeling with bounded gaze history is critical for robust prediction. We will release our source code and pre-trained models.

💡 Research Summary

ARGaze tackles the problem of online egocentric gaze estimation, where a wearable camera must predict the wearer’s point of regard in real time using only past and current video frames. The authors observe that during goal‑directed activities human gaze exhibits strong temporal continuity, making recent fixations a powerful prior for the next gaze location. Leveraging this insight, they reformulate gaze prediction as a causal autoregressive sequence generation task: at each timestep t the model predicts a gaze heatmap Hₜ conditioned on the current visual frame Iₜ and a bounded window of the K most recent gaze heatmaps Hₜ₋ₖ … Hₜ₋₁.

The architecture consists of five main components. A Scene Encoder (a frozen DINOv3 backbone) extracts multi‑scale visual tokens from the current frame and from “tracking‑aware templates” – high‑resolution crops centered on previously predicted gaze points – providing both global scene context and localized visual priors. An Autoregressive Heatmap Tokenizer converts each past heatmap into a sequence of tokens via down‑sampling and a 1×1 convolution, then adds temporal and token‑type embeddings so the model can distinguish time steps and token roles. These history tokens are concatenated with learnable query tokens and fed into a Causal Transformer Decoder. The decoder applies masked self‑attention (ensuring strict causality) and cross‑attention over the visual tokens, integrating past fixation patterns with current visual information while keeping memory usage O(K). Finally, a Spatial Reconstruction Head reshapes the decoder output and upsamples it with transposed convolutions to produce the 2D gaze heatmap for the current frame.

Training optimizes a combination of L₂ loss on the heatmap, KL‑divergence to match predicted and ground‑truth probability distributions, and a total‑variation regularizer for temporal smoothness. Data augmentation includes random crops, color jitter, and synthetic noise injected into the gaze history to improve robustness.

Experiments were conducted on three public egocentric gaze datasets (EPIC‑KITCHENS, GTEA‑Gaze, EGTEA‑Gaze) using an online evaluation protocol that forbids future frames. ARGaze achieves state‑of‑the‑art performance, improving average F1‑score by 1.40 % and AUC by 1.2 % over the previous best methods, while delivering a 1.82× speedup in inference time. Notably, the model remains stable under challenging conditions such as rapid hand‑object interactions or abrupt ego‑motion, where prior clip‑based or bidirectional models tend to produce erratic predictions.

Ablation studies confirm the importance of each design choice: removing the gaze history tokens degrades performance by more than 7 %; reducing the history window to a single frame eliminates the continuity benefit; omitting the tracking‑aware templates harms accuracy during fast camera movements by about 4 %; and replacing causal masking with bidirectional attention breaks the real‑time constraint.

In summary, the paper makes four key contributions: (1) reframing egocentric gaze estimation as a causal autoregressive sequence problem; (2) introducing a bounded‑length, tokenized gaze history that provides explicit temporal priors while keeping memory constant; (3) designing a transformer‑based decoder that fuses past fixations with current visual context for fast, accurate online inference; and (4) demonstrating superior performance and robustness across multiple benchmarks and real‑world scenarios. Future work will explore multimodal extensions (audio, hand pose) and lightweight backbones for deployment on wearable AR devices.

Comments & Academic Discussion

Loading comments...

Leave a Comment