Audio ControlNet for Fine-Grained Audio Generation and Editing

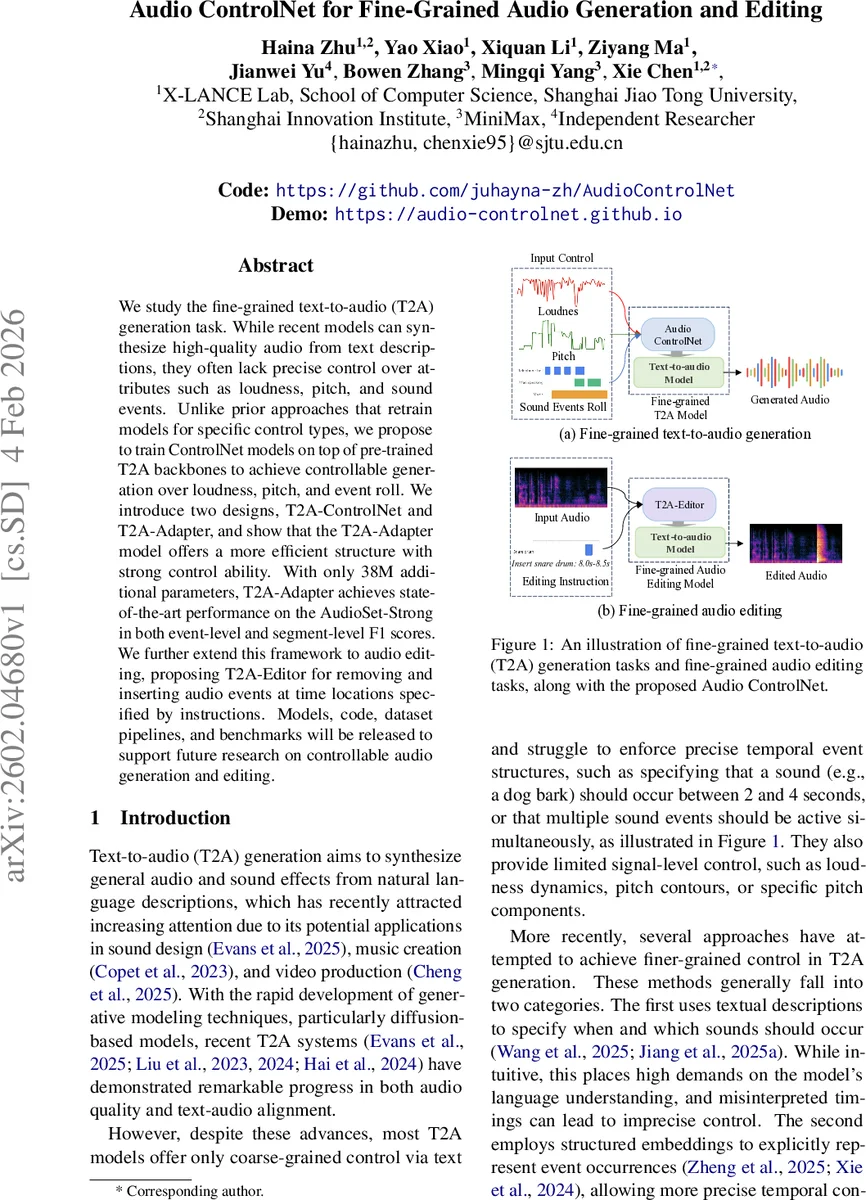

We study the fine-grained text-to-audio (T2A) generation task. While recent models can synthesize high-quality audio from text descriptions, they often lack precise control over attributes such as loudness, pitch, and sound events. Unlike prior approaches that retrain models for specific control types, we propose to train ControlNet models on top of pre-trained T2A backbones to achieve controllable generation over loudness, pitch, and event roll. We introduce two designs, T2A-ControlNet and T2A-Adapter, and show that the T2A-Adapter model offers a more efficient structure with strong control ability. With only 38M additional parameters, T2A-Adapter achieves state-of-the-art performance on the AudioSet-Strong in both event-level and segment-level F1 scores. We further extend this framework to audio editing, proposing T2A-Editor for removing and inserting audio events at time locations specified by instructions. Models, code, dataset pipelines, and benchmarks will be released to support future research on controllable audio generation and editing.

💡 Research Summary

The paper addresses the problem of fine‑grained control in text‑to‑audio (T2A) generation, where existing models can produce high‑quality audio from natural language but lack precise manipulation of attributes such as loudness dynamics, pitch contours, and exact timing of sound events. Rather than retraining large diffusion‑based T2A backbones for each control type, the authors propose a lightweight auxiliary network—Audio ControlNet—that sits on top of a frozen, pre‑trained T2A model (specifically FluxAudio, a hybrid MMDiT + DiT diffusion model).

Two variants of Audio ControlNet are introduced:

-

T2A‑ControlNet – a copy‑net architecture that replicates the first l layers of the backbone and injects residual control features directly into each corresponding layer. This design mirrors the original ControlNet used in text‑to‑image synthesis but is adapted to handle the hybrid MMDiT/DiT structure of FluxAudio.

-

T2A‑Adapter – a more parameter‑efficient design that employs a lightweight encoder (1‑D convolutions with SiLU activations) to extract features from the control signals. The encoder’s outputs are fed as keys and values into cross‑attention modules that operate on the latent representations of the first l layers of FluxAudio. Zero‑convolution layers are used to align dimensions before addition.

Both models keep the backbone weights frozen; only the auxiliary network is trained, dramatically reducing training cost while preserving the generative capacity of the original model.

Control Signals

The authors unify all control inputs as temporal sequences aligned with the audio timeline:

-

Loudness – computed as frame‑level RMS energy, converted to decibels, and smoothed with a Savitzky‑Golay filter. The resulting 1‑D curve is broadcast across the feature dimension.

-

Pitch – extracted as the continuous fundamental frequency (F0), transformed to log‑frequency, then processed with a continuous wavelet transform (CWT) to capture multi‑scale variations. The CWT coefficients are quantized into discrete bins and embedded via a learnable codebook.

-

Sound‑Event Roll – represented as an event roll matrix where each row corresponds to a sound‑event class and columns to time steps. For each active event, a semantic embedding of its textual label is obtained from the CLAP text encoder; inactive timesteps receive a zero vector. A shared linear projection aggregates overlapping events into a fixed‑dimensional vector per timestep.

These three modalities are concatenated (or summed after projection) to form a unified control tensor that is fed into either T2A‑ControlNet or T2A‑Adapter.

Audio Editing Extension

Beyond generation, the framework is extended to editing with T2A‑Editor. This model adapts the T2A‑Adapter architecture to accept (i) a reference audio clip (turning the system into an audio‑to‑audio model) and (ii) editing instructions such as “insert clapping from 2.0 s to 2.5 s” or “remove speech from 5.0 s to 8.0 s”. An additional lightweight conditioning network processes these inputs and injects them via cross‑attention, enabling precise insertion or removal of events at specified timestamps without altering the core backbone.

Experiments

The authors evaluate on the AudioSet‑Strong benchmark, which provides strong temporal annotations for sound events. Results show:

- T2A‑Adapter achieves state‑of‑the‑art event‑level F1 = 54.36 and segment‑level F1 = 68.26, surpassing prior methods that require full model retraining.

- Only 38 M extra parameters are introduced, a modest overhead compared to the backbone’s size.

- Subjective listening tests confirm that the controlled outputs are perceived as more faithful to the intended loudness, pitch, and event timing than baselines (e.g., Wang et al., 2025; Hai et al., 2024).

- For editing, T2A‑Editor outperforms AUDIT and Recomposer in both objective insertion/removal accuracy and human preference scores, while retaining the high audio quality of the underlying diffusion model.

Key Insights

- Parameter Efficiency – Freezing the backbone and training only a small auxiliary network yields strong controllability with minimal computational cost.

- Unified Temporal Conditioning – Representing all control modalities as aligned time‑series simplifies the architecture and makes it extensible to future control types (e.g., timbre, spatialization).

- Cross‑Attention as a Control Injection Mechanism – Using cross‑attention allows the model to selectively attend to control information at each diffusion step, leading to more precise manipulation than simple concatenation.

- Scalability to Editing – The same ControlNet paradigm can be repurposed for audio‑to‑audio editing, demonstrating the flexibility of the approach.

Conclusion

The paper introduces a versatile, lightweight framework for fine‑grained control and editing in text‑to‑audio generation. By leveraging a frozen diffusion backbone and adding a small, purpose‑built ControlNet (either residual‑based or adapter‑based), the authors achieve state‑of‑the‑art performance on a challenging benchmark while keeping the parameter budget low. The unified temporal conditioning and the successful extension to editing tasks suggest that Audio ControlNet could become a foundational component for future audio synthesis, sound design, and multimedia production pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment