Understanding Degradation with Vision Language Model

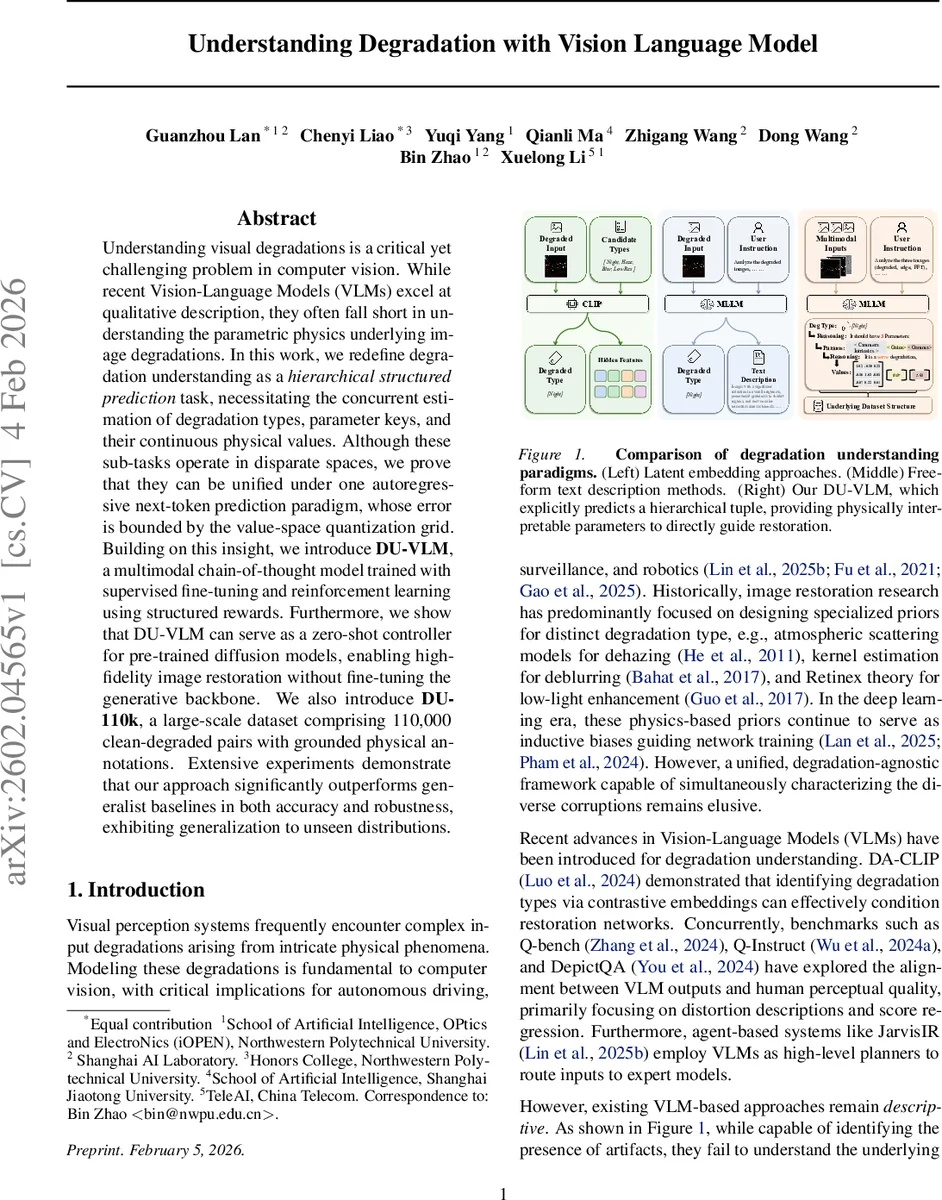

Understanding visual degradations is a critical yet challenging problem in computer vision. While recent Vision-Language Models (VLMs) excel at qualitative description, they often fall short in understanding the parametric physics underlying image degradations. In this work, we redefine degradation understanding as a hierarchical structured prediction task, necessitating the concurrent estimation of degradation types, parameter keys, and their continuous physical values. Although these sub-tasks operate in disparate spaces, we prove that they can be unified under one autoregressive next-token prediction paradigm, whose error is bounded by the value-space quantization grid. Building on this insight, we introduce DU-VLM, a multimodal chain-of-thought model trained with supervised fine-tuning and reinforcement learning using structured rewards. Furthermore, we show that DU-VLM can serve as a zero-shot controller for pre-trained diffusion models, enabling high-fidelity image restoration without fine-tuning the generative backbone. We also introduce \textbf{DU-110k}, a large-scale dataset comprising 110,000 clean-degraded pairs with grounded physical annotations. Extensive experiments demonstrate that our approach significantly outperforms generalist baselines in both accuracy and robustness, exhibiting generalization to unseen distributions.

💡 Research Summary

The paper introduces DU‑VLM, a vision‑language model designed to understand image degradations at the level of physical parameters and to use this understanding to control image restoration without fine‑tuning the generative backbone. Existing VLM‑based approaches can identify degradation types or provide free‑form descriptions, but they lack quantitative insight into the underlying physics (e.g., atmospheric scattering coefficients, gamma correction values). To bridge this gap, the authors reformulate degradation understanding as a hierarchical structured prediction problem consisting of three levels: degradation type (t), parameter keys (k) specific to the type, and continuous parameter values (v). They prove that predicting this hierarchy can be unified under a single autoregressive next‑token prediction objective, with error bounded by the quantization grid Δ.

A new large‑scale dataset, DU‑110k, is constructed to train and evaluate the model. Clean images from diverse sources (CelebA, BDD100K, NuScenes) are degraded using physically grounded models for haze, low‑light, blur, and low‑resolution. For each degraded‑clean pair, the exact tuple (t, k, v) is recorded. A human‑in‑the‑loop verification step removes unrealistic samples, resulting in 110 k verified triplets evenly distributed across the four degradation categories.

The model architecture builds on Qwen‑3‑VL‑8B, extending it with multimodal inputs: the degraded image, its FFT amplitude spectrum, and a Sobel edge map. Training proceeds in three stages. First, supervised fine‑tuning (SFT) teaches the model to generate a textual chain‑of‑thought (CoT) rationale describing degradation severity, then to emit the hierarchical token sequence

Comments & Academic Discussion

Loading comments...

Leave a Comment