Generative AI in Systems Engineering: A Framework for Risk Assessment of Large Language Models

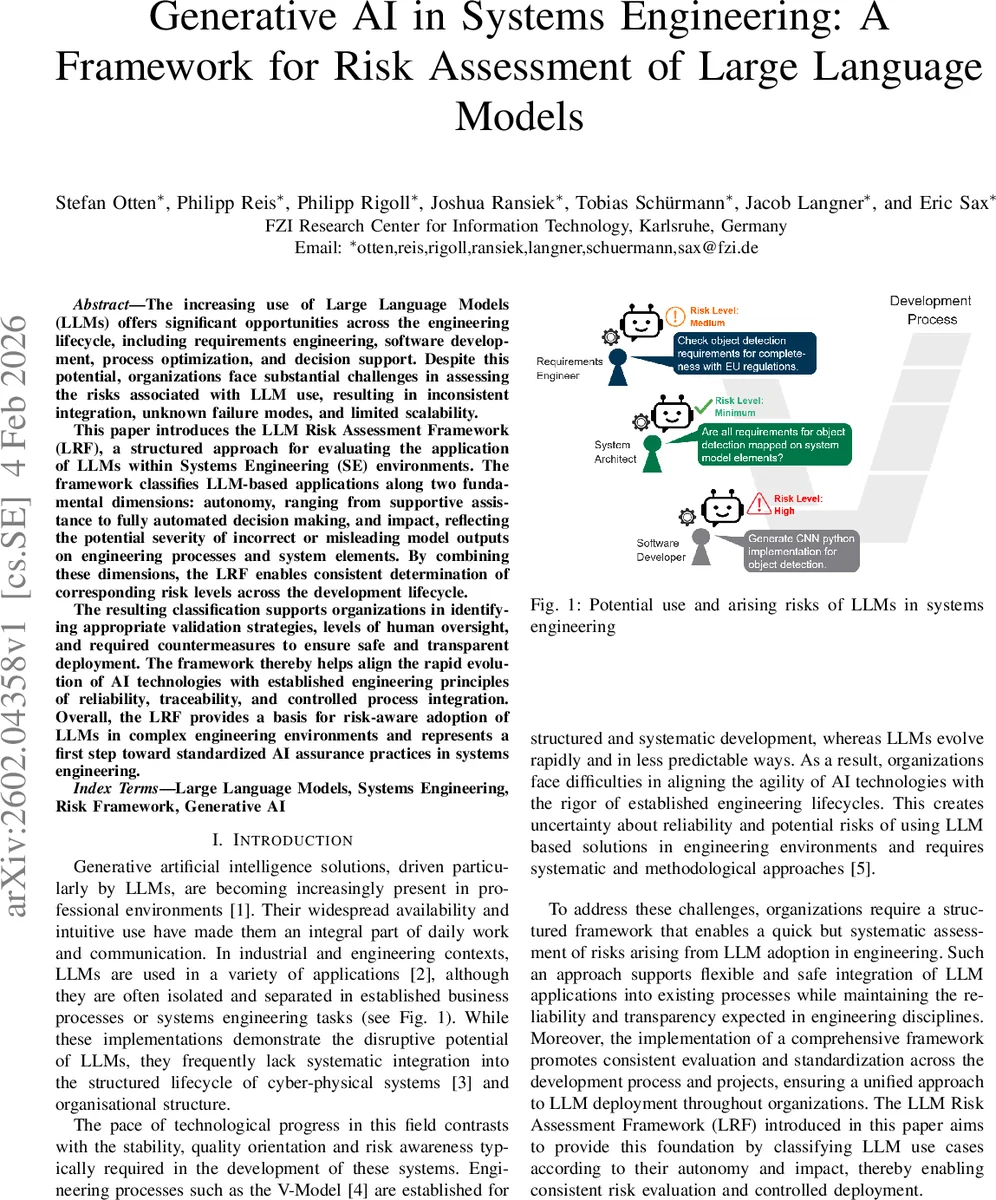

The increasing use of Large Language Models (LLMs) offers significant opportunities across the engineering lifecycle, including requirements engineering, software development, process optimization, and decision support. Despite this potential, organizations face substantial challenges in assessing the risks associated with LLM use, resulting in inconsistent integration, unknown failure modes, and limited scalability. This paper introduces the LLM Risk Assessment Framework (LRF), a structured approach for evaluating the application of LLMs within Systems Engineering (SE) environments. The framework classifies LLM-based applications along two fundamental dimensions: autonomy, ranging from supportive assistance to fully automated decision making, and impact, reflecting the potential severity of incorrect or misleading model outputs on engineering processes and system elements. By combining these dimensions, the LRF enables consistent determination of corresponding risk levels across the development lifecycle. The resulting classification supports organizations in identifying appropriate validation strategies, levels of human oversight, and required countermeasures to ensure safe and transparent deployment. The framework thereby helps align the rapid evolution of AI technologies with established engineering principles of reliability, traceability, and controlled process integration. Overall, the LRF provides a basis for risk-aware adoption of LLMs in complex engineering environments and represents a first step toward standardized AI assurance practices in systems engineering.

💡 Research Summary

The paper addresses the growing integration of large language models (LLMs) into the full lifecycle of systems engineering and the lack of systematic methods to assess the associated risks. After outlining the opportunities LLMs bring to requirements engineering, design, verification, validation, and release—thanks to their natural‑language processing, reasoning, and content‑generation capabilities—the authors highlight a mismatch between the rapid evolution of AI and the stability‑oriented, rigor‑driven processes (e.g., V‑Model) traditionally used in cyber‑physical system development.

A review of related work shows that while many studies discuss LLM reliability, hallucinations, bias, and the need for better integration into engineering workflows, none provide a unified risk‑assessment taxonomy that simultaneously considers the degree of model autonomy and the potential impact on system outcomes. To fill this gap, the authors propose the LLM Risk Assessment Framework (LRF). The framework is built on two orthogonal dimensions:

-

Autonomy Level – inspired by SAE driving automation levels, it defines four stages:

- 0 – Assisted: the LLM only supplies information; the human makes all decisions.

- 1 – Guided: the LLM proposes actions or recommendations that must be explicitly approved by the user.

- 2 – Supervised: the LLM executes defined tasks (e.g., generating requirement sets, test cases) while a human monitors and can intervene.

- 3 – Fully Automated: the LLM operates without any human oversight, directly producing artifacts that are consumed downstream.

-

Impact Level – categorizes the severity of consequences if the LLM’s output is incorrect, misleading, or misaligned:

- Low – errors have minimal or reversible effects on performance, safety, or compliance.

- Medium – mistakes can propagate to later stages, affecting cost, schedule, or quality.

- High – the LLM directly influences design, behavior, or handling of safety‑critical or regulated data; failures may jeopardize safety, reliability, or regulatory compliance.

By mapping a specific use case onto the 4 × 3 matrix, the LRF yields a concrete risk rating and prescribes a set of mitigation measures. The authors provide a detailed matrix that links each of the twelve cells to recommended validation strategies (e.g., simulation, formal verification, test‑case coverage), human‑oversight regimes (real‑time monitoring, periodic audits), and safety‑oriented controls (rollback mechanisms, human‑approval gates).

The paper illustrates the framework with concrete examples:

- Guided‑Medium – an LLM that suggests design alternatives during concept development; validation relies on human review and targeted test cases.

- Supervised‑High – an LLM automatically drafting detailed design documentation; requires automated traceability, formal checks, and continuous monitoring.

- Fully Automated‑Low – an LLM generating release notes; a lightweight validation pipeline and post‑generation human sign‑off suffice.

Technical challenges such as limited context windows, prompt brittleness, hallucinations, and lack of explainability are acknowledged. The authors suggest complementary techniques—Retrieval‑Augmented Generation (RAG) to extend context, prompt‑standardization guidelines, and metadata logging to improve traceability. Human‑centric concerns (over‑reliance, loss of domain expertise, difficulty distinguishing AI‑generated content) are addressed through governance recommendations: organization‑wide policies, role‑based responsibilities, data‑quality controls, and continuous training.

Implementation steps for an organization are outlined: (1) define LLM use objectives and scope; (2) assess each use case against the autonomy‑impact matrix; (3) design a validation plan matched to the derived risk level; (4) establish monitoring and escalation procedures; and (5) iterate based on performance metrics and incident analyses.

In conclusion, the LRF provides a structured, domain‑agnostic method to evaluate LLM‑related risks in systems engineering, aligning AI agility with the discipline’s core principles of reliability, traceability, and controlled integration. The authors view the framework as a first step toward standardized AI assurance practices and propose future work on domain‑specific risk extensions, automated risk‑rating tools, and alignment with emerging international standards.

Comments & Academic Discussion

Loading comments...

Leave a Comment