Data Agents: Levels, State of the Art, and Open Problems

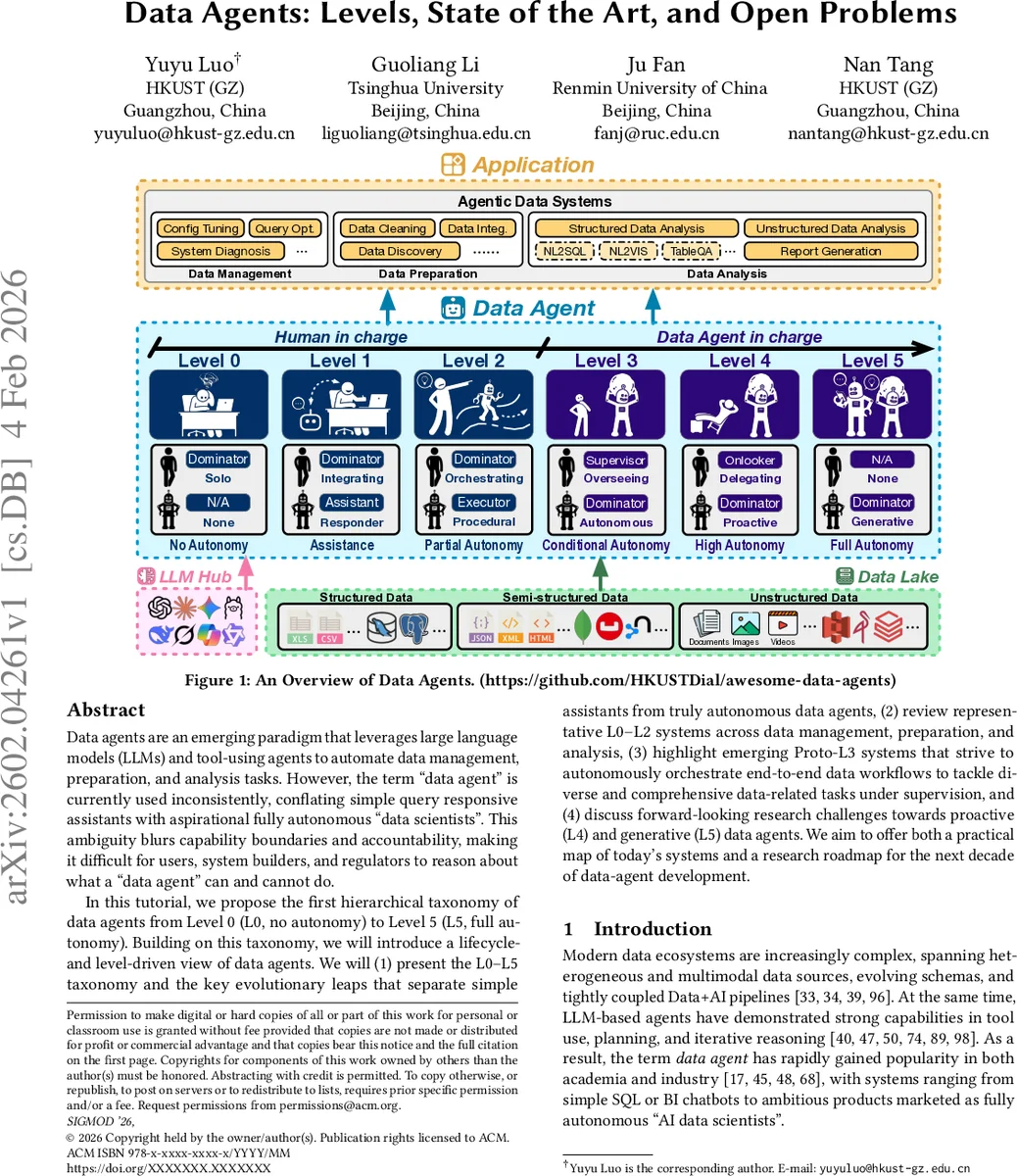

Data agents are an emerging paradigm that leverages large language models (LLMs) and tool-using agents to automate data management, preparation, and analysis tasks. However, the term “data agent” is currently used inconsistently, conflating simple query responsive assistants with aspirational fully autonomous “data scientists”. This ambiguity blurs capability boundaries and accountability, making it difficult for users, system builders, and regulators to reason about what a “data agent” can and cannot do. In this tutorial, we propose the first hierarchical taxonomy of data agents from Level 0 (L0, no autonomy) to Level 5 (L5, full autonomy). Building on this taxonomy, we will introduce a lifecycleand level-driven view of data agents. We will (1) present the L0-L5 taxonomy and the key evolutionary leaps that separate simple assistants from truly autonomous data agents, (2) review representative L0-L2 systems across data management, preparation, and analysis, (3) highlight emerging Proto-L3 systems that strive to autonomously orchestrate end-to-end data workflows to tackle diverse and comprehensive data-related tasks under supervision, and (4) discuss forward-looking research challenges towards proactive (L4) and generative (L5) data agents. We aim to offer both a practical map of today’s systems and a research roadmap for the next decade of data-agent development.

💡 Research Summary

The paper addresses the emerging concept of “data agents,” systems that combine large language models (LLMs) with tool‑using capabilities to automate data management, preparation, and analysis. The authors observe that the term is currently used inconsistently, ranging from simple query‑answering chatbots to ambitious products marketed as fully autonomous “AI data scientists.” This inconsistency obscures capability boundaries, hampers accountability, and makes it difficult for users, developers, and regulators to understand what a data agent can actually do.

To bring order to the field, the authors propose a hierarchical taxonomy inspired by the SAE J3016 driving‑automation levels, defining six autonomy levels from L0 (no autonomy) to L5 (full autonomy). They also introduce a lifecycle‑centric view that maps each level onto three major phases of the data pipeline: data management, data preparation, and data analysis.

Level definitions

- L0 (No Autonomy): All tasks are performed manually; no agent involvement.

- L1 (Assistance): Stateless, prompt‑response assistants that suggest SQL, visualizations, or report drafts. Humans execute and verify all suggestions.

- L2 (Partial Autonomy): Agents can perceive the environment, invoke specialized tools (e.g., DBMS, cleaning libraries, plotting packages), and close the loop with execution feedback. They maintain limited state across a single task.

- L3 (Workflow Orchestration – Proto‑L3): Under human supervision, agents orchestrate end‑to‑end data workflows. They generate task DAGs, optimize data‑task graphs (DAg), and coordinate multiple specialized sub‑agents. Current academic prototypes (LLM‑orchestrators, semantic‑operator frameworks) and early industry offerings fall into this category.

- L4 (Proactive): Long‑lived agents continuously monitor Data+AI ecosystems, autonomously discover issues or opportunities, and reconfigure pipelines without explicit instructions. They require robust long‑horizon planning, continual learning, and self‑governance.

- L5 (Generative): Fully generative “AI data scientists” that can invent new analytical methods, algorithms, or pipelines, not merely apply existing ones. This level entails meta‑learning, hypothesis generation, experimental design, and self‑evaluation.

The paper surveys representative systems for each level. For L0‑L2, examples include traditional DBAs, SQL‑tuning copilots, NL2SQL/NL2VIS chatbots, and data‑cleaning assistants that suggest code but rely on human execution. For Proto‑L3, the authors discuss systems that use LLMs to plan multi‑step pipelines, integrate tool‑specific APIs, and employ guardrails to ensure safety. They also map commercial “data‑agent” products from major cloud providers onto the taxonomy, noting common design patterns such as planner‑executor separation, DAg‑based orchestration, and multi‑agent collaboration, as well as limitations like reliance on predefined operators, limited causal reasoning, and heavy dependence on human‑crafted safety constraints.

Key technical challenges identified for progressing up the ladder include:

- Perception – extracting schemas, metadata, and quality signals from large, heterogeneous data lakes.

- Planning & Orchestration – generating and optimizing long‑horizon task graphs, handling dynamic tool availability, and managing resource trade‑offs.

- Memory & Continual Adaptation – maintaining context across sessions, updating knowledge bases, and learning from execution feedback.

- Causal and Meta‑Reasoning – preventing error propagation, reasoning about the impact of actions, and abstracting across tasks.

- Safety, Governance, and Accountability – defining responsibility boundaries, enforcing policy constraints, and providing verifiable guarantees as autonomy increases.

- Benchmarks & Evaluation – designing metrics and testbeds that capture autonomy, robustness, and usefulness rather than just task accuracy.

The authors outline a research roadmap focusing on two major transitions: L2→L3 (achieving reliable workflow orchestration) and L3→L4 (enabling proactive monitoring and self‑repair). They argue that breakthroughs in tool integration, automated debugging, and self‑supervised learning are essential. The final leap to L5 will require advances in generative reasoning, intrinsic motivation mechanisms, and safe exploration of novel analytical spaces.

In conclusion, the paper provides the first systematic taxonomy of data agents, a clear mapping of existing systems onto autonomy levels, and a forward‑looking agenda that highlights the technical, governance, and evaluation challenges needed to evolve data agents from helpful assistants to truly autonomous AI data scientists.

Comments & Academic Discussion

Loading comments...

Leave a Comment