Decoupled Hierarchical Distillation for Multimodal Emotion Recognition

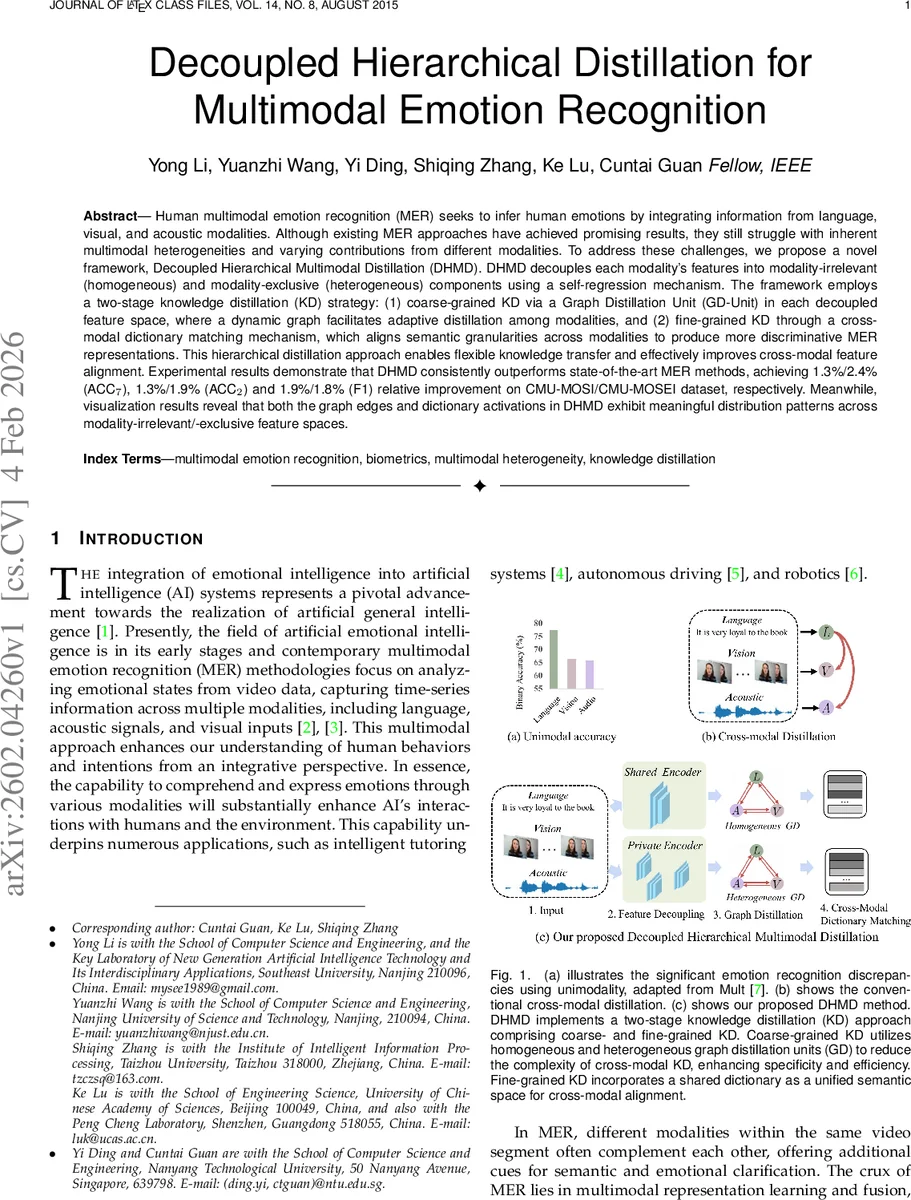

Human multimodal emotion recognition (MER) seeks to infer human emotions by integrating information from language, visual, and acoustic modalities. Although existing MER approaches have achieved promising results, they still struggle with inherent multimodal heterogeneities and varying contributions from different modalities. To address these challenges, we propose a novel framework, Decoupled Hierarchical Multimodal Distillation (DHMD). DHMD decouples each modality’s features into modality-irrelevant (homogeneous) and modality-exclusive (heterogeneous) components using a self-regression mechanism. The framework employs a two-stage knowledge distillation (KD) strategy: (1) coarse-grained KD via a Graph Distillation Unit (GD-Unit) in each decoupled feature space, where a dynamic graph facilitates adaptive distillation among modalities, and (2) fine-grained KD through a cross-modal dictionary matching mechanism, which aligns semantic granularities across modalities to produce more discriminative MER representations. This hierarchical distillation approach enables flexible knowledge transfer and effectively improves cross-modal feature alignment. Experimental results demonstrate that DHMD consistently outperforms state-of-the-art MER methods, achieving 1.3%/2.4% (ACC$_7$), 1.3%/1.9% (ACC$_2$) and 1.9%/1.8% (F1) relative improvement on CMU-MOSI/CMU-MOSEI dataset, respectively. Meanwhile, visualization results reveal that both the graph edges and dictionary activations in DHMD exhibit meaningful distribution patterns across modality-irrelevant/-exclusive feature spaces.

💡 Research Summary

The paper tackles multimodal emotion recognition (MER), where the goal is to infer a speaker’s affective state from three synchronized streams: language (text transcripts), visual (video frames), and acoustic (audio). Existing MER methods fall into two families. Fusion‑centric approaches (e.g., Tensor Fusion Network) design sophisticated mechanisms to combine raw modality features, but they struggle with the intrinsic heterogeneity of the data—language conveys abstract semantics, while visual and acoustic streams are dense, low‑level signals. Attention‑based approaches (e.g., MulT) learn cross‑modal interactions via transformers, yet they still suffer from distribution mismatches and from the fact that some modalities dominate the prediction while others contribute little.

To address these challenges, the authors propose Decoupled Hierarchical Multimodal Distillation (DHMD), a two‑stage knowledge‑distillation framework that first decouples each modality’s representation into a modality‑irrelevant (common/homogeneous) component and a modality‑exclusive (heterogeneous) component, then performs coarse‑grained and fine‑grained distillation in these spaces.

1. Feature Decoupling

Each modality (m \in {L, V, A}) is processed by a shared encoder (producing (X^{m}{com})) and a private encoder (producing (X^{m}{prt})). The shared encoder learns features that are common across all modalities, while the private encoder captures modality‑specific signals. Decoupling is enforced by a self‑regression loss: the private features are fed into a lightweight decoder that must reconstruct the original shallow features, guaranteeing that (X_{prt}) retains sufficient information. A margin loss further pushes samples of the same emotion label closer together and pushes different‑label samples apart, encouraging discriminative geometry in both spaces.

2. Hierarchical Knowledge Distillation

2.1 Coarse‑grained KD (Graph Distillation)

In each decoupled space a Graph Distillation Unit (GD‑Unit) is instantiated. The GD‑Unit builds a dynamic directed graph where each node corresponds to a modality and each edge weight represents the strength of knowledge transfer from source to target. Edge weights are learned jointly with the rest of the network via a KL‑divergence‑style distillation loss. Two variants are defined:

- HoGD (Homogeneous Graph Distillation) operates on the common space (X_{com}). Because the distribution gap is already reduced, HoGD can capture high‑level semantic correlations and reinforce them across modalities.

- HeGD (Heterogeneous Graph Distillation) works on the exclusive space (X_{prt}). Here a multimodal transformer is used to first align the heterogeneous features before graph‑based distillation, allowing the model to share modality‑specific cues that would otherwise be lost.

The graph is sample‑adaptive: for a given video segment the model may learn strong language→visual edges if the textual cue is dominant, or the opposite if visual cues are more informative. This eliminates the need for manually set distillation directions.

2.2 Fine‑grained KD (Dictionary Matching)

Beyond the coarse alignment, DHMD introduces a shared dictionary (codebook) of (K) discrete vectors. For each decoupled space a separate dictionary is learned, but the same dictionary is shared across modalities. Each modality’s features are quantized against the dictionary, producing a set of activation scores. A dictionary‑matching loss forces samples sharing the same emotion label to activate similar dictionary entries, effectively aligning semantic granularity across modalities. The dictionary thus serves as a set of “anchor points” that bridge subtle emotion cues, especially in weaker modalities.

3. Fusion and Prediction

The outputs of HoGD/HeGD and the dictionary‑matching modules are concatenated and passed through an adaptive fusion layer that learns modality‑specific gating weights. The fused representation is finally fed to a classifier (typically a fully‑connected layer with softmax) to predict either 7‑class (ACC7) or binary (ACC2) sentiment labels.

4. Experimental Validation

The authors evaluate DHMD on four benchmark datasets: CMU‑MOSI, CMU‑MOSEI, MUStARD, and UR‑FUNNY. Metrics reported include 7‑class accuracy (ACC7), binary accuracy (ACC2), and F1 score. DHMD consistently outperforms prior state‑of‑the‑art methods, achieving relative improvements of 1.3%–2.4% on ACC7, 1.3%–1.9% on ACC2, and 1.8%–1.9% on F1.

Ablation studies dissect the contributions of each component: (a) removing the decoupling step, (b) using only HoGD/HeGD, (c) using only dictionary matching, and (d) the full model. The full DHMD yields the highest scores, confirming that both coarse‑ and fine‑grained distillation are complementary.

Visualization analyses further support the claims: (i) graph edge weights evolve during training, highlighting modality‑specific distillation pathways per sample; (ii) dictionary activation heatmaps cluster by emotion class, indicating that the shared codebook learns a semantically meaningful space.

5. Contributions and Limitations

Contributions

- Introduces a unified multimodal distillation framework that simultaneously handles coarse semantic alignment (graph distillation) and fine semantic granularity (dictionary matching).

- Proposes a self‑regression based feature decoupling mechanism that cleanly separates modality‑common and modality‑exclusive representations, facilitating targeted knowledge transfer.

- Demonstrates state‑of‑the‑art performance across multiple MER benchmarks and provides extensive ablations and visualizations to explain the internal dynamics.

Limitations

- The size of the dictionary and the graph topology are set empirically; a more principled, possibly adaptive, selection could further improve scalability.

- Graph construction and dictionary quantization add computational overhead, which may hinder real‑time deployment.

- The current experiments focus on single‑speaker, well‑aligned video clips; extending to multi‑speaker dialogues or noisy, asynchronous streams remains an open challenge.

6. Future Directions

Potential extensions include (i) lightweight graph modules (e.g., sparsified attention) for edge efficiency, (ii) dynamic dictionary growth or pruning based on usage statistics, (iii) applying DHMD to other multimodal tasks such as sarcasm detection, affective dialogue generation, or cross‑modal retrieval, and (iv) integrating self‑supervised pretraining on large‑scale unlabelled multimodal corpora to further boost the quality of the decoupled representations.

In summary, DHMD offers a principled, hierarchical approach to mitigate multimodal heterogeneity in emotion recognition, achieving notable gains by jointly leveraging coarse graph‑based distillation and fine dictionary‑based alignment.

Comments & Academic Discussion

Loading comments...

Leave a Comment