SPOT-Occ: Sparse Prototype-guided Transformer for Camera-based 3D Occupancy Prediction

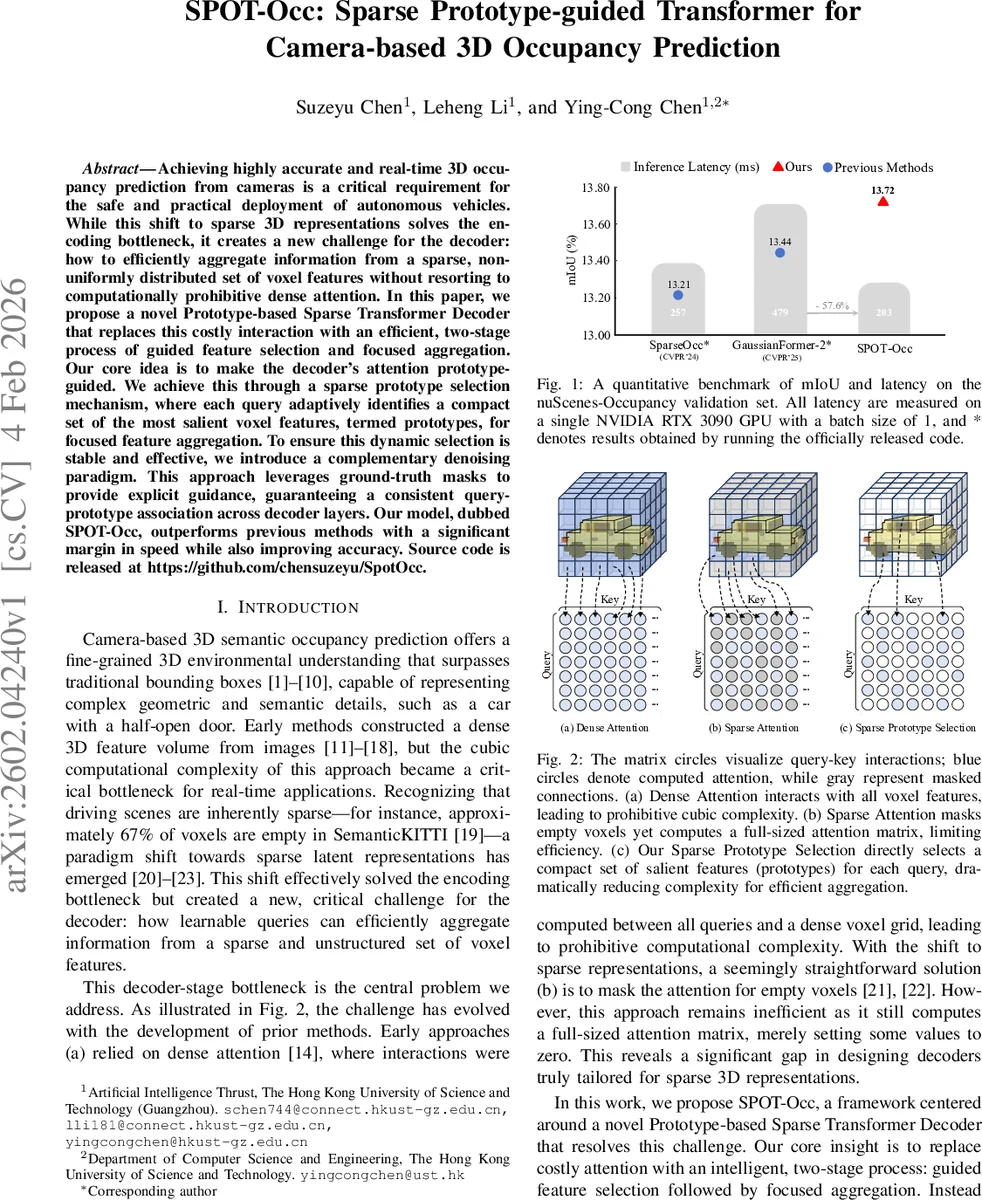

Achieving highly accurate and real-time 3D occupancy prediction from cameras is a critical requirement for the safe and practical deployment of autonomous vehicles. While this shift to sparse 3D representations solves the encoding bottleneck, it creates a new challenge for the decoder: how to efficiently aggregate information from a sparse, non-uniformly distributed set of voxel features without resorting to computationally prohibitive dense attention. In this paper, we propose a novel Prototype-based Sparse Transformer Decoder that replaces this costly interaction with an efficient, two-stage process of guided feature selection and focused aggregation. Our core idea is to make the decoder’s attention prototype-guided. We achieve this through a sparse prototype selection mechanism, where each query adaptively identifies a compact set of the most salient voxel features, termed prototypes, for focused feature aggregation. To ensure this dynamic selection is stable and effective, we introduce a complementary denoising paradigm. This approach leverages ground-truth masks to provide explicit guidance, guaranteeing a consistent query-prototype association across decoder layers. Our model, dubbed SPOT-Occ, outperforms previous methods with a significant margin in speed while also improving accuracy. Source code is released at https://github.com/chensuzeyu/SpotOcc.

💡 Research Summary

The paper addresses a critical bottleneck in camera‑based 3D occupancy prediction: the decoder’s need to aggregate information from a large, sparse set of voxel features. While recent works have alleviated the encoding bottleneck by using sparse voxel representations, they still rely on dense or naively masked attention in the transformer decoder, leading to prohibitive O(Nq·Nv) complexity.

SPOT‑Occ introduces a Prototype‑guided Sparse Transformer Decoder that replaces full attention with a two‑stage process: (1) Deformable Top‑ρ Prototype Selection – for each learnable query, a saliency score (cosine similarity after linear projection) is computed against all voxel keys. The top‑ρ % of voxels (typically 0.1–1 % of non‑empty voxels) are selected as prototypes. This selection is performed independently for each attention head, allowing heads to specialize in different feature sub‑spaces. (2) Prototype‑guided Aggregation – attention weights are obtained by temperature‑scaled softmax over the selected scores, and the corresponding value vectors are aggregated into a single prototype feature v_agg. A gated update combines v_agg with the original query through two feed‑forward networks, layer‑norm, and a residual connection, yielding a refined query. This reduces cross‑attention complexity to O(Nq·k) where k = ⌈ρ·Nv⌉ ≪ Nv.

Dynamic prototype selection can be unstable during training: the same query may attend to very different voxel sets across decoder layers, causing inconsistent mask predictions. To stabilize learning, the authors propose a Denoising Training Paradigm. For each ground‑truth object, a query is generated from its class embedding, then corrupted with semantic noise (random class flips) and feature noise (Gaussian perturbation). These noised queries are processed together with the regular matching queries. Crucially, the noised queries are guided by the ground‑truth masks during prototype selection, forcing them to focus on the correct spatial region from the outset. After decoder refinement, a dedicated Denoising Head reconstructs the original class and mask, providing a direct, one‑to‑one supervision that bypasses Hungarian matching. The Denoising branch is used only during training, incurring zero overhead at inference.

The overall architecture consists of: (i) a multi‑view image backbone with FPN, (ii) Lift‑Splat‑Shoot (LSS) to lift 2D features into a sparse 3D space, (iii) a sparse convolutional U‑Net‑like backbone that produces a multi‑scale voxel pyramid, (iv) the SPOT‑CA decoder described above, and (v) two prediction heads (class + mask, and denoising). Masks are obtained by dot‑product between refined query embeddings and voxel features, scaled by class probabilities, and the final voxel‑level occupancy is the argmax over all queries.

Training optimizes a composite loss: L = L_match + L_dn + L_depth. L_match and L_dn each combine cross‑entropy for classification with BCE + Dice for mask prediction; L_match uses Hungarian assignment, while L_dn uses the fixed correspondence of noised queries. An auxiliary depth loss supervises the LSS module using LiDAR depth.

Experiments on the nuScenes‑Occupancy benchmark demonstrate that SPOT‑Occ achieves a 57.6 % reduction in inference latency (≈13 ms on an RTX 3090) compared with the previous state‑of‑the‑art GaussianFormer‑2, while slightly improving mean IoU (13.44 % → 13.72 %). Ablation studies show that even with a tiny prototype ratio (ρ = 0.1 %), performance remains robust, and the denoising training dramatically increases layer‑wise mean IoU from ~48 % to >80 % in early layers, confirming its stabilizing effect.

In summary, SPOT‑Occ presents a novel query‑prototype attention mechanism and a GT‑mask‑driven denoising scheme that together enable efficient, accurate, and real‑time 3D occupancy prediction from cameras alone. This contribution not only advances autonomous‑driving perception but also opens avenues for applying prototype‑guided sparse attention to other 3D tasks such as object detection and semantic segmentation.

Comments & Academic Discussion

Loading comments...

Leave a Comment