Adaptive 1D Video Diffusion Autoencoder

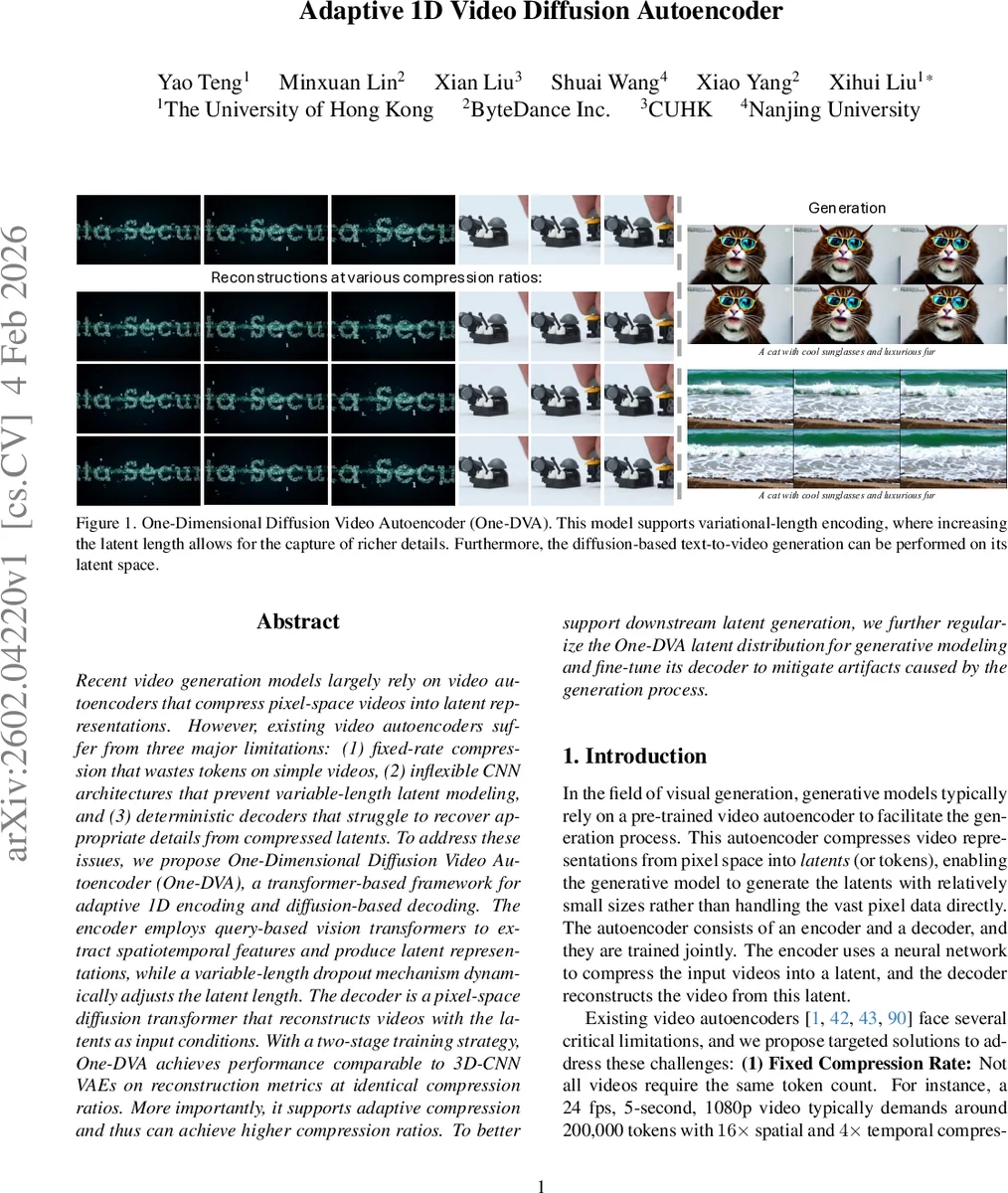

Recent video generation models largely rely on video autoencoders that compress pixel-space videos into latent representations. However, existing video autoencoders suffer from three major limitations: (1) fixed-rate compression that wastes tokens on simple videos, (2) inflexible CNN architectures that prevent variable-length latent modeling, and (3) deterministic decoders that struggle to recover appropriate details from compressed latents. To address these issues, we propose One-Dimensional Diffusion Video Autoencoder (One-DVA), a transformer-based framework for adaptive 1D encoding and diffusion-based decoding. The encoder employs query-based vision transformers to extract spatiotemporal features and produce latent representations, while a variable-length dropout mechanism dynamically adjusts the latent length. The decoder is a pixel-space diffusion transformer that reconstructs videos with the latents as input conditions. With a two-stage training strategy, One-DVA achieves performance comparable to 3D-CNN VAEs on reconstruction metrics at identical compression ratios. More importantly, it supports adaptive compression and thus can achieve higher compression ratios. To better support downstream latent generation, we further regularize the One-DVA latent distribution for generative modeling and fine-tune its decoder to mitigate artifacts caused by the generation process.

💡 Research Summary

The paper introduces One‑Dimensional Diffusion Video Autoencoder (One‑DVA), a transformer‑based autoencoder that overcomes three major shortcomings of existing video autoencoders: fixed‑rate compression, inflexible CNN architectures, and deterministic decoders. The encoder uses a Vision Transformer (ViT) backbone augmented with learnable 1‑dimensional queries. Video frames are first linear‑patched into spatiotemporal embeddings, concatenated with the queries, and processed through multiple transformer blocks. The queries attend to the embeddings and extract a compact 1‑D latent sequence, while a parallel structural latent is also produced for use as an auxiliary condition.

To achieve adaptive compression, a variable‑length dropout module is applied during training. Tokens are randomly dropped from the tail of the 1‑D sequence according to a distribution derived from a motion‑score computed on pixel differences. This “matryoshka” strategy lets simple videos retain few tokens and complex videos retain many, effectively providing content‑aware compression ratios.

The decoder is a pixel‑space Diffusion Transformer (DiT). It receives both the 1‑D latent and the structural latent as conditioning inputs and performs a diffusion process in pixel space, gradually denoising a random Gaussian input into a high‑fidelity video. Training uses a composite loss: diffusion (flow‑matching) loss, perceptual VGG loss, KL regularization to enforce a standard Gaussian latent distribution, and REPA loss on noisy features.

Training proceeds in two stages. Stage 1 focuses on optimizing the encoder for accurate compression; stage 2 integrates the variable‑length dropout and diffusion decoder, fine‑tuning the whole system. To make the latent space suitable for downstream latent diffusion models (LDMs), an alignment loss projects the 1‑D latents into the structural latent space, encouraging joint modeling. Finally, the decoder is fine‑tuned on latents generated by the LDM, allowing it to handle artifacts that arise from the generative process.

Experimental results show that at standard compression ratios (e.g., 16× spatial, 4× temporal) One‑DVA matches or slightly exceeds the reconstruction quality of state‑of‑the‑art 3D‑CNN VAEs in PSNR, SSIM, and LPIPS. When adaptive compression is enabled, token counts can be reduced by 30‑50 % with negligible quality loss, especially for low‑motion content. The paper demonstrates that (1) 1‑D variable‑length encoding enables content‑adaptive compression, (2) query‑based transformers provide flexibility to handle arbitrary video shapes, and (3) a diffusion‑based decoder offers generative reconstruction that compensates for lossy compression. Together, these contributions present a new paradigm for efficient, high‑quality video tokenization and generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment