AGMA: Adaptive Gaussian Mixture Anchors for Prior-Guided Multimodal Human Trajectory Forecasting

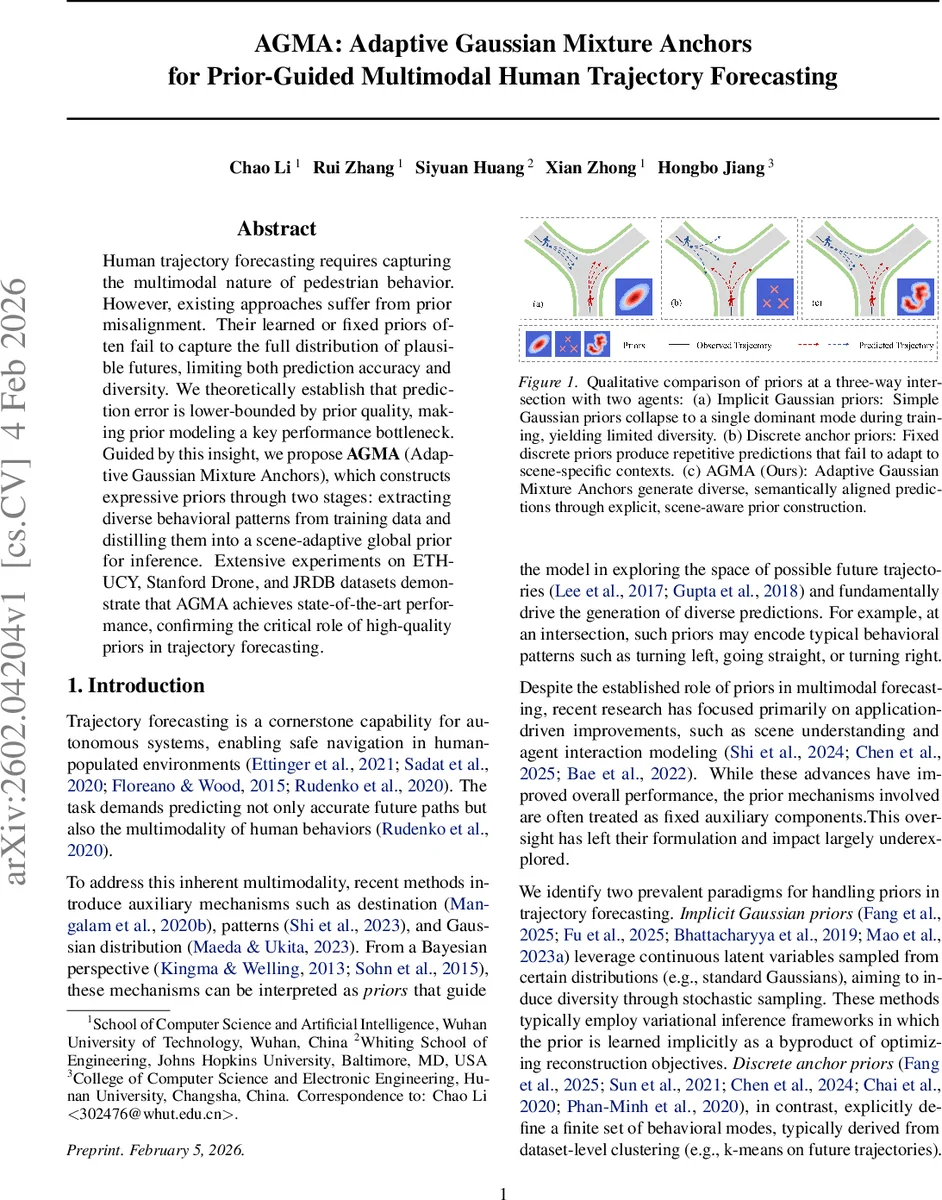

Human trajectory forecasting requires capturing the multimodal nature of pedestrian behavior. However, existing approaches suffer from prior misalignment. Their learned or fixed priors often fail to capture the full distribution of plausible futures, limiting both prediction accuracy and diversity. We theoretically establish that prediction error is lower-bounded by prior quality, making prior modeling a key performance bottleneck. Guided by this insight, we propose AGMA (Adaptive Gaussian Mixture Anchors), which constructs expressive priors through two stages: extracting diverse behavioral patterns from training data and distilling them into a scene-adaptive global prior for inference. Extensive experiments on ETH-UCY, Stanford Drone, and JRDB datasets demonstrate that AGMA achieves state-of-the-art performance, confirming the critical role of high-quality priors in trajectory forecasting.

💡 Research Summary

The paper addresses the fundamental challenge of multimodal human trajectory forecasting by focusing on the quality of the prior distribution that guides prediction. The authors first formalize trajectory forecasting as learning the conditional distribution p(Y|X) of future trajectories Y given observed trajectories X. They decompose this distribution using a latent variable z into a sampler p(Y|X,z) and a prior p(z|X). Through Theorem 3.1 they prove that the distribution‑matching loss L_dist is lower‑bounded by the squared difference between prior error (ε_prior) and sampler error (ε_sample). Consequently, even an optimal sampler cannot compensate for a poor prior. Proposition 3.2 further shows, from an information‑theoretic perspective, that the sampler’s minimum error is limited by the mutual information I(Y;z|X). If the prior fails to capture sufficient information (i.e., I(Y;z|X) ≪ H(Y|X)), the sampler is forced to incur an irreducible error floor.
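The decomposition and the two limits described above can be written compactly. This is a sketch reconstructed from the wording of this summary: the precise definitions of ε_prior and ε_sample, and any constants in Theorem 3.1, are given in the paper and are not reproduced here. The last line is the standard chain rule for conditional entropy rather than a result specific to the paper. It shows why a small I(Y;z|X) leaves most of the uncertainty H(Y|X) unresolved after conditioning on z.

```latex
\begin{align}
p(Y \mid X) &= \int p(Y \mid X, z)\, p(z \mid X)\, dz
  && \text{(sampler $\times$ prior)} \\
\mathcal{L}_{\text{dist}} &\ge \left(\varepsilon_{\text{prior}} - \varepsilon_{\text{sample}}\right)^{2}
  && \text{(form of Theorem 3.1 as summarized)} \\
H(Y \mid X, z) &= H(Y \mid X) - I(Y; z \mid X)
  && \text{(chain rule for conditional entropy)}
\end{align}
```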

Guided by these insights, the authors propose Adaptive Gaussian Mixture Anchors (AGMA), a two‑stage framework explicitly designed to improve prior quality. In Stage 1, batch‑level prior extraction, all agents in a training batch are encoded into full‑trajectory embeddings. A graph‑based clustering algorithm connects agents that are similar (high similarity score) and not repulsive (low repulsion score). The clustering thresholds are learned end‑to‑end via a Straight‑Through Estimator. Each resulting cluster represents a behavioral mode; cluster assignments are decoded back into trajectory space, encouraging the clusters to retain maximal mutual information with the future trajectories. This yields batch‑specific Gaussian mixture priors that preserve long‑tail patterns.
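The clustering rule in Stage 1 can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes cosine similarity between embeddings, a repulsion matrix supplied by the caller, and fixed thresholds, whereas the paper learns the thresholds with a Straight-Through Estimator. The names `cluster_agents`, `sim_threshold`, and `rep_threshold` are hypothetical.

```python
import numpy as np

def cluster_agents(embeddings, repulsion, sim_threshold=0.8, rep_threshold=0.5):
    """Group agents into connected components of a similarity graph.

    Two agents are linked when their cosine similarity is above
    `sim_threshold` AND their repulsion score is below `rep_threshold`.
    Both score definitions and both thresholds are illustrative assumptions.
    """
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)
    similarity = unit @ unit.T
    adjacency = (similarity > sim_threshold) & (repulsion < rep_threshold)

    # Union-find over the edges of the thresholded graph.
    n = len(embeddings)
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i in range(n):
        for j in range(i + 1, n):
            if adjacency[i, j]:
                ri, rj = find(i), find(j)
                if ri != rj:
                    parent[rj] = ri

    # Relabel component roots as 0, 1, 2, ... in order of first appearance.
    label_of = {}
    return np.array([label_of.setdefault(find(i), len(label_of)) for i in range(n)])

# Four toy embeddings: two heading roughly "east", two roughly "north".
embeddings = np.array([[1.0, 0.0], [0.99, 0.1], [0.0, 1.0], [0.1, 0.99]])
no_repulsion = np.zeros((4, 4))
labels = cluster_agents(embeddings, no_repulsion)  # [0, 0, 1, 1]

# A high repulsion score between agents 0 and 1 splits the first cluster.
repulsion = no_repulsion.copy()
repulsion[0, 1] = repulsion[1, 0] = 1.0
labels_split = cluster_agents(embeddings, repulsion)  # [0, 1, 2, 2]
```

The hard comparison against a threshold has zero gradient almost everywhere, which is presumably why the paper resorts to a Straight-Through Estimator to train the thresholds. This sketch omits that part.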

In Stage 2, global prior distillation, the batch‑level mixtures are aggregated into a single scene‑adaptive global Gaussian Mixture Model (GMM). The aggregation uses optimal transport to align batch components with global components, while a cross‑attention mechanism selects the most relevant global components for the current scene context. The resulting p(z|X) is thus scene‑aware and can dynamically adjust its mixture weights and component means to reflect the geometry and social context of each scene.
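One way to picture the optimal-transport alignment is an entropic (Sinkhorn) transport plan between batch component means and global component means. The sketch below is illustrative only. The squared-Euclidean cost, the regularization strength `reg`, and the momentum update in `update_global_means` are assumptions made for this example and are not taken from the paper. The cross-attention selection step is not shown.

```python
import numpy as np

def sinkhorn_plan(cost, a, b, reg=0.1, n_iters=200):
    """Entropic-regularized transport plan with row weights a and column weights b."""
    K = np.exp(-cost / reg)
    u = np.ones_like(a)
    for _ in range(n_iters):
        v = b / (K.T @ u)  # rescale so columns match b
        u = a / (K @ v)    # rescale so rows match a
    return u[:, None] * K * v[None, :]

def update_global_means(global_means, batch_means, plan, b, momentum=0.1):
    """Move each global mean toward the plan-weighted average of batch means.

    The momentum update is a hypothetical choice for this sketch.
    """
    target = (plan.T @ batch_means) / b[:, None]
    return (1.0 - momentum) * global_means + momentum * target

# Two batch components and two global components in a 2-D latent space.
batch_means = np.array([[0.0, 0.0], [1.0, 1.0]])
global_means = np.array([[0.1, 0.0], [1.0, 0.9]])
a = np.array([0.5, 0.5])  # batch mixture weights
b = np.array([0.5, 0.5])  # global mixture weights

# Squared Euclidean distance between every batch/global pair of means.
cost = ((batch_means[:, None, :] - global_means[None, :, :]) ** 2).sum(axis=-1)
plan = sinkhorn_plan(cost, a, b)
updated = update_global_means(global_means, batch_means, plan, b, momentum=0.5)
```

In this toy case each batch component sends almost all of its mass to the nearest global component, so each global mean moves halfway toward its matched batch mean.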

Importantly, the authors deliberately keep the trajectory decoder simple (a shared MLP) so that any performance gains can be attributed to the improved prior rather than to a more powerful sampler. Extensive experiments on three widely used benchmarks (ETH-UCY, Stanford Drone, and the egocentric JRDB dataset) demonstrate that AGMA achieves state-of-the-art results. On ETH-UCY, AGMA improves the minimum average displacement error over 20 samples (mADE20) by 5.26% and the minimum final displacement error over 20 samples (mFDE20) by 9.38% relative to the previous best methods. Qualitative visualizations show that AGMA generates diverse, semantically appropriate predictions at complex three-way intersections, correctly handling left turns, straight-through motions, and right turns according to scene constraints.
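For readers unfamiliar with the metrics, the sketch below computes best-of-K displacement errors under the usual definition: the average (or final-step) Euclidean error of the closest of K sampled trajectories. It assumes the paper's mADE20 and mFDE20 follow this standard definition with K = 20. The function name `min_ade_fde` and the toy data are hypothetical.

```python
import numpy as np

def min_ade_fde(predictions, ground_truth):
    """Best-of-K displacement errors.

    predictions:  array of shape (K, T, 2), K sampled future trajectories
    ground_truth: array of shape (T, 2), the observed future trajectory
    Returns (min ADE, min FDE); the two minima may come from different samples.
    """
    dists = np.linalg.norm(predictions - ground_truth[None], axis=-1)  # (K, T)
    ade_per_sample = dists.mean(axis=1)  # average error over all time steps
    fde_per_sample = dists[:, -1]        # error at the final time step only
    return ade_per_sample.min(), fde_per_sample.min()

ground_truth = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]])
predictions = np.array([
    [[0.0, 1.0], [1.0, 1.0], [2.0, 1.0]],  # offset by 1 at every step
    [[0.0, 0.0], [1.0, 0.0], [2.0, 2.0]],  # exact until the last step
])
min_ade, min_fde = min_ade_fde(predictions, ground_truth)
```

Here the second sample gives the lowest average error (2/3) while the first gives the lowest final error (1.0), which is why the two metrics are reported separately.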

The paper’s contributions are threefold: (1) a theoretical analysis establishing prior quality as a necessary condition for accurate and diverse trajectory forecasting; (2) the AGMA framework that explicitly optimizes prior quality through batch‑wise clustering auto‑encoding and optimal‑transport‑based global distillation; and (3) empirical evidence that high‑quality, adaptive priors can outperform sophisticated decoder architectures. By shifting the focus from ever‑more complex samplers to principled prior modeling, AGMA opens a new direction for research in human motion prediction, with immediate implications for autonomous driving, robot navigation, and any system that must anticipate human movement in crowded, dynamic environments.
