Partial Ring Scan: Revisiting Scan Order in Vision State Space Models

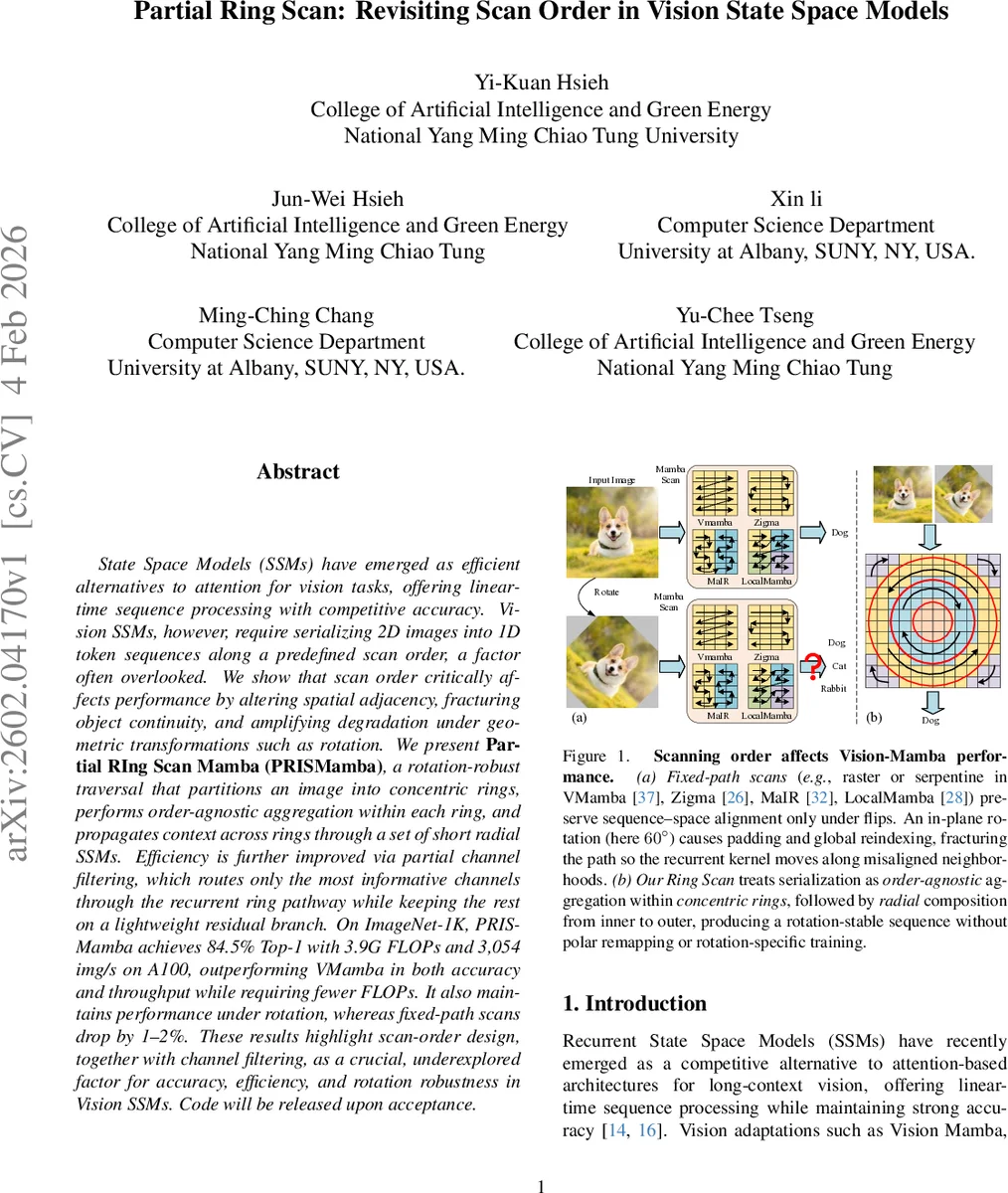

State Space Models (SSMs) have emerged as efficient alternatives to attention for vision tasks, offering linear-time sequence processing with competitive accuracy. Vision SSMs, however, require serializing 2D images into 1D token sequences along a predefined scan order, a factor often overlooked. We show that scan order critically affects performance by altering spatial adjacency, fracturing object continuity, and amplifying degradation under geometric transformations such as rotation. We present Partial RIng Scan Mamba (PRISMamba), a rotation-robust traversal that partitions an image into concentric rings, performs order-agnostic aggregation within each ring, and propagates context across rings through a set of short radial SSMs. Efficiency is further improved via partial channel filtering, which routes only the most informative channels through the recurrent ring pathway while keeping the rest on a lightweight residual branch. On ImageNet-1K, PRISMamba achieves 84.5% Top-1 with 3.9G FLOPs and 3,054 img/s on A100, outperforming VMamba in both accuracy and throughput while requiring fewer FLOPs. It also maintains performance under rotation, whereas fixed-path scans drop by 1–2%. These results highlight scan-order design, together with channel filtering, as a crucial, underexplored factor for accuracy, efficiency, and rotation robustness in Vision SSMs. Code will be released upon acceptance.

💡 Research Summary

State Space Models (SSMs) have recently emerged as a compelling alternative to self‑attention for vision, offering linear‑time sequence processing while preserving strong long‑range modeling capabilities. A critical yet under‑examined component of vision‑SSMs is the way a 2‑D image is serialized into a 1‑D token sequence. Existing works typically adopt a fixed scan order—raster, serpentine, diagonal, etc.—and treat this choice as an implementation detail. This paper demonstrates that scan order profoundly influences performance because it determines which spatial neighbors become adjacent in the sequence processed by the SSM. When an image is rotated, the predefined path is globally re‑indexed, breaking the alignment between sequence adjacency and true geometric adjacency. Consequently, object continuity is fractured, short‑range recurrences are forced to “repair” local coherence, and overall accuracy degrades, especially under in‑plane rotations.
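The adjacency argument can be made concrete with a small sketch. It assumes a plain row-major (raster) scan and a 90° clockwise rotation, both chosen here for illustration rather than taken from the paper's experiments, and counts how many pixel pairs that were consecutive in the sequence are still consecutive after the image is rotated and rescanned. All helper names are hypothetical.

```python
def raster_order(h, w):
    """Row-major (raster) scan: (row, col) positions in visiting order."""
    return [(r, c) for r in range(h) for c in range(w)]


def rotate90(pos, h):
    """New position of pixel (r, c) of an h x w grid after a 90-degree clockwise turn."""
    r, c = pos
    return (c, h - 1 - r)


def adjacent_pairs(order):
    """Unordered pairs of pixels that sit next to each other in the sequence."""
    return {frozenset(pair) for pair in zip(order, order[1:])}


def preserved_fraction(h, w):
    """Share of sequence-adjacent pixel pairs that survive rotate-then-rescan."""
    before = adjacent_pairs(raster_order(h, w))
    # Map each location of the rotated (w x h) grid back to the original pixel
    # that now occupies it, then raster-scan the rotated grid.
    occupant = {rotate90(p, h): p for p in raster_order(h, w)}
    after_order = [occupant[q] for q in raster_order(w, h)]
    after = adjacent_pairs(after_order)
    return len(before & after) / len(before)


if __name__ == "__main__":
    print(preserved_fraction(4, 4))
```

For grids such as 4 × 4 or 8 × 8 the preserved fraction is zero: pairs that were horizontal neighbors in the original sequence are replaced by vertical neighbors after rotation, so a fixed raster scan presents the SSM with an entirely different set of adjacent tokens.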

To address these issues, the authors propose Partial Ring Scan Mamba (PRISMamba), a rotation‑robust traversal scheme. An image is first partitioned into concentric rings based on Euclidean distance from a chosen center (the image center or an object detector’s centroid). Within each ring, tokens are aggregated in an order‑agnostic manner (e.g., mean or weighted pooling), eliminating dependence on a specific traversal direction. Rings are then processed radially from the innermost to the outermost using a short, lightweight SSM (typically 1–3 steps). This design preserves spatial locality under arbitrary rotations because the ring membership does not change with rotation, and only a minimal sequential component is retained to keep the overall computation linear.
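The ring partition, order-agnostic pooling, and radial propagation described above can be sketched as follows. This is a minimal pure-Python illustration under stated assumptions, not the authors' implementation: a single-channel image, rings of equal radial width around the geometric image center, mean pooling within each ring, and a scalar linear recurrence standing in for the radial SSM. The function names and the `decay` and `gain` parameters are hypothetical.

```python
import math


def ring_index(r, c, h, w, num_rings):
    """Ring id of pixel (r, c), by distance from the image center.

    Doubled integer coordinates keep squared distances exact, so pixels that
    map onto each other under a 90-degree rotation get identical ring ids.
    """
    d2 = (2 * r - (h - 1)) ** 2 + (2 * c - (w - 1)) ** 2
    max_d2 = (h - 1) ** 2 + (w - 1) ** 2  # squared distance of a corner pixel
    if max_d2 == 0:
        return 0
    return min(int(num_rings * math.sqrt(d2 / max_d2)), num_rings - 1)


def ring_means(image, num_rings):
    """Order-agnostic aggregation: mean pixel value per ring (0.0 if empty)."""
    h, w = len(image), len(image[0])
    sums, counts = [0] * num_rings, [0] * num_rings
    for r in range(h):
        for c in range(w):
            k = ring_index(r, c, h, w, num_rings)
            sums[k] += image[r][c]
            counts[k] += 1
    return [s / n if n else 0.0 for s, n in zip(sums, counts)]


def rotate90(image):
    """Rotate a 2D list by 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def radial_scan(ring_features, decay=0.5, gain=1.0):
    """Toy stand-in for the radial SSM: state = decay * state + gain * x,
    applied from the innermost ring to the outermost."""
    state, outputs = 0.0, []
    for x in ring_features:
        state = decay * state + gain * x
        outputs.append(state)
    return outputs


if __name__ == "__main__":
    img = [[r * 6 + c for c in range(6)] for r in range(6)]
    print(ring_means(img, 3) == ring_means(rotate90(img), 3))
    print(radial_scan(ring_means(img, 3)))
```

Because a mean does not depend on the order in which a ring's pixels are visited, the per-ring features in this sketch are identical before and after a 90° rotation of a square image. For angles that are not multiples of 90°, a discrete grid has to be resampled, so the invariance of this toy version would hold only approximately.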

A second key contribution is Partial Channel Filtering (PCF). Instead of feeding all feature channels through the recurrent ring pathway, the model learns to select the most informative channels for the ring‑wise SSM while routing the remaining channels through a cheap residual branch. PCF reduces FLOPs by roughly 20 % and improves throughput without sacrificing accuracy; in some cases accuracy improves slightly.
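The routing idea can be sketched in a few lines. The example assumes that per-channel importance scores are already available and applies a hard top-k split; in the paper the selection is learned, whereas here `scores` is simply passed in. The names `keep_ratio`, `ring_path`, and `light_path` are hypothetical and exist only for this illustration.

```python
def partial_channel_filter(channels, scores, keep_ratio, ring_path,
                           light_path=lambda ch: ch):
    """Send the top-scoring channels through `ring_path` and the rest
    through `light_path` (identity by default, i.e. a plain residual).

    channels   : list of per-channel feature lists
    scores     : one importance score per channel (assumed given)
    keep_ratio : fraction of channels routed to the expensive path
    Returns (output channels in original order, sorted selected indices).
    """
    n = len(channels)
    k = max(1, int(keep_ratio * n))  # always keep at least one channel
    ranked = sorted(range(n), key=lambda i: scores[i], reverse=True)
    selected = set(ranked[:k])
    out = [ring_path(ch) if i in selected else light_path(ch)
           for i, ch in enumerate(channels)]
    return out, sorted(selected)


if __name__ == "__main__":
    feats = [[1, 2], [3, 4], [5, 6], [7, 8]]
    scores = [0.1, 0.9, 0.3, 0.8]

    def double(ch):
        """Placeholder for the expensive ring pathway."""
        return [2 * v for v in ch]

    print(partial_channel_filter(feats, scores, 0.5, double))
```

With `keep_ratio=0.5`, only half of the channels pay the cost of the recurrent pathway, which is where the compute saving in this sketch comes from; the unselected channels pass through unchanged.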

The architecture integrates PRISM blocks into a four‑stage hierarchical backbone. Each stage stacks L_i PRISM blocks, each consisting of (1) ring‑wise aggregation, (2) radial SSM propagation, (3) a 1 × 1 projection, and (4) residual fusion. The token count remains identical to conventional Vision‑Mamba models, ensuring comparable memory usage.

Empirical evaluation spans classification, detection, and segmentation. On ImageNet‑1K (224 × 224), PRISMamba achieves 84.5 % top‑1 accuracy with 3.9 G FLOPs and processes 3,054 images per second on an A100 GPU. Compared with VMamba (82.6 %, 5.6 G FLOPs, 1,686 img/s), this is a gain of 1.9 percentage points in accuracy at 1.8× the throughput. A variant without PCF reaches 84.1 % at 4.6 G FLOPs and 2,177 img/s, confirming that partial channel filtering itself yields efficiency gains. Under rotation, fixed‑path scans drop 1–2 % in accuracy, whereas PRISMamba remains essentially unchanged, demonstrating rotation robustness without any rotation‑specific training.
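The ratios behind the ImageNet‑1K comparison can be recomputed directly from the reported figures. The snippet below is only an arithmetic check; the dictionary layout and helper names are illustrative assumptions, and the numbers are the ones quoted above.

```python
# Reported ImageNet-1K figures: top-1 (%), GFLOPs, throughput (img/s on A100).
REPORTED = {
    "PRISMamba":          {"top1": 84.5, "gflops": 3.9, "img_s": 3054},
    "PRISMamba (no PCF)": {"top1": 84.1, "gflops": 4.6, "img_s": 2177},
    "VMamba":             {"top1": 82.6, "gflops": 5.6, "img_s": 1686},
}


def speedup(new, old):
    """Throughput ratio new / old."""
    return new / old


def relative_reduction(new, old):
    """Percentage by which `new` is smaller than `old`."""
    return (old - new) / old * 100


def compare(model, baseline):
    """Accuracy gap (points), throughput ratio, and FLOP reduction (%)."""
    m, b = REPORTED[model], REPORTED[baseline]
    return {
        "top1_gain": round(m["top1"] - b["top1"], 1),
        "speedup": round(speedup(m["img_s"], b["img_s"]), 2),
        "flop_reduction_pct": round(relative_reduction(m["gflops"], b["gflops"]), 1),
    }


if __name__ == "__main__":
    print(compare("PRISMamba", "VMamba"))
    print(compare("PRISMamba", "PRISMamba (no PCF)"))
```

Against VMamba this gives a 1.9-point accuracy gain, a throughput ratio of about 1.81, and roughly 30 % fewer FLOPs. Against the variant without PCF it gives a 0.4-point gain, a throughput ratio of about 1.40, and roughly 15 % fewer FLOPs.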

On MS COCO (1× schedule, 1280 × 800), PRISMamba attains 48.9 AP (box) and 43.2 AP (mask) with 235 G FLOPs, surpassing VMamba (46.5/42.1 at 262 G) and GroupMamba (47.6/42.9 at 279 G) while using roughly 10–16 % fewer FLOPs. Because each ring uses only short recurrences, memory and runtime stay close to standard Vision‑SSM baselines, yet the design delivers clear gains in accuracy and rotation robustness.

In summary, the paper makes four major contributions: (1) a systematic analysis showing how scan order shapes spatial adjacency and impacts performance; (2) the introduction of a rotation‑stable ring‑based scan that preserves locality while keeping linear‑time complexity; (3) partial channel filtering that trims computation and modestly boosts accuracy; and (4) a unified PRISMamba architecture that sets a new state‑of‑the‑art among Vision‑SSMs across multiple tasks. The work highlights scan‑order design as a cost‑free hyperparameter that should be treated as a first‑class citizen in future Vision‑SSM research. Future directions include object‑aware ring centering, multi‑scale ring hierarchies, and more sophisticated channel‑selection mechanisms.
