From Consistency to Complementarity: Aligned and Disentangled Multi-modal Learning for Time Series Understanding and Reasoning

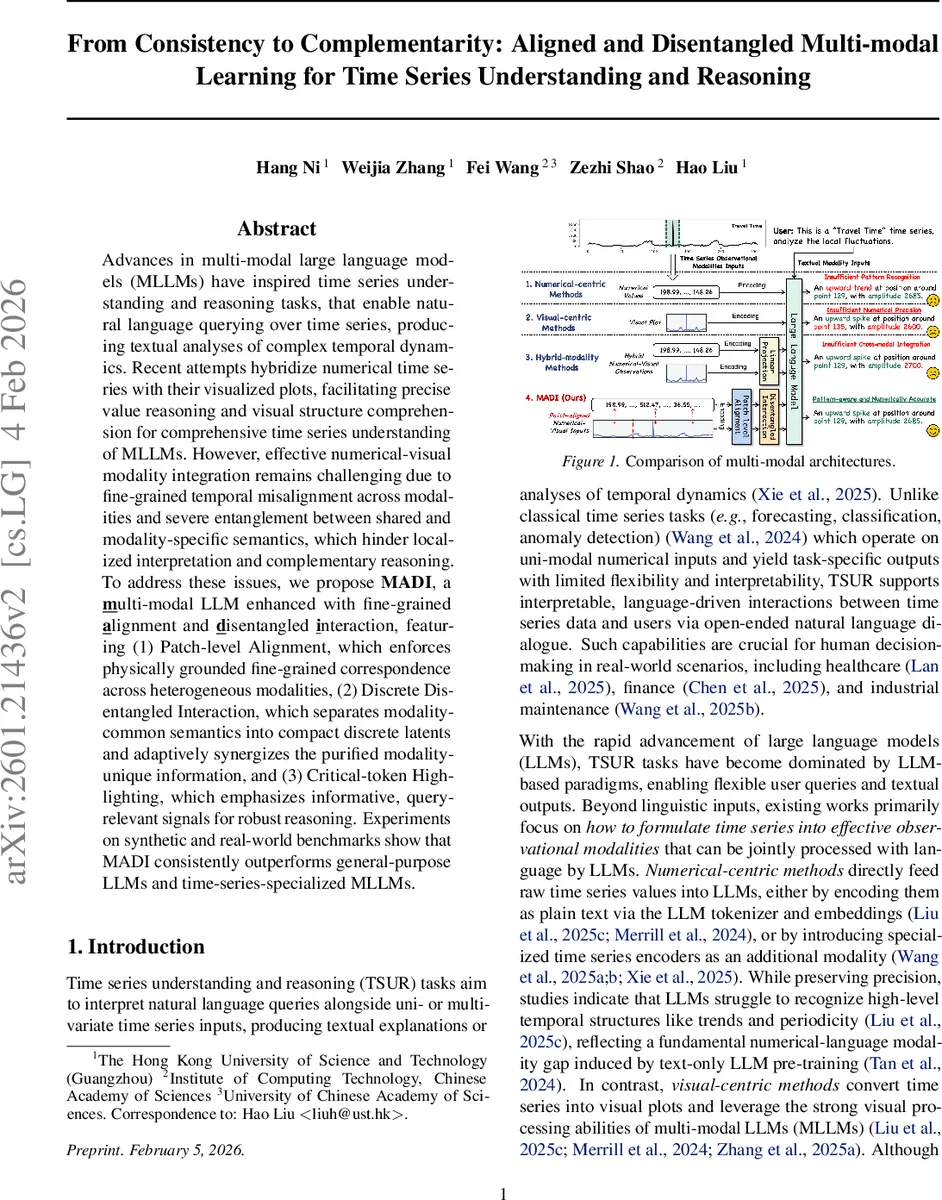

Advances in multi-modal large language models (MLLMs) have inspired time series understanding and reasoning tasks, that enable natural language querying over time series, producing textual analyses of complex temporal dynamics. Recent attempts hybridize numerical time series with their visualized plots, facilitating precise value reasoning and visual structure comprehension for comprehensive time series understanding of MLLMs. However, effective numerical-visual modality integration remains challenging due to fine-grained temporal misalignment across modalities and severe entanglement between shared and modality-specific semantics, which hinder localized interpretation and complementary reasoning. To address these issues, we propose MADI, a multi-modal LLM enhanced with fine-grained alignment and disentangled interaction, featuring (1) Patch-level Alignment, which enforces physically grounded fine-grained correspondence across heterogeneous modalities, (2) Discrete Disentangled Interaction, which separates modality-common semantics into compact discrete latents and adaptively synergizes the purified modality-unique information, and (3) Critical-token Highlighting, which emphasizes informative, query-relevant signals for robust reasoning. Experiments on synthetic and real-world benchmarks show that MADI consistently outperforms general-purpose LLMs and time-series-specialized MLLMs.

💡 Research Summary

The paper introduces MADI, a multimodal large language model (MLLM) designed for Time Series Understanding and Reasoning (TSUR), a task that requires answering natural‑language queries about one or more time‑series inputs with textual explanations. Existing approaches either treat the series purely as numbers, purely as visual plots, or combine the two in a naïve way, suffering from two fundamental problems: (1) fine‑grained misalignment between numerical values and their visual renderings, and (2) entanglement of shared semantics with modality‑specific information, which limits complementary reasoning. MADI tackles these challenges through three tightly integrated modules.

-

Patch‑level Alignment (PA) – Each time series is split into non‑overlapping patches of length pₙ. A line‑plot image is rendered at a resolution that yields exactly the same number of visual patches, establishing a one‑to‑one correspondence between numerical and visual patches. In addition, for every numerical patch a short caption containing timestamp range and basic statistics (max, min, mean, std) is generated. The three modalities (numerical, visual, caption) are encoded separately (a lightweight transformer for numbers, the pretrained vision encoder of the backbone MLLM for images, and the LLM tokenizer for captions). A contrastive loss (InfoNCE) aligns each numerical patch with its visual and textual counterparts while pushing away other patches, thereby enforcing physically grounded, fine‑grained cross‑modal consistency.

-

Discrete Disentangled Interaction (DDI) – After alignment, the model separates shared from modality‑specific semantics. Shared information is compressed into a compact discrete latent space using hierarchical Residual Vector Quantization (RVQ). The quantized codes act as a purified representation of modality‑common features, while the original continuous embeddings retain modality‑unique details. A cross‑attention layer then fuses the discrete shared codes with the modality‑specific tokens, allowing the model to exploit complementary strengths (precision of numbers, abstraction of visuals) without redundant interference.

-

Critical‑token Highlighting (CTH) – To improve robustness to diverse queries, CTH automatically identifies tokens that are most relevant to the current question across all modalities and prepends them to the token sequence fed to the LLM. This directs the language model’s attention to the most informative parts of the multimodal context, reducing hallucinations and improving answer relevance.

The architecture is built on a pretrained MLLM, reusing its vision encoder and language decoder. Experiments are conducted on synthetic datasets with controlled noise, periodicity, and trend variations, as well as real‑world benchmarks from finance, healthcare, and industrial monitoring. Baselines include general‑purpose LLMs (e.g., GPT‑4), time‑series‑specialized LLMs, vision‑only MLLMs, and hybrid models such as GEM that simply concatenate modalities. Evaluation metrics cover answer accuracy, BLEU/ROUGE scores for textual quality, and mean absolute error for precise numeric extraction. Across all settings, MADI consistently outperforms baselines by 4–7 % absolute improvement, demonstrating superior ability to retrieve exact numeric values while also providing high‑level pattern explanations (trend, seasonality). Ablation studies confirm that each component—PA, DDI, and CTH—contributes meaningfully to the overall gain.

The authors acknowledge limitations: sensitivity to patch size and codebook dimensions, computational overhead of patch rendering for streaming scenarios, and the current focus on line‑plot visualizations (other visual forms like spectrograms would need adaptation). Future work may explore adaptive patching, more efficient rendering pipelines, and extensions to diverse visual encodings.

In summary, MADI presents a principled “alignment → disentanglement → highlighting” framework that bridges the gap between numerical precision and visual abstraction in multimodal time‑series reasoning, setting a new benchmark for flexible, interpretable, and accurate TSUR systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment