Latent Chain-of-Thought as Planning: Decoupling Reasoning from Verbalization

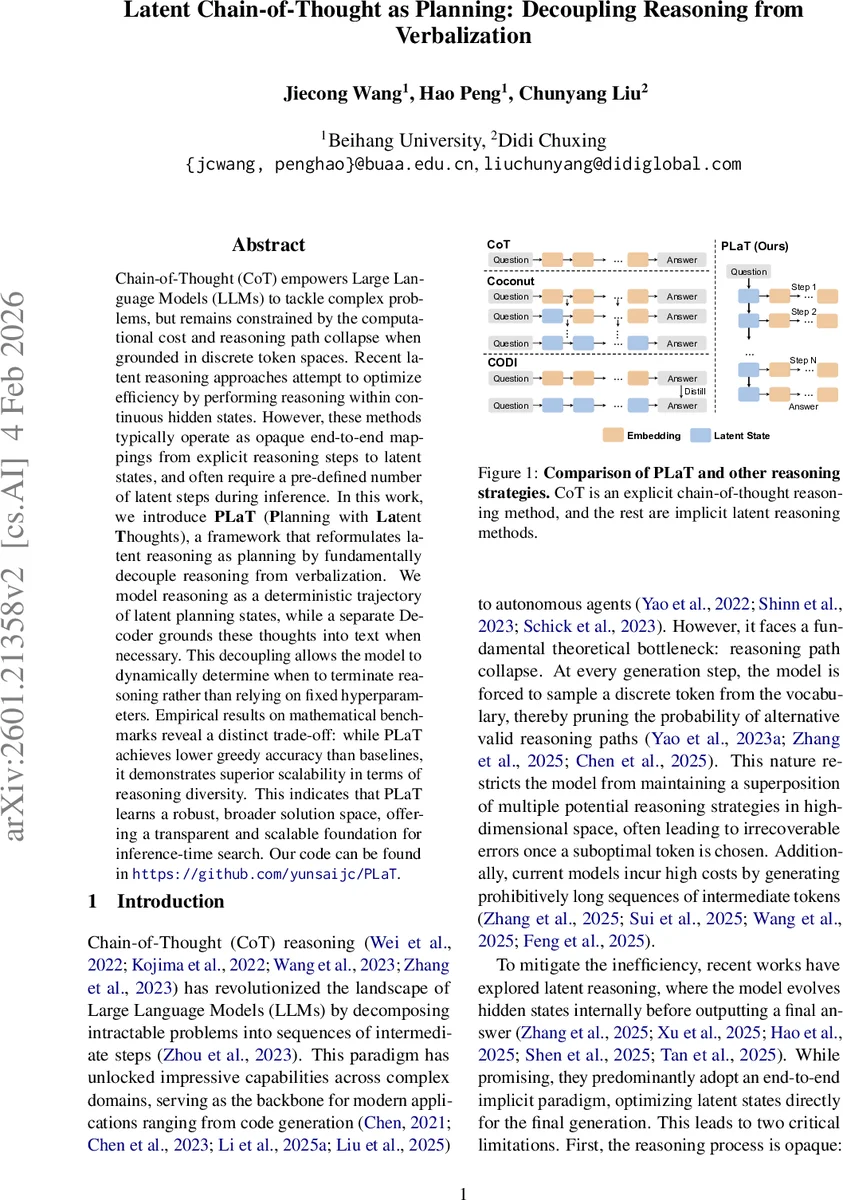

Chain-of-Thought (CoT) empowers Large Language Models (LLMs) to tackle complex problems, but remains constrained by the computational cost and reasoning path collapse when grounded in discrete token spaces. Recent latent reasoning approaches attempt to optimize efficiency by performing reasoning within continuous hidden states. However, these methods typically operate as opaque end-to-end mappings from explicit reasoning steps to latent states, and often require a pre-defined number of latent steps during inference. In this work, we introduce PLaT (Planning with Latent Thoughts), a framework that reformulates latent reasoning as planning by fundamentally decouple reasoning from verbalization. We model reasoning as a deterministic trajectory of latent planning states, while a separate Decoder grounds these thoughts into text when necessary. This decoupling allows the model to dynamically determine when to terminate reasoning rather than relying on fixed hyperparameters. Empirical results on mathematical benchmarks reveal a distinct trade-off: while PLaT achieves lower greedy accuracy than baselines, it demonstrates superior scalability in terms of reasoning diversity. This indicates that PLaT learns a robust, broader solution space, offering a transparent and scalable foundation for inference-time search. Our code can be found in https://github.com/yunsaijc/PLaT.

💡 Research Summary

The paper “Latent Chain‑of‑Thought as Planning: Decoupling Reasoning from Verbalization” introduces PLaT (Planning with Latent Thoughts), a novel framework that separates the internal reasoning process from the external language generation in large language models. Traditional Chain‑of‑Thought (CoT) methods require the model to emit explicit textual tokens at every reasoning step, which leads to two major problems: (1) path collapse, where an early sub‑optimal token prunes alternative reasoning trajectories, and (2) high computational cost due to the generation of long intermediate token sequences. Recent latent‑reasoning approaches mitigate these issues by moving part of the reasoning into continuous hidden states, but they typically treat the latent states as opaque end‑to‑end mappings and enforce a fixed number of latent steps during inference, limiting flexibility and interpretability.

PLaT addresses these limitations by reformulating latent reasoning as a planning problem in a high‑dimensional continuous space. The architecture consists of two distinct modules: a Planner and a Decoder. The Planner receives the encoded question, projects it into an initial latent state, and then autoregressively generates a deterministic trajectory of latent planning states ˜sₖ,ᵢ (i = 1…N_L) for each reasoning step k. These latent states live in a continuous manifold and are not sampled from a distribution, which preserves structural stability. The Decoder aggregates the N_L micro‑states using an Exponential Moving Average (EMA) to form a stabilized planning vector Sₖ. Sₖ is then projected and used as a soft prefix for a standard language model head, which generates the textual segment yₖ. Crucially, the Decoder conditions only on the current aggregated state, forcing the Planner to embed all necessary historical information into Sₖ.

Training proceeds in two phases. First, supervised fine‑tuning (SFT) optimizes a reconstruction loss: the cross‑entropy between ground‑truth text and the Decoder’s output conditioned on Sₖ. Gaussian noise is added to the latent states during SFT to encourage the Decoder to learn the manifold structure rather than memorizing point‑wise mappings. Second, a reinforcement‑learning (RL) stage refines the Decoder while keeping the Planner frozen. The authors introduce Group Relative Policy Optimization (GRPO), a variant of PPO that samples G different verbalizations from the same latent trajectory, computes normalized advantages based on answer correctness and format, and updates the Decoder parameters to maximize expected advantage. This design isolates exploration to the language generation phase, preserving the deterministic planning backbone.

For inference, the authors propose “Lazy Decoding.” Because the Planner operates entirely in latent space, the model can generate the full latent trajectory without producing intermediate text. At each step, only the first token of the Decoder’s output is greedily predicted; if it is a special answer token, the model fully decodes the final answer, otherwise it continues planning. This dramatically reduces token‑by‑token overhead while still allowing on‑demand inspection of intermediate reasoning by decoding any latent state.

Experiments are conducted on the GSM8K‑Aug dataset using a GPT‑2‑small backbone to ensure fair comparison with prior latent‑reasoning baselines. Results reveal a clear trade‑off: greedy (single‑sample) accuracy of PLaT is lower than that of existing latent models, but its Pass@k scaling curve is steeper, indicating superior exploration of the solution space when multiple samples are drawn. Moreover, latent states can be decoded into coherent natural‑language steps, providing interpretability that many prior latent methods lack.

In summary, PLaT contributes three major advances: (1) a clean separation of reasoning and verbalization that enables dynamic termination and computational efficiency, (2) deterministic latent planning that maintains structural integrity while allowing flexible exploration during decoding, and (3) a scalable inference strategy (Lazy Decoding) that reduces overhead without sacrificing interpretability. The work positions latent planning as a promising direction for building more flexible, transparent, and efficient reasoning systems in large language models, and suggests future extensions to larger architectures and non‑mathematical domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment