Mugi: Value Level Parallelism For Efficient LLMs

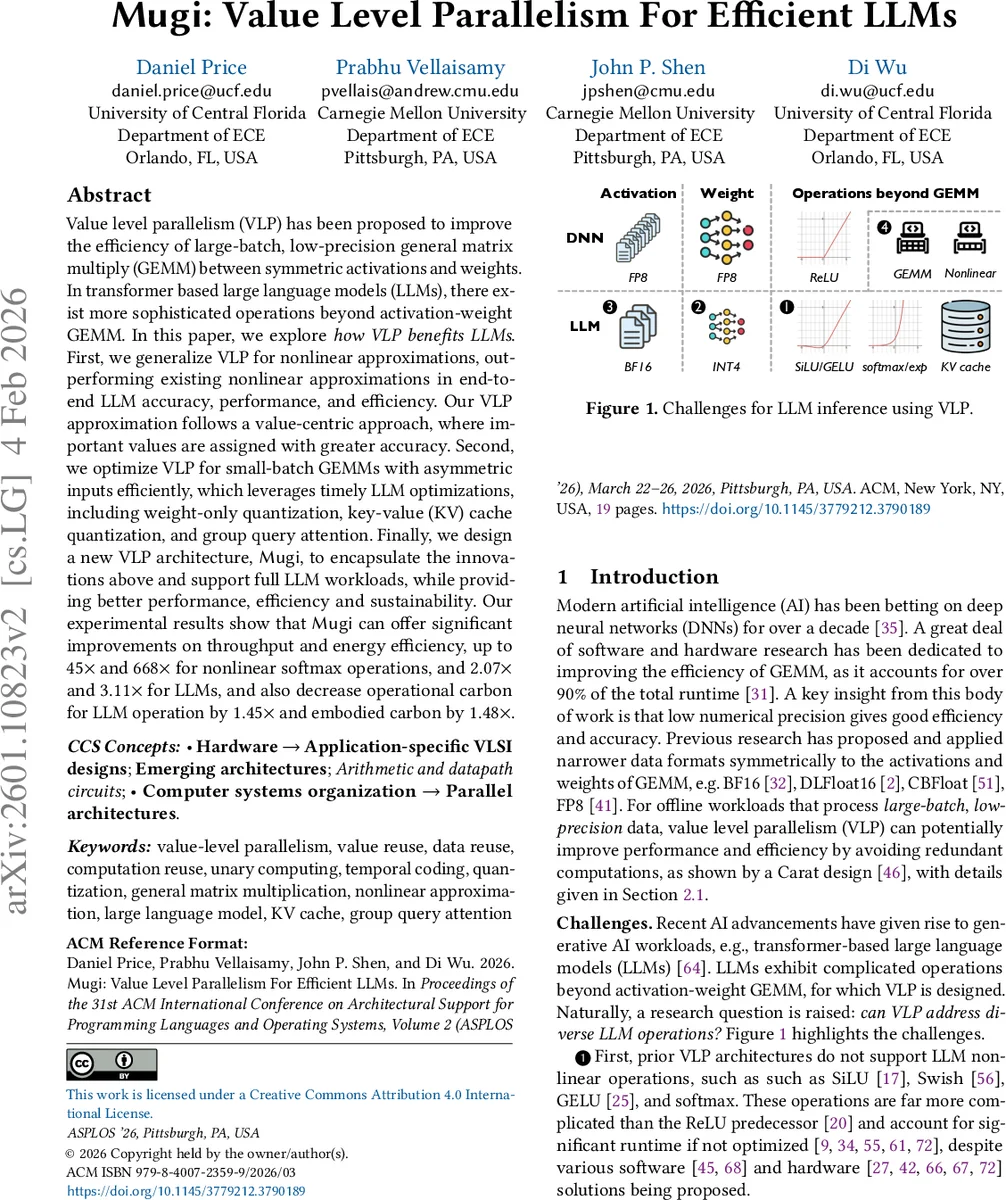

Value level parallelism (VLP) has been proposed to improve the efficiency of large-batch, low-precision general matrix multiply (GEMM) between symmetric activations and weights. In transformer based large language models (LLMs), there exist more sophisticated operations beyond activation-weight GEMM. In this paper, we explore how VLP benefits LLMs. First, we generalize VLP for nonlinear approximations, outperforming existing nonlinear approximations in end-to-end LLM accuracy, performance, and efficiency. Our VLP approximation follows a value-centric approach, where important values are assigned with greater accuracy. Second, we optimize VLP for small-batch GEMMs with asymmetric inputs efficiently, which leverages timely LLM optimizations, including weight-only quantization, key-value (KV) cache quantization, and group query attention. Finally, we design a new VLP architecture, Mugi, to encapsulate the innovations above and support full LLM workloads, while providing better performance, efficiency and sustainability. Our experimental results show that Mugi can offer significant improvements on throughput and energy efficiency, up to $45\times$ and $668\times$ for nonlinear softmax operations, and $2.07\times$ and $3.11\times$ for LLMs, and also decrease operational carbon for LLM operation by $1.45\times$ and embodied carbon by $1.48\times$.

💡 Research Summary

The paper introduces Mugi, a novel hardware architecture that extends Value Level Parallelism (VLP) beyond its original focus on large‑batch, low‑precision GEMM to address the full spectrum of operations found in transformer‑based large language models (LLMs). The authors first identify four key challenges that prevent existing VLP designs (e.g., Carat) from being effective for LLM inference: (1) lack of support for complex nonlinear functions such as softmax, SiLU, and GELU; (2) misalignment with modern asymmetric quantization schemes (weight‑only quantization (WOQ) and KV‑cache quantization (KVQ)) that use BF16‑INT4 formats; (3) inefficiency on the small batch sizes (≤8) required for real‑time inference; and (4) duplicated hardware for nonlinear ops and GEMM, which inflates both operational and embodied carbon.

To overcome these obstacles, Mugi proposes three tightly coupled innovations. First, it formulates a VLP‑based nonlinear approximation that works by splitting each input into sign‑mantissa (S‑M) and exponent (E) fields. The S‑M part indexes a row of a lookup table (LUT) that stores pre‑computed values for a contiguous range of inputs, while the exponent part selects the final value from that row via a temporal subscription mechanism. This two‑step process enables massive value reuse across the array, because many inputs share the same LUT row and only differ in the timing of their temporal spikes. The authors further improve accuracy by adopting a value‑centric strategy: they round mantissas to a reduced bit‑width (typically 3–4 bits) to shorten spike duration, and they allocate higher precision to the most frequent exponent range (approximately –3 to +4 for softmax, SiLU, and GELU).

Second, Mugi adapts VLP to asymmetric, small‑batch GEMM. It custom‑designs data formats that accommodate BF16 activations with INT4 weights (WOQ) and BF16‑INT4 KV‑cache (KVQ). By leveraging Grouped Query Attention (GQA), which turns many GEMV operations into small‑batch GEMM, Mugi can broadcast weight rows and lean output buffers, dramatically reducing memory traffic and buffer area.

Third, Mugi unifies the hardware for both nonlinear approximations and GEMM within a single array of temporal converters, accumulators, and shared LUTs. This eliminates the need for separate vector units, cutting silicon area by roughly 30 % and reducing both operational power and embodied carbon.

The evaluation spans several state‑of‑the‑art LLMs (e.g., LLaMA‑7B, GPT‑NeoX‑20B). For isolated nonlinear kernels, Mugi achieves up to 45× higher throughput and 668× better energy efficiency for softmax, while maintaining comparable accuracy. In end‑to‑end LLM inference, it delivers 2.07× speed‑up and 3.11× energy savings. Carbon modeling shows a 1.45× reduction in operational CO₂‑eq and a 1.48× reduction in embodied CO₂‑eq.

Overall, the paper’s contributions are: (1) the first VLP‑based input‑centric approximation for complex nonlinear functions; (2) a systematic adaptation of VLP to asymmetric, small‑batch GEMM used in modern LLM pipelines; (3) a hardware‑level integration that reuses the same array for both GEMM and nonlinear ops, improving sustainability; and (4) a thorough experimental validation demonstrating significant performance, efficiency, and carbon‑footprint gains. Limitations include the added timing‑control complexity of temporal spikes and the need for ASIC‑level validation beyond RTL simulation. Future work could explore higher‑bit precision support, scaling to larger token windows, and extending the approach to other nonlinear primitives such as attention‑score scaling.

Comments & Academic Discussion

Loading comments...

Leave a Comment