BanglaIPA: Towards Robust Text-to-IPA Transcription with Contextual Rewriting in Bengali

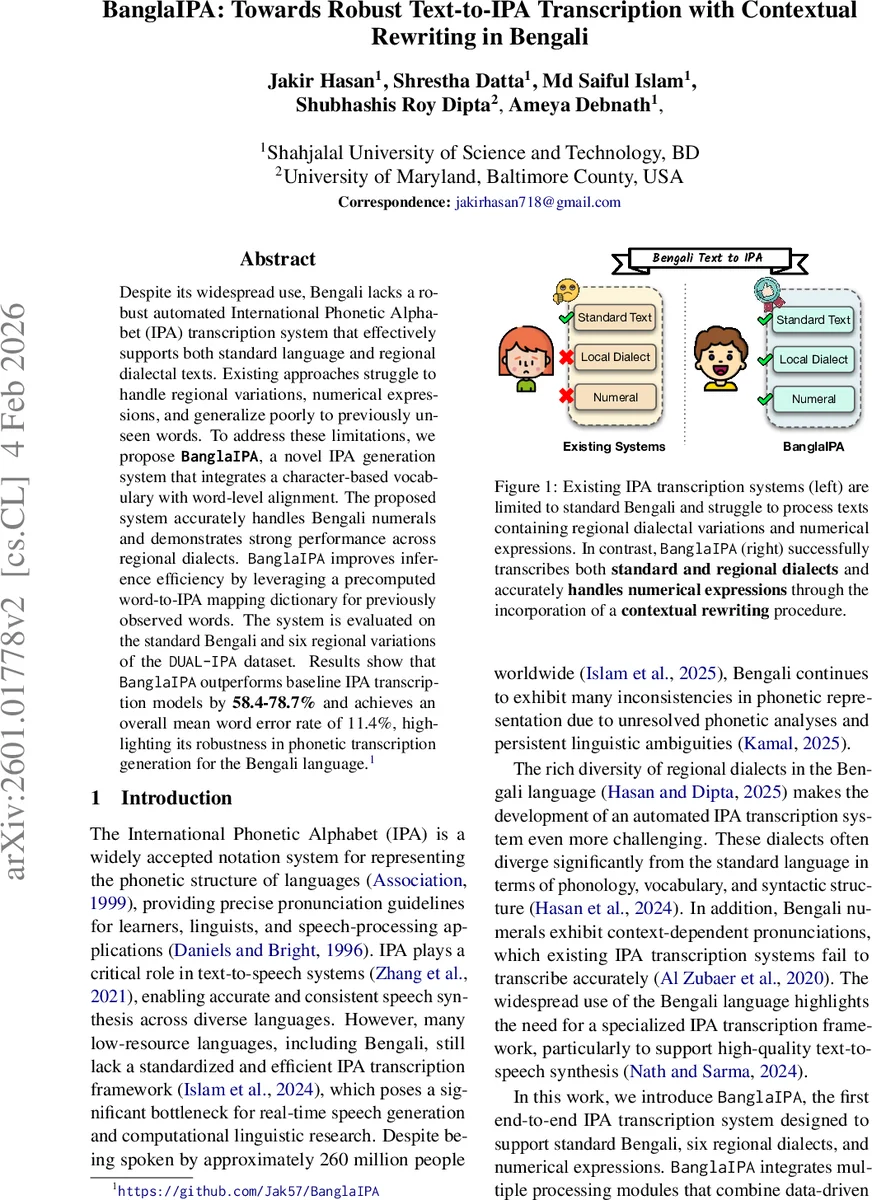

Despite its widespread use, Bengali lacks a robust automated International Phonetic Alphabet (IPA) transcription system that effectively supports both standard language and regional dialectal texts. Existing approaches struggle to handle regional variations, numerical expressions, and generalize poorly to previously unseen words. To address these limitations, we propose BanglaIPA, a novel IPA generation system that integrates a character-based vocabulary with word-level alignment. The proposed system accurately handles Bengali numerals and demonstrates strong performance across regional dialects. BanglaIPA improves inference efficiency by leveraging a precomputed word-to-IPA mapping dictionary for previously observed words. The system is evaluated on the standard Bengali and six regional variations of the DUAL-IPA dataset. Experimental results show that BanglaIPA outperforms baseline IPA transcription models by 58.4-78.7% and achieves an overall mean word error rate of 11.4%, highlighting its robustness in phonetic transcription generation for the Bengali language.

💡 Research Summary

BanglaIPA is an end‑to‑end system that generates International Phonetic Alphabet (IPA) transcriptions for standard Bengali, six major regional dialects, and numeric expressions. The authors identify three major shortcomings of existing Bengali IPA tools: (1) inability to handle regional phonological variation, (2) failure to correctly pronounce numerals that depend on surrounding context, and (3) poor generalisation to out‑of‑vocabulary (OOV) characters and unseen words. To overcome these issues, BanglaIPA integrates three complementary components.

First, a contextual rewriting stage uses a large language model (GPT‑4.1‑nano) to convert any numeric token in the input into its appropriate word form, guided by the surrounding textual context. This step mirrors the English phenomenon where “1 dollar” and “1st place” are pronounced differently despite sharing the same digit. By rewriting numbers into linguistically appropriate lexical forms, downstream phonetic conversion can operate on a purely orthographic sequence.

Second, the system employs a State Alignment (STAT) algorithm to split each word into sub‑word segments based on a predefined Bengali character set. Each segment receives a binary state flag indicating whether it requires model‑based IPA generation (true) or can be left unchanged (false). This design isolates foreign characters, symbols, or rare graphemes that were never seen during training, preventing them from being fed into the neural model and thereby mitigating OOV errors.

Third, a Word‑IPA dictionary cache is built from the rewritten text. Each unique word becomes a key; its IPA transcription is initially empty and later filled after processing. When a word reappears, the cached transcription is retrieved, eliminating redundant model inference and dramatically improving inference speed.

The phonetic generation itself is performed by a lightweight Transformer encoder‑decoder with roughly 8.6 million parameters. The model is trained from scratch on the DUAL‑IPA dataset, which contains parallel standard‑Bengali and dialectal text‑IPA pairs. Training uses RMSprop optimisation and a sparse categorical cross‑entropy loss for 40 epochs (learning rate 0.001, batch size 64).

Experimental evaluation covers the standard Bengali test set plus six dialects (Chittagong, Kishoreganj, Narail, Narsingdi, Rangpur, Tangail). BanglaIPA achieves an overall word error rate (WER) of 11.4%, outperforming two strong baselines: MT5 (53.5% WER) and UMT5 (27.4% WER). Relative improvements range from 58.4 % to 78.7 %. Region‑wise analysis shows consistent performance, with the lowest WER of 10.4 % on the Rangpur dialect and all other dialects staying near the 11 % mark.

A focused ablation on the numeric rewriting stage demonstrates its impact: without LLM‑based rewriting, the WER between raw numeric input and human‑validated word forms is 27 %; with the GPT‑4.1‑nano rewriting, this drops to 1.3 %, confirming that accurate numeral conversion is crucial for downstream phonetic accuracy.

Related work is surveyed, highlighting that prior rule‑based systems only address standard Bengali and numerals, while recent DGT approaches handle dialects but lack robust numeral handling and OOV mitigation. BanglaIPA uniquely combines contextual LLM rewriting, STAT‑driven sub‑word gating, and dictionary caching to achieve both high accuracy and efficiency.

Limitations include the fact that training data cover only six dialects; performance on unseen dialects may degrade, and the LLM rewriting module adds modest computational overhead. Future directions involve expanding dialect coverage and training a compact, domain‑specific language model to replace the external LLM, thereby reducing latency while preserving the contextual rewriting capability.

In summary, BanglaIPA represents a significant advance in Bengali grapheme‑to‑phoneme conversion, delivering a robust, fast, and dialect‑aware IPA transcription pipeline that can serve downstream applications such as text‑to‑speech synthesis, linguistic research, and language technology development for one of the world’s most‑spoken low‑resource languages.

Comments & Academic Discussion

Loading comments...

Leave a Comment