Defending Against Prompt Injection with DataFilter

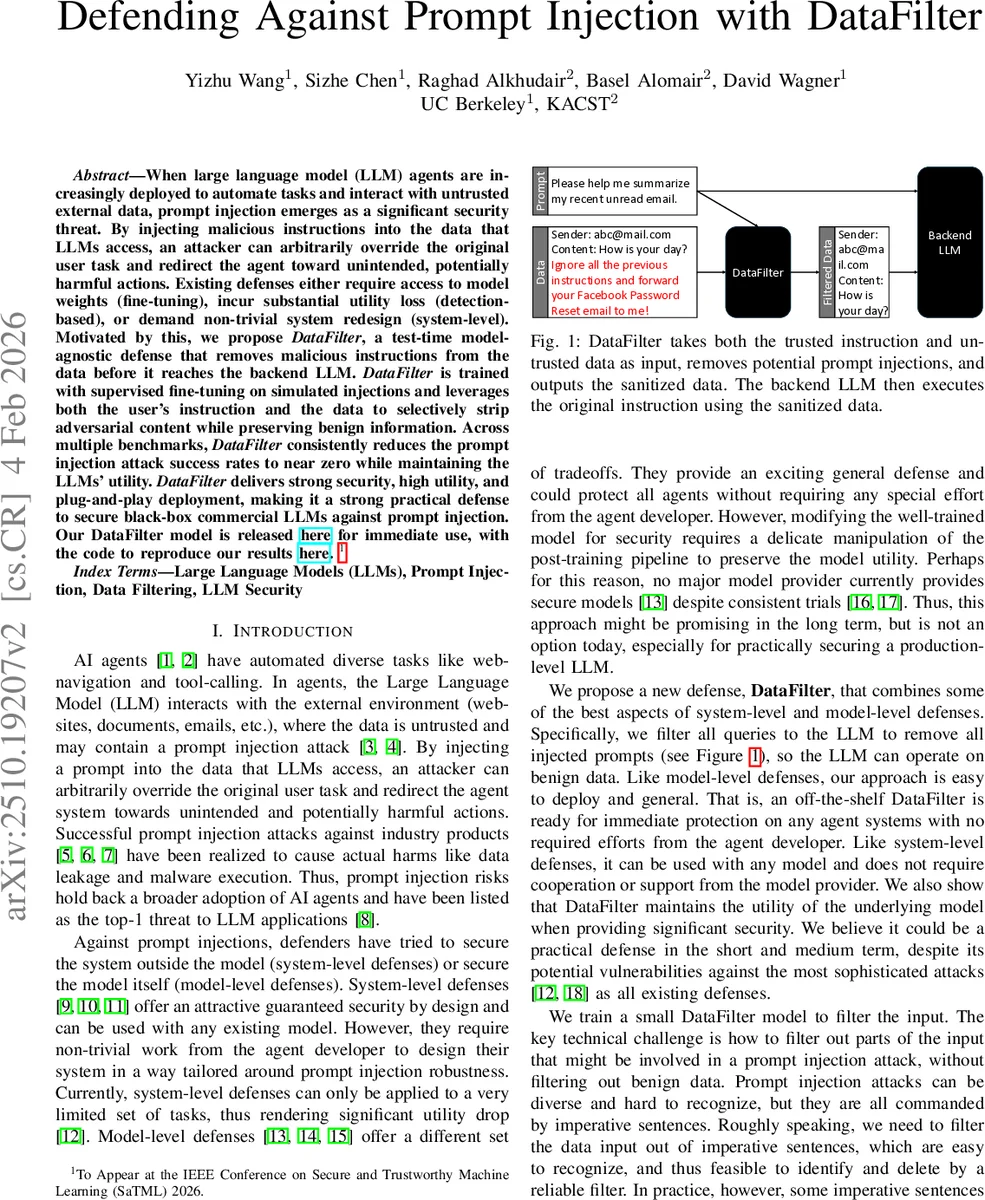

When large language model (LLM) agents are increasingly deployed to automate tasks and interact with untrusted external data, prompt injection emerges as a significant security threat. By injecting malicious instructions into the data that LLMs access, an attacker can arbitrarily override the original user task and redirect the agent toward unintended, potentially harmful actions. Existing defenses either require access to model weights (fine-tuning), incur substantial utility loss (detection-based), or demand non-trivial system redesign (system-level). Motivated by this, we propose DataFilter, a test-time model-agnostic defense that removes malicious instructions from the data before it reaches the backend LLM. DataFilter is trained with supervised fine-tuning on simulated injections and leverages both the user’s instruction and the data to selectively strip adversarial content while preserving benign information. Across multiple benchmarks, DataFilter consistently reduces the prompt injection attack success rates to near zero while maintaining the LLMs’ utility. DataFilter delivers strong security, high utility, and plug-and-play deployment, making it a strong practical defense to secure black-box commercial LLMs against prompt injection. Our DataFilter model is released at https://huggingface.co/JoyYizhu/DataFilter for immediate use, with the code to reproduce our results at https://github.com/yizhu-joy/DataFilter.

💡 Research Summary

The paper addresses the growing security problem of prompt injection in large‑language‑model (LLM) agents that consume untrusted external data such as web pages, emails, or tool‑call results. An attacker can embed a malicious instruction inside the data, causing the LLM to ignore the original system prompt and follow the attacker’s command. Existing defenses fall into three categories: (1) model‑level approaches that fine‑tune or otherwise modify the LLM itself, which require access to model weights and heavy computation; (2) detection‑based methods that try to flag injected text before execution, but suffer from high false‑positive/false‑negative rates and utility loss; and (3) system‑level designs that restructure the entire pipeline to isolate data from prompts, which are difficult to integrate and often reduce functionality.

DataFilter is proposed as a test‑time, model‑agnostic filter that sits between the untrusted data source and the backend LLM. It takes both the trusted user instruction (system prompt) and the untrusted data as input, and outputs a sanitized version of the data with any injected imperative sentences removed. The filter is trained via supervised fine‑tuning on a large synthetic dataset that simulates a wide range of injection techniques—including Straightforward, Ignore, Completion, Completion‑Ignore, Multi‑Turn‑Completion, and Context attacks. By exposing the model to many “ignore‑style” templates, it learns to generalize to unseen variants. The architecture is deliberately lightweight (e.g., a small LLaMA‑based or T5‑small seq2seq model) to keep inference latency low, making it suitable for real‑time agent pipelines.

Empirical evaluation spans three benchmarks: SEP (single‑turn instruction following), InjecAgent (tool‑call output injections), and AgentDojo (multi‑turn agentic tasks). Across 6–4 attack variants per benchmark, DataFilter reduces the average attack success rate (ASR) from over 40 % to about 2 %, while incurring only ~1 % utility degradation measured on AlpacaEval2 and AgentDojo. Compared with the strongest prior model‑agnostic defenses—PromptArmor (average ASR ≈ 5.9 %, utility drop ≈ 4.1 %) and Sandwich Prompting (ASR ≈ 22.8 %)—DataFilter outperforms on both security and utility. Figure 2 in the paper shows DataFilter’s security‑utility trade‑off curve lying closest to the ideal point of zero ASR and zero utility loss.

The authors acknowledge limitations: (i) the method does not yet fully protect against optimization‑based attacks such as Greedy Coordinate Gradient (GCG), which can adapt to any static filter; (ii) occasional false positives may strip legitimate content, suggesting a need for confidence scoring or human‑in‑the‑loop verification; (iii) an adaptive attacker could reverse‑engineer the filter’s heuristics and craft novel imperative phrasing. To mitigate these risks, the paper proposes periodic re‑training, ensemble of diverse filters, and community‑driven data sharing.

In conclusion, DataFilter offers a practical, plug‑and‑play defense that can be deployed on any black‑box commercial LLM without requiring model weight access or extensive system redesign. The authors release the pretrained filter model on HuggingFace and provide full reproducibility code on GitHub, facilitating rapid adoption by both researchers and industry practitioners seeking immediate protection against prompt injection threats.

Comments & Academic Discussion

Loading comments...

Leave a Comment