Scaling Spoken Language Models with Syllabic Speech Tokenization

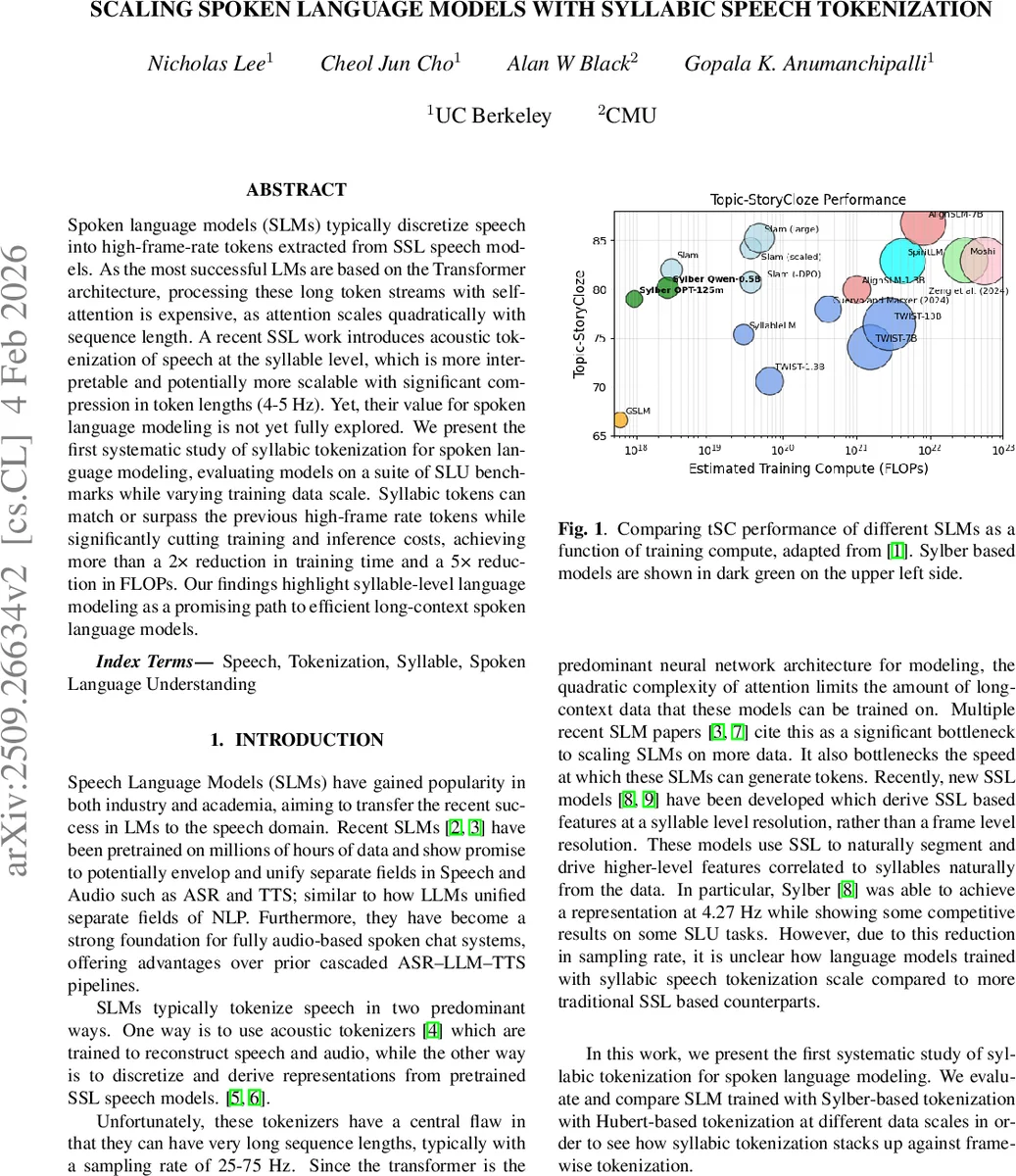

Spoken language models (SLMs) typically discretize speech into high-frame-rate tokens extracted from SSL speech models. As the most successful LMs are based on the Transformer architecture, processing these long token streams with self-attention is expensive, as attention scales quadratically with sequence length. A recent SSL work introduces acoustic tokenization of speech at the syllable level, which is more interpretable and potentially more scalable with significant compression in token lengths (4-5 Hz). Yet, their value for spoken language modeling is not yet fully explored. We present the first systematic study of syllabic tokenization for spoken language modeling, evaluating models on a suite of SLU benchmarks while varying training data scale. Syllabic tokens can match or surpass the previous high-frame rate tokens while significantly cutting training and inference costs, achieving more than a 2x reduction in training time and a 5x reduction in FLOPs. Our findings highlight syllable-level language modeling as a promising path to efficient long-context spoken language models.

💡 Research Summary

The paper investigates whether syllable‑level acoustic tokenization can replace the conventional high‑frame‑rate tokenization used in spoken language models (SLMs) and still achieve competitive performance while dramatically reducing computational costs. Traditional SLMs rely on tokenizers such as HuBERT that produce tokens at 25‑75 Hz, leading to very long token sequences. Because Transformer‑based language models have quadratic attention complexity, these long sequences become a major bottleneck for scaling both the amount of training data and the context length.

Recent self‑supervised speech (SSL) research introduced Sylber, a model that extracts embeddings aligned with natural syllable boundaries and operates at roughly 4‑5 Hz. The authors ask: does this coarse, linguistically motivated representation retain enough information for downstream spoken language understanding (SLU) tasks, and how does it affect scaling behavior?

To answer this, they conduct a systematic comparison between Sylber‑based tokenization and HuBERT‑based tokenization using the Slamkit framework. Sylber embeddings are clustered with k‑means into vocabularies of 5 k, 10 k, 20 k, and 40 k units; HuBERT tokens use a 500‑unit vocabulary with deduplication to halve the effective sampling rate to 25 Hz. Two Transformer backbones are evaluated: a smaller OPT‑125M and a larger Qwen2.5‑0.5B, both initialized with text‑pretrained weights in a TWIST‑style fashion.

Training data are incrementally increased across three mixes: (1) LibriSpeech alone, (2) LibriSpeech + LibriLight, and (3) LibriSpeech + LibriLight + Spoken TinyStories (STS). Each model is trained for a single epoch with hyperparameters identical to prior work, allowing a clean comparison of tokenization effects across data scales.

Four evaluation metrics are used: (i) sBLIMP – a spoken version of the BLIMP grammaticality test, (ii) sSC – spoken story cloze where the model must distinguish a relevant continuation from distractors, (iii) tSC – topic story cloze with a stronger topical mismatch, and (iv) Generation Perplexity (GenPPL) – measured by generating speech tokens, converting them to audio with a vocoder, transcribing with Whisper‑large‑v3‑turbo, and scoring the resulting text with Llama‑3.2‑1B. For generation, the maximum token length is set to 150 for HuBERT and 30 for Sylber to keep the generated audio duration comparable, acknowledging Sylber’s 5× coarser time resolution.

Key findings:

-

Token Count Reduction – Sylber reduces the total number of training tokens by roughly a factor of five (e.g., 13.1 M vs 66.4 M for LibriSpeech). This directly translates into lower memory footprints and faster training.

-

Performance on SLU Benchmarks – Across all data scales, Sylber matches or exceeds HuBERT on sBLIMP, achieving higher grammaticality scores despite seeing far fewer tokens. For sSC, Sylber lags behind HuBERT when only LibriSpeech + LibriLight are used, but overtakes it once STS is added, suggesting that richer narrative data benefits the syllable representation. tSC results show a near‑linear improvement for Sylber, reaching parity with HuBERT after the full data mix.

-

Generation Perplexity – Sylber models exhibit steeper declines in GenPPL as training tokens increase, indicating faster convergence in generative quality.

-

Training Efficiency – On an 8‑GPU A100‑80GB DGX node, the full HuBERT‑based model (Qwen2.5‑0.5B) required 8.5 hours, whereas the Sylber‑20k model completed in 3 hours, a >2× speedup. FLOP counts are reduced by roughly fivefold.

-

Vocabulary Size Effects – Across the four Sylber vocabularies, 20 k tokens consistently yields the best or near‑best scores. Increasing vocab size beyond this does not bring substantial gains, implying that the coarse k‑means clustering already captures the essential syllabic variability.

The authors also note correlations between pretraining mix and benchmark performance: adding STS improves sSC markedly for both tokenizers, while its impact on sBLIMP is mixed, sometimes slightly decreasing scores for Sylber‑OPT models. This mirrors observations from prior HuBERT studies, suggesting that data composition influences downstream tasks similarly across tokenization schemes.

In conclusion, syllable‑level tokenization provides a compelling path toward scalable, cost‑effective spoken language models. By compressing the token stream by a factor of five, Sylber enables longer effective context windows, faster training, and lower inference costs without sacrificing (and sometimes improving) performance on grammaticality, story‑completion, and generation tasks. The work opens several avenues for future research: more sophisticated clustering or learned discrete representations for syllables, integration with multimodal speech‑text models, and exploration of even larger data regimes to validate scaling laws for syllable‑based SLMs.

Comments & Academic Discussion

Loading comments...

Leave a Comment