Less Precise Can Be More Reliable: A Systematic Evaluation of Quantization's Impact on CLIP Beyond Accuracy

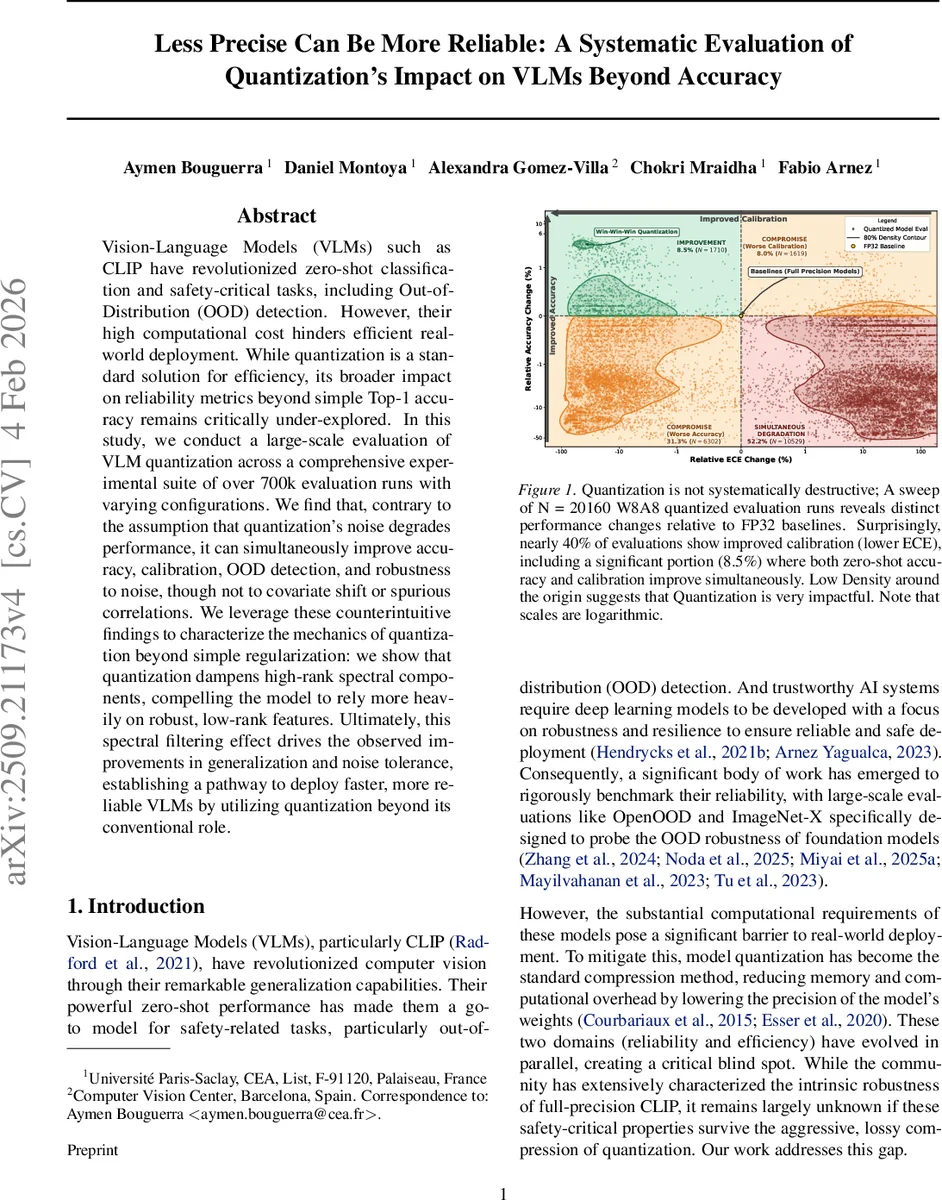

Vision-Language Models (VLMs) such as CLIP have revolutionized zero-shot classification and safety-critical tasks, including Out-of-Distribution (OOD) detection. However, their high computational cost hinders efficient real-world deployment. While quantization is a standard solution for efficiency, its broader impact on reliability metrics beyond simple Top-1 accuracy remains critically under-explored. In this study, we conduct a large-scale evaluation of VLM quantization across a comprehensive experimental suite of over 700k evaluation runs with varying configurations. We find that, contrary to the assumption that quantization’s noise degrades performance, it can simultaneously improve accuracy, calibration, OOD detection, and robustness to noise, though not to covariate shift or spurious correlations. We leverage these counterintuitive findings to characterize the mechanics of quantization beyond simple regularization: we show that quantization dampens high-rank spectral components, compelling the model to rely more heavily on robust, low-rank features. Ultimately, this spectral filtering effect drives the observed improvements in generalization and noise tolerance, establishing a pathway to deploy faster, more reliable VLMs by utilizing quantization beyond its conventional role.

💡 Research Summary

This paper presents a comprehensive, large‑scale study of how quantization affects the reliability of CLIP‑style vision‑language models (VLMs) beyond simple top‑1 accuracy. The authors evaluate ten diverse VLM architectures—including ViT‑based CLIP, ConvNeXt, SigLIP, ALIGN, and CoCa—under two deployment scenarios: (1) visual‑only quantization, where only the image encoder is quantized, and (2) joint visual‑text quantization, where both encoders share the same reduced precision. Sixteen quantization techniques are examined, split evenly between data‑free post‑training quantization (PTQ) methods (e.g., MinMax, SmoothQuant, IGQ‑ViT) and data‑aware quantization‑aware training (QA‑T) approaches (e.g., LSQ, Straight‑Through Estimator variants, LoRA‑based fine‑tuning). Bit‑widths of 4, 6, and 8 bits are explored, yielding 20 160 distinct model‑quantizer configurations. In total, more than 700 000 evaluation runs are performed across a battery of reliability benchmarks.

The authors define five reliability dimensions: (1) robustness to quantization noise across bit‑widths and methods, (2) uncertainty quality and calibration measured by Expected Calibration Error (ECE), (3) out‑of‑distribution (OOD) detection using AUROC, FPR95, and six scoring functions (MSP, Energy, Entropy, MCM, NegLabel, EOE), (4) robustness to natural and synthetic distribution shifts (ImageNet‑A,‑R,‑V2,‑Sketch, CIFAR‑10‑C), and (5) sensitivity to spurious correlations, quantified via ΔRSG and Added Vulnerability metrics derived from CounterAnimal‑style splits. This multi‑metric approach allows the authors to capture nuanced trade‑offs that single‑metric studies miss.

Key empirical findings are striking. Approximately 40 % of quantized models exhibit lower ECE than their full‑precision counterparts, and 8.5 % improve both accuracy and calibration simultaneously. OOD detection generally benefits from quantization, with average AUROC gains of 2–3 % across the six scoring functions. Noise robustness (e.g., CIFAR‑10‑C at severity 3) also improves, suggesting that quantization acts as a regularizer that suppresses sensitivity to high‑frequency perturbations. Conversely, performance under covariate shift (e.g., ImageNet‑Sketch) and on spurious‑correlation test sets degrades, indicating that quantization discards fine‑grained, high‑rank features that are useful for handling distributional changes but also prone to overfitting.

To explain these contradictory effects, the authors conduct a spectral analysis of the weight matrices and activation maps. Quantization behaves like a low‑pass filter: it attenuates high‑rank (high‑frequency) components while preserving low‑rank (low‑frequency) structure. This “spectral filtering” forces the model to rely more heavily on coarse, globally coherent features, which are inherently more robust to random noise and better for OOD discrimination. However, the loss of high‑rank detail harms performance on tasks that require subtle texture or shape cues, such as domain‑shift benchmarks and spurious‑feature detection.

The study also uncovers the pivotal role of parameter redundancy (or “capacity margin”). Models that occupy the “high‑redundancy zone”—typically larger architectures trained on curated datasets (e.g., DFN‑ViT‑B‑32)—retain performance after quantization and often improve. In contrast, models in the “low‑redundancy zone,” such as small Base‑32 variants trained on noisy LAION‑2B data, suffer severe accuracy drops because they lack surplus representational capacity to absorb quantization noise. A strong linear correlation (r ≈ 0.91) between PTQ degradation and sensitivity to weight dropout confirms that redundancy is the primary buffer against quantization artifacts.

Another nuanced insight concerns the difference between PTQ and QA‑T. PTQ methods preserve the original FP32 embedding manifold; higher cosine similarity between original and quantized visual embeddings predicts better accuracy. QA‑T, however, decouples performance from embedding fidelity: fine‑tuning can move the model to a new, quantization‑friendly minimum that diverges from the FP32 representation yet yields higher accuracy. This demonstrates that quantization can act not only as a compression tool but also as an implicit regularizer that reshapes the loss landscape toward flatter minima.

In summary, the paper establishes that quantization can be a double‑edged sword: it can simultaneously boost efficiency, calibration, and OOD detection while compromising robustness to covariate shift and increasing reliance on spurious cues. The net effect depends critically on model size, data quality, and the amount of redundant capacity available. Practitioners aiming to deploy VLMs in real‑time, safety‑critical settings should therefore consider quantization as a strategic design choice—selecting appropriate bit‑widths, quantization methods, and possibly augmenting redundancy (e.g., via larger models or data cleaning) to reap reliability gains without sacrificing performance on the target distribution. Future work suggested includes designing quantization schemes that explicitly control the spectral cutoff, and developing predictive metrics for redundancy to guide quantization decisions a priori.

Comments & Academic Discussion

Loading comments...

Leave a Comment