Evaluating and Steering Modality Preferences in Multimodal Large Language Model

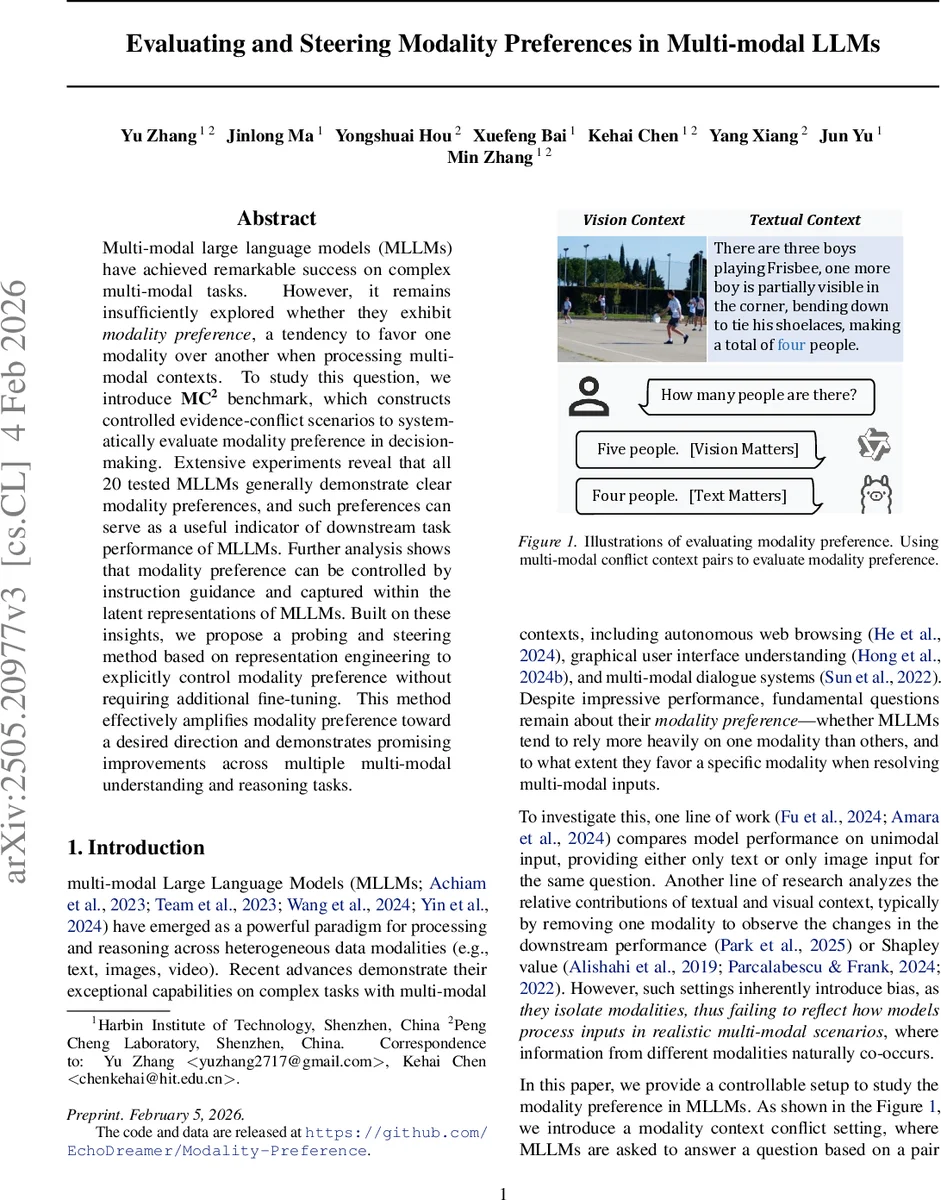

Multi-modal large language models (MLLMs) have achieved remarkable success on complex multi-modal tasks. However, whether they exhibit **modality preference**, a tendency to favor one modality over another when processing multi-modal contexts, remains insufficiently explored. To study this question, we introduce the **MC²** benchmark, which constructs controlled evidence-conflict scenarios to systematically evaluate modality preference in decision-making. Extensive experiments reveal that all 20 tested MLLMs generally demonstrate clear modality preferences, and that such preferences can serve as a useful indicator of the downstream task performance of MLLMs. Further analysis shows that modality preference can be controlled by instruction guidance and is captured within the latent representations of MLLMs. Building on these insights, we propose a probing and steering method based on representation engineering that explicitly controls modality preference without requiring additional fine-tuning. This method effectively amplifies modality preference toward a desired direction and yields promising improvements across multiple multi-modal understanding and reasoning tasks.

💡 Research Summary

The paper investigates a previously under‑explored behavior of multimodal large language models (MLLMs): modality preference, i.e., a systematic tendency to rely more heavily on one modality (text or vision) when both are presented together. To measure this phenomenon in a controlled manner, the authors introduce the MC² benchmark (Modality Context Conflict). MC² consists of 2,000 carefully curated samples derived from the TDIUC visual question answering dataset. In each sample, a question is paired with two conflicting pieces of evidence: a visual context (image + caption) that supports one answer (Aᵥ) and a textual context (synthetically generated distractor text) that supports a different answer (Aₜ). The question is reformulated as a binary choice between Aᵥ and Aₜ, and the order of the two options is swapped to eliminate positional bias. Models are instructed to answer based solely on the provided contexts. The authors also verify that models reach high accuracy (>95%) when given only the visual or only the textual context, so that any deviation in the conflict setting can be attributed to modality preference rather than to a failure to comprehend either context.
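The binary-choice construction with swapped option order can be sketched as follows. This is a minimal illustration, not the benchmark's released code: the `ConflictItem` schema, the `build_conflict_items` helper, and the prompt wording are all hypothetical, and the image is assumed to be passed to the model separately from the text prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConflictItem:
    """One binary-choice prompt built from a conflict sample (hypothetical schema)."""
    prompt: str
    option_a: str
    option_b: str


def build_conflict_items(question: str, text_context: str,
                         answer_vision: str, answer_text: str) -> list[ConflictItem]:
    """Return both option orderings of one conflict sample.

    answer_vision is the answer supported by the image (A_v); answer_text is the
    answer supported by the distractor text (A_t). Emitting both orderings lets
    positional bias be separated from modality preference. The prompt template
    below is an assumption for illustration only.
    """
    items = []
    for first, second in ((answer_vision, answer_text), (answer_text, answer_vision)):
        prompt = (
            f"Context: {text_context}\n"
            f"Question: {question}\n"
            "Answer based only on the given image and context.\n"
            f"(A) {first}\n(B) {second}\n"
            "Answer with A or B."
        )
        items.append(ConflictItem(prompt, first, second))
    return items


# Usage: the image is assumed to show a red bus, while the text claims it is blue.
items = build_conflict_items(
    "What color is the bus?",
    "The bus in the photo is painted blue.",
    answer_vision="red",
    answer_text="blue",
)
for item in items:
    print(item.prompt, end="\n\n")
```

Generating both orderings from a single sample keeps the two prompts identical except for option position, which is what makes the swap a clean control.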

The authors evaluate 20 open‑source MLLMs (including LLaVA‑1.5‑7B, OneVision‑7B, InternVL3‑14B, Qwen2.5‑VL‑7B, etc.) together with the proprietary GPT‑4o‑mini. For each model, they compute three scores: S_vision (the proportion of conflict‑pair answers aligned with the visual context), S_text (the proportion aligned with the textual context), and S_others (the proportion of inconsistent or ambiguous answers). The Vision Ratio is defined as S_vision / (S_vision + S_text); values above 0.5 indicate a visual preference and values below 0.5 a textual preference. The results reveal a clear pattern: the majority of models exhibit a strong textual bias, while only a few larger models (e.g., Qwen2.5‑VL, InternVL3) show a modest visual tilt. The authors further correlate the Vision Ratio with downstream performance on tasks that are either vision‑heavy (VQA‑2.0, OK‑VQA, NLVR2) or text‑heavy (multimodal dialogue, document‑grounded QA). Models with higher Vision Ratios consistently perform better on vision‑centric benchmarks, suggesting that modality preference is a useful predictor of task suitability.
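The scoring step can be made concrete with a short sketch. Two details here are assumptions, not taken from the paper: (1) a sample counts toward S_vision or S_text only when the model picks the same answer under both option orderings, with everything else falling into S_others; and (2) Pearson correlation is used to relate Vision Ratios to downstream scores. The helper names `preference_scores` and `pearson` are hypothetical.

```python
import math
from typing import Iterable, Sequence, Tuple

# (prediction under original order, prediction under swapped order, A_v, A_t);
# predictions are assumed to be already mapped from option letters to answer strings.
Record = Tuple[str, str, str, str]


def preference_scores(records: Iterable[Record]) -> dict:
    """Compute S_vision, S_text, S_others and the Vision Ratio.

    ASSUMPTION: an answer is attributed to a modality only if both orderings
    agree on it; inconsistent or unmatched answers count as "others".
    """
    counts = {"vision": 0, "text": 0, "others": 0}
    for pred_orig, pred_swapped, ans_vision, ans_text in records:
        if pred_orig == pred_swapped == ans_vision:
            counts["vision"] += 1
        elif pred_orig == pred_swapped == ans_text:
            counts["text"] += 1
        else:
            counts["others"] += 1
    n = sum(counts.values())
    if n == 0:
        raise ValueError("no records to score")
    s_vision = counts["vision"] / n
    s_text = counts["text"] / n
    decided = s_vision + s_text
    # Vision Ratio = S_vision / (S_vision + S_text); undefined if nothing was decided.
    vision_ratio = s_vision / decided if decided > 0 else float("nan")
    return {"S_vision": s_vision, "S_text": s_text,
            "S_others": counts["others"] / n, "vision_ratio": vision_ratio}


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation, e.g. between per-model Vision Ratios and benchmark scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("zero variance; correlation undefined")
    return cov / math.sqrt(var_x * var_y)


# Usage: one vision-aligned, two text-aligned, and one inconsistent sample.
records = [
    ("cat", "cat", "cat", "dog"),
    ("dog", "dog", "cat", "dog"),
    ("dog", "dog", "cat", "dog"),
    ("cat", "dog", "cat", "dog"),
]
print(preference_scores(records))
```

In this toy input the Vision Ratio is 0.25 / 0.75 ≈ 0.33, i.e., below 0.5, which the definition above reads as a textual preference. Excluding S_others from the denominator means the ratio describes only the samples where the model committed to one modality.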

Beyond measurement, the paper explores two avenues for steering modality preference without full fine‑tuning. First, instruction guidance: adding explicit prompts such as “Please prioritize the image information” shifts the Vision Ratio upward by 8–12 percentage points across most models. Second, representation engineering: the authors probe the latent space of each MLLM (e.g., cross‑attention outputs, multimodal token embeddings) to identify a direction v that correlates with modality preference. Adding a scaled copy δ·v of this direction to the representation before the final decoding step amplifies or suppresses the model’s reliance on a chosen modality, with the sign of δ determining which modality is favored. This intervention is training‑free, computationally cheap, and yields consistent performance gains of 2–5 percentage points on a suite of multimodal understanding and reasoning tasks.
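The probe-then-steer idea can be illustrated on toy vectors. This sketch substitutes one common representation-engineering choice, the normalized difference of class means, for whatever probe the paper actually uses. The layer, the labeling of hidden states as "vision-preferring" or "text-preferring", the value of δ, and all helper names (`preference_direction`, `steer`, `probe_score`) are hypothetical. In a real MLLM the addition would typically be applied inside the forward pass, for example through a PyTorch forward hook on a chosen layer.

```python
import math
from typing import List, Sequence

Vector = List[float]


def mean_vector(vectors: Sequence[Sequence[float]]) -> Vector:
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def preference_direction(h_vision: Sequence[Sequence[float]],
                         h_text: Sequence[Sequence[float]]) -> Vector:
    """Unit direction v from text-preferring toward vision-preferring hidden states.

    ASSUMPTION: difference-of-means probe; the paper's probe may differ.
    """
    mu_v, mu_t = mean_vector(h_vision), mean_vector(h_text)
    diff = [a - b for a, b in zip(mu_v, mu_t)]
    norm = math.sqrt(sum(d * d for d in diff))
    if norm == 0:
        raise ValueError("class means coincide; no preference direction found")
    return [d / norm for d in diff]


def probe_score(hidden: Sequence[float], direction: Sequence[float]) -> float:
    """Projection onto v: larger values suggest a stronger visual preference."""
    return sum(h * v for h, v in zip(hidden, direction))


def steer(hidden: Sequence[float], direction: Sequence[float], delta: float) -> Vector:
    """Return h + delta * v; delta > 0 pushes toward vision, delta < 0 toward text."""
    return [h + delta * v for h, v in zip(hidden, direction)]


# Usage on 2-D toy hidden states (real MLLM hidden states have thousands of dims).
v = preference_direction([[1.0, 0.0], [3.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]])
h = [0.5, 0.5]
print(v, steer(h, v, 2.0), steer(h, v, -2.0), probe_score(steer(h, v, 2.0), v))
```

Normalizing v keeps δ interpretable as a step size along the preference axis, so the same δ has a comparable effect across layers with different activation scales.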

The contributions are threefold: (1) a novel, publicly released benchmark (MC²) that isolates modality preference from knowledge or comprehension confounds; (2) an extensive empirical analysis linking model scale, training data composition, and architecture to observed preferences; and (3) a practical, training‑free steering technique based on prompt engineering and latent‑space manipulation, demonstrating that modality preference can be both measured and deliberately controlled. The work opens a new evaluation dimension for multimodal systems, namely how much they rely on each modality, and provides tools for developers to align model behavior with the demands of specific downstream applications.
