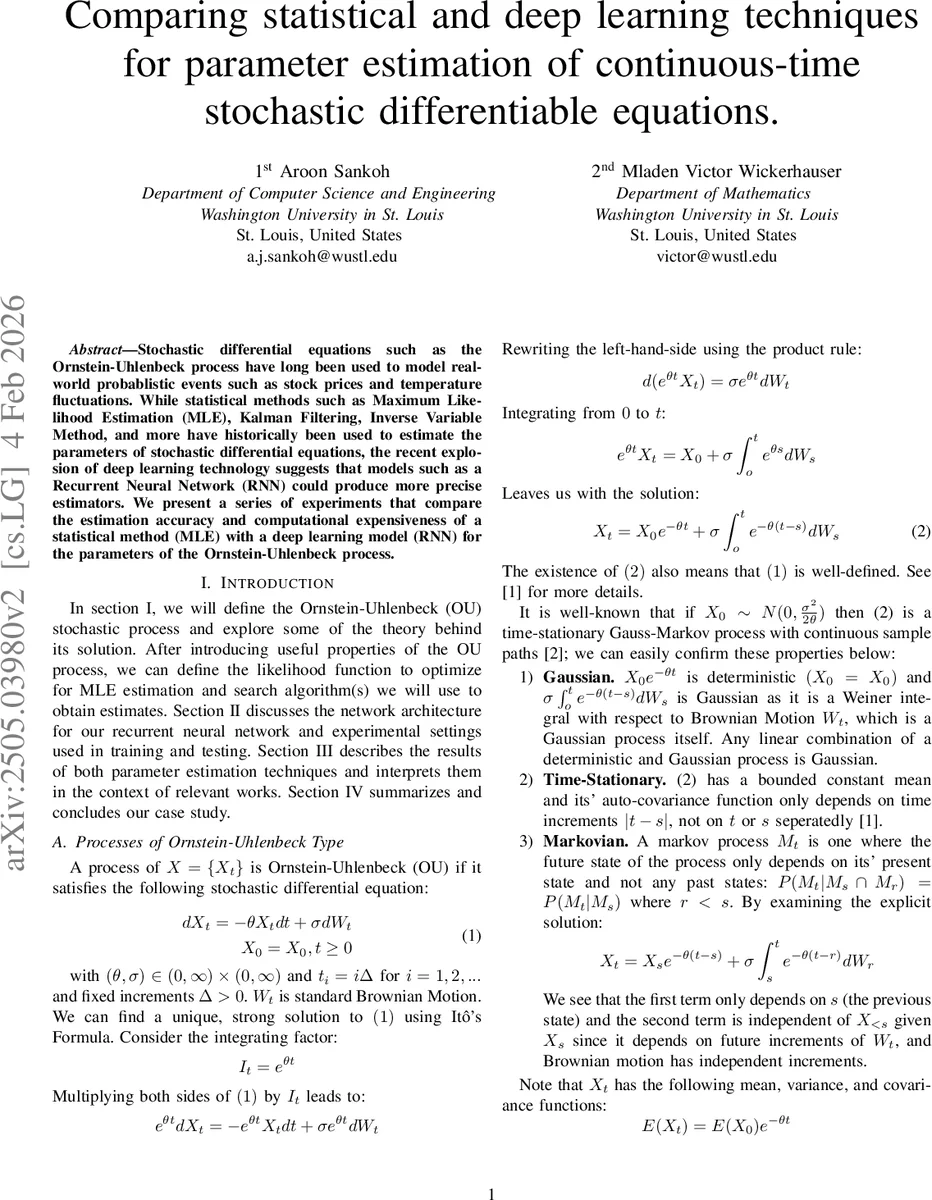

Comparing statistical and deep learning techniques for parameter estimation of continuous-time stochastic differentiable equations

Stochastic differential equations such as the Ornstein-Uhlenbeck process have long been used to model real-world probabilistic events such as stock prices and temperature fluctuations. While statistical methods such as Maximum Likelihood Estimation (MLE), Kalman Filtering, Inverse Variable Method, and more have historically been used to estimate the parameters of stochastic differential equations, the recent explosion of deep learning technology suggests that models such as a Recurrent Neural Network (RNN) could produce more precise estimators. We present a series of experiments that compare the estimation accuracy and computational cost of a statistical method (MLE) with a deep learning model (RNN) for the parameters of the Ornstein-Uhlenbeck process.

💡 Research Summary

This paper investigates the problem of estimating the drift (θ) and diffusion (σ²) parameters of the Ornstein‑Uhlenbeck (OU) stochastic differential equation by comparing a classical statistical approach, Maximum Likelihood Estimation (MLE), with a modern deep‑learning approach, a two‑layer Long Short‑Term Memory (LSTM) recurrent neural network (RNN). The authors first derive the OU process solution, its Gaussian stationary distribution, and the exact transition density, which leads to a closed‑form log‑likelihood function. Because the likelihood is non‑convex in θ, they adopt a hybrid optimization pipeline: (i) a Generalized Method of Moments (GMM) provides initial guesses for θ and σ²; (ii) a BFGS quasi‑Newton method refines the estimates locally; and (iii) when the likelihood surface remains problematic, a basin‑hopping global search restarts BFGS from multiple random starting points. This combination yields reliable MLE results but requires substantial CPU time (≈115 s per experiment) and GPU memory (≈531 MB) for the likelihood evaluations and global search.
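The likelihood-based pipeline described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: it assumes a zero-mean OU process dX = -θX dt + σ dW observed at a fixed step `dt`, replaces the GMM stage with a simple moment-based starting point, and omits the basin-hopping fallback. The function names (`ou_neg_log_likelihood`, `moment_initial_guess`, `fit_ou_mle`) are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize


def ou_neg_log_likelihood(log_params, x, dt):
    """Negative log-likelihood of a zero-mean OU path under its exact Gaussian transition.

    log_params = (log theta, log sigma^2); optimizing in log space keeps both positive.
    """
    theta, sigma2 = np.exp(log_params)
    decay = np.exp(-theta * dt)
    # Conditional variance of X_{t+dt} given X_t: sigma^2 * (1 - e^{-2 theta dt}) / (2 theta)
    var = sigma2 * (1.0 - decay**2) / (2.0 * theta)
    resid = x[1:] - decay * x[:-1]
    n = resid.size
    return 0.5 * (n * np.log(2.0 * np.pi * var) + np.sum(resid**2) / var)


def moment_initial_guess(x, dt):
    """Assumed stand-in for the GMM stage: lag-1 regression slope gives theta,
    and the stationary variance sigma^2 / (2 theta) gives sigma^2."""
    rho = np.sum(x[1:] * x[:-1]) / np.sum(x[:-1] ** 2)
    rho = np.clip(rho, 1e-6, 1.0 - 1e-6)
    theta0 = -np.log(rho) / dt
    sigma2_0 = 2.0 * theta0 * np.var(x)
    return theta0, sigma2_0


def fit_ou_mle(x, dt):
    """Refine the moment-based guess with BFGS and return (theta_hat, sigma2_hat)."""
    x = np.asarray(x, dtype=float)
    theta0, sigma2_0 = moment_initial_guess(x, dt)
    result = minimize(
        ou_neg_log_likelihood,
        x0=np.log([theta0, sigma2_0]),
        args=(x, dt),
        method="BFGS",
    )
    theta_hat, sigma2_hat = np.exp(result.x)
    return theta_hat, sigma2_hat
```

If the local search proves unreliable, the same objective could be wrapped in `scipy.optimize.basinhopping`, which is one way to realize the global stage the summary mentions.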

For the deep‑learning side, the authors design a lightweight architecture that can be trained on modest hardware (a 2 GB GPU, 6 GB RAM, and two CPU cores). The network receives a normalized trajectory of 500 time steps as input, processes it through two stacked LSTM layers, passes the final hidden state through an ELU activation, and finally maps it to the two‑dimensional parameter vector via a fully‑connected layer. They train the model with the Huber loss, which behaves like mean‑squared error for small residuals and like mean‑absolute error for large outliers, and they weight the loss components (wθ = 1, wσ² = 0.5) to compensate for the different scales of θ and σ². Training proceeds for 100 epochs with a batch size of 128 and a learning rate of 0.001 using the Adam optimizer. The total training time is about 2 hours 15 minutes, and inference on 500 test trajectories takes only 8 seconds, consuming roughly 63 MB of GPU memory.
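The weighted Huber objective can be made concrete with a short NumPy sketch. The weights (1 for θ, 0.5 for σ²) come from the summary; the threshold `delta = 1.0`, the per-parameter batch mean, and the weighted-sum combination are assumptions, since the summary does not say how the components are aggregated. The function names are hypothetical.

```python
import numpy as np


def huber(residual, delta=1.0):
    """Elementwise Huber loss: quadratic for |r| <= delta, linear beyond it."""
    abs_r = np.abs(residual)
    quadratic = 0.5 * residual**2
    linear = delta * (abs_r - 0.5 * delta)
    return np.where(abs_r <= delta, quadratic, linear)


def weighted_huber_loss(pred, target, weights=(1.0, 0.5), delta=1.0):
    """Batch loss for arrays of shape (batch, 2) ordered as (theta, sigma^2).

    Assumed aggregation: average the Huber loss over the batch for each
    parameter, then take the weighted sum of the two averages.
    """
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    per_param = huber(pred - target, delta).mean(axis=0)
    return float(np.dot(np.asarray(weights, dtype=float), per_param))
```

With this form, a large miss on σ² grows only linearly and is further halved by its weight, so a few badly estimated volatilities do not dominate the gradient signal for θ.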

Experiments are conducted on four representative parameter regimes: (1) strong mean reversion (θ = 2, σ² = 1), (2) weak mean reversion (θ = 0.2, σ² = 1), (3) high volatility (θ = 0.5, σ² = 4), and (4) low volatility (θ = 0.5, σ² = 0.25). For each regime, 5 000 simulated OU paths are generated, yielding a total dataset of 20 000 trajectories. The dataset is split 80 %/20 % for training and validation.
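A dataset of this shape could be generated roughly as follows. The four (θ, σ²) regimes, the 500-step trajectory length, and the 80/20 split are taken from the summary; the step size `dt = 0.01`, the zero long-run mean, the stationary initial condition, and the shuffle before splitting are assumptions made for illustration, and the function names are hypothetical.

```python
import numpy as np

# The four (theta, sigma^2) regimes listed in the summary.
REGIMES = {
    "strong_mean_reversion": (2.0, 1.0),
    "weak_mean_reversion": (0.2, 1.0),
    "high_volatility": (0.5, 4.0),
    "low_volatility": (0.5, 0.25),
}


def simulate_ou_paths(theta, sigma2, n_paths, n_steps=500, dt=0.01, rng=None):
    """Simulate zero-mean OU paths with the exact Gaussian transition (no Euler error)."""
    rng = np.random.default_rng() if rng is None else rng
    decay = np.exp(-theta * dt)
    step_sd = np.sqrt(sigma2 * (1.0 - decay**2) / (2.0 * theta))
    paths = np.empty((n_paths, n_steps))
    # Assumption: start each path from the stationary law N(0, sigma^2 / (2 theta)).
    paths[:, 0] = rng.normal(0.0, np.sqrt(sigma2 / (2.0 * theta)), size=n_paths)
    for t in range(1, n_steps):
        paths[:, t] = decay * paths[:, t - 1] + step_sd * rng.standard_normal(n_paths)
    return paths


def build_dataset(n_paths_per_regime, seed=0):
    """Return ((x_train, y_train), (x_val, y_val)) with an 80/20 split after shuffling."""
    rng = np.random.default_rng(seed)
    xs, ys = [], []
    for theta, sigma2 in REGIMES.values():
        xs.append(simulate_ou_paths(theta, sigma2, n_paths_per_regime, rng=rng))
        ys.append(np.tile([theta, sigma2], (n_paths_per_regime, 1)))
    x, y = np.concatenate(xs), np.concatenate(ys)
    order = rng.permutation(len(x))
    x, y = x[order], y[order]
    split = (4 * len(x)) // 5
    return (x[:split], y[:split]), (x[split:], y[split:])
```

Calling `build_dataset(5000)` would reproduce the stated size of 20,000 trajectories, 16,000 for training and 4,000 for validation.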

Results show a nuanced trade‑off. In estimating θ, the RNN consistently achieves lower mean absolute error and smaller standard deviation than MLE, indicating higher precision, especially in the strong and weak mean‑reversion regimes. However, for σ² the RNN’s performance deteriorates markedly in the high‑ and low‑volatility regimes: its estimates are biased and exhibit large variance, leading to root‑mean‑square errors several times larger than those of MLE. MLE remains robust across all volatility levels, delivering accurate and precise σ² estimates even though it is computationally heavier.

From a computational perspective, MLE’s bottleneck is the evaluation of the log‑likelihood and the global basin‑hopping search, which dominate the runtime and memory usage. In contrast, once the RNN is trained, inference is extremely fast and lightweight, making it attractive for real‑time or large‑scale applications where many parameter estimates are required.

The authors deliberately limit the hardware to a low‑end GPU to demonstrate reproducibility for researchers without access to high‑performance clusters. They acknowledge that the RNN’s generalization ability was not tested on unseen parameter combinations, and that the training set covers only four discrete regimes. They suggest that expanding the training data to include a broader, denser grid of θ and σ² values, as well as deeper or wider network architectures, could improve σ² estimation.

In conclusion, the study confirms that classical MLE remains the gold standard for diffusion‑parameter estimation when the model is correctly specified, while deep‑learning‑based RNNs excel at estimating drift parameters with high precision and minimal inference cost. The two approaches are complementary: MLE provides reliable volatility estimates, whereas RNNs offer rapid, accurate drift estimates and scalability. Future work may explore hybrid schemes that combine the statistical rigor of MLE with the representation power of neural networks to achieve uniformly superior performance across all OU parameters.
