LLM-ABBA: Understanding time series via symbolic approximation

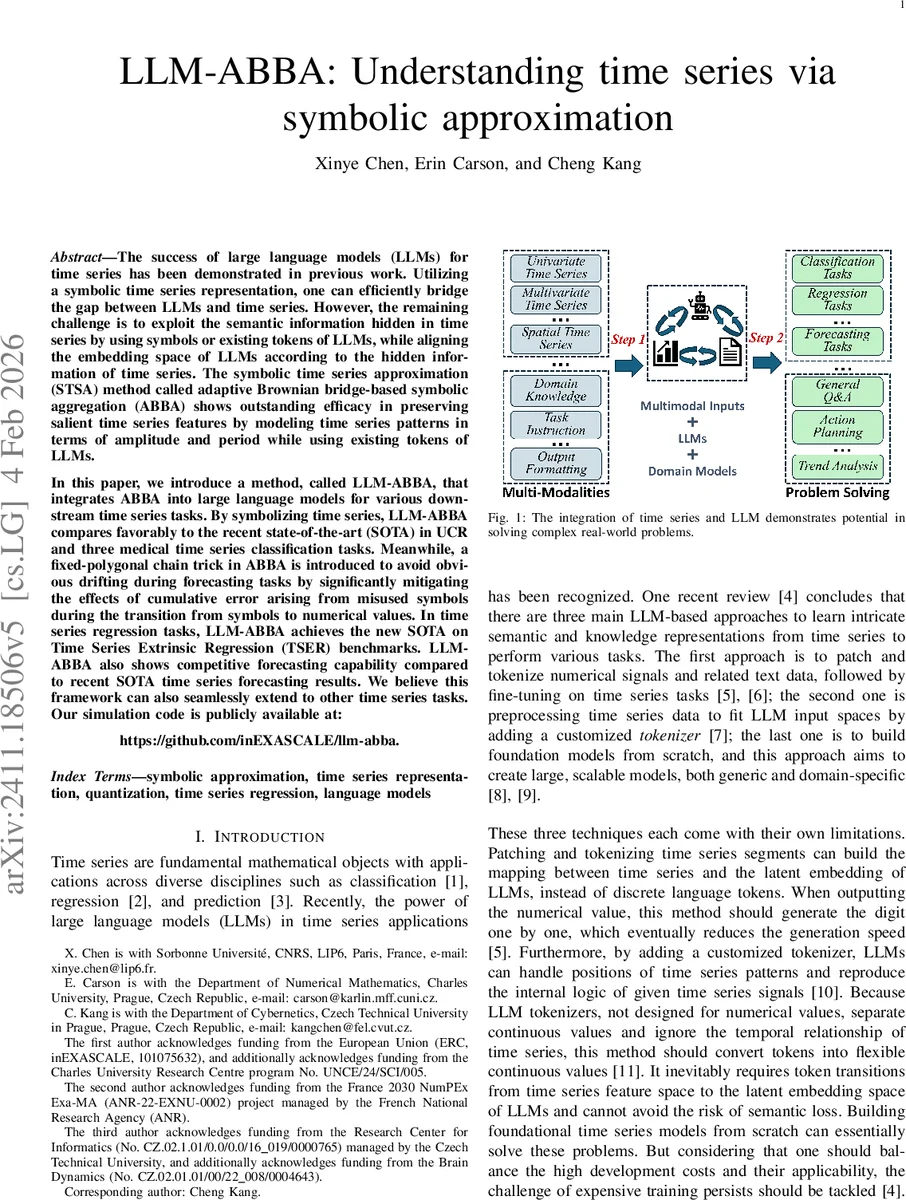

The success of large language models (LLMs) for time series has been demonstrated in previous work. Utilizing a symbolic time series representation, one can efficiently bridge the gap between LLMs and time series. However, the remaining challenge is to exploit the semantic information hidden in time series by using symbols or existing tokens of LLMs, while aligning the embedding space of LLMs according to the hidden information of time series. The symbolic time series approximation (STSA) method called adaptive Brownian bridge-based symbolic aggregation (ABBA) shows outstanding efficacy in preserving salient time series features by modeling time series patterns in terms of amplitude and period while using existing tokens of LLMs. In this paper, we introduce a method, called LLM-ABBA, that integrates ABBA into large language models for various downstream time series tasks. By symbolizing time series, LLM-ABBA compares favorably to the recent state-of-the-art (SOTA) in UCR and three medical time series classification tasks. Meanwhile, a fixed-polygonal chain trick in ABBA is introduced to avoid obvious drifting during forecasting tasks by significantly mitigating the effects of cumulative error arising from misused symbols during the transition from symbols to numerical values. In time series regression tasks, LLM-ABBA achieves the new SOTA on Time Series Extrinsic Regression (TSER) benchmarks. LLM-ABBA also shows competitive forecasting capability compared to recent SOTA time series forecasting results. We believe this framework can also seamlessly extend to other time series tasks. Our simulation code is publicly available at: https://github.com/inEXASCALE/llm-abba

💡 Research Summary

The paper introduces LLM‑ABBA, a framework that integrates the Adaptive Brownian Bridge‑based symbolic aggregation (ABBA) method with large language models (LLMs) to tackle a wide range of time‑series tasks. The authors first describe the ABBA pipeline, which consists of (1) compression via an adaptive piecewise‑linear continuous approximation (APCA). Given a tolerance parameter, the algorithm partitions the original series into N segments, each represented by a length and an increment, and guarantees that the reconstruction error is bounded by a Brownian‑bridge model. (2) Digitization, where each segment is clustered and assigned a unique symbol from a predefined alphabet. Because each symbol corresponds to a real‑valued cluster centre, it can be directly mapped to an existing LLM token, eliminating the need for a custom tokenizer.

LLM‑ABBA feeds the resulting symbol sequence to an LLM. For classification, the symbol string is used as input to predict class labels. For regression and forecasting, the task is reformulated as a next‑token prediction problem, allowing the LLM to generate future symbols that are later decoded back into numeric values. A key innovation is the “fixed‑polygonal chain” trick: during reconstruction, the start point of each segment is fixed, preventing error propagation across segments and dramatically reducing drift in long‑horizon forecasts.

Training employs QLoRA (quantized LoRA) adapters, which keep the base LLM frozen while only updating low‑rank adapter weights. This approach drastically reduces memory and compute requirements yet preserves the LLM’s extensive linguistic knowledge, enabling it to learn the chain‑of‑patterns (COP) inherent in time‑series data.

Empirical evaluation spans the UCR archive (128 datasets), three medical time‑series classification benchmarks, and the Time Series Extrinsic Regression (TSER) suite. LLM‑ABBA outperforms prior state‑of‑the‑art methods on classification accuracy (1.5–3 percentage points gain), sets new best RMSE scores on TSER, and achieves competitive MSE/MAE on multistep forecasting compared with recent Transformer‑based forecasters. All code and data are released at https://github.com/inEXASCALE/llm-abba, ensuring reproducibility.

The authors acknowledge limitations: ABBA’s hyper‑parameters (tolerance, clustering settings) heavily influence performance; long series may generate many symbols, hitting LLM token‑length limits; and the symbolization step inevitably discards fine‑grained numeric detail. Future work includes automated hyper‑parameter tuning, pre‑training language models on symbolic sequences for better compression, extending the approach to multimodal settings, and developing online updating mechanisms for streaming data. Overall, LLM‑ABBA demonstrates that symbolic approximation can serve as an effective bridge between raw numerical time series and the rich semantic capabilities of large language models, opening a new paradigm for unified, efficient time‑series analysis.

Comments & Academic Discussion

Loading comments...

Leave a Comment