Revisiting 360 Depth Estimation with PanoGabor: A New Fusion Perspective

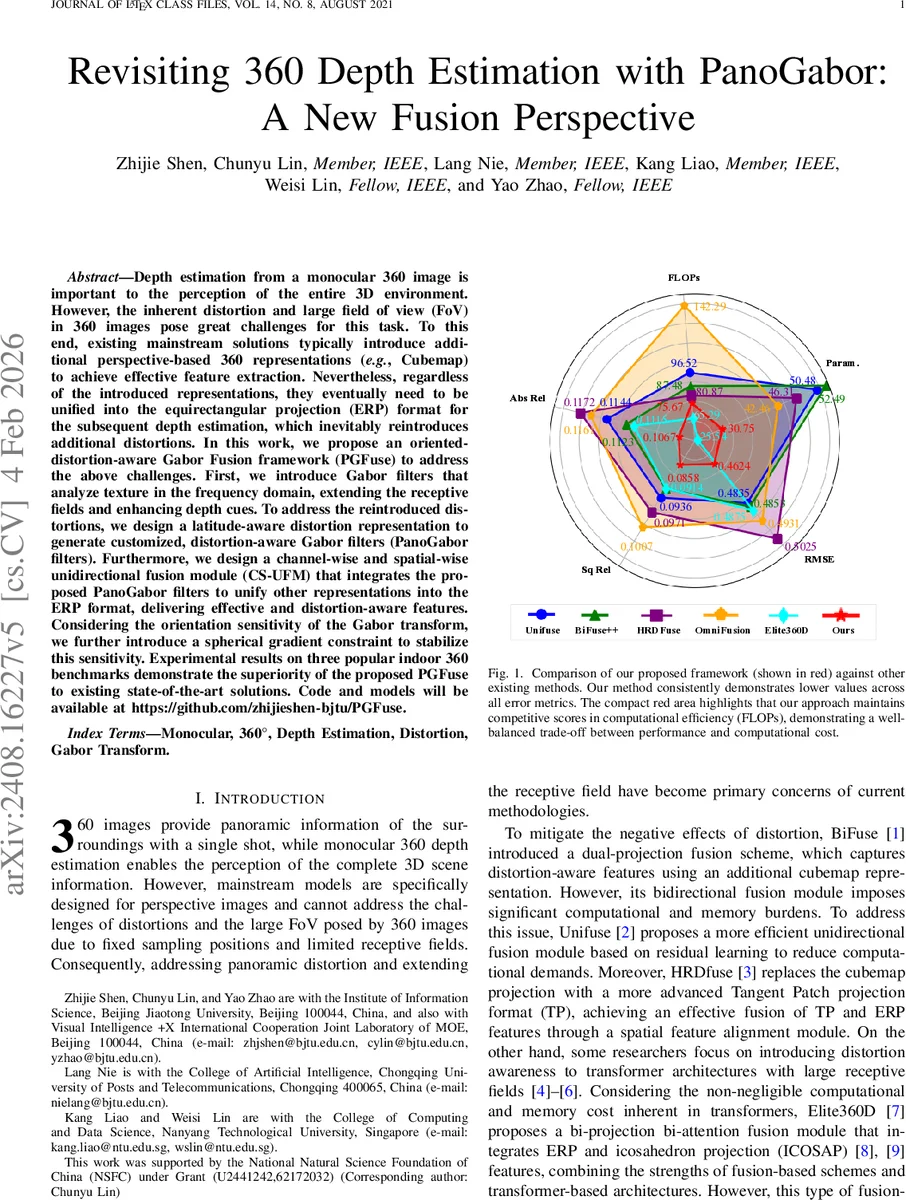

Depth estimation from a monocular 360 image is important to the perception of the entire 3D environment. However, the inherent distortion and large field of view (FoV) in 360 images pose great challenges for this task. To this end, existing mainstream solutions typically introduce additional perspective-based 360 representations (e.g., Cubemap) to achieve effective feature extraction. Nevertheless, regardless of the introduced representations, they eventually need to be unified into the equirectangular projection (ERP) format for the subsequent depth estimation, which inevitably reintroduces the troublesome distortions. In this work, we propose an oriented distortion-aware Gabor Fusion framework (PGFuse) to address the above challenges. First, we introduce Gabor filters that analyze texture in the frequency domain, thereby extending the receptive fields and enhancing depth cues. To address the reintroduced distortions, we design a linear latitude-aware distortion representation method to generate customized, distortion-aware Gabor filters (PanoGabor filters). Furthermore, we design a channel-wise and spatial-wise unidirectional fusion module (CS-UFM) that integrates the proposed PanoGabor filters to unify other representations into the ERP format, delivering effective and distortion-free features. Considering the orientation sensitivity of the Gabor transform, we introduce a spherical gradient constraint to stabilize this sensitivity. Experimental results on three popular indoor 360 benchmarks demonstrate the superiority of the proposed PGFuse over existing state-of-the-art solutions. Code and models will be available at https://github.com/zhijieshen-bjtu/PGFuse

💡 Research Summary

The paper introduces PGFuse, a novel framework for monocular 360° depth estimation that explicitly tackles the distortion reintroduction problem inherent in existing fusion-based methods. Traditional approaches improve feature extraction by projecting the equirectangular panorama into auxiliary representations such as cubemaps or tangent patches, but they must eventually merge all features back into the ERP format, which reintroduces severe latitude-dependent distortion, especially near the poles. PGFuse addresses this in two complementary stages.

First, it employs a dual‑cube strategy: two cubemap views (the original orientation and a 45° rotated version) are processed by a shared‑weight encoder (e.g., a ResNet backbone). This reduces geometric distortion locally, mitigates seam artifacts, and preserves information that would otherwise be lost during ERP resampling. The extracted cubemap features are spatially aligned and re‑projected into the ERP domain.
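To make the dual-cube geometry concrete, the sketch below computes where the side-face centres of a cubemap land in ERP longitude, before and after a 45° rotation. It assumes the rotation is a yaw about the vertical axis and an x-right, y-up, z-forward coordinate convention; the summary specifies neither, and all function names here are illustrative rather than taken from the PGFuse code.

```python
import math

# Unit vectors to the centres of the four side faces of a cubemap
# (assumed convention: x = right, y = up, z = forward).
SIDE_FACE_CENTRES = {
    "front": (0.0, 0.0, 1.0),
    "right": (1.0, 0.0, 0.0),
    "back": (0.0, 0.0, -1.0),
    "left": (-1.0, 0.0, 0.0),
}


def rotate_yaw(v, angle):
    """Rotate a 3D vector about the vertical (y) axis by `angle` radians."""
    x, y, z = v
    c, s = math.cos(angle), math.sin(angle)
    return (c * x + s * z, y, -s * x + c * z)


def direction_to_lonlat(v):
    """Map a 3D direction to ERP (longitude, latitude) in radians."""
    x, y, z = v
    norm = math.sqrt(x * x + y * y + z * z)
    lon = math.atan2(x, z)      # 0 at the forward direction
    lat = math.asin(y / norm)   # +pi/2 at the top pole
    return lon, lat


def face_longitudes_deg(yaw_deg):
    """ERP longitude (degrees) of each side-face centre after a yaw."""
    yaw = math.radians(yaw_deg)
    return {
        name: math.degrees(direction_to_lonlat(rotate_yaw(v, yaw))[0])
        for name, v in SIDE_FACE_CENTRES.items()
    }


if __name__ == "__main__":
    print(face_longitudes_deg(0.0))
    print(face_longitudes_deg(45.0))
```

Under these assumptions, the rotated face centres sit at ±45° and ±135° longitude, which are exactly the vertical seams of the unrotated cube, so each view covers the other's seam regions with its least-distorted pixels.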

The second stage is the core contribution: a distortion-aware Gabor fusion mechanism. Gabor filters, inspired by the human visual system, capture spatial frequency and orientation information, thereby extending the receptive field and strengthening depth cues. However, standard Gabor kernels assume uniform sampling and are highly sensitive to rotation, which makes them unsuitable for direct use on ERP images. To overcome this, the authors derive a latitude-aware distortion factor K(ϕ) = 1/cos(ϕ) − 1, which quantifies how much the ERP plane stretches the sphere at latitude ϕ (zero at the equator, growing toward the poles). Using K(ϕ), they adapt the Gabor parameters (frequency, scale, orientation) for each latitude, creating PanoGabor filters that are intrinsically aware of the ERP distortion pattern.
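As a rough illustration of the idea, the sketch below builds one real-valued Gabor kernel per ERP row and widens it horizontally according to the distortion factor, read here as K(ϕ) = (1/cos ϕ) − 1. How PGFuse actually maps K(ϕ) onto the Gabor parameters is not given in this summary, so the `1 + K` horizontal stretch, the `max_stretch` clamp, and every function name and default value are assumptions made for illustration only.

```python
import math


def distortion_factor(phi):
    """K(phi) = 1/cos(phi) - 1: zero at the equator, unbounded at the poles."""
    return 1.0 / math.cos(phi) - 1.0


def row_latitudes(height):
    """Latitude of each ERP row centre, from near +pi/2 (top) to near -pi/2."""
    return [math.pi / 2 - (r + 0.5) * math.pi / height for r in range(height)]


def row_stretch(phi, max_stretch=4.0):
    """Hypothetical horizontal stretch 1 + K(phi), clamped near the poles."""
    return min(1.0 + distortion_factor(phi), max_stretch)


def gabor_kernel(size, sigma, wavelength, theta, stretch=1.0, gamma=1.0, psi=0.0):
    """Real Gabor kernel (Gaussian envelope times cosine carrier).

    `stretch` > 1 divides the horizontal coordinate, so the same pattern
    spans more pixels horizontally (assumed adaptation, not the paper's).
    """
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            xs = x / stretch
            xr = xs * math.cos(theta) + y * math.sin(theta)
            yr = -xs * math.sin(theta) + y * math.cos(theta)
            envelope = math.exp(-(xr * xr + (gamma * yr) ** 2) / (2 * sigma * sigma))
            carrier = math.cos(2 * math.pi * xr / wavelength + psi)
            row.append(envelope * carrier)
        kernel.append(row)
    return kernel


def pano_gabor_bank(height, size=7, sigma=2.0, wavelength=4.0, theta=0.0):
    """One latitude-adapted kernel per ERP row."""
    return [
        gabor_kernel(size, sigma, wavelength, theta, stretch=row_stretch(phi))
        for phi in row_latitudes(height)
    ]
```

Rows near the equator get an almost unmodified kernel, while rows near the poles get a horizontally widened one, mirroring the way ERP sampling spreads a small spherical region across many pixels at high latitude.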

These PanoGabor filters are embedded in the Channel‑wise and Spatial‑wise Unidirectional Fusion Module (CS‑UFM). CS‑UFM first fuses the PanoGabor‑enhanced features with the ERP and cubemap features along the channel dimension, then refines the combined representation spatially with 2‑D convolutions. This unidirectional design avoids the heavy computational and memory overhead of bidirectional fusion schemes (e.g., BiFuse) while preserving all relevant information.

Because Gabor responses are orientation‑sensitive, the authors introduce a spherical gradient constraint. The predicted ERP depth map is projected onto tangent patches, and Sobel operators compute gradients on these patches; the resulting gradient field regularizes the orientation of the Gabor responses, stabilizing training and improving robustness.
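The gradient step can be sketched as follows, assuming the tangent-patch projection has already been done and each patch arrives as a 2-D list of depth values. The summary does not give the exact form of the constraint, so comparing predicted and ground-truth Sobel gradients with a mean L1 penalty is an assumption, as are the function names.

```python
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def sobel_gradients(patch):
    """Horizontal and vertical Sobel responses on the interior of a patch."""
    h, w = len(patch), len(patch[0])
    gx, gy = [], []
    for y in range(1, h - 1):
        row_x, row_y = [], []
        for x in range(1, w - 1):
            sx = sy = 0.0
            for j in range(3):
                for i in range(3):
                    value = patch[y + j - 1][x + i - 1]
                    sx += SOBEL_X[j][i] * value
                    sy += SOBEL_Y[j][i] * value
            row_x.append(sx)
            row_y.append(sy)
        gx.append(row_x)
        gy.append(row_y)
    return gx, gy


def gradient_loss(pred_patch, gt_patch):
    """Mean per-pixel L1 gap between predicted and reference gradients
    (assumed loss form; the paper's formulation may differ)."""
    pgx, pgy = sobel_gradients(pred_patch)
    ggx, ggy = sobel_gradients(gt_patch)
    total, count = 0.0, 0
    for y in range(len(pgx)):
        for x in range(len(pgx[0])):
            total += abs(pgx[y][x] - ggx[y][x]) + abs(pgy[y][x] - ggy[y][x])
            count += 1
    return total / count
```

Computing gradients on tangent patches rather than on the ERP image keeps the Sobel stencil on a locally near-perspective grid, so a given kernel orientation means roughly the same thing at every latitude.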

Extensive experiments on three widely used indoor 360° datasets (Stanford2D-3D, Matterport3D, and Structured3D) show that PGFuse consistently outperforms state-of-the-art methods such as BiFuse, UniFuse, HRDFuse, and Elite360D across all standard metrics: RMSE, absolute relative error, squared relative error, and δ < 1.25 accuracy. Notably, error reductions are most pronounced at high latitudes, confirming the effectiveness of the latitude-aware Gabor design. Moreover, PGFuse achieves these gains with a modest FLOPs budget and parameter count, indicating a favorable performance-efficiency trade-off suitable for real-time applications.
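For reference, the four metrics named above are usually computed as in the sketch below, which follows the definitions common in the monocular depth literature. The validity mask (positive ground truth and prediction) and the function name are assumptions; the paper's evaluation code may clip or mask depths differently.

```python
import math


def depth_metrics(pred, gt):
    """Standard depth-estimation metrics over flat sequences of depths.

    Pixels without a positive ground-truth and predicted depth are skipped.
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0 and p > 0]
    n = len(pairs)
    if n == 0:
        raise ValueError("no valid pixels to evaluate")
    abs_rel = sum(abs(p - g) / g for p, g in pairs) / n
    sq_rel = sum((p - g) ** 2 / g for p, g in pairs) / n
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)
    # delta < 1.25: share of pixels whose ratio to ground truth is within 1.25x
    delta1 = sum(1 for p, g in pairs if max(p / g, g / p) < 1.25) / n
    return {"abs_rel": abs_rel, "sq_rel": sq_rel, "rmse": rmse, "delta1": delta1}


if __name__ == "__main__":
    print(depth_metrics([1.0, 2.0, 4.0], [1.0, 2.0, 2.0]))
```

Lower is better for the three error metrics, while higher is better for the δ < 1.25 accuracy.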

The paper also discusses limitations: evaluations are confined to static indoor scenes, leaving open the question of generalization to dynamic or outdoor environments. Additionally, the PanoGabor parameters are learned end‑to‑end on specific datasets, which may affect cross‑domain transferability; future work could explore domain‑agnostic parameter initialization or meta‑learning strategies.

In summary, PGFuse presents a principled solution to the long‑standing issue of distortion re‑introduction in 360° depth estimation by integrating a distortion‑aware Gabor filter bank with an efficient unidirectional fusion module. This approach not only enhances depth accuracy—especially in heavily distorted polar regions—but also maintains computational efficiency, making it a promising candidate for deployment in robotics, AR/VR, and other panoramic vision systems.
